Eigen: The Swiss Army Knife of CFD libraries

A new series, a new excuse for me to spend even more hours diving deep into coding and talking about a guilty pleasure of mine. In this new series, I want to highlight and demonstrate C++ libraries that have my life a lot easier when writing CFD solvers in the past, and that will help you write better CFD solvers as well.

The examples I'll provide are taken from real-life use cases where I have used these libraries myself, and the first library we look at is the one library I cannot live without anymore: I am talking about Eigen.

Eigen is, at its core, just a linear algebra library. But, as we will explore together in this article, it can be used for essentially anything that vaguely operates on vectors and matrices. We'll start by looking at basic examples for how to work with vectors and matrices, but then very quickly dive into examples that show how I would use Eigen in a real CFD solver.

We look at examples like discretising PDEs and computing the laplacian within a Poisson solver, computing gradients on unstructured grids using the least-square method (and related methods), computing and storing geometric cell/face information for unstructured solvers, solving linear system fo equations, i.e. [katex]\mathbf{Ax}=\mathbf{b}[/katex], easily computing the CFL number, and, how to compute derivatives exactly, down to machine precision.

Eigen can do all of that, and more, and in this article, I hope to convince you to learn Eigen and use it for your own projects as well. The best part: Eigen is so simple to use that you don't really have to learn it. You just use it. It has an extremely easy API interface, and even if you do get stuck, it probably has one of the best library documentations I have seen in a long time.

So, are you ready to become a better, more proficient CFD programmer? Then this article is for you!

Download Resources

All developed code and resources in this article are available for download. If you are encountering issues running any of the scripts, please refer to the instructions for running scripts downloaded from this website.

- Download: Eigen examples

In this series

[custom_category_posts_list category_slug="essential-libraries-for-cfd-solver-development"]In this article

- Introduction

- Installing Eigen and the example code

- Code examples for Eigen

- Basic Eigen usage examples

- CFD-specific examples

- Computing the CFL number

- Computing geometric information: The Triangle-Point example

- Discretising partial differential equations with Eigen

- Interfacing Eigen with other data types

- Computing gradients on unstructured grids using the least-squares and related approaches

- Working with sparse matrices (and where to best enjoy royal milk tea)

- Using Eigen to solve linear systems of equations

- Computing the flux Jacobian using automatic differentiation

- Summary

Introduction

Whenever we start writing a significant piece of code (and I consider CFD solvers to fall in this category), we rarely want to reinvent the wheel ourselves, meaning, unless we have to, we want to make use of other people's code in the form of libraries, to reduce the amount of code we have to write. This allows us to concentrate on the core logic of our code and then delegate, where we can. There are many reasons why this is beneficial, but these are probably the most important ones:

- Library code, especially high-quality libraries that have rigorous software testing in place, providing you with some degree of confidence that the library is doing what you expect it to. Calling a function from a third-party library is easier than implementing, testing, and maintaining that function yourself.

- Even if bugs exist in that library, you can either report a bug yourself or, for a large enough user community, others will likely come across that bug and request a bug fix themselves. If you regularly update your dependencies (all the libraries you are using), chances are you'll never have to worry about external bugs.

- Libraries are often written by domain experts. You tap into their knowledge, and even if you don't use all the library has to offer, the parts that you do end up using are likely a better implementation to whatever you could ever come up with.

- Library code is often optimised, and an implementation you can come up with may not be as fast and efficient as the library code.

- If you plan to collaborate with other people on your code, using common libraries will help others to find their way around in your codebase. Even if they don't know the library, they can pick it up quickly by referring to the library's documentation, which, let's be honest, will be better than whatever development guide you put together.

- The best part is: You get all of this for free. Forever. You can download the libraries without having to pay for them, and you enjoy all of the benefits stated above. Where else do you get that? Imagine the pharmaceutical industry uploading their recipes for creating drugs to cure cancer, for free, to the internet. We don't often acknowledge this fact, but free and open-source (FOSS) software is a remarkable concept!

Despite these, in my view, overwhelmingly positive advantages, many people, especially when they are starting out with code development, opt for doing everything by themselves, setting them up for failure. I'm not judging; this pretty much describes my personal learning path (and that of those around me when I learned programming and CFD).

I have come to realise that learning a new library is easy, but getting a library integrated into your project is probably the hardest part. And worst of all, some of the most well-known, most-used libraries in CFD simply stick up their middle finger, essentially telling you: "if you can't integrate me into your project, you don't deserve to make use of my capabilities".We'll get around to this particular problem child eventually in this series.

If your first steps with third-party libraries are with one of those libraries that are not very developer-friendly, chances are you give up and just try to write all the code yourself. But then there are these libraries that really stick out from the sea of libraries. They are like a good friend, holding your hand and telling you, "everything will be OK". These are the friends you keep around, and the same is true for libraries, some of them, well, I can't live without.

My last series 10 key concepts everyone must understand in CFD started as a list of topics I wanted to discuss and though are essential to master CFD. My list contained, originally, 9 items, and I thought, "I'll just come up with the last remaining topics as I write this series". Poor planning on my behalf ...

I eventually did come up with the last remaining topic (which was high-performance computing). I had a few topics to pick from, including the role of software engineering in CFD, though that, perhaps, could be explored in a separate series and in a lot more practical terms.

Be that as it may, I have learned from my mistakes, and so for this, brand spanking new series, I have done some soul-searching and thought about which libraries I want to cover in this series. Looking at that list, there is one library in particular I just can't live without; it is my BFF in the world of liraries.

I am talking about Eigen, which is also referred to as Eigen3, even though it is now on version 5 (curiously, there is no version 4. What happened here?).

Eigen is a high-performance, header-only C++ template library for linear algebra applications, providing matrix and vector types, as well as operations you can perform on matrices and vectors. This includes decompositions, computing eigenvalues and eigenvectors (duh, its sort of in the name), as well as solving linear system of equations. On top of that, it does come with some additional features you wouldn't necessarily expect in a linear algebra library, and we will get around to those features as well in this article.

The best part about Eigen is that you don't really have to learn it. Sure, the documentation is there if you need it (although, it seems whenever I want to look something up, gitlab (where the documentation is hosted), is faking an aneurysm and the documentation can't be found), but the library interface is so well designed that with intellisense set up in your code editor, you can start writing code without having to consult the documentation. It is that good!

And this also describes how I got to learn Eigen. I was once working on a solver where Eigen was used. I didn't know the library back then, but I had to start using it. Just by looking at the code that was using Eigen, I immediately understood what the library was doing, and I started using it.

When I started working on my own projects, I just continued to use Eigen. It was such a natural thing to use, and so, when I say, Eigen is the library I can't live without, I mean it (no clickbait). I use it all the time.

The fact that it is header-only also means that you can simply download the library and place it in your project, no additional steps required. You can simply start using it, and your compiler will then compile those parts of Eigen that you are using; no compilation of the library is required ahead of time.

Since it is header-only, it also means that liberal use of templates is allowed, and let me tell you, Eigen is template-galore! Templates are brilliant once you understand them (they have a step learning curve), and they offer optimisation potential at compile-time that is quite impressive.

As the end-user, though, we don't really have to worry about templates, but we will touch upon template vs. non-template code later, as Eigen does provide support for both, and each approach has its own advantages and disadvantages. But I leave that for later when we actually look at code.

So, hopefully, I have successfully whet your appetite for Eigen. It is one of those libraries you will come to love and use in all of your projects. So in the remainder of this article, I want to show you some examples that demonstrate how to use Eigen in the context of CFD solver development.

We'll be using it to compute gradients on unstructured grids, compute geometric information for triangular mesh elements in 3D space, solve a fully implicit partial differential equation, and compute the flux Jacobian exactly without round-off or discretisation errors. And these are just some of the things you can do with Eigen, but hopefully this will help you get an idea of what is possible.

Before we jump into our code examples, let us look at how we can install the library first.

Installing Eigen and the example code

There are two ways of how you can test Eigen, as well as the code samples provided in this article. The first is the easiest, where you can do everything in your browser. The second option requires you to install all dependencies onto your PC, though with the right tools, this can be automated, and it is pretty painless.

You can download all code examples that are used in this article from the link provided at the beginning of the article.

Using Eigen online

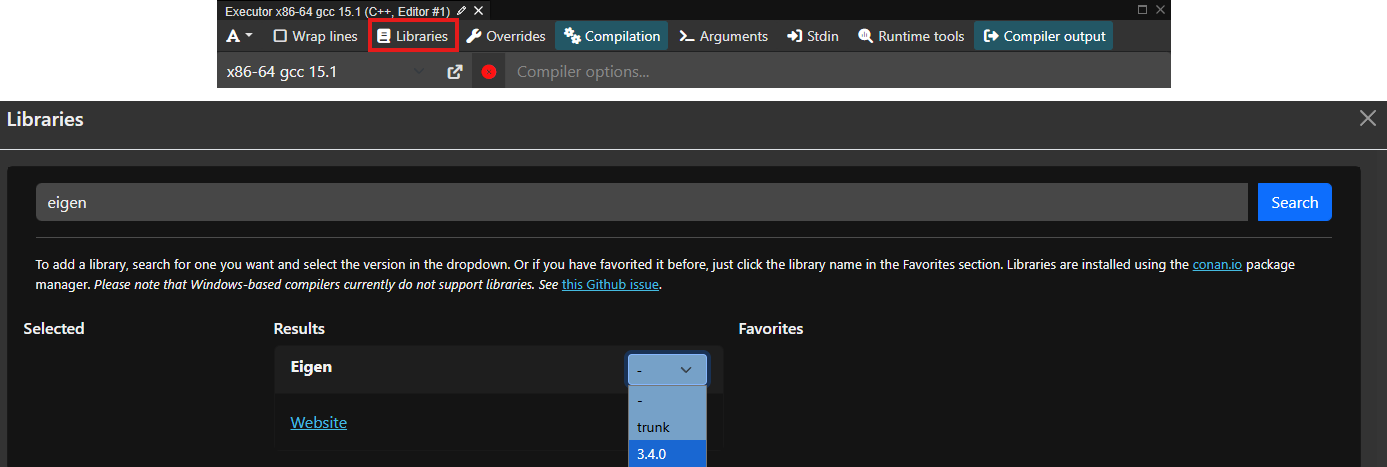

If you just want to play around with Eigen, the easiest way is to head over to compiler explorer, activating Eigen as a project dependency, and start coding. To activate Eigen, click on the Libraries button as shown below (red rectangle), and type eigen in the window that appears (shown below). Select the version you want to use; 3.4.0 is the latest as of the time of writing, which has been stable for some time and all examples will work with this version.

To test that this was working, try running the following code:

#include <Eigen/Dense>

#include <iostream>

int main() {

Eigen::MatrixXd A(3, 2);

A << 1, 2,

3, 4,

5, 6;

std::cout << "Matrix A:\n" << A << "\n\n";

return 0;

}

This should print the following output:

Matrix A:

1 2

3 4

5 6Note, by default, compiler explorer will not give you this output, but rather the compiled assembly code. To get the output, you will need to click on the Add new... button and select Execution Only. This is also the place where you are able to select Eigen as a library dependency, as shown above. You should now see this output. If you do, you can go ahead and copy and paste all other code examples now in here and start playing around with Eigen.

Installing Eigen locally on your PC

I mentioned that Eigen is a header-only library, and so, theoretically, we can simply download the library and throw it into our project folder and start using it. I would not recommend consuming Eigen this way, though.

Instead, you should always be using a package manager whenever possible. They don't just download and manage the dependency for you (compiling them as well if necessary), they also handle all of the nitty-gritty details of communicating to your build system where to find the library, so you can easily integrate them into your build files.

I am personally a huge fan of conan as a package manager. It just works! I have gone through countless ways to manage dependencies (libraries), including:

- Manual compilation through build systems (MSBuild, Autotools, CMake, Make, custom bash scrips)

- FetchContent in CMake

- Docker

- EasyBuild

- Lmod

- VCPKG (no, I am not linking back to this dumpster fire of a package manager)

- UNIX package manager like apt/pacman/yay/brew/etc.

Believe me, conan understands libraries, and it understands C++ specific quirks (such as ABI compatibility) better than any other package manager solution does. It is also so simple to use that you really don't have an excuse not to use it. You only need Python and a C++ compiler.

I have a dedicated article that will show you how to set up a coding environment to develop CFD codes. If you now have Python and a C++ compiler installed, you may continue with the next steps.

Step 1: Installing prerequisites

Once you have downloaded the example code at the beginning of this article, extract the archive and navigate into it using your favourite shell (yes, I still advocate using Microsoft PowerShell, even on macOS, thus far no hate mails ...).

Once inside the project folder, install the project dependencies with Python's package manager pip as:

pip install -r .\requirements.txtDepending on your operating system, this step may fail. You may see an error message like the following:

error: externally-managed-environment

× This environment is externally managedIf this is the case, you cannot install dependencies globally, and you will need to use a virtual environment. Virtual environments are isolated environments in which you can do whatever you want without changing the settings and behaviour of your global environment. If these are new to you, this article provides a good explanation of what they do and how to work with them.

Creating a virtual environment is straightforward in Python. Follow these steps, depending on your operating system:

- UNIX:

python3 -m venv .venv

source .venv/bin/activate- Windows:

py -m venv .venv

.\.venv\Scripts\Activate.ps1After you have activated the virtual environment, you should see the name of the virtual environment at the beginning of your shell, e.g. (.venv). From now on, any packages that you install will go into this virtual environment (which really just is a folder called .venv).

So, now you can install all requirements again using the line we saw before, i.e.

pip install -r .\requirements.txtIf you look into the requirements.txt file, you'll see there are two dependencies listed: conan and cmake. We will be using Conan to download dependencies and manage these for us, i.e. Eigen in this case. We'll use CMake to link our code against Eigen and produce executables from our source files.

CMake is such an important part of the build process of software that I have dedicated an entire series to it. If you are completely new to CMake and just want to find your bearings, I have written a high-level introduction that you can consult before proceeding. This should give you a good grasp of CMake.

Even if you have no intention of using CMake at any point in the future in your own personal projects, it is still useful to know the basics, as pretty much every C++ library under the sun supports it, and so consuming other projects will likely require some basic CMake knowledge.

OK, so now that you don't have an excuse not use CMake anymore, I can bug the fine people over at Kitware for my affiliate marketing commissions, let's start by setting up our Conan profile.

If you have never used Conan before, a profile is essentially a configuration file. It stores your preferences and environmental variables that are required for building libraries from source, if needed. For example, Conan needs to know which operating system you are running, what your compiler is, and if you want to build your packages as a debug or release build. During development, you want to work with debug builds, but for release, you want to work with release builds to turn on compile-time optimisations.

Conan handles all of that for us, and we can set these options in our profile file. But, for now, if this is the first time for you using Conan, simply force conan to create a default profile for you. This will then contain sensible default values.

conan profile detect --forceYou only need to do this once after you installed Conan. After you have a profile, you can reuse it for other projects as well, in which Conan manages your dependencies. For more information on the usage of profiles, have a look at the documentation.

Now we can install all required dependencies. For this, we need to create a conanfile.txt. This file contains all the required packages we want to download and make available for our build. The contant of the conanfile.txt is:

[requires]

eigen/5.0.1

[generators]

CMakeDeps

CMakeToolchain

[layout]

cmake_layoutWe can see that our project requires Eigen version 5.0.1 to work properly, and we want to use Eigen in a CMake project. We tell that to Conan so that it downloads all required packages (i.e. Eigen in this case) and then prepares it so it can be easily consumed by a CMake project. It does all of the heavy lifting for us behind the scenes.

You may ask how this text file was created. Well, if you know how to copy and paste, you should be able to create this file yourself. Simply head over to the conan center and search for the library you want to use. In our case, searching for Eigen provides us with this page. You can see that we already get the boilerplate content for the conanfile.txt and simply need to copy that into our conanfile.txt within our project.

It even shows you at the bottom how to find Eigen as a dependency within CMake. I love Conan ...

With this file in place, we can now instruct Conan to get all dependencies and set them up for us:

conan install . --build=missing --settings=build_type=ReleaseThe install command will make dependencies available, and we specify that we want to make them available in the current (root) directory. This will then create the required files in a file structure that is understood by CMake. The ---build=missing won't do anything here, as we are using a header-only library, and nothing is built from source.

However, if we were to download another library that actually compiles to a static or dynamic library, we allow Conan to compile these libraries from source if needed. The default behaviour for Conan is to look for a precompiled library that matches our environment (e.g. operating system and build specification). In many cases, that already exists, and Conan simply fetches the already compiled library for us. But, just in case, this does not exist, the --build=missing flag allows Conan to build from source if needed.

The additional --settings=build_type=Release flag will overwrite the build_type variable stored in the profile that was generated by conan. To check what value was created, head over to your home directory of your user (e.g. C:\Users\tom\ (Windows) or /home/tom/ (UNIX)), and go into the .conan2/profiles folder. You will find a file called default which was created when we asked Conan to create a profile for us.

In my case, this file contains build_type=Release and it will likely be the same for you (this is Conan's default behaviour). It may change in the future, so we can overwrite it here. I mainly do it to remind myself what I am building at the moment. You can change it to --settings=build_type=Debug and conan will make the library available in debug mode

Alternatively, copy the default profile file and create a debug file, for example, and change the build_type variable to Debug. To use this profile now, simply run conan with the profile flag:

conan install . --build=missing -pr debugI keep different profiles for different build tasks (debug/release, as well as different compilers), and this makes Conan great with C++. Other dependency managers don't give you this flexibility, or at least not at this level of convenience.

Depending on your build system that was detected by conan, it will write out all of its files into one of the following two possible folder paths:

build/Release/generators/conan_toolchain.cmake

build/generators/conan_toolchain.cmakeCheck where your files have been stored, as this will influence the following command. We have now downloaded all dependencies and want to compile our project with CMake, which uses Eigen. The CMake project is defined in the CMakeLists.txt file within the root directory. The content is:

cmake_minimum_required(VERSION 3.23)

project(

eigenDemo

LANGUAGES CXX

)

# Ensure eigen is available as a dependency

find_package(Eigen3 REQUIRED)

# Add an executable and provide the source file directly.

add_executable(denseMatrices "src/denseMatrices.cpp")

add_executable(denseMatrixVector "src/denseMatrixVector.cpp")

add_executable(cflNumber "src/cflNumber.cpp")

add_executable(pointExample "src/pointExample.cpp")

add_executable(laplacianExample "src/laplacianExample.cpp")

add_executable(interoperabilityExample "src/interoperabilityExample.cpp")

add_executable(leastSquareExample "src/leastSquareExample.cpp")

add_executable(sparseMatrices "src/sparseMatrices.cpp")

add_executable(heatEquation "src/heatEquation.cpp")

add_executable(fluxJacobian "src/fluxJacobian.cpp")

# Link against Eigen

target_link_libraries(denseMatrices Eigen3::Eigen)

target_link_libraries(cflNumber Eigen3::Eigen)

target_link_libraries(denseMatrixVector Eigen3::Eigen)

target_link_libraries(pointExample Eigen3::Eigen)

target_link_libraries(laplacianExample Eigen3::Eigen)

target_link_libraries(interoperabilityExample Eigen3::Eigen)

target_link_libraries(leastSquareExample Eigen3::Eigen)

target_link_libraries(sparseMatrices Eigen3::Eigen)

target_link_libraries(heatEquation Eigen3::Eigen)

target_link_libraries(fluxJacobian Eigen3::Eigen)We specify our minimum CMake version on line 1, as required by CMake, but we are not using any advanced features, so a lower version would also be possible here (though, given CMake's troubled past, it's better not to go too low. Never go below version 3, or you ask for trouble).

We define a simple C+ project on lines 3-6, and then we can request Eigen as a project dependency on line 9. Conan will have done everything for us so that we can cleanly import Eigen here. If you have ever worked with find_package yourself and tried to understand CMake's logic in detecting packages, you'll know the pain. Probably exactly because I have tried to use find_package myself in the past without Conan, I do appreciate and like it so much, because it really is like an aspirin during your worst migraine; it takes the pain away.

I have defined a few targets to keep each example small and self-contained, but in reality, we may only have a single executable or librayr we want to compile and then link against Eigen. The takeaway here is that on lines 12-21 we define all executables (demos) we want to create, and then we link all of them against Eigen on lines 24-33. Remember, these are also pretty much just copied and pasted from Conan's website (look for the targets at the bottom of the page).

We can now compile the project from our terminal. Still within the root folder of the project, write either of these two lines (remember to check where all conan files were generated and select the line that is correct for you).

cmake -B build/ -S . -DCMAKE_BUILD_TYPE=Release -DCMAKE_TOOLCHAIN_FILE='build/Release/generators/conan_toolchain.cmake'

cmake -B build/ -S . -DCMAKE_BUILD_TYPE=Release -DCMAKE_TOOLCHAIN_FILE='build/generators/conan_toolchain.cmake'If you can't be bothered to check, execute both of them; one will fail, one will work. And, if both fail commands fail, don't worry, there are always jobs in project management and a need for engineers with Excel skills. Perhaps CFD isn't for you, then.

For those that are still standing (for some reason, I am thinking of those as having reached the final stage of Takishi's castle(please tell me I am not the only one who watched this?!) ...), we can now finally compile our executables using the following command:

cmake --build build/ --config ReleaseThis will now generate the executables within the build/ folder, either directly inside it or within the Release/ folder. Again, this will depend on your build environment. You should be able to execute these files now. For example, to run the first example for dense matrices, try to run one of the following commands.

On Windows, you may run either:

.\build\Release\denseMatrices.exe

.\build\denseMatrices.exeOn UNIX, you will have no file ending and may run:

./build/Release/denseMatrices

./build/denseMatricesGreat, now that we can compile and run our examples, using either Compiler Explorer online or your own development setup offline, let's look at some examples to get familiar with Eigen!

Code examples for Eigen

What I have done in this section is to come up with some basic examples that show how to use Eigen, so that you can get a feeling for how to use it. Once we understand the basic syntax, we can look at some more relevant CFD applications, and I have created a few simple examples where I show you how I would use Eigen in a real CFD solver.

Basic Eigen usage examples

Working with dense matrices and vectors

In this section, we will deal with dense matrices. But before we do that, let's first understand why we need to differentiate between dense and sparse matrices.

In a mathematical sense, a matrix is a matrix. That's it. But, if pure math is your thing, you would be sitting down with pen and paper and start deriving away, not combing through a website about numerical math (ups, I used the N-word and I am not even a pure mathematician, I'll probably get cancelled for that ... )

If we ever want to achieve something practical with matrices (that is, in a computational sense, e.g. using CFD), then it makes a lot of sense to differentiate between dense and sparse matrices.

A dense matrix is one where all elements of the matrix are stored. A sparse matrix, on the other hand, is one where only the non-zero elements are stored. Thus, a dense matrix stores everything (and thus its name), while a sparse matrix only stores what it needs (and thus its name).

When we were looking at writing our own sparse matrix class a while ago, we looked at the cost of storing a dense and sparse matrix to compute a linear system of the form [katex]\mathbf{Ax}=\mathbf{b}[/katex] for 1 million elements in our mesh. The results were:

- We need about 8 Terabytes (TB) of RAM to store 1 million elements with a dense matrix

- We need about 24 - 96 Megabytes (MB) of RAM (depending on the element shape) to store 1 million elements with a sparse matrix

You can find the example and the computation here: The cost of dense matrices and how sparse matrices save the day.

You see, I am personally a big fan of reducing the cost of my linear algebra computations to a minimum, so that my browser has sufficient RAM to load YouTube for me to watch while I wait for my simulations to finish. Seriously, how have we come to accept it as a normality that our browsers consume Gigabytes of RAM just to display static text? If CFD programmers approached memory management the same way as web developers, we would still be simulating 2D channel flows on 100 by 20 structured grids.

Anyhow, to allow you and me to consume our daily portion of 8 hours of social media doom scrolling, Eigen has sensibly introduced both a dense and sparse matrix class, which largely operate the same. The only difference is how they are internally stored, but you and I as end-users do not see that.

One of Eigen's goals was (and still is) to be a performant C++ library. This means it makes heavy use of templates. In a nutshell, a template is like a blueprint for a house. You create (define) the blueprint of the house, and then you can build many versions of that house at different locations. Templates are similar in that you specify what type you want to use, and your C++ compiler will then create (and optimise!) the required code for you.

The optimisation bit is what is important here. Because templates are evaluated at compile time, your compiler has all it needs to optimise your code. It does come at the cost of increased compile time and horrible error messages that are no longer debuggable, which means that you have to use your lunch breaks to write a C++ template error parser application only to make sense of those pesky template errors.

Thus, we have to be comfortable with templates, but, as long as we know a few things about them, we are good to use Eigen; there really is nothing difficult for us to worry about. We are not developing Eigen, only using it, so we leave all of the fun debugging to the Eigen developers (who have received a fair share of bug reports from my side).

We will jump into code in just a second, but I wanted to look at the basic matrix definitions so that the code will make more sense. To define a matrix, we use the following syntax: Eigen::Matrix. We see that we have 6 template parameters, which are:

ScalarType: This is the type we want each coefficient in the matrix to have, typically primitive variable types likeintordouble.RowsandColumns: The number of rows and columns our matrix should have. This can be either a fixed number, e.g. 3, or we can use the special valueEigen::Dynamicto indicate that we don't know the number of rows and/or columns at compile time. We can then set the size later, for example, after reading some input file.Options(optional): Allows us to set specific matrix options, typically to set the storage order of the matrix. For example, we may useEigen::ColMajorandEigen::RowMajorto tell Eigen that we want to store the matrix coefficients in a row-by-row or column-by-column fashion, respectively (since matrix coefficients are stored in a 1D array internally, so Eigen needs to know how to map this 1D array to a 2D matrix). Since this is an optional parameter, we don't have to set it and Eigen will, in this case, default toEigen::ColMajor.MaxRowsandMaxColumns(optional): Specifies the maximum number of rows and columns inside the matrix. This is rarely used (I have never used it), and may only be useful in cases where you don't know the exact size at compile time, but you know it will never exceed a specific value. In this case, you would set theRowsandColumnsarguments toEigen::Dynamicand then specify the maximum values inMaxRowsandMaxColumns. By default, if you don't set anything, we haveMaxRows = RowsandMaxColumns = Columns.

We will look at how to use this matrix class along with its template options in our first code example, but I want to focus on the second and third template parameters a bit more, as this really is the main differentiating part in how we use matrices in Eigen.

When we specify the number of rows and columns as a template parameter, we speak of a fixed-size matrix, since we fix the size of it at compile time and we cannot change it later. This makes sense for matrices which don't change in size, like 3-by-3 rotation matrices, or flux Jacobians. We know this value at compile time and can thus fix it.

On the other hand, if we set the rows and columns to Eigen::Dynamic, we don't fix te size and thus speak of a dynamic-sized matrix. We can change the size of the matrix at any point in the program, but this flexibility comes with an overhead, and thus we should only do that if we don't know the matrix size at compile time.

For example, if we want to use Eigen to compute the linear system of equation [katex]\mathbf{Ax}=\mathbf{b}[/katex], then we don't know the size of [katex]\mathbf{A}[/katex], as this will depend on the mesh size, which is only known at runtime (unless we always run the same case and hardcode the parameter for the mesh at comple time). Generally speaking, though, we want to be able to allow for different mesh sizes at runtime, and so we can't know the mesh size, and thus the size of [katex]\mathbf{A}[/katex] in advance. In this case, Eigen::Dynamic is appropriate as a size.

Furthermore, it should come as no surprise that if we set either the rows or columns to 1, then we have essentially created a row or column vector, respectively. Thus, a vector is a subset of the general matrix class, though Eigen does not differentiate between the two from an implementation point of view; vectors are treated the same way as matrices.

Because not everyone will feel comfortable with the use of templates, Eigen has provided several typedefs. A typedef is just a simple way to define your own type. For example, if you want to use an unsigned int to loop over your data, you could create a typedef as typedef IndexType unsigned int. Now, you can use the IndexType instead of typing unsigned int all the time, which may be more verbose (and thus serves as code documentation).

Typedefs are still widely used these days, but they are the old way of doing things. In modern C++, we use an alias instead of a typedef, which just has a different syntax but achieves exactly the same thing. For example, the IndexType example using an alias would be using IndexType = unsigned int.

In Eigen, we have several of these aliases or typedefs, and the general structure is: Matrix (or Vector) + size + type. The size is a number between 2 and 4, and the size is the first letter of the type to be used, that is, i for int, f for float, d for double. As an example, Eigen::Matrix3d would define a 3-by-3 matrix using doubles. Eigen::Vector3i would define a column vector with 3 integers, while Eigen::RowVector3i would create the corresponding row vector.

All of these types define a fixed-size matrix or vector, but we can also use dynamic-sized matrices and vectors. For that, we set the size argument to X. For example, Eigen::MatrixXd would be a matrix of unknown size using doubles for its coefficients. If we want to fix the size of a row or column to, say, 4, we can also create a matrix as Eigen::Matrix4Xd or Eigen::MatrixX4d, respectively. For vectors, we simply set the size to X, e.g. Eigen::RowVectorX or Eigen::VectorX.

To see a list of all typedefs (alias') that are provided, you can consult the relevant page in the Eigen documentation.

OK, with this preamble out of the way, we should be able to tackle our first, gentle introduction to Eigen and look at our first code example. The code is shown below. I'll provide explanations below the code section.

#include <iostream>

#include <Eigen/Eigen>

int main() {

// create a 3 by 3 matrix, with the size fixed at compile time

Eigen::Matrix<double, 3, 3> mat1;

// initialise values in matrix to zero

mat1.setZero();

// set all elements of the matrix, row by row

mat1 << 1, 2, 3, 4, 5, 6, 7, 8, 9;

// create a 3 by 3 matrix, with the size fixed at runtime

Eigen::Matrix<double, Eigen::Dynamic, Eigen::Dynamic> mat2(3, 3);

// Alternatively, resize the matrix to any size you want

mat2.resize(3, 3);

// make mat2 an identity matrix

mat2.setIdentity();

// evaluate matrix-matrix multiplication

Eigen::Matrix<double, 3, 3> mat3 = mat1 * mat2;

// change individual entries in a matrix

mat3(2,2) = 5.2;

// change an entire row or coloumn

mat3.row(1) << 3, 2, 6;

mat3.col(0) << 8.7, -2.2, -1.0;

// compute trace of the resultant matrix

auto trace = mat3.trace();

// or, perform the computation manually, using reductions

auto traceManual = mat3.diagonal().sum();

// print results

std::cout << "matrix 1:\n" << mat1 << std::endl;

std::cout << "matrix 2:\n" << mat2 << std::endl;

std::cout << "matrix 3:\n" << mat3 << std::endl;

std::cout << "trace(mat3): " << trace << std::endl;

std::cout << "trace(mat3): " << traceManual << std::endl;

return 0;

}When you run this code, you will see the following output:

matrix 1:

1 2 3

4 5 6

7 8 9

matrix 2:

1 0 0

0 1 0

0 0 1

matrix 3:

1 2 3

4 5 6

7 8 9

trace(mat3): 15

trace(mat3): 15I have commented on the different matrix types and how we define fixed and dynamically-sized matrices. Hopefully, this example makes this clear.

However, there are a few more things I want to point out. The first thing is how we set elements in a matrix or vector. We can either initialise a matrix through the overloaded operator<<, as seen on line 12. It is important that the number of elements we provide matches the size of the matrix. In this case, I provide 9 elements for the 3-by-3 matrix.

If we only want to set the size for a specific element in our matrix, then we can use the overloaded operator(row, col), as seen on line 27. Note that this operator is overloaded for both reading and writing. So, if we need to get the coefficient stored at row 1 and column 2, then we can store this item into a variable as auto coefficient = mat(1, 2);. But, as seen on line 27, we can also set its content directly as mat(1,2) = coefficient; (where coefficient is the value we want to store).

If we are dealing with a vector instead, then we can simply drop the second index and use the syntax vec(index); instead. It doesn't matter if it is a row or column vector; by the way, we just provide the index of the vector from where we want to retrieve the data (or where we want to write the data to).

If we want to simply set the content of a row or column within a matrix, then we can also set that using the row(index) or col(index) syntax, as seen on lines 30-31. Here, we first specify which row/column we want to set, and then we can overwrite its content using the overloaded operator<<, which is similar to how we set the content for the entire matrix on line 12.

In the case of the dynamically-sized matrix on line 15, I have provided two arguments to the constructor, indicating the size of the matrix. This is optional, but if we do provide a size here, Eigen will already take care of memory allocation at this point. If we don't knwo the size of the matrix yet, then we can also use the resize() method and apply that to our matrix as shown on line 18.

In this case, we are resizing the matrix to the same size, and Eigen is clever enough not to perform a memory allocation here, but theoretically, we can resize the matrix to any size here. This may be useful, for example, if we want to support dynamic mesh refinement, where the size of our coefficient matrix [katex]\mathbf{A}[/katex] changes after each mesh refinement stage. In this case, we can resize the matrix to account for all new mesh elements.

We can also see the inbuilt functions setZero() and setIdentity() on lines 9 and 21, respectively. These will set the matrix to specific values as instructed. Additional functions that are available here are setOnes(), setRandom(), and setConstant(value), where value is a number we want to store for each coefficient in the matrix. Thus, mat1.setOnes() and mat1.setConstant(1) will be identical.

On line 24, we evaluate a matrix-matrix multiplication, and Eigen follows a pretty intuitive approach here to evaluate vector-matrix, vector-vector, and matrix-matrix operations. We can always add, subtract, and multiply matrices and vectors, given that they have proper dimensions. Eigen will perform checks and complain if you want to perform operations on non-matching matrices or vectors. For example, you can't multiply a 4-by-4 matrix with a vector that has 2 entries.

For vectors in particular, we also have dot and cross products, and these are supported as special functions on vector objects (that is, matrices where either the row or column is set to 1). If we have two vectors v1 and v2 of the same dimension, then we can compute the dot and cross product using auto dotProduct = v1.dot(v2); and auto crossProduct = v1.cross(v2);. We will see this later in action in other code examples.

On line 34, I compute the trace of the matrix (the sum of all of the diagonal coefficients). There are several functions in Eigen that allow you to either compute something (like the trace, determinant, eigenvalues, or eigenvectors), or to set the state of the matrix (e.g. to transpose the matrix). In true Eigen fashion, these are intuitive to use, that is, can you guess what mat1.transpose(); and auto result = mat1.determinant(); is doing?

Granted, eigenvectors and eigenvalues are somewhat more involved and require some more code for robust calculation of them, but generally speaking, Eigen is pretty straightforward to use as it has a very clean interface design.

Instead of computing the trace of the matrix using the inbuilt function, we can also compute it ourselves using reductions. Reductions, in general, refer to the action of taking a higher-dimensional object (for example, a vector containing 1000 entries) and transforming it into a lower-dimensional object (typically a single value). If you have ever used =SUM(C1:C10) in Excel, you have performed a reduction and reduced the higher-dimensional table of 10 entries into a single sum.

Examples of reductions are summations, calculating a norm, finding the minimum or maximum entry in a vector, and the list goes on. You will find the term reduction commonly used in computer science. It can mean various things, but in the context of programming, it typically means to reduce the number of entries in a vector to one.

A good example in CFD is the computation of residuals. We compute the differences between the old and new solutions in each cell and then either check for the largest difference (L0 norm) or some form of normalised summation (L1 or L2 norm). What we see as residuals, either printed to a plot, or listed as raw data in the console/log files, are the result of reducing these local differences to a single number.

On line 37, we compute the trace manually by first getting the diagonal of matrix mat3 (again, can you guess what the diagonal() method is doing? See, good interface design!), and then we apply the sum() method to this new vector, which reduces all of the diagonal coefficients into a single value. Printing both the manual and Eigen computed trace on lines 43 and 44 confirms that both result in the same trace.

If you understood this example, congratulations, you are now able to use Eigen in your own project. While there is much more depth to Eigen, you now know the most important parts. Performing specific operations on matrices and vectors is just one more step towards manipulating the data into a form you need. The next examples will highlight this.

But, before we approach more CFD-relevant examples, I want to solidify some of what we have looked at and discussed previously and also bring in vectors. This will be the subject of the next code example.

Combining matrices and vectors

In this example, I want to bring in vectors as well and show how they really are just a subset of matrices. Have a look at the example, and we discuss it below the code and its output again.

#include <iostream>

#include <Eigen/Eigen>

int main() {

// use a typedef to define a 3 by 3 matrix, fixed at compile time

Eigen::Matrix3d mat1;

// create a compile-time fixed vector of size 3

Eigen::Matrix<double, 3, 1> vec1;

// create a run-time fixed vector of size 3

Eigen::Matrix<double, Eigen::Dynamic, 1> vec2(3);

// we can again resize the vector if we want

vec2.resize(3);

// set matrix and vector to random values

mat1.setRandom();

vec1.setRandom();

vec2 = vec1;

// compute the matrix vector product

auto vec3 = mat1 * vec1;

auto vec4 = mat1 * vec2;

// compute the vector matrix product, using the transpose

auto vec5 = vec1.transpose() * mat1;

auto vec6 = vec2.transpose() * mat1;

// scalar (dot) product of vec5 and vec6

auto dot = vec5.dot(vec6);

// compute the L2 norm of vector 3

auto L2norm1 = vec3.norm();

// compute the L2 norm of vector 3 with verbose syntax

auto L2norm2 = vec3.lpNorm<2>();

// compute the L1 norm of vector 3

auto L1norm = vec3.lpNorm<1>();

// compute the infinity norm of vector 3

auto infnorm = vec3.lpNorm<Eigen::Infinity>();

// print results

std::cout << "matrix 1:\n" << mat1 << std::endl;

std::cout << "vector 1:\n" << vec1 << std::endl;

std::cout << "vector 2:\n" << vec2 << std::endl;

std::cout << "vector 3:\n" << vec3 << std::endl;

std::cout << "vector 4:\n" << vec4 << std::endl;

std::cout << "vector 5:\n" << vec5 << std::endl;

std::cout << "vector 6:\n" << vec6 << std::endl;

std::cout << "dot product of vector 5 and vector 6:\n" << dot << std::endl;

std::cout << "L2 norm of vector 3:\n" << L2norm1 << std::endl;

std::cout << "L2 norm of vector 3:\n" << L2norm2 << std::endl;

std::cout << "L1 norm of vector 3:\n" << L1norm << std::endl;

std::cout << "infinity norm of vector 3:\n" << infnorm << std::endl;

return 0;

}This will result in the following output:

matrix 1:

-0.934479 0.676625 -0.554193

-0.275777 -0.0601981 0.471348

0.768346 -0.607514 0.125891

vector 1:

-0.398127

0.968061

-0.508405

vector 2:

-0.398127

0.968061

-0.508405

vector 3:

1.30881

-0.188117

-0.958013

vector 4:

1.30881

-0.188117

-0.958013

vector 5:

-0.285558 -0.0187946 0.612928

vector 6:

-0.285558 -0.0187946 0.612928

dot product of vector 5 and vector 6:

0.457578

L2 norm of vector 3:

1.63284

L2 norm of vector 3:

1.63284

L1 norm of vector 3:

2.45494

infinity norm of vector 3:

1.30881You see that I am now using Eigen's typedefs to create a matrix through a shorter syntax. On line 6, we create a 3-by-3 matrix, which is fixed at compile time and its coefficients are stored as doubles. I am also creating 2 vectors on lines 9 and 12, the first being fixed at compile time, the second being dynamically sized at runtime. As shown on line 15, we can resize our dynamic-size vector if needed. On lines 18-20, I am assigning random values to the matrix and vector, and I can assign the content of one vector to another vector using the equal operator (operator=).

We can compute products of matrices and vectors as shown on lines 23-24, and we see that it doesn't matter if these are dynamic or fixed-size matrices/vectors; we can mix and match them as we want. On lines 27 to 28, we compute the product of a vector and a matrix. We see that I use the transpose() function here. This is required as vec1 and vec2 are column vectors, but need to be row vectors if we want to multiply the vector by the matrix from the left.

Try to remove the transpose() function here and see what error you are getting, Eigen is pretty verbose and good at telling you what is going wrong. If I do that, I am getting an error message saying: error: static assertion failed: YOU_MIXED_MATRICES_OF_DIFFERENT_SIZES EIGEN_STATIC_ASSERT_SAME_MATRIX_SIZE(Lhs, Rhs). Granted, this is hidden in all of the template errors you are seeing, but it is there.

On line 31, we see how we can compute the dot product of two vectors, as also previously highlighted. If we want to compute the L2 norm, we can simply call the norm() function on a vector, as seen on line 34. However, we can also specify the norm we want to compute explicitly using the syntax shown on lines 37, 40, and 43, respectively. For the L0 norm, or L-infinity norm, we use the special Eigen::Infinity symbol, as seen on line 43.

You see, working with matrices and vectors is really not that difficult. If you want to learn more about the arithmetic operations you can perform on matrices and vectors, I highly suggest to read the relevant page in the documentation. As always, the documentation pages are succinct and to the point, and come with plenty of helpful examples to get you set up in no time.

CFD-specific examples

Now that we know the basics of Eigen and how to work with it, I want to pivot now to CFD-relevant examples and show you how I would use Eigen for some real CFD applications, and not just toy examples. This is how I am, or would, use Eigen in my own codes.

I have to be honest here, before writing this article, I went through the entire Eigen documentation to see what is relevant and what isn't, and then tried to come up with relevant CFD examples where we can use Eigen's functionality. Some of the functionality I wasn't aware of, but I am glad I checked it out, as I have now discovered so many more use cases for Eigen, and honestly don't know how I can ever replace this library, or write solvers without using it. It truly is the Swiss Army Knife of CFD libraries.

Computing the CFL number

Computing the CFL number in any CFD code is essential. When we are starting out writing our first solvers, we likely start with explicit time integration methods, which typically have a CFL stability criterion attached to them. For advection-dominated flows, we typically have a maximum allowable CFL number of 1, while for diffusion-dominated problems, this drops to 0.5. Thus, we need to compute a time step for which the simulation is stable.

If you want to know how this stability criterion can be derived, we need to talk about the stability of numerical schemes, and under which conditions errors will propagate and diverge the solution process. There is a well-established theory behind this, known as the von Neumann stability analysis, and I have looked at this in my article on implicit and explicit time integration, which you can find here: Explicit vs. Implicit time integration and the CFL condition.

The (inviscid) CFL number is defined as:

\text{CFL} = \mathbf{u}_i\frac{\Delta t}{\Delta \mathbf{x}_i}

\tag{1}

Here, [katex]\mathbf{u}_i[/katex] is the velocity magnitude at cell/vertex [katex]i[/katex], and [katex]\mathbf{x}_i[/katex] is the characteristic spacing of cell [katex]i[/katex]. For a 1D case, this may simply be the distance between two adjacent vertices, but for an arbitrary 2D or 3D cell (e.g. on unstructured grids using polygons and polyhedra), it may be taken as the square or cube root of the volume of the cell, e.g. [katex]\sqrt{V_i}[/katex] in 2D and [katex]\sqrt[3]{V_i}[/katex] in 3D.

Since the CFL number is a local quantity, meaning that each cell will have its own CFL number based on the local velocity and spacing, we have to compute the CFL number in each cell and then find the cell with the maximum CFL number.

In practice, we typically use Eq.(1) to compute a stable time step; thus, we solve it for [katex]\Delta t[/katex] and get:

\Delta t = \text{CFL}\frac{\Delta \mathbf{x}_i}{\mathbf{u}_i}

\tag{3}

We pick a CFL number that is lower than our maximum allowable CFL number (if we are using explicit time integration), and then compute the max allowable time step for each cell. We then need to find the lowest time step and impose that in our simulation. If we picked the largest time step, then the cell which computed the smallest time step would be advanced with the same time step, which likely would locally violate our CFL condition (that is, the locally computed CFL number would be larger than the maximum allowable CFL number).

Thus, Eq.(3), really, is:

\Delta t = \text{min}\left[\text{CFL}\frac{\Delta \mathbf{x}_i}{\mathbf{u}_i}\right]

\tag{3}

For completeness, I have mentioned that Eq.(1) is the inviscid CFL number. That means, strictly speaking, this CFL number is only valid for flows that do not have any diffusion. For CFD applications, that means we do not have any viscous forces. We can express that by setting the viscosity to zero, i.e. [katex]\mu=\nu=0[/katex]. The Navier-Stokes equations then reduce to the Euler equations.

However, in reality, we do have viscosity, that is, [katex]\mu\ne 0[/katex] and [katex]\nu\ne 0[/katex]. In that case, we can also compute a diffusive, or viscous CFL number. This is defined as:

\text{CFL}=\Gamma\frac{\Delta t}{(\Delta \mathbf{x})^2}

\tag{4}

Here, [katex]\Gamma[/katex] is our diffusive coefficient, e.g. [katex]\mu[/katex] or [katex]\nu[/katex] for the momentum equations, but it could also be the heat diffusion coefficient [katex]\alpha[/katex], for example, if we are dealing with pure heat diffusion problems.

For most cases (certainly for most industrial relevant flows), the inviscid forces dominate the flow. We can determine that by the Reynolds number, as that is giving us essentially a ratio between the inviscid and viscous forces. Thus, for any flows where we have [katex]\text{Re}\gg 1[/katex], the inviscid forces dominate, and we can only consider the inviscid CFL number definition as given in Eq.(1). Or, if we want to dumb it down further, if we are using a turbulence model, inviscid forces dominate viscous forces.

There is a catch here, though. We may have [katex]\text{Re}\gg 1[/katex] for the freestream properties of the flow. However, that doesn't mean that this is given everywhere in the flow. For example, near the wall, the velocity decelerates and approaches zero, so that diffusion may dominate.

If we are using explicit time stepping (i.e. we are limited in our max CFL number), then we should compute both the inviscid and viscous CFL numbers and choose the smaller one. However, if we use implicit time-stepping and thus are not limited in the CFL number, we typically don't care about the viscous contribution and only consider the inviscid part. This is commonly done in general-purpose CFD solvers.

You may be saying that it is, perhaps, wasteful to compute the time step in each cell only to then advance the solution with the smallest time step. Indeed, if we have a large separation in cell sizes (perhaps some small inflation layers near walls and then some larger elements in the farfield), we will have a large difference between the smallest and largest computed time step. Some cells may then have [katex]\text{CFL}\ll 1[/katex]. This will result in slow convergence.

This is a common issue, and several solutions are available. We can either treat the problem as a steady-state problem, in which case we don't care if each cell is advanced with a physically correct time step (we don't care about the time history of the flow, only about the final result).

In this case, it is perfectly acceptable to let each cell advance the solution in time at its own time step. This allows us to let each cell advance in time at the same CFL number, resulting in faster (steady-state) convergence. This is known as local time stepping.

If, however, we really want to have a time-accurate flow, but we have the same issue of large separation in scales between the smallest and largest element, we can use the same local time stepping technique, but we have to augment that with a second, time-accurate time derivative. This yields to the so-called dual time stepping.

Now, at first, this approach is somewhat confusing. For example, the incompressible momentum equation becomes:

\frac{\partial \mathbf{u}}{\partial t} + \frac{\partial \mathbf{u}}{\partial \tau} + (\mathbf{u}\cdot\nabla)\mathbf{u} = -\frac{1}{\rho}\nabla p + \nu\nabla^2\mathbf{u}

\tag{5}

Here, the first derivative resolves the solution in real time, while the second, pseudo time derivative is the aforementioned local time stepping time integral. During each time step, we update the pseudo time derivative, that is, we advance the solution in pseudo time (which effectively drives each time step to a steady state solution). Once that is achieved, the solution for the current time step has converged, and we can update the real-time derivative.

If you find this confusing, you are not alone; it only really starts to make sense once you implement it. But, once you know how it works, you start to realise that the fundamental working principle of the dual time stepping is found in many codes, even though it doesn't use this name. For example, in OpenFOAM, the incompressible solver of choice for unsteady, turbulent simulations is pimpleFoam, a combination of the SIMPLE and PISO algorithm.

The PIMPLE algorithm, really, is just the PISO algorithm with some additional corrector steps. These corrector steps are essentially the same as the dual time stepping, that is, we solve the momentum equation several times per time step. We do that to increase the maximum allowable CFL number, which is, in essence, what the dual time stepping procedure is used for.

Or, if we solve a non-linear system using an implicit treatment, well, we have to solve the linear system of equations [katex]\mathbf{Ax}=\mathbf{b}[/katex]. If we have strong non-linearities, we may need to apply some additional iterations within each time step, where we solve this linear system several times. This can be done in a few ways, but the most common approaches here are to use a Newton-Raphson or Picard iteration. All we are doing here is essentially solving [katex]\mathbf{Ax}=\mathbf{b}[/katex] a few times per time step until [katex]\mathbf{x}[/katex] changes little.

In any case, we don't need to dive further into dual time stepping here; all we are concerned with is computing the inviscid CFL number of some flow. The example below does exactly that for a simplified 1D example with some dummy data. Let's look at the code first and then discuss where Eigen comes in to do its magic.

#include <iostream>

#include <Eigen/Eigen>

#define PI 3.141592

int main() {

// number of cells and vertices

const int nCells = 100;

const int nVertices = nCells + 1;

// some random velocity field

auto u = Eigen::Vector<double, nCells>::Random();

// create the mesh

Eigen::VectorXd x = Eigen::VectorXd::LinSpaced(nVertices, 0, 1);

// apply cosine clustering so that more points are towards either end

x = 0.5 * (1.0 - (PI * x.array()).cos());

// mesh spacing

auto dx = x.tail(nVertices - 1) - x.head(nVertices - 1);

// some assumed time step

auto dt = 0.001;

// compute the CFL number using the smallest spacing and largest velocity

// CFL = max(u * dt / dx)

auto CFL = (dt * u.array().abs() / dx.array()).maxCoeff();

// print out some values

std::cout << "CFL number: " << CFL << std::endl;

std::cout << "max velocity c0mponent: " << u.array().maxCoeff() << std::endl;

std::cout << "minimum spacing dx: " << dx.array().minCoeff() << std::endl;

return 0;

}This produces the following sample output (yours will be different, as I am using random numbers here to initialise the velocity vector):

CFL number: 3.33135

max velocity component: 0.999987

minimum spacing dx: 0.00024672On lines 8-9, we define the number of cells and vertices to use, so that we can construct a simple mesh on line 15. This is, initially, a vector with nVertices going from [katex]x=0[/katex] to [katex]x=1[/katex], but then we apply some clustering towards each edge using a cosine function on line 18.

Now, let's investigate line 18 a bit more in-depth. We have defined the vector x as, well, a vector on line 15. Thus, Eigen treats it as such. This means we can do certain things with this object, for example, compute the dot or cross product (which is only defined for vectors), but we are restricted in other ways (for example, dividing two vectors, which isn't mathematically defined).

Thus, we can transform an Eigen vector into a plain Eigen array by calling the array() method on a vector. Similarly, we can transform an Eigen array into an Eigen vector by calling the matrix() method on it (A matrix with a single column or row is just a vector).

When we transform a vector into an array, what happens is that we lose all associated vector properties (like dot or cross products), but we gain other functionalities, like calling component-wise functions that we can apply directly to each array coefficient. In our case, we multiply each component of the x vector by [katex]\pi[/katex], and then we call the cosine function on each element. This is what happens in this statement: (PI * x.array()).

Notice that I have enclosed the product of PI * x.array() in additional parenthesis. After this multiplication is done, the resulting object is still an array. By placing parentheses around it, I can call additional array functionalities on it. In this case, I use it to call the cosine function on it. There are quite a few of these functions available to us, and you can find a list in the Eigen documentation here: Catalog of coefficient-wise math functions.

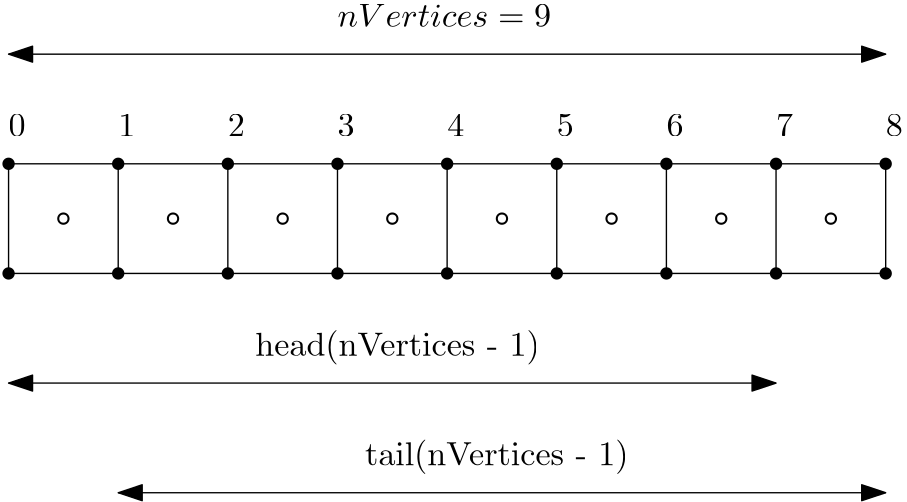

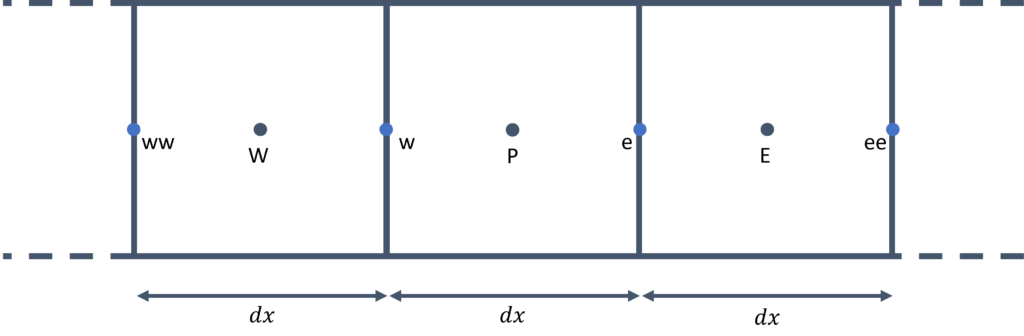

Now that we have a mesh with non-uniform spacing, we need to compute the spacing for each cell. This is done on line 21. I am using here two functions on x; the head(N) and tail(N) function. The head(N) function will return the first N elements in the vector x, while tail(N) returns the last N elements in the vector. I am setting N here to the number of vertices minus 1, which essentially is the same as the number of cells. Thus, dx will contain a spacing for each cell.

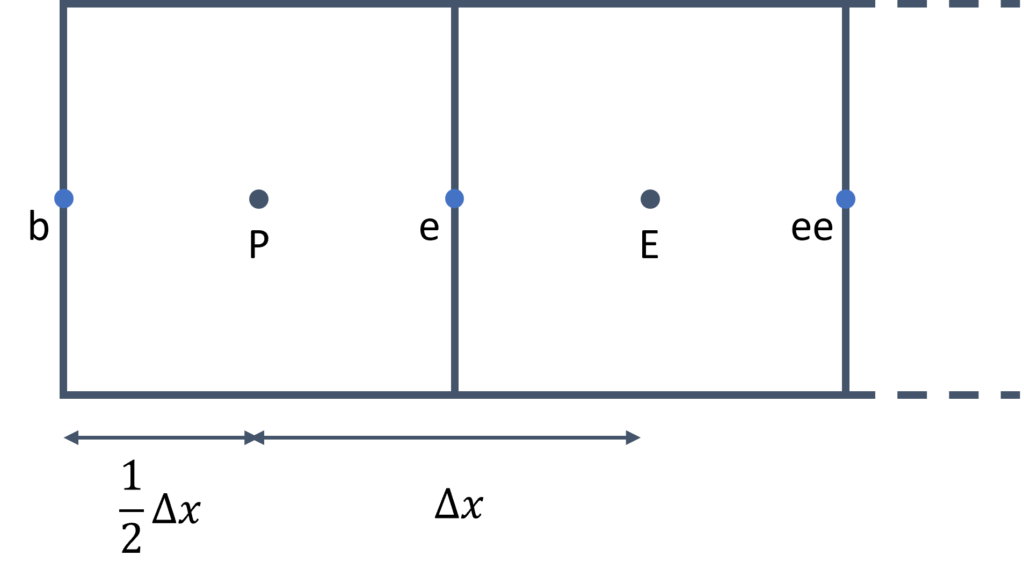

To see what is happening here, consider the following schematic:

Here, we have a total of 9 vertices (solid circles) and thus 8 cells (where hollow circles denote the cells' centroid). If we call tail(8), we get the last 8 entries in the vector, and if we call head(8), we get the first 8 entries in the vector. If we now subtract the vector produced by the head() function from the vector produced by the tail() function, we essentially compute [katex]x_{i+1} - x_i[/katex].

This is the definition for the local spacing of each cell in a 1D grid, and thus line 21 computes the grid spacing vector, all in just a single line of code, thanks to Eigen's functionality.

After we set the time step arbitrarily on line 24, we can now compute the CFL number on line 28. There are a few things to note about line 28:

- First, we call again the

array()function on both the velocity vectoru(as defined on line 12, containing random velocity values) and the spacing vectordx. This allows us to divideubydx. - However, before we compute the fraction, we make sure that we take the magnitude of the velocity vector

uby calling theabs()function on the generated array. This will ensure that we check the magnitude of both the largest and smallest (i.e. most negative) velocity components. - If we don't change the vector

uanddxto an array, Eigen would not compile our code and crash with an error saying that vectors cannot be divided. - After we multiply the fraction of

uanddxby the time stepdt, we take the entire generated array, enclose it again with parentheses and then find the maximum value using themaxCoeff()function.

Alternatively, we could have set a CFL number on line 24 and then calculated the smallest time step on line 28 according to Eq.(3), where we would now use the minCoeff() reduction operation on the generated time step array, to get the smallest time step required to satisfy the CFL condition.

I think that line 28 is also a perfect demonstration of the power of object-oriented programming, and, perhaps, if you needed further convincing, consider the alternative. If we wanted to write a CFD solver without any object-oriented programming, then all we have available are functions. Functions require us to pass in all necessary data (or worse, work with global variables), and then we have to write verbose code.

For example, line 28 above, without object-oriented programming, would be replaced by:

double manualComputedCFL = 0.0;

for (int i = 0; i < nCells; ++i) {

auto temp = dt * std::fabs(u(i)) / dx(i);

if (temp > manualComputedCFL)

manualComputedCFL = temp;

}This isn't necessarily bad, but consider all the logic we would have to provide elsewhere, for example, the initialisation of the u and dx vector, with explicit memory allocation (and deallocation). Then, each operation would require a loop (for example, to compute x, then its transformation, and finally to compute the spacing vector dx). All of this would add additional code similar to the one above.

Why is that bad? Well, for starters, let me ask you, what is easier to understand? The non object-orientated code example above, or the Eigen version? Using object-oriented programming allows us to hide things like loops and passing of variables to functions. Instead, we focus on implementing logical features into classes that make our code extremely readable.

In any case, I think you get my point; this example already got a bit longer than expected. Perhaps it is time to move on to the next example.

Computing geometric information: The Triangle-Point example

When we are dealing with unstructured grids, we commonly have to deal with elements such as triangles. I will focus on triangles in this example, but this can be extended to other elements as well.

Let's consider the following: I want to compute the inviscid fluxes using the finite-volume method. We can discretise our inviscid fluxes by first integrating over a finite volume (the cell's volume), and then replacing the volume integral by a surface integral. We do that as surface integrals have better conservative properties than volume integrals, as I have discussed in why is the Gauss theorem king?.

In equation form, this can be written for a 1D example (for simplicity) as:

(\mathbf{u}\cdot\nabla )\mathbf{u} = u\frac{\partial u}{\partial x} \rightarrow \int_V u\frac{\partial u}{\partial x} \mathrm{d}V = \int_A n\cdot u^2 \mathrm{d}A

\tag{6}

We can notice two things from the final discretised form in Eq.(6).

- We need the surface normal vector [katex]n[/katex]

- We need the surface area [katex]A[/katex] of the element over which we integrate.

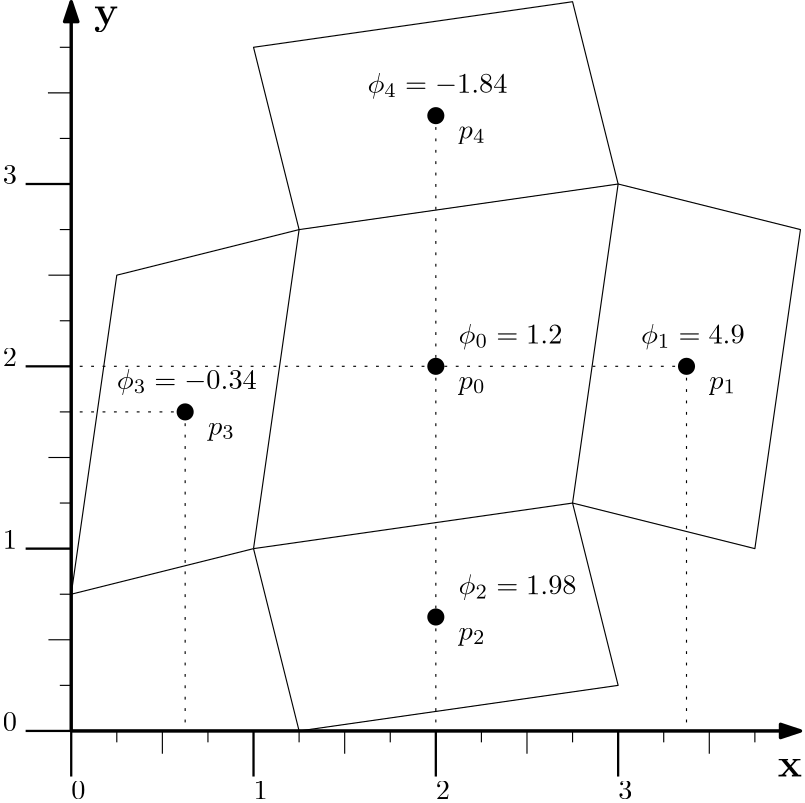

Let's say that the element we want to integrate over is a triangle. In that case, given 3 points (for example, from our mesh generator), we need to reconstruct these two geometric properties from these 3 points alone.

The normal vector can be computed from the cross product of two edges that are formed from the triangle points. That is, if we have [katex]P_1[/katex], [katex]P_2[/katex], and [katex]P_3[/katex] given, which are the three vertices of a triangle, then we can define two edges of the triangle as [katex]\overline{P_2 P_1} = P_2 - p_1[/katex] and [katex]\overline{P_3 P_1} = P_3 - P_1[/katex], for example.

With these edges defined, the normal vector can be computed from the cross product as:

\mathbf{n} = \overline{P_2 P_1} \times \overline{P_3 P_1}

\tag{7}

We can normalise this normal vector to have a magnitude of 1. This is achieved by:

\mathbf{n}_\text{normalised} = \frac{\mathbf{n}}{|\mathbf{n}|}

\tag{8}

As it turns out, the area of a triangle can be computed as half the (non-normalised) normal vector magnitude, that is:

A = 0.5 \cdot \sqrt{n_x^2 + n_y^2 + n_z^2}

\tag{9}

This is done in the following code example. As per usual, I'll let you go through it first, and then I'll add my comments and explanations below.

#include <iostream>

#include <Eigen/Eigen>

class Triangle {

public:

using PointType = typename Eigen::Vector3d;

public:

Triangle(PointType p1, PointType p2, PointType p3) : _p1(p1), _p2(p2), _p3(p3) {

calculateNormalVector();

calculateArea();

}

~Triangle() {}

double getArea() { return _area; }

PointType getNormalVector() { return _normalVector; }

private:

void calculateNormalVector() {

auto tempNormalVector = normalVector();

tempNormalVector.normalize();

_normalVector = tempNormalVector;

}

void calculateArea() {

auto tempNormalVector = normalVector();

_area = tempNormalVector.norm() / 2;

}

PointType normalVector() {

auto p2p1 = _p2 - _p1;

auto p3p1 = _p3 - _p1;

auto normalVector = p2p1.cross(p3p1);

return normalVector;

}

private:

PointType _p1;

PointType _p2;

PointType _p3;

double _area;

PointType _normalVector;

};

int main() {

// create 3 points in the xy plane

Eigen::Vector3d p1 = {0, 0, 0};

Eigen::Vector3d p2 = {1, 0, 0};

Eigen::Vector3d p3 = {0, 1, 0};

Triangle triangle(p1, p2, p3);

std::cout << "Area of triangle: " << triangle.getArea() << std::endl;

std::cout << "Normal vector of triangle: (" << triangle.getNormalVector().transpose() << ")" << std::endl;

return 0;

}Running this example will produce the following output:

Area of triangle: 0.5

Normal vector of triangle: (0 0 1)I have defined a Triangle class here on lines 4-44. This class takes three points as a constructor argument, as seen on line 9. Each point has a type of PointType, which is a typedef. The PointType itself is defined on line 6 using the more modern C++ syntax with the using keyword rather than the typedef keyword, as discussed previously. The PointType type is just a wrapper around an Eigen vector with three entries, all storing double values (hence the name Vector3d).

At construction time, we store the points p1, p2, and p3 that are passed to the constructor and store them as private variables within the class, as defined on lines 39-41, as _p1, _p2, and _p3. I know, I am committing a cardinal sin here by placing an underscore in front of the variable name, not after (e.g. p1_), or using the m_ naming convention as m_p1.

However, in any other sensible programming language, placing an underscore in front of the variable name indicates this is a private variable, and I like this convention. Why should I change just for C++? Well, I know why, but I disagree with it. But, if you have too much time on your hands, why not go through the reasons written by someone who has even more time than you and me: What are the rules about using an underscore in a C++ identifier?.

Within the constructor, in lines 10-11, I do two things to set up the class: First, I calculate the normal vector using the calculateNormalVector() method, followed by the area calculation through the calculateArea() function. Let's look at these in turn.

The function for calculateNormalVector() is defined on lines 20-24. It is within a private access modifier, meaning that only the class itself may call this function (which it does within the constructor). If we are instantiating objects later from this class, we couldn't call this function from outside the class.

The first step in the calculateNormalVector() method is to call the normalVector() method, which in turn is defined on lines 31-36. In this method, we first create two arbitrary edges on the triangle (on lines 32 and 33), where we take two points in the triangle and subtract them from one another. Since the PointType is really just an Eigen::Vector3d, arithmetic operations like subtractions are defined on this object and Eigen knows how to subtract two vectors for us.

We then find the normal vector on line 34 by taking the cross product of these two new vectors. As a simple thought experiment, if the edge p2p1 has a vector of (1,0,0) and the edge p3p1 has a vector of (0,1,0), we know that the cross product of these two vectors will be (0,0,1). Thus, this function does indeed return the normal vector of a triangle.

On line 35, we return this normal vector from the function. Going back to the calculateNormalVector() on line 20, we store this result on line 21. On line 22, we call the normalize() function on the vector, which will execute Eq.(8) for us. We then store the result within the _normalVector variable on line 23. Later, when we access this variable, it will contain the correctly scaled normal vector for us.

Returning to the constructor, on line 11, we compute the area of the triangle through the calculateArea() method, as defined on lines 26-29. Here, we first compute the non-normalised normal vector on line 27. We then apply the norm() method to this normal vector on line 28. The norm() method will return the L2-norm, and this is a reduction operator (reducing all elements in the vector to a single value). It is defined as:

\parallel P \parallel = \sqrt{p_0^2 + p_1^2+p_2^2 + ... + p_N^2}\tag{10}For a vector with three elements, this reduces to:

\parallel P \parallel = \sqrt{p_0^2 + p_1^2+p_2^2}\tag{11}As we can see, this is the same as taking the magnitude of the vector. Thus, on line 28, after we call norm() on the normal vector, we get its magnitude, which, when divided by 2, results in the area of the triangle, as demonstrated by Eq.(9). And this is it, really.

The main function on lines 46-57 shows how we may use this class. We define three points on lines 48-50, which are of type Egen::Vector3d (which we have redefined as PointType within the class). We create an instance of the Triangle class on line 52, where we pass these points to.

Afterwards, we call the getArea() and getNormalVector() methods from our Triangle class, as defined on lines 16 and 17. These are getter functions that simply return the value of the precomputed area and normal vector.

On line 54, we also call the transpose() method on the returned normal vector. By default, Eigen stores vectors as column vectors, and so if you print them, they would take up 3 lines in the console. However, for printing, a row vector may look better, and so I am printing the transpose of the column vector here, which is a row vector. This is purely for aesthetics and isn't required in a mathematical sense.

Well, this example certainly took a bit more code and showed very little functionality of Eigen; however, to show you some more CFD-relevant cases, I do need to provide some setup to show the usage of Eigen in the right context. Hopefully, you can see how, in this example, my 3D manipulation of geometric data can be easily achieved with Eigen. This is a simple example, but whenever you are dealing with geometric operations, Eigen is your friend!

Discretising partial differential equations with Eigen

Now, let's dive into a much more involved scenario. If I am interested in quickly prototyping a solver, then what follows in this example is likely how I would use Eigen. We will look at mesh generation, storing of data, creating views into the data, and discretising partial differential equations. This example is longer, but again, aimed at showing you how you can integrate Eigen tightly into your code base.

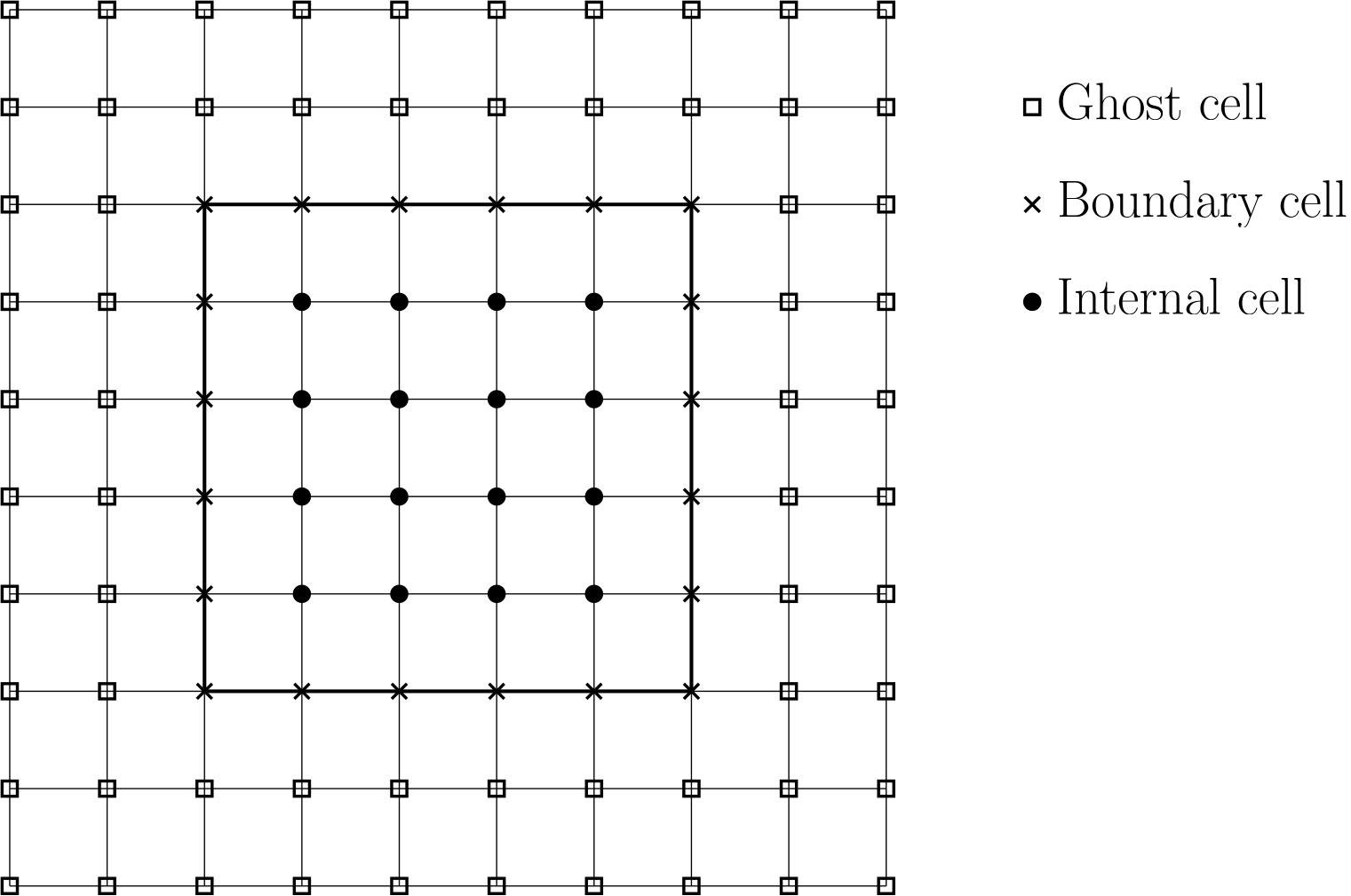

To start with, let us consider a typical grid arrangement that you may have for a structured grid. This is shown below:

Here, we have three different types of vertices: Those that are outside the domain (which we call ghost cells or vertices), those that are on the boundary, and those that are internal to the domain (i.e. within the domain).

When we have a grid like that, we may want to be able to loop over the internal vertices only (for example, to update our solution in time), or we want to loop over each boundary separately to update the boundary conditions. In other words, we may want to loop over only a subset of the grid. Eigen allows us to define what is known as a view in C++.

If you look outside a window, you have a view as well. You can only see what is visible through the window. If your window were larger, you would be able to see more. Make it tiny, and you see only a small fraction of what is outside.

The same concept applies to a view in C++. It presents a subset of our data. For example, if I have a vector of 1 entries, a view may be vector containing all entries as the original vector, except for the first and last value. What is important here is that a view does not create a second vector and copies data back and forth; instead, it will provide us access to a subset of the original data.

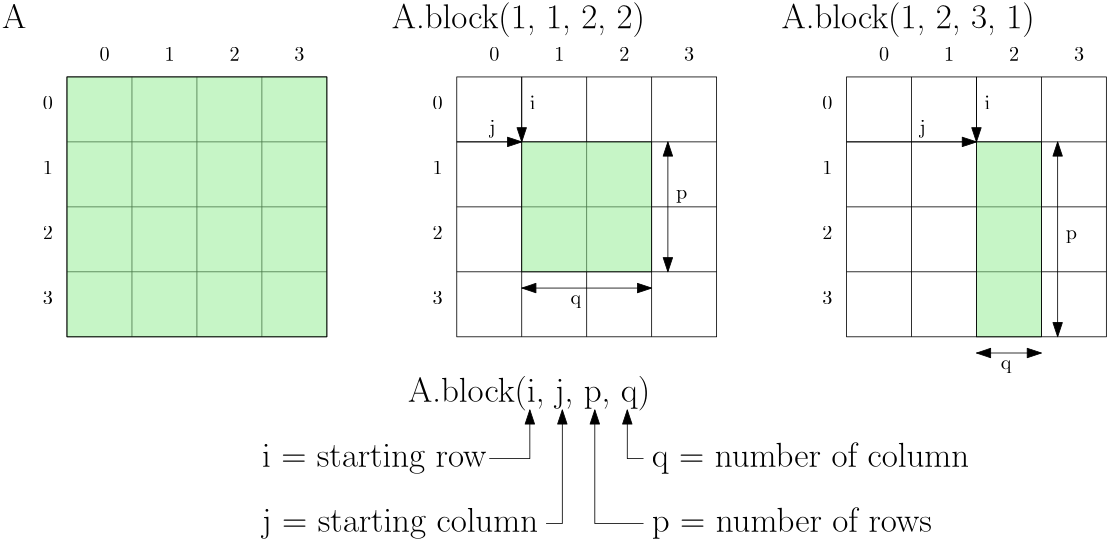

In Eigen, we can achieve that with the block() method. This method accepts 4 parameters, where the first two specify the row and column where we want our view to start, and the last two entries specify how many elements we want to include in our view. This is all wonderfully abstract, so let's follow that up with an example. Consider the following 4-by-4 matrix [katex]A[/katex]:

Here, we see the original matrix on the left. In the middle, I discard the first and last row and column, so I start row=1 and column=1, and I have two rows and columns. The example on the right shows a somewhat arbitrary example to help enforce the definitions of these different variables. We say that we start at row=1 and column=2, and we want to expand our view three rows down, with only a single column.

Remember, all of these views don't require memory allocation. Instead, what happens internally is that Eigen uses iterators to only serve the elements we have requested. Iterators are just pointers to data members and so don't store any memory themselves.

OK, with that, we should now be able to look at the next code example and see what is happening here. Try to see if you can make sense of the code, and I'll catch up with you afterwards.

#include <iostream>

#include <Eigen/Eigen>

int main() {