How to implement boundary conditions in CFD

In this series thus far, we have looked at the governing equations of CFD, how to discretise them and what their characteristics are. We have looked at different numerical schemes and how they influence various properties, such as accuracy. Eventually, we need to talk about boundary conditions, as every simulation will have to truncate the simulation domain somewhere.

Thus, in this article, we take a deep dive into the (fascinating?!) world of boundary conditions. I promise you, you might think they are easy and straightforward (I've been there), but they are not. The devil is in the details, and by the end of this article, you will have an appreciation for what common issues, both solved and unsolved, arise when we have to deal with boundary conditions.

In the process, we will see that there really are only three different types of boundary conditions, and if you understand them, you can model any kind of boundary. In most cases, we don't even need the third, so really, just knowing two is all you need. With these two (or three) fundamental types of boundary conditions, we can derive boundary conditions like walls, symmetry planes, inlets, outlets, and so on, which are just different combinations of these two fundamental types of boundary conditions for the flow variables we are solving for.

I can't make boundary conditions look sexy, but I can tell you about all of the dead bodies they have been hiding and their troubled past (and future). If that is something you are interested in, get the popcorn. We have a journey in front of us!

Introduction

When you performed your first CFD simulations, you probably simulated some kind of laminar or even turbulent flow using a commercial or open-source solver. You selected some boundary conditions, perhaps a combination of inlets and outlets, and sprinkled in some solid walls here and there. You solved this simulation, got results, and presumably, you were very happy with the results. If this describes you, then you would be forgiven for thinking that imposing boundary conditions is easy.

Well, a lot happens behind the scenes, which is abstracted away from you. Unless you deal with OpenFOAM (where the developers really want you to think long and hard about your boundary conditions and, by extension, about your life choices: I could have used Fluent/STAR-CCM+ instead ...), the end result you see is a combination of violated physics and some undisclosed black magic. Let's face it: we probably all go to hell for the way we treat boundaries. We don't want to admit it, but we know it to be true in our hearts. Or is it just me?

When I applied for my first PhD position on Large Eddy Simulations (LES) and Direct Numerical Simulations (DNS), I was invited for an interview, and I got into a heated argument with one of the post-docs. He was working in the wind tunnel (and, as you are probably aware, there is a healthy portion of distrust and hatred between computational and experimental fluid dynamicists!). So naturally, I didn't take his opinions on CFD too seriously. He insisted that boundary conditions are one of the hardest things to get right, whereas I was of the opinion that they are easy.

I got offered the PhD position (to everyone's surprise, including mine), and later found myself trying to implement boundary conditions into my Cartesian grid-based solver in 2D for steady, laminar flows (we experts call this piss-easy, yes, this is scientific terminology ...). To my surprise, I was struggling, and while I was trying to resolve my boundary condition issues after spending months going through the literature and looking for answers, I remembered the discussion I had with the post-doc. Could he have been right?

Later, I was working on a project using Large-Eddy Simulations. I also supervised a PhD student at the time working on high-resolution schemes on unstructured grids for external aeronautical applications. We were both suffering because of boundary conditions. My inlet boundary conditions could only be described as borderline illegal, while my PhD student did confess to me, after 9 months of troubleshooting, that he just copied and pasted boundary conditions from a different case. After I made some modifications, his case worked, but now he is going to hell, too (I guess).

Boundary conditions are hard. If you believe they aren't, then you either have never implemented them yourself or have never done any simulations where the adverse effects of boundary conditions become an issue. If you want to challenge yourself, go and set up a channel flow with Large Eddy Simulations. But no cheating; periodic boundary conditions aren't allowed. You will realise that there are probably as many different inlet boundary conditions as there are RANS turbulence models, and some are better than others, but not necessarily more correct.

In 1991, Sani and Gresho (who would later also publish their book on finite elements in CFD applications) held a mini-symposium on open questions and challenges for open boundary conditions during the Seventh International Conference on Numerical Methods in Laminar and Turbulent Flow. Three years later, they published a bleak review of the outcome of this mini-symposium.

The test cases they discussed were classical test cases such as the steady-state flow over a backward-facing step (with and without heating), the unsteady flow past a circular cylinder, and the steady-state flow through a channel. But these cases came with a twist; test cases needed to be simulated with boundary conditions imposed in two locations.

The first location was far downstream, i.e. far enough away that it would not influence the simulation. However, the second location was in a region where the flow was still developing. For example, for the circular cylinder case, the boundaries were placed as close as four diameters away from the cylinder, where the von Kármán vortex street is still developing.

The participants presented results for different outlet boundary conditions, and in their 1994 review, Sani and Gresho summarised the mini-symposium and its outcome as:

It has been an exercise in frustration and we are not thrilled with the results obtained

You would think that 30+ years later, we would have solved all of these issues. We haven't. Sure, we have made some advances in specific areas, such as inlet boundary conditions for scale-resolving turbulent flows, but we are still struggling with very fundamental problems. If you have ever worked with Fluent, for example, then you might have encountered reverse flow at the outlet. Once that happens, your convergence is affected, so the developers at Fluent have implemented a fix.

What's that fix? Well, if you encounter reverse flow on a specific cell at the outlet, Fluent will temporarily turn that cell into a wall, preventing flow from entering. Genius, right? Now, you tell me if this is a true reflection of the physics that is going on or a quick and dirty fix we have come to accept because we haven't made any advances in the past 30 years.

The fundamental problem with boundary conditions

Before we now go into deep philosophical discussions on boundary conditions, let's address the issue at hand. Why are they so difficult? Why can't we make advances in this field?

The short and simple answer is: because boundary conditions don't exist in real life, at least not all of them!

To see that this is true, let us look first at boundary conditions that do exist. These are solid boundaries, i.e. walls. In a computational sense, nothing can penetrate a solid boundary, i.e. fluid can't go through a wall (unless we impose some form of porosity, which I am ignoring in this example). In real life, we do have physical wall-type boundaries as well. The cup I had my tea in this morning (Madam Grey, two sugars, and a flood of milk, apparently called a builder's brew ...) effectively held the fluid in one place. There was no dripping or loss of fluid.

If you want to simulate the flow around an airfoil and also decide to run some wind tunnel experiments (of course, without telling your friends, you don't want to be seen hanging out with those experimental people!), then you can go into the test section and physically touch the wing that is installed in the wind tunnel.

Solid boundaries can be found in real life and, thus, are easy to model. But what about open boundaries like inlets and outlets? Think about it for a second. Can you come up with an example of an inlet or an outlet in real life? You can? Well, think again. There are no such things as inlets and outlets or, in general, open boundaries in real life. Every system is connected to another system in some way.

If you simulate the flow through a pipe, whatever you impose at the inlet has to come from somewhere, a part of the system that you have conveniently chosen not to model in your simulation. Or go back to the example of the airfoil simulation. In the wind tunnel, whatever leaves the test section (i.e. what we would call an outlet in a CFD simulation) is recycled, straightened, and pressurised before it is returned through the wind tunnel to the test section (i.e. what we would call an inlet in a CFD simulation).

There are no open boundaries in real life, and if you want to have a physically correct system, then you are only allowed to use solid walls as your boundary condition. Take the wind tunnel, for example. Instead of imposing a fixed inlet velocity, you could also model the entire wind tunnel, including the spinning rotor, which would drive the flow. This geometry would consist of only walls and thus be a valid physical representation. But as soon as you chop off parts of the system and model the effect of the rotor through an inlet, you have changed the original system.

For a steady-state RANS simulation, this might be OK, but you will still have to impose boundary conditions for your RANS model. If you are solving this flow with the [katex]k-\omega[/katex] SST model, for example, you will need to impose boundary conditions at the inlet for the turbulent kinetic energy [katex]k[/katex] and the specific dissipation rate [katex]\omega[/katex]. Are you confident that you can impose correct values for these quantities? And is a uniform distribution of [katex]k[/katex] and [katex]\omega[/katex] across the inlet plane a good approximation or would you expect these quantities to be non-uniformly distributed?

Turbulent kinetic energy is produced in boundary layers, i.e. close to the walls, and where you have turbulent kinetic energy, you also have dissipation. Thus, you probably have much more happening at the edges of your inlet, where it intersects a solid wall. OK, so how thick is the turbulent boundary layer at your inlet? After all, you have chopped off part of the system; had the upstream section been included, a boundary layer would already have developed along the wall by the time the flow reached the inlet plane. You need to know the thickness of this boundary layer in order to impose realistic values for [katex]k[/katex] and [katex]\omega[/katex] here.

Thankfully, RANS solutions are usually not very sensitive to the exact inlet values of [katex]k[/katex] and [katex]\omega[/katex], so you will still get fairly good results in most cases, even if you can't accurately describe these values at the inlet. This is one positive attribute of RANS models. But once you want to resolve more turbulence, rather than modelling it, i.e. we are talking about scale-resolving turbulence such as Detached-Eddy Simulations (DES), Scale-Adaptive Simulations (SAS), Large-Eddy Simulations (LES), or god forbid, even Direct Numerical Simulations (DNS), then the issues discussed above become a real problem!

Because we are now resolving turbulence, we also need to resolve the boundary layer at our inlet. If we don't, well, then we are solving a different type of flow. So how do we get around this issue? Well, there are several solutions in the literature; all of them are approximations, and none of them is exact.

The simplest approach is to give up before we even start, wave a white flag and pretend that there is no turbulence at the inlet. In this case, we set all turbulence to zero and only impose freestream conditions for velocity, pressure, and potentially temperature. This is the simplest approach and, in some cases, a good approximation. Wind tunnels are able to suck away unwanted boundary layers, so as long as we place our inlet at exactly the position where the boundary layer is sucked away and we develop a new boundary layer, we should be golden, right?

Perhaps, and thinking only about the velocity, this is likely a good approximation. But what about the pressure? We looked at elliptic flows in an earlier article within this series, and the pressure is, for the most part, governed by elliptic behaviour. What that means is that a change in pressure at one point of the flow will instantly propagate throughout the domain. This is why your flow starts turning around an obstacle before it has even reached it. Look at the streamlines around an airfoil, as shown in the above-linked article, to see what I mean.

What this means for our simulation is that, by placing an inlet somewhere, we only allow pressure (waves) to propagate away from the inlet. The pressure cannot travel upstream beyond the inlet, cannot influence the velocity upstream of it, and, as a result, cannot change the velocity profile that would otherwise develop at the inlet. This may seem like a small thing, but always remember that the Navier-Stokes equations are non-linear. A small change can have large consequences. After all, transition to turbulence can be triggered by disturbances as small as microscopic surface roughness.

You can show (and I have) that removing an upstream portion of the flow and replacing this with an inlet with a defined velocity profile that was measured in an experiment will give you different results compared to just simulating the upstream portion of the domain. It is the pressure that plays a critical role here, and concentrating only on the velocity will result in inaccuracies.

Of course, if you are dealing with supersonic flows, your pressure behaves hyperbolically, meaning that it has a preferred direction of travel, unlike elliptic flows, where it is travelling in all directions, and so imposing an inlet here is easier. While you have gained simplicity at the inlet, you have shifted the problem to the numerical schemes, which now have to deal with strong non-linearities. We saw in the last article how to tackle those. Alas, not every flow is supersonic, so we are stuck with elliptic pressure-induced boundary issues.

Hopefully, this sets the scene for you. Boundary conditions aren't easy, but they are a necessary evil that we have to deal with in any simulation. In this article, I want to look at how boundary conditions are commonly imposed, and we will see that there are really just three fundamental types of boundary conditions, of which the third type is just a combination of the first two. So, if you understand these two fundamental boundary conditions, you know all there is to know about boundary conditions. The art is in finding a combination that works for your case and doesn't violate the laws of physics (good luck to you, my friend!).

Three fundamental boundary conditions to rule them all

In this section, we will review the three fundamental types of boundary conditions that we can use in simulations. The first two types are the ones you will use in every simulation. When you use a CFD solver and select a type for your boundary, for example, a wall or an inlet, your solver will translate that choice into the two fundamental types for all of the quantities that you are solving for, e.g. velocity, pressure, temperature, and any turbulent quantities.

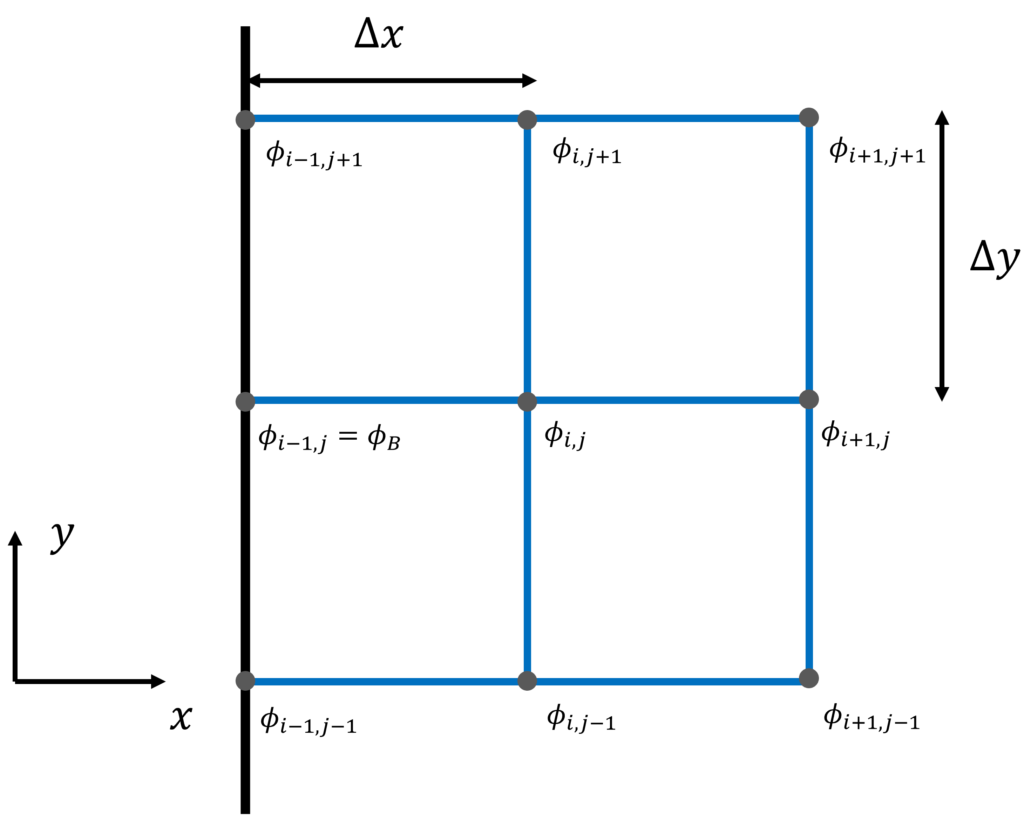

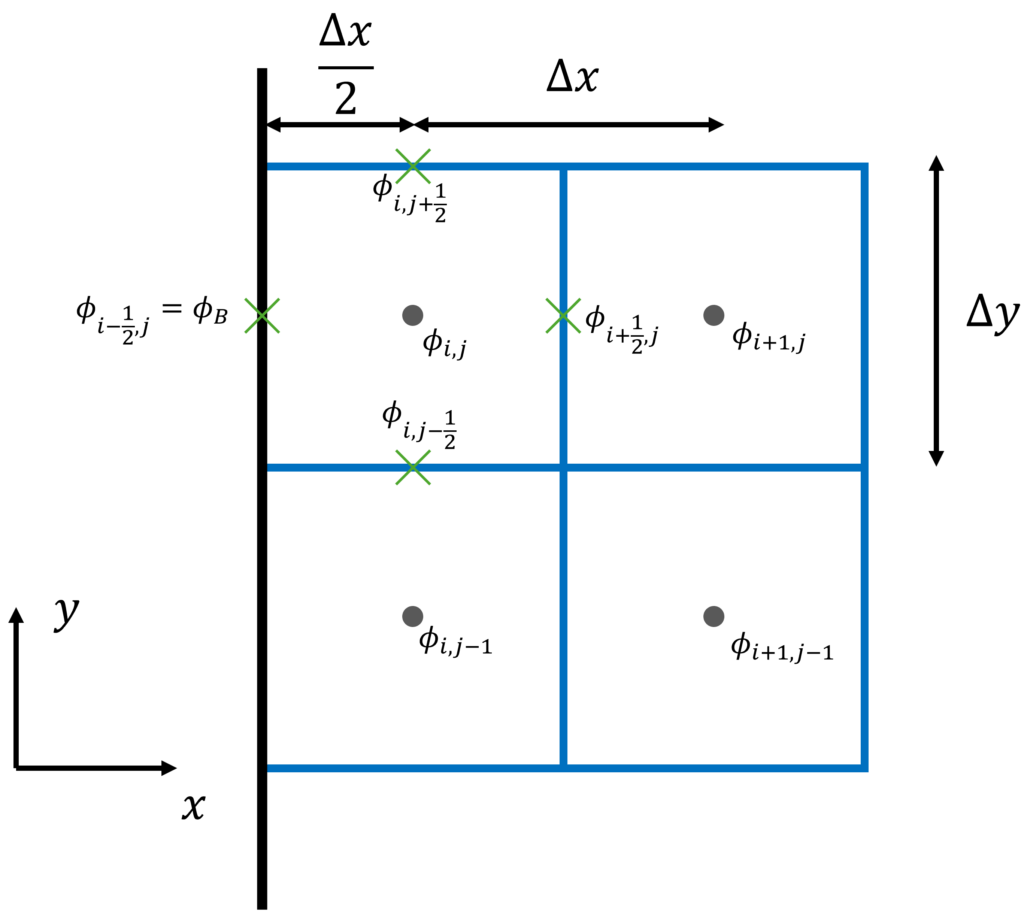

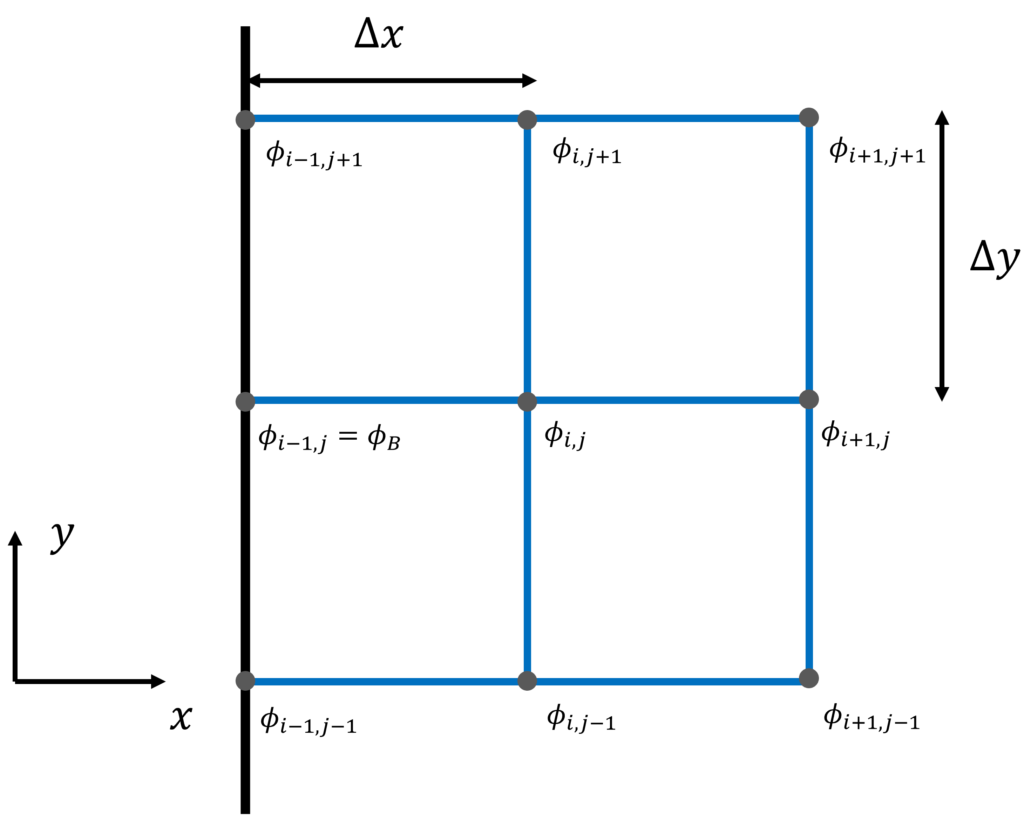

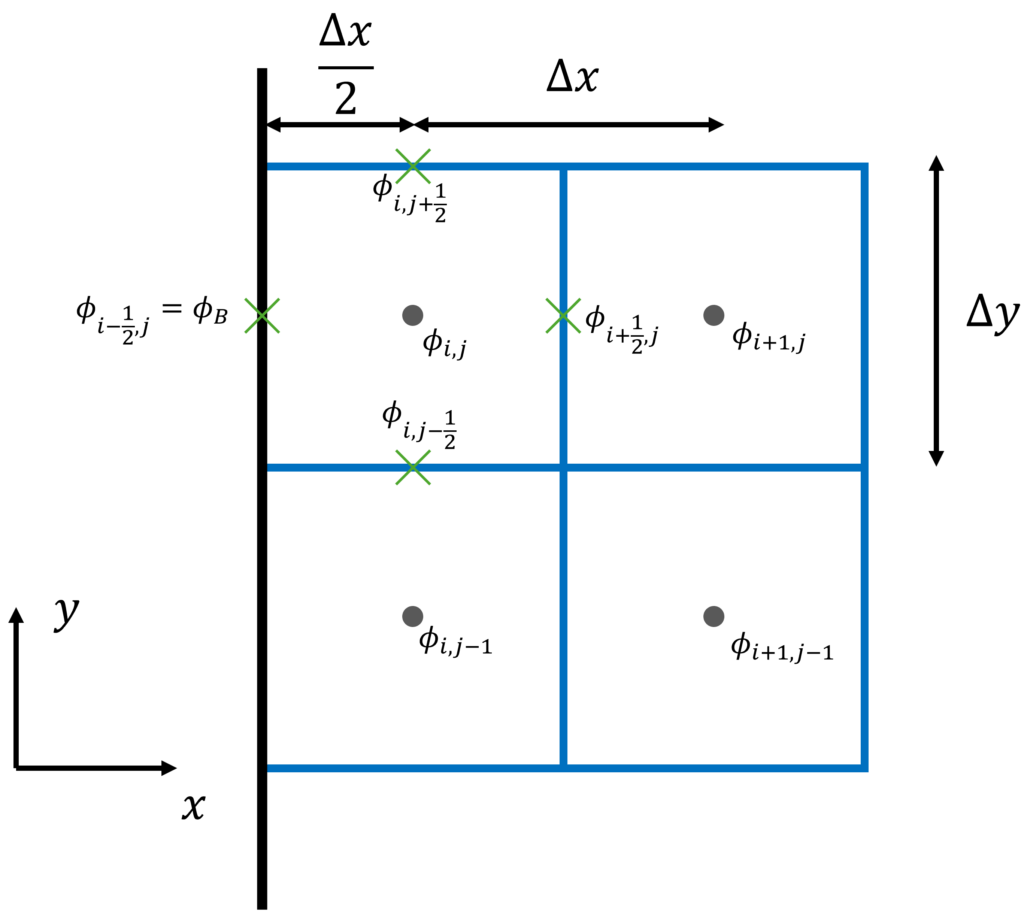

To make our lives easier, let's introduce some notation that we will use to derive the three fundamental types of boundary conditions. The two figures below show the cell arrangement commonly found in the finite difference method (left side), where the variables are stored at the cell's vertices, and the cell arrangement commonly found in the finite volume method (right side), where the variables are stored at the cell's centroid.

Vertex-based boundary conditions (finite difference method)

Centroid-based boundary conditions (finite volume method)

Dirichlet-type boundary conditions

Dirichlet-type boundary conditions are those where we impose a value directly. For example, we can impose a velocity of, say, 10 m/s at an inlet, and the velocity at every point on the inlet will then take this value. This means that we need to know the value we want to impose. Typically, this value is uniformly distributed across the boundary, though we can also impose a non-uniform distribution if we know what this distribution looks like. For example, we may know the velocity profile from experiments.
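To make this concrete, here is a minimal Python sketch of how the Dirichlet values for an inlet might be prepared before they are handed to a solver. It assumes a 2D inlet of height [katex]H[/katex] split into equal boundary faces, and it uses the laminar plane-channel (Poiseuille) profile [katex]u(y)=6\bar{u}\,(y/H)(1-y/H)[/katex] as a stand-in for a measured profile. The function `inlet_profile` and its arguments are hypothetical, not part of any solver's API.

```python
def inlet_profile(n_faces, height, u_mean, kind="uniform"):
    """Return Dirichlet velocity values at the centres of n_faces equal inlet faces.

    kind="uniform"   -> the same value u_mean on every face
    kind="parabolic" -> laminar plane-channel profile with mean velocity u_mean
    """
    dy = height / n_faces
    values = []
    for k in range(n_faces):
        eta = (k + 0.5) * dy / height  # normalised face-centre position, 0 < eta < 1
        if kind == "uniform":
            values.append(u_mean)
        elif kind == "parabolic":
            values.append(6.0 * u_mean * eta * (1.0 - eta))
        else:
            raise ValueError(f"unknown profile kind: {kind}")
    return values


uniform = inlet_profile(10, 0.1, 10.0)
parabolic = inlet_profile(10, 0.1, 10.0, kind="parabolic")
print(sum(parabolic) / len(parabolic))
```

Sampling the parabola at 10 face centres gives a face-averaged velocity of 10.05 m/s rather than exactly 10 m/s, because the midpoint sampling is not exact for a quadratic. If the mass flow rate matters, you may want to rescale the sampled values.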

Let's see how this can be implemented for the two types of grids that we saw above. On the left, we have the typical vertex-based arrangement that we normally use for finite difference methods. If we concentrate on [katex]\phi_{i-1,j}[/katex], which we simply refer to as [katex]\phi_B[/katex], i.e. the value of [katex]\phi[/katex] at the boundary, then we see that since this node is already located on the boundary, we can simply write

\phi_{i-1,j}=\phi_B=\phi_{Dirichlet}

Here, [katex]\phi_{Dirichlet}[/katex] is the value we want to impose on the boundary, e.g. 10 m/s on an inlet, 0 m/s at a non-moving wall, etc. This is rather straightforward. But what about the case on the right side of the figure?
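For the vertex-based arrangement, imposing a Dirichlet value amounts to writing it into the boundary nodes and never updating them. The sketch below assumes a 1D steady diffusion problem, [katex]\mathrm{d}^2\phi/\mathrm{d}x^2=0[/katex], on a uniform grid, solved with Gauss-Seidel iterations; the function name, tolerance, and iteration limit are illustrative choices.

```python
def solve_diffusion_fd_dirichlet(n_nodes, phi_west, phi_east, tol=1e-12, max_iter=100_000):
    """Gauss-Seidel solution of d2phi/dx2 = 0 on a vertex-based uniform grid.

    The first and last nodes lie on the boundary, so the Dirichlet values are
    written into them directly and are never updated.
    """
    phi = [0.0] * n_nodes
    phi[0] = phi_west   # phi_B = phi_Dirichlet on the west boundary node
    phi[-1] = phi_east  # phi_B = phi_Dirichlet on the east boundary node
    for _ in range(max_iter):
        max_change = 0.0
        for i in range(1, n_nodes - 1):  # interior nodes only
            new = 0.5 * (phi[i - 1] + phi[i + 1])  # central second-order stencil
            max_change = max(max_change, abs(new - phi[i]))
            phi[i] = new
        if max_change < tol:
            break
    return phi


phi = solve_diffusion_fd_dirichlet(11, 0.0, 10.0)
print([round(v, 6) for v in phi])
```

Since the exact solution is linear, the interior nodes converge to a straight line between the two Dirichlet values (for the call above, node [katex]i[/katex] ends up with the value [katex]i[/katex]).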

Well, the right side of the figure shows the typical cell arrangement for a finite volume discretisation. We can see that the boundary point is not a cell centroid, i.e. the location where we store our flow variables like velocity, pressure, temperature, etc., but rather a point on the cell face, where [katex]\phi_{i-1/2,j}=\phi_B[/katex]. Again, we just pick one point on the boundary, but the discussion would be the same for any of the other boundary points.

Let's review the finite volume discretisation again. In my article on how to discretise the Navier-Stokes equations, we looked at how to derive first-order and second-order derivatives. For first-order derivatives in space, we obtained this approximation:

a\int_V\frac{\partial \phi}{\partial x}\mathrm{d}V=a\int_A n\cdot\phi\,\mathrm{d}A\approx a\sum_{i=1}^{n_{faces}}n_f\cdot \phi_i A_i

Here, [katex]a[/katex] is some coefficient, for example, the linearised velocity in the non-linear convective term. We go from a volume integral to a surface integral using the Gauss or divergence theorem, which requires us to integrate over all cell faces. In the finite volume method, we approximate that integration by a summation, for which we now need the face normal vector [katex]n_f[/katex] as well as the face area [katex]A_i[/katex].

What about [katex]\phi_i[/katex]? Well, these are the values of [katex]\phi[/katex] at the cell's faces. This is indicated in the figure above on the right by the green crosses on the faces. So, using the figure's notation, we could write this summation explicitly as:

a\sum_{i=1}^{n_{faces}}n_f\cdot \phi_i A_i=a\left[(\phi_{i+1/2,j}\Delta y) + (\phi_{i,j+1/2}\Delta x) - (\phi_{i,j-1/2}\Delta x) - (\phi_{i-1/2,j}\Delta y)\right]

The plus and minus signs appear as a result of the orientation of the normal vector. The convention is that it points outwards, so on the right and top faces, the normal vector points along the x and y direction, respectively, and we multiply the flux [katex]\phi_i A_i[/katex] by [katex]+1[/katex]. For the left and bottom faces, on the other hand, the normal vector points against the x and y direction, so we multiply the fluxes by [katex]-1[/katex], hence the negative sign.

If we look at the discretised form above, then we see that our Dirichlet value has sneaked into the discretised equation, i.e. the last term [katex]\phi_{i-1/2,j}[/katex] is located on the boundary, and thus we can also write this as [katex]\phi_{i-1/2,j}=\phi_B=\phi_{Dirichlet}[/katex]. Then, our discretised form becomes:

a\sum_{i=1}^{n_{faces}}n_f\cdot \phi_i A_i=a\left[(\phi_{i+1/2,j}\Delta y) + (\phi_{i,j+1/2}\Delta x) - (\phi_{i,j-1/2}\Delta x) - (\phi_{Dirichlet}\Delta y)\right]

We can now evaluate this expression by finding the remaining face values [katex]\phi_{i+1/2,j}[/katex], [katex]\phi_{i,j+1/2}[/katex], and [katex]\phi_{i,j-1/2}[/katex] through a suitable numerical scheme. We have looked at suitable numerical schemes for the finite volume method in the previous article.
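The summation with the Dirichlet value substituted on the west face can be written as a small function. This is a sketch that assumes a uniform 2D Cartesian cell with face areas [katex]\Delta y[/katex] (east/west) and [katex]\Delta x[/katex] (north/south), and face values that have already been interpolated; `convective_flux_sum` is a hypothetical name.

```python
def convective_flux_sum(a, phi_e, phi_n, phi_s, phi_dirichlet, dx, dy):
    """Approximate a * sum_f(n_f * phi_f * A_f) for a 2D Cartesian cell whose
    west face lies on a Dirichlet boundary.

    phi_e, phi_n, phi_s: interpolated face values on the east, north, south faces
    phi_dirichlet:       imposed boundary value on the west face
    """
    return a * (
        phi_e * dy            # east face:  outward normal +x, area dy
        + phi_n * dx          # north face: outward normal +y, area dx
        - phi_s * dx          # south face: outward normal -y, area dx
        - phi_dirichlet * dy  # west face:  outward normal -x, boundary value
    )


# Uniform field: the net flux should vanish
print(convective_flux_sum(1.5, 3.0, 3.0, 3.0, 3.0, dx=0.1, dy=0.2))
# Linear field phi = x on a cell spanning 0 <= x <= 0.1
print(convective_flux_sum(2.0, 0.1, 0.05, 0.05, 0.0, dx=0.1, dy=0.2))
```

As a quick sanity check, a uniform field produces zero net flux, while for [katex]\phi=x[/katex] the sum returns [katex]a\,\Delta x\,\Delta y[/katex], i.e. [katex]a\,(\partial\phi/\partial x)\,V[/katex].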

But we also have to deal with second-order derivatives, which are slightly different. Again, I went through the step-by-step derivation in the article on how to discretise the Navier-Stokes equations, so we will just look at the final form here. We saw that we can write the finite volume approximation for the second-order derivative as:

b\int_V \frac{\partial^2 \phi}{\partial x^2}\mathrm{d}V=b\int_V \frac{\partial}{\partial x}\frac{\partial \phi}{\partial x}\mathrm{d}V\approx b\sum_{i=1}^{n_{faces}}n_f\cdot \frac{\partial \phi_i}{\partial x} A_i

Here, [katex]b[/katex] is some coefficient, but since we only have the diffusion term in the Navier-Stokes equation that uses a second-order derivative, this means that [katex]b[/katex] will always be the dynamic or kinematic viscosity (depending on whether it is divided by the density or not).

So now we have the derivative to deal with in our summation. Let's look at the face on the right, i.e. at location [katex]i+1/2,j[/katex]. Looking at the above figure, we can see that a simple approximation for the derivative at this face could be written as

\frac{\partial\phi}{\partial x}\biggr\rvert_{i+1/2,j}\approx\frac{\phi_{i+1,j}-\phi_{i,j}}{\Delta x}

But what about the left face? Here we do not have a value beyond the location [katex]i-1/2,j[/katex], so we cannot evaluate the gradient in the same way. Instead, we form the gradient between locations [katex]i-1/2,j[/katex] and [katex]i,j[/katex], which are only [katex]\Delta x/2[/katex] apart, resulting in

\frac{\partial\phi}{\partial x}\biggr\rvert_{i-1/2,j}\approx\frac{\phi_{i,j}-\phi_{i-1/2,j}}{\Delta x/2}=2\frac{\phi_{i,j}-\phi_{Dirichlet}}{\Delta x}

Now we see the appearance of [katex]\phi_{i-1/2,j}=\phi_B=\phi_{Dirichlet}[/katex] again, and so we can impose our Dirichlet-type boundary condition for second-order derivatives as well. We would find suitable approximations for the gradients at the remaining faces, i.e. at [katex]i,j+1/2[/katex] and [katex]i,j-1/2[/katex], and then we can continue with our finite volume approximation.
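The only change a Dirichlet boundary introduces into the second-order term is the halved distance between the cell centroid and the boundary face. The sketch below assumes a uniform grid and only evaluates the east and west (x-direction) faces; both function names are hypothetical. For a linear field, both face gradients should recover the exact slope, and the net diffusive flux should vanish.

```python
def face_gradients_west_dirichlet(phi_P, phi_E, phi_dirichlet, dx):
    """dphi/dx on the east (interior) and west (Dirichlet) faces of a cell."""
    grad_east = (phi_E - phi_P) / dx                # centroid to centroid: dx
    grad_west = 2.0 * (phi_P - phi_dirichlet) / dx  # centroid to boundary face: dx/2
    return grad_east, grad_west


def x_diffusion_sum(b, grad_east, grad_west, dy):
    """b * sum_f(n_f * dphi/dx|_f * A_f) over the east and west faces only."""
    return b * (grad_east * dy - grad_west * dy)


def linear_field(x):
    return 3.0 * x + 1.0


dx, dy = 0.1, 0.2
# First cell next to the west boundary: centroid at dx/2, neighbour at 3dx/2
g_e, g_w = face_gradients_west_dirichlet(
    linear_field(0.5 * dx), linear_field(1.5 * dx), linear_field(0.0), dx
)
print(g_e, g_w)                               # both should be close to 3.0
print(x_diffusion_sum(1.0e-3, g_e, g_w, dy))  # close to zero for a linear field
```

If the boundary face were treated as if it were a full [katex]\Delta x[/katex] away, the west gradient would come out at half its true value, which is a common source of errors in hand-written solvers.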

The remaining steps to approximate both the first-order and second-order derivatives, as well as how to bring them into a form that can be easily solved, are discussed in the previously mentioned article on how to discretise the Navier-Stokes equations.

Neumann-type boundary conditions

Neumann-type boundary conditions are really just Dirichlet-type boundary conditions in disguise. Instead of setting the absolute value of a quantity [katex]\phi[/katex] (e.g. velocity, pressure, temperature, etc.) at the boundary, we set the value of its derivative, i.e. [katex]\partial\phi/\partial n[/katex], where [katex]n[/katex] denotes the direction normal to the boundary.

We use this boundary condition when we do not know the values at the boundary and we want them to be calculated as part of the solution. Let's take a simple example, a simple flow through a channel, with an inlet, an outlet, and a wall at the top, bottom, front, and back.

For simplicity, let's say we want to impose a velocity at the inlet that is constant across the inlet boundary. What, then, is the velocity at the outlet? We don't know. We would expect it to follow some form of parabolic or power-law velocity profile depending on whether it is a laminar or turbulent flow, but if our domain is very short, it might not even fully develop. We don't know how the velocity will be distributed at the outlet, so we pick a Neumann-type boundary condition for the velocity at the outlet.

Now, let's look at the pressure. If we assume that the outlet is connected to some opening where we have atmospheric conditions, then it would make sense to prescribe the atmospheric or ambient pressure at the outlet. This can be a constant value for the pressure across the boundary, so we would impose a Dirichlet-type boundary condition for the pressure at the outlet.

What about the inlet? There will be a pressure drop within the channel, but we don't know what its magnitude is. If we want the pressure drop to develop as part of the solution, then we need to allow the pressure to freely develop at the inlet boundary, as the difference of the pressure at the inlet and outlet will determine the pressure gradient (if we divide the difference by the channel length). Thus, we need a Neumann-type boundary condition for the pressure at the inlet.

Finally, what about the walls? We know that we have a no-slip condition, so the fluid velocity at the wall must equal the velocity of the wall itself. Typically, walls are stationary in simulations, and that means our velocity in each direction has to be zero. Since we know the value, we have to impose a Dirichlet-type boundary condition for the velocity at solid walls.

Now, think about the pressure distribution around an airfoil. It changes from the leading edge to the trailing edge. The only thing we can say with confidence (for incompressible flow) is that the pressure coefficient is one at the stagnation point near the leading edge. However, we don't know the distribution between the leading and trailing edges. The same is true for our channel example: we don't know the pressure at the walls, so we need to let it develop as part of our solution. This means that the pressure needs to be treated with a Neumann-type boundary condition at solid walls.
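The boundary set-up for the channel described above can be summarised as a small lookup table. This is only an illustration: the 10 m/s inlet velocity is the example value used earlier, the outlet pressure of 0 Pa is an assumed gauge value for ambient conditions, and the dictionary layout is hypothetical rather than any solver's input format. All Neumann-type entries use a zero gradient.

```python
# Hypothetical boundary-condition table for the channel example.
# Each entry is (type, value); Neumann values are normal gradients.
# Velocity values: inlet = normal component, walls = all components.
CHANNEL_BCS = {
    "inlet":  {"velocity": ("dirichlet", 10.0), "pressure": ("neumann", 0.0)},
    "outlet": {"velocity": ("neumann", 0.0),    "pressure": ("dirichlet", 0.0)},
    "walls":  {"velocity": ("dirichlet", 0.0),  "pressure": ("neumann", 0.0)},
}

for patch, fields in CHANNEL_BCS.items():
    for field, (kind, value) in fields.items():
        print(f"{patch:<7} {field:<9} {kind:<10} {value}")
```

Laying the choices out per patch and per variable makes it easy to spot where each quantity is fixed and where it is left to develop as part of the solution.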

So, let's see how we can impose Neumann-type boundary conditions. In general, we can write them as follows:

\frac{\partial \phi}{\partial n}=\phi_{Neumann}

Here, [katex]n[/katex] is the direction of the normal vector on the boundary. In the figures we looked at above, we have [katex]n=x[/katex], i.e. it is aligned with the x-direction. Using the notation of [katex]n[/katex] allows us to write the boundary condition in a generic notation or even account for curvature on the boundary.

[katex]\phi_{Neumann}[/katex] is the value the derivative should be equal to. A common choice is to set [katex]\phi_{Neumann}=0[/katex]. But there are cases where we want to impose a non-zero value for [katex]\phi_{Neumann}[/katex], for example, when we are dealing with heat transfer applications (where [katex]\phi=T[/katex]) and we want to impose some heating, which would be realised with a non-zero temperature gradient at the wall (i.e. we set a heat flux).
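For the heat transfer case, the link between an imposed heat flux and [katex]\phi_{Neumann}[/katex] comes from Fourier's law. Here, [katex]q_n[/katex] is the heat flux component along [katex]n[/katex] and [katex]k[/katex] is the thermal conductivity; the sign of [katex]q_n[/katex] depends on which way [katex]n[/katex] points, so it needs to be checked against your own convention.

```latex
q_n=-k\frac{\partial T}{\partial n}\quad\Rightarrow\quad\phi_{Neumann}=\frac{\partial T}{\partial n}=-\frac{q_n}{k}
```

As an illustration, assuming water with [katex]k\approx 0.6[/katex] W/(m K) and [katex]q_n=1000[/katex] W/m², we would impose [katex]\phi_{Neumann}\approx-1667[/katex] K/m.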

So, let's start to see how we can impose Neumann-type boundary conditions on the domain sketched out in the figures we looked at previously. For the figure on the left, i.e. using the finite difference method, we have the following discretisation:

\frac{\partial \phi}{\partial n}=\frac{\partial \phi}{\partial x}=\frac{\phi_{i,j}-\phi_{i-1,j}}{\Delta x}=\frac{\phi_{i,j}-\phi_B}{\Delta x}=\phi_{Neumann}

Now, all that we have to do is solve this equation for [katex]\phi_B[/katex], which will be the value at the boundary that we will impose. This will lead to:

\phi_B=\phi_{i,j}-\Delta x\cdot\phi_{Neumann}

The boundary position is important and changes this calculation. For example, if we assume for a second that the boundary were located at [katex]i+1,j[/katex] in the figure, i.e. the solid, vertical black line would be attached to the blue cells on the right, then our boundary condition would be evaluated as:

\frac{\partial \phi}{\partial n}=\frac{\partial \phi}{\partial x}=\frac{\phi_{i+1,j}-\phi_{i,j}}{\Delta x}=\frac{\phi_{B}-\phi_{i,j}}{\Delta x}=\phi_{Neumann}

In this case, when we solve for [katex]\phi_B[/katex], we obtain:

\phi_B=\phi_{i,j}+\Delta x\cdot\phi_{Neumann}

Now, if we have the special case of [katex]\phi_{Neumann}=0[/katex] (in the channel flow example we discussed above, all Neumann-type boundaries had a zero gradient condition), both expressions simplify to

\phi_B=\phi_{i,j}

That is, we simply copy the value of [katex]\phi[/katex] that is next to our boundary node in the [katex]n[/katex] direction. Now you can see why I said that Neumann-type boundary conditions are Dirichlet-type boundary conditions in disguise. We still impose a value for them, but we do so with values from the internal domain. This is also why the boundary values can change as the solution develops (like the pressure distribution around an airfoil): they are based on values obtained from the internal fluid domain.
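The two vertex-based cases can be wrapped into a single helper. This is a sketch assuming, as in the derivation above, that [katex]n[/katex] is aligned with the positive x-direction, so the sign of the correction depends on whether the boundary sits on the west or east side; `neumann_boundary_value_fd` is a hypothetical name.

```python
def neumann_boundary_value_fd(phi_neighbour, dx, grad, side):
    """Boundary node value phi_B for a Neumann condition on a vertex-based grid.

    phi_neighbour: value at the interior node next to the boundary (phi_{i,j})
    grad:          imposed dphi/dn, with n aligned with +x
    side:          "west" (boundary at i-1) or "east" (boundary at i+1)
    """
    if side == "west":  # (phi_{i,j} - phi_B) / dx = grad
        return phi_neighbour - dx * grad
    if side == "east":  # (phi_B - phi_{i,j}) / dx = grad
        return phi_neighbour + dx * grad
    raise ValueError("side must be 'west' or 'east'")


print(neumann_boundary_value_fd(2.0, 0.1, 5.0, "west"))  # 2.0 - 0.1 * 5.0
print(neumann_boundary_value_fd(2.0, 0.1, 0.0, "east"))  # zero gradient: copy 2.0
```

With a zero gradient, both branches reduce to copying the neighbouring value, which is exactly the [katex]\phi_B=\phi_{i,j}[/katex] result above.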

And what about the finite volume discretisation? Switching our attention to the figure on the right, which we saw in the beginning of this section, we have a very similar process. We can write the Neumann-type boundary condition as

\frac{\partial \phi}{\partial n}=\frac{\partial \phi}{\partial x}=\frac{\phi_{i,j}-\phi_{i-1/2,j}}{\Delta x/2}=2\frac{\phi_{i,j}-\phi_B}{\Delta x}=\phi_{Neumann}

We can solve this equation now for [katex]\phi_B[/katex], which results in

\phi_B=\phi_{i,j}-\frac{\Delta x}{2}\phi_{Neumann}

If the boundary were instead located on the east face of cell [katex]i+1,j[/katex], i.e. on the east side of the simplified domain given in the figure above, then we would obtain

\frac{\partial \phi}{\partial n}=\frac{\partial \phi}{\partial x}=\frac{\phi_{i+3/2,j}-\phi_{i+1,j}}{\Delta x/2}=2\frac{\phi_{B}-\phi_{i+1,j}}{\Delta x}=\phi_{Neumann}

Here, the location [katex]i+3/2,j[/katex] is the face between [katex]i+1,j[/katex] and [katex]i+2,j[/katex], i.e. [katex]i+3/2,j=i+1.5,j[/katex]. This can then be solved for [katex]\phi_B[/katex] again to result in

\phi_B=\phi_{i+1,j}+\frac{\Delta x}{2}\phi_{Neumann}

If we have [katex]\phi_{Neumann}=0[/katex], then we can simplify our expression again, and we have

\phi_B=\phi_{i,j}

for the arrangement given in the figure above on the right (i.e. the boundary is located on the west face of the domain), or [katex]\phi_B=\phi_{i+1,j}[/katex] if the boundary is on the east face. Since we know the value on the boundary now, we can continue exactly in the same way as we did for the Dirichlet-type boundary condition. And this is what I would advocate you do if you want to write your own solver.
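The finite volume version differs from the finite difference one only in the distance between the cell centroid and the boundary face, [katex]\Delta x/2[/katex] instead of [katex]\Delta x[/katex]. Here is a sketch under the same assumption ([katex]n[/katex] aligned with +x, uniform grid), with a hypothetical function name:

```python
def neumann_face_value_fv(phi_P, dx, grad, side):
    """Boundary face value phi_B for a Neumann condition on a cell-centred grid.

    phi_P: value at the centroid of the cell adjacent to the boundary
    grad:  imposed dphi/dn, with n aligned with +x
    side:  "west" or "east" face of the domain
    """
    half_dx = 0.5 * dx  # centroid-to-face distance
    if side == "west":  # 2 (phi_P - phi_B) / dx = grad
        return phi_P - half_dx * grad
    if side == "east":  # 2 (phi_B - phi_P) / dx = grad
        return phi_P + half_dx * grad
    raise ValueError("side must be 'west' or 'east'")


print(neumann_face_value_fv(2.0, 0.1, 5.0, "west"))
print(neumann_face_value_fv(2.0, 0.1, 0.0, "east"))  # zero gradient: copy 2.0
```

Once [katex]\phi_B[/katex] is known, it can be passed to exactly the same code that handles Dirichlet faces.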

Some CFD textbooks will introduce a simplification for second-order derivatives. We saw in the discussion on the Dirichlet-type boundary conditions that we had the following expression for second-order derivatives:

b\int_V \frac{\partial^2 \phi}{\partial x^2}\mathrm{d}V=b\int_V \frac{\partial}{\partial x}\frac{\partial \phi}{\partial x}\mathrm{d}V\approx b\sum_{i=1}^{n_{faces}}n_f\cdot \frac{\partial \phi_i}{\partial x} A_i

Since we now have to deal with derivatives directly, as seen in the last term, when we are summing over all faces, we could simply set the gradient at the boundary to the value of [katex]\phi_{Neumann}[/katex].

I don't like this approach because it requires a separate code path for Neumann-type boundaries. Based on the discussion we had up to this point, we saw that once we have found a way to implement Dirichlet-type boundary conditions, we can reuse (or even better, modify) this implementation to also allow for Neumann-type boundary conditions. This results in cleaner code, which is easier to maintain and modify.
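To illustrate the approach I advocate, here is a minimal 1D sketch of [katex]\mathrm{d}^2\phi/\mathrm{d}x^2=0[/katex] on a uniform cell-centred grid. Each boundary condition object only has to return a face value [katex]\phi_B[/katex]; the solver then uses the same half-cell gradient for both Dirichlet and Neumann boundaries. The class names, the Gauss-Seidel iteration, and lagging the Neumann face value by one sweep are illustrative assumptions, not taken from a specific solver.

```python
class Dirichlet:
    """Boundary face with a fixed value."""

    def __init__(self, value):
        self.value = value

    def face_value(self, phi_P, dx, side):
        return self.value


class Neumann:
    """Boundary face with a fixed gradient dphi/dn (n aligned with +x).

    The gradient is turned into a face value from the adjacent cell, after
    which the solver treats it exactly like a Dirichlet value.
    """

    def __init__(self, grad):
        self.grad = grad

    def face_value(self, phi_P, dx, side):
        sign = -1.0 if side == "west" else 1.0
        return phi_P + sign * 0.5 * dx * self.grad


def solve_diffusion_fv(n_cells, length, west_bc, east_bc, tol=1e-12, max_iter=100_000):
    """Gauss-Seidel solution of d2phi/dx2 = 0 on a uniform cell-centred grid."""
    dx = length / n_cells
    phi = [0.0] * n_cells
    for _ in range(max_iter):
        max_change = 0.0
        for i in range(n_cells):
            # Each face is (coefficient, value); its flux is coeff * (value - phi_P).
            if i == 0:  # boundary face: half-cell distance, same code for any BC type
                west = (2.0 / dx, west_bc.face_value(phi[i], dx, "west"))
            else:
                west = (1.0 / dx, phi[i - 1])
            if i == n_cells - 1:
                east = (2.0 / dx, east_bc.face_value(phi[i], dx, "east"))
            else:
                east = (1.0 / dx, phi[i + 1])
            new = (west[0] * west[1] + east[0] * east[1]) / (west[0] + east[0])
            max_change = max(max_change, abs(new - phi[i]))
            phi[i] = new
        if max_change < tol:
            break
    return phi


phi = solve_diffusion_fv(10, 1.0, Dirichlet(1.0), Neumann(2.0))
print([round(v, 6) for v in phi])
```

With a value of 1 on the west side and a gradient of 2 on the east side, the exact solution is [katex]\phi=1+2x[/katex], which the cell values reproduce (1.1 in the first cell, 2.9 in the last). With Neumann conditions on both ends, this steady problem would only define [katex]\phi[/katex] up to a constant, which is why one Dirichlet boundary is kept.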

Robin-type boundary conditions

Robin-type boundary conditions are just a linear combination of Dirichlet and Neumann-type boundary conditions. We can write them in simple terms as

a\cdot\phi_B+b\cdot\frac{\partial \phi}{\partial n}=\phi_{Robin}

Here, [katex]a[/katex] and [katex]b[/katex] are linear weights that we use to determine how much each boundary condition contributes, and [katex]\phi_{Robin}[/katex] is the value this combination should be equal to. Setting [katex]b=0[/katex] recovers a Dirichlet-type and setting [katex]a=0[/katex] a Neumann-type boundary condition. I will show you in a second how you can determine these weights. A good question at this point would be: why do we need this type of combination? First of all, it is rare (I have survived my entire CFD career up to this point without ever having to use this condition), but specific applications can benefit from it. In short, it is used to model imperfections.
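A Robin-type boundary can be treated exactly like the Neumann-type one: discretise the derivative, then solve for [katex]\phi_B[/katex]. The sketch below assumes the common form [katex]a\,\phi_B+b\,\partial\phi/\partial n=\phi_{Robin}[/katex] on an east boundary face of a cell-centred grid with [katex]n[/katex] along +x, so that [katex]\partial\phi/\partial n\approx2(\phi_B-\phi_P)/\Delta x[/katex]. This gives [katex]\phi_B=(\phi_{Robin}+2b\phi_P/\Delta x)/(a+2b/\Delta x)[/katex]. The function name is hypothetical.

```python
def robin_face_value_east(phi_P, dx, a, b, phi_robin):
    """Solve a*phi_B + b*dphi/dn = phi_robin for the east boundary face value phi_B.

    Assumes a cell-centred grid with n along +x (outward on the east side),
    so dphi/dn is approximated by 2*(phi_B - phi_P)/dx.
    """
    denom = a + 2.0 * b / dx
    if denom == 0.0:
        raise ValueError("a + 2b/dx must be non-zero")
    return (phi_robin + 2.0 * b * phi_P / dx) / denom


# b = 0: pure Dirichlet, phi_B = phi_robin / a
print(robin_face_value_east(phi_P=5.0, dx=0.1, a=2.0, b=0.0, phi_robin=6.0))
# a = 0: pure Neumann with dphi/dn = phi_robin / b = 5, so phi_B = phi_P + dx/2 * 5
print(robin_face_value_east(phi_P=2.0, dx=0.1, a=0.0, b=1.0, phi_robin=5.0))
```

Checking the two limits ([katex]b=0[/katex] and [katex]a=0[/katex]) is a handy way to confirm that the discretisation is consistent with the Dirichlet and Neumann implementations.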

For example, we said that we have a no-slip condition at the wall for the velocity. This is true on a macroscopic scale. At a molecular scale, particles will experience an electrostatic force that gets stronger the closer the particles get to the wall. Since the wall is a solid material, its atoms are arranged in a certain lattice structure that doesn't move. Any fluid particle (or atom, I use these nouns interchangeably) that gets close is attracted to the wall, and its movement will slow down due to this attractive force. But the particles will never fully stop moving.

But, if we look at a macroscopic average velocity of these particles at the wall, it would appear as if there is no movement. So, on a macroscopic level, a Dirichlet-type boundary condition for the velocity with no relative motion between the fluid and the wall is a very good approximation of the underlying physics.

But what happens if we work on smaller length scales? For example, have a look at the following application, which is known as a lab-on-a-chip device:

We have lots of so-called microchannels, seen in red and blue colour, and you see in comparison to the thumb and index finger that these channels are rather small. As our characteristic length scale (say, the height of the channel) gets smaller and smaller, it gets closer to the average distance an atom can travel before colliding with another atom. This distance is known as the mean free path (for air at ambient pressure, it is about [katex]7\cdot 10^{-8}[/katex] meters).

As these two numbers converge, our continuum hypothesis that we use for the Navier-Stokes equations no longer holds true, i.e. the continuum hypothesis assumes that we are dealing with a large number of atoms so that any motion we observe results from the average motion of these underlying particles. We use the Knudsen number to help us identify this effect, which is expressed as

Kn=\frac{\text{mean free path}}{\text{characteristic length}}=\frac{\lambda}{L}

Depending on which sources you consult, we classify our flow into different regimes. Typical values and their corresponding flow regimes are:

- [katex]Kn<0.01[/katex]: Continuum flow (Navier-Stokes equations are valid)

- [katex]0.01\le Kn\le 0.1[/katex]: Slip flow (Navier-Stokes equations are valid with modified boundary conditions, for example, Robin type)

- [katex]0.1\le Kn\le 10[/katex]: Transitional flow (Navier-Stokes no longer works here; a particle description is needed)

- [katex]Kn > 10[/katex]: Free molecular flow
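To make the classification concrete, here is a minimal Python sketch that computes the Knudsen number and maps it onto the regimes listed above. The function names are my own (hypothetical), the thresholds are the indicative values from the list (other sources draw the lines slightly differently), and the mean free path of air is the approximate value quoted earlier.

```python
def knudsen_number(mean_free_path: float, characteristic_length: float) -> float:
    """Kn = lambda / L; both lengths must be given in the same unit (e.g. metres)."""
    if characteristic_length <= 0.0:
        raise ValueError("characteristic length must be positive")
    return mean_free_path / characteristic_length


def flow_regime(kn: float) -> str:
    """Map a Knudsen number onto the (indicative) regimes listed above."""
    if kn < 0.01:
        return "continuum"
    if kn <= 0.1:
        return "slip"
    if kn <= 10.0:
        return "transitional"
    return "free molecular"


if __name__ == "__main__":
    mean_free_path_air = 7e-8  # approximate value for air at ambient pressure [m]
    for height in (1e-3, 1e-6, 1e-7):  # channel heights [m]
        kn = knudsen_number(mean_free_path_air, height)
        print(f"L = {height:.0e} m -> Kn = {kn:.2g} ({flow_regime(kn)})")
```

A 1 mm channel is firmly in the continuum regime, whereas a 1 µm channel gives Kn ≈ 0.07, i.e. slip flow, which is exactly where a Robin-type wall condition becomes interesting.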

As our characteristic length scale approaches this mean free path (i.e. as our Knudsen number grows beyond [katex]Kn=0.01[/katex]), we start to see a shift away from a purely Dirichlet-type boundary condition towards atoms moving freely at the boundary, which can result in noticeable slip at the boundary.

This slipping of atoms can be implemented with a Robin-type boundary condition, where we impose a nominal no-slip boundary condition with our Dirichlet-type boundary condition, and then we allow some slippage through the Neumann-type boundary condition.

Another example would be insulation against heat transfer at walls. Let's say our goal is to keep the wall at a constant temperature, without the fluid heating the wall further through a heat flux across the boundary. We might not be able to fully insulate our wall, so a small heat flux ([katex]\partial T/\partial n\ne 0[/katex]) may be measured in experiments. If we wanted to account for that in our boundary conditions, we could again impose a Robin-type boundary condition to model this with more confidence.

So let's look at what the discretised equations look like. For the finite difference approximation on the left in the figure above, we have

\phi_B=a\cdot\phi_{Dirichlet}+b\cdot\frac{\partial \phi}{\partial n}=a\cdot\phi_{Dirichlet}+b\cdot\frac{\phi_{i,j}-\phi_B}{\Delta x}

Now we have to solve this equation for [katex]\phi_B[/katex], where we first collect all terms containing [katex]\phi_B[/katex] on the left-hand side:

\phi_B+b\cdot\frac{\phi_B}{\Delta x}=a\cdot\phi_{Dirichlet}+b\cdot\frac{\phi_{i,j}}{\Delta x}

Now, we simplify the left-hand side to

\phi_B\left(1+\frac{b}{\Delta x}\right)=a\cdot\phi_{Dirichlet}+b\cdot\frac{\phi_{i,j}}{\Delta x}

Dividing by the term in parentheses on the left, we obtain our combined Robin-type boundary condition as

\phi_B=\frac{a\cdot\phi_{Dirichlet}+b\cdot\frac{\phi_{i,j}}{\Delta x}}{\left(1+\frac{b}{\Delta x}\right)}

For the finite volume discretisation seen in the figure above on the right, we have a very similar procedure. We start with

\phi_B=a\cdot\phi_{Dirichlet}+b\cdot\frac{\partial \phi}{\partial n}=a\cdot\phi_{Dirichlet}+b\cdot\frac{\phi_{i,j}-\phi_{i-1/2,j}}{\Delta x/2}=a\cdot\phi_{Dirichlet}+2b\cdot\frac{\phi_{i,j}-\phi_B}{\Delta x}

We can now derive the Robin-type boundary condition for the finite volume discretisation in exactly the same manner, or we can be lazy and realise that this is the same equation as for the finite difference method, with the only difference that [katex]b[/katex] is replaced by [katex]2b[/katex]. Thus, regardless of which way we take, we end up with

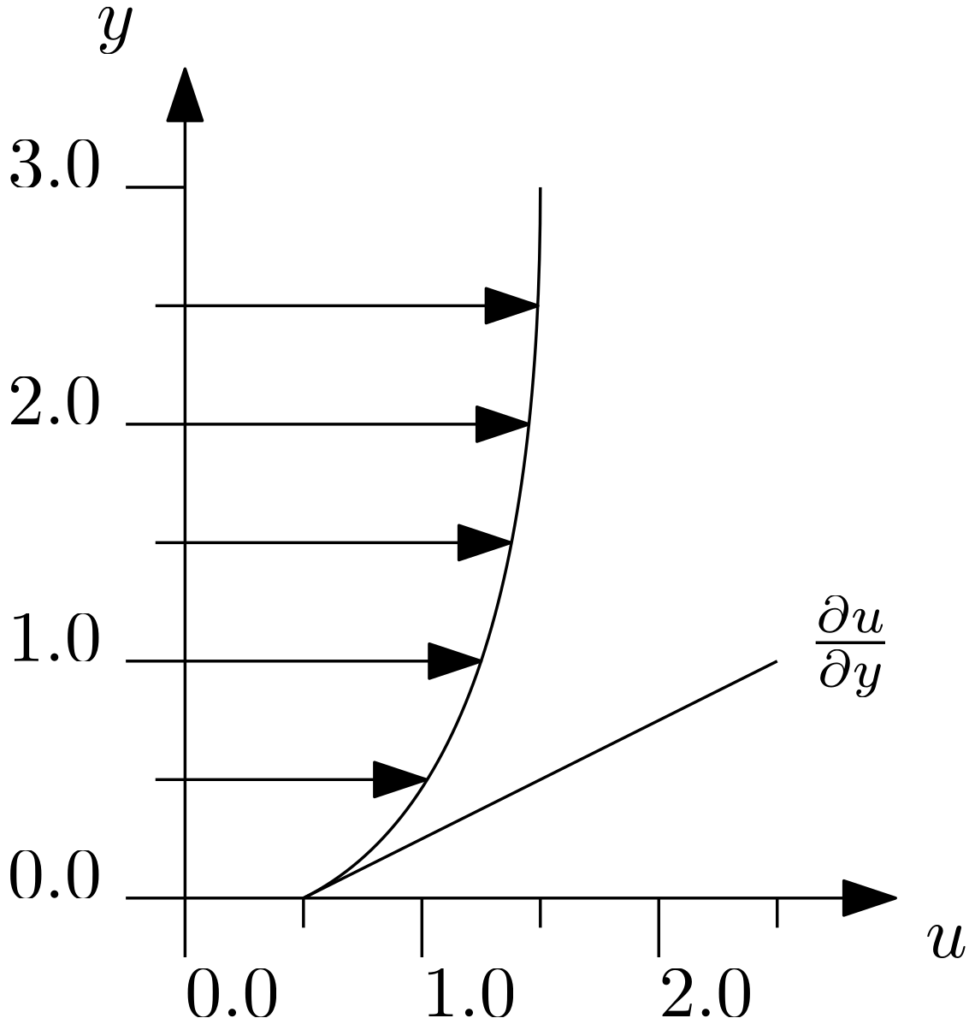

\phi_B=\frac{a\cdot\phi_{Dirichlet}+2b\cdot\frac{\phi_{i,j}}{\Delta x}}{\left(1+\frac{2b}{\Delta x}\right)}

Finally, let us discuss how we can obtain the values for [katex]a[/katex] and [katex]b[/katex]. To do this, we need to know beforehand what the behaviour at the boundary should look like. For example, we may have measured a small slippage at a wall boundary condition, either through an experiment or a numerical simulation using a particle method. Let's say that we obtained the following velocity profile:

We can see that we do not have a fully no-slip condition at the boundary. In this particular example, the velocity at the wall is [katex]0.5[/katex]. I have also given the slope at the wall, which we can use to calculate the gradient. Using the numbers provided, we see that as we go one unit up on the y axis, i.e. [katex]\Delta y=1[/katex], we have to go two units to the right on the x (u) axis, i.e. [katex]\Delta u = 2.5 - 0.5 = 2.0[/katex]. This means that the gradient, or slope, of the velocity at the wall is [katex]\partial u/\partial y\approx \Delta u/\Delta y=2/1=2[/katex].

We can also say that we want [katex]\phi_{Robin}=\phi_B[/katex] to evaluate to [katex]0.5[/katex], i.e. this is the value that we want to impose on our boundary. The Dirichlet-type boundary condition for the velocity is still going to assume that we have a no-slip value at the wall, which would leave us with [katex]\phi_{Dirichlet}=0[/katex]. With that knowledge, we can write our Robin-type boundary condition as:

\phi_{Robin}=a\cdot\phi_{Dirichlet}+b\cdot\frac{\partial \phi}{\partial n}\\[0.5em]
0.5=a\cdot 0+b\cdot 2\\[0.5em]
0.5=b\cdot 2\\[0.5em]
b=\frac{1}{4}

We want to ensure that [katex]a+b=1[/katex], i.e. [katex]a[/katex] and [katex]b[/katex] have to be chosen so that we have a linear combination of Dirichlet-type and Neumann-type boundary conditions. If [katex]a+b=1[/katex] and [katex]b=0.25[/katex], then we have [katex]a=0.75[/katex]. In this case, the value of [katex]a[/katex] doesn't matter, as we impose a zero velocity at the wall, but if we were to use the example of a heated wall with a heat flux through it, then we would impose the wall temperature here, which would not be zero.
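The Robin-type steps above can be sketched in a few lines of Python. This is a minimal sketch under my own assumptions: the function names are hypothetical, [katex]a+b=1[/katex] is enforced as described in the text, and for a non-zero Dirichlet value I solve [katex]\phi_{Robin}=(1-b)\phi_{Dirichlet}+b\,\partial\phi/\partial n[/katex] for [katex]b[/katex], which reduces to the slip-wall example when [katex]\phi_{Dirichlet}=0[/katex]. The boundary value comes from solving [katex]\phi_B=a\phi_{Dirichlet}+b(\phi_{i,j}-\phi_B)/h[/katex] for [katex]\phi_B[/katex], with [katex]h=\Delta x[/katex] for the finite difference arrangement and [katex]h=\Delta x/2[/katex] for the finite volume arrangement.

```python
def robin_weights(phi_target: float, phi_dirichlet: float, wall_gradient: float):
    """Return (a, b) such that phi_target = a*phi_dirichlet + b*wall_gradient
    and a + b = 1."""
    denominator = wall_gradient - phi_dirichlet
    if denominator == 0.0:
        raise ValueError("weights are not uniquely defined for this input")
    b = (phi_target - phi_dirichlet) / denominator
    return 1.0 - b, b


def robin_boundary_value(a: float, b: float, phi_dirichlet: float,
                         phi_interior: float, dx: float,
                         finite_volume: bool = False) -> float:
    """Solve phi_B = a*phi_D + b*(phi_interior - phi_B)/h for phi_B.

    h is the distance between the boundary and the nearest interior value:
    dx on a vertex-based (finite difference) grid, dx/2 on a cell-centred
    (finite volume) grid.
    """
    h = 0.5 * dx if finite_volume else dx
    return (a * phi_dirichlet + b * phi_interior / h) / (1.0 + b / h)


if __name__ == "__main__":
    # Slip-wall example from the text: u_wall = 0.5, du/dy = 2, phi_D = 0
    a, b = robin_weights(phi_target=0.5, phi_dirichlet=0.0, wall_gradient=2.0)
    print(f"a = {a}, b = {b}")  # a = 0.75, b = 0.25
    u_b = robin_boundary_value(a, b, 0.0, phi_interior=0.7, dx=0.1)
    print(f"boundary velocity (finite difference): {u_b:.4f}")
```

Writing the finite volume case through the half spacing [katex]h=\Delta x/2[/katex] is the code equivalent of replacing [katex]b[/katex] by [katex]2b[/katex], so a single function covers both arrangements.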

And there you have it. Three fundamental boundary condition types will be used to construct various types of derived boundary conditions, such as velocity inlets, mass flow outlets, solid walls, symmetry planes, and so on. We will derive this at the end of this article, but before we do that, let's discuss the two different ways we can implement boundary conditions in code.

The two schools of thought on implementing boundary conditions

As alluded to above, we have two different ways of implementing boundary conditions in our code: we can either leave the mesh untouched, or we can make changes to our mesh and thus change the way the boundaries are treated. In this section, I want to review these two methods and show which advantages and disadvantages they bring.

Implementing boundary conditions without mesh modifications

In the first method, we do not touch the mesh, and we leave everything exactly as it is. This is the methodology we used in the previous section. Take another look at the vertex-based and centroid-based meshes from above:

The advantage is obvious (well, obvious once you have seen the alternative way of treating boundaries): we do not have to make any mesh modifications. We either create our mesh within our solver or read in the mesh from an external mesh generator, and we can use it as it is. This may not seem like much, but you will see why this is an advantage in the next section.

The disadvantage is that as we approach the boundary, the cell directly attached to the boundary will not have any neighbour cell beyond that boundary. But, as we saw in my previous article, a higher-order scheme needs more and more points to the left and to the right of the cell for which we are currently finding an approximation.

At boundaries, we can't evaluate these schemes, so we have to switch to a lower-order version just to be able to get approximations near the boundaries, either for the gradients (finite difference method) or the face-interpolated values (finite volume method). If you went through my eBook on how to implement your own CFD solver, we saw that we had to switch the numerical scheme at the boundaries from a second-order MUSCL scheme to a first-order piecewise constant scheme.

So, with this approach, we get to use the mesh as is, without modifications. Boundary conditions can be implemented as we have discussed in the previous section, but the numerical schemes used at boundaries may reduce in order. If we want to overcome this issue, we need to look at the second approach to implementing boundary conditions: ghost cells. Let's do that next.

Implementing boundary conditions using the ghost cell approach

So, you have decided that you do not want to reduce the order of approximation near the boundary. This can be achieved, but at a cost: we have to add cells that extend beyond the boundary. This is shown below for a vertex-based (finite difference) approach:

We can also add additional cells for a centroid-based (finite volume) approach, which would result in:

In both cases, we have added two additional cells to the left of the boundary, indicated again by the vertical black line. These additional cells go under various names, but a common name is ghost cells. If you look into the CGNS format, it does support ghost cells, but it goes under the name of rind layers, just to confuse that little bit extra (no one else uses that name as far as I can see).

Let's ignore for the moment how we can impose boundary conditions, now that the boundary is seemingly on the inside of the domain. We are still only solving the flow for the internal domain, i.e. everything up to the boundary, that is the domain we are interested in. But now that we have additional cells beyond the boundary, we can use our higher-order numerical schemes without issues, i.e. those where we need information beyond the boundary. This simplifies our code implementation significantly near boundaries.

Another advantage is that we have practically removed boundaries completely. In our code, we simply solve the equations up to the cells that are attached to the boundaries, but we no longer have to introduce a special treatment near boundaries. How is that possible? Well, the ghost cells that we have created need to have some values. We set the values within those ghost cells based on the boundary conditions.

Let's take the vertex-based (finite difference) case first. Here we can see that we have [katex]\phi_B[/katex] located at [katex]i,j[/katex], in other words, [katex]\phi_B=\phi_{i,j}[/katex]. So this is the [katex]\phi[/katex] on the boundary. We can see by the shaded outline that both [katex]\phi_{i-1,j}[/katex] and [katex]\phi_{i-2,j}[/katex] are located within the ghost cells, and they are located outside of our fluid domain.

The way that we find values for these two cells is by using the information we have at the boundaries. For example, if we have a Dirichlet-type boundary condition for [katex]\phi[/katex], i.e. we have [katex]\phi_B=\phi_{Dirichlet}[/katex], then we can say that the average of [katex]\phi[/katex] at the two vertices directly to the left and right of [katex]\phi_B[/katex] must be equal to [katex]\phi_{Dirichlet}[/katex]. This can be written as:

\phi_{Dirichlet}=\frac{\phi_{i+1,j}+\phi_{i-1,j}}{2}

We can solve this equation for [katex]\phi_{i-1,j}[/katex] and obtain

\phi_{i-1,j}=2\cdot \phi_{Dirichlet}-\phi_{i+1,j}

The eagle-eyed among you will have realised this is the canonical equation for linear extrapolation. Thus, we use values of [katex]\phi[/katex] at [katex]i+1,j[/katex] (which we know from the internal fluid domain computation) and [katex]i,j[/katex] (which we know from the Dirichlet-type boundary condition) to find a value at [katex]i-1,j[/katex] (beyond the fluid domain). Because we found this value by using the boundary condition, the boundary condition will be implicitly included when we now use [katex]\phi_{i-1,j}[/katex] in our computations.

What about [katex]i-2,j[/katex]? Well, we repeat the same process and find the average between cells at [katex]i+2,j[/katex] and [katex]i-2,j[/katex]. This results in

\phi_{Dirichlet}=\frac{\phi_{i+2,j}+\phi_{i-2,j}}{2}\\[0.5em]
\phi_{i-2,j}=2\cdot\phi_{Dirichlet}-\phi_{i+2,j}

This extends to an arbitrary number of cells, so we can introduce as many ghost cells as our numerical stencil requires. If we are dealing with centroid-based (finite volume) approximations, using the definition in the figure above, we simply have:

\phi_{i-1,j}=2\cdot\phi_{Dirichlet}-\phi_{i,j}\\[0.5em]
\phi_{i-2,j}=2\cdot\phi_{Dirichlet}-\phi_{i+1,j}

OK, so this is how we deal with Dirichlet-type boundary conditions. What about Neumann-type? The process is similar: we start with the definition of the Neumann-type boundary condition, which is

\phi_{Neumann}=\frac{\partial \phi}{\partial n}

We now take this and approximate the derivative across the boundary. For the vertex-based approximation, we have:

\phi_{Neumann}=\frac{\phi_{i+1,j}-\phi_{i-1,j}}{2\Delta x}\\[0.5em]
\phi_{Neumann}=\frac{\phi_{i+2,j}-\phi_{i-2,j}}{4\Delta x}

This can be solved for the unknowns [katex]\phi_{i-1,j}[/katex] and [katex]\phi_{i-2,j}[/katex] to produce:

\phi_{i-1,j}=\phi_{i+1,j}-2\Delta x\cdot\phi_{Neumann}\\[0.5em]
\phi_{i-2,j}=\phi_{i+2,j}-4\Delta x\cdot\phi_{Neumann}

Again, for the centroid-based (finite volume) description, we would obtain similar results. With the notation provided in the figure above, we would have

\phi_{Neumann}=\frac{\phi_{i,j}-\phi_{i-1,j}}{\Delta x}\\[0.5em]
\phi_{Neumann}=\frac{\phi_{i+1,j}-\phi_{i-2,j}}{3\Delta x}

This can be solved for the unknowns again to yield:

\phi_{i-1,j}=\phi_{i,j}-\Delta x\cdot\phi_{Neumann}\\[0.5em]
\phi_{i-2,j}=\phi_{i+1,j}-3\Delta x\cdot\phi_{Neumann}

In all of the above discussions, I assume that the grid spacing is constant. If we have mesh stretching, then we could either introduce ghost cells with constant spacing and then work out how derivatives with non-constant spacing are approximated, or we could simply mirror the mesh spacing in our ghost cells so that the boundary essentially acts as a mirror. For example, if we use inflation layers, these would have to be mirrored by the ghost cells. Mirroring is the easier approach of the two.
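The ghost-cell formulas above can be collected into one small routine. The following NumPy sketch is my own illustration: the function name and the array layout (the first `n_ghost` entries are the ghost values at a west boundary) are hypothetical, constant spacing is assumed as in the text, and [katex]\phi_{Neumann}[/katex] is interpreted as the derivative in the [katex]+x[/katex] direction used in the central differences above, e.g. [katex](\phi_{i+1,j}-\phi_{i-1,j})/(2\Delta x)[/katex], so that solving for the ghost value gives [katex]\phi_{i-1,j}=\phi_{i+1,j}-2\Delta x\cdot\phi_{Neumann}[/katex].

```python
import numpy as np


def fill_west_ghosts(phi, n_ghost, dx, kind, value, vertex_based):
    """Fill the ghost entries phi[0:n_ghost] at a west boundary in place.

    kind == "dirichlet": value is phi_Dirichlet.
    kind == "neumann":   value is phi_Neumann, the +x derivative used in the
                         central differences across the boundary.
    """
    if kind not in ("dirichlet", "neumann"):
        raise ValueError(f"unknown boundary type: {kind}")
    g = n_ghost  # index of the first entry inside the fluid domain
    if vertex_based and kind == "dirichlet":
        phi[g] = value  # the boundary vertex itself carries phi_Dirichlet
    for k in range(1, n_ghost + 1):
        if vertex_based:
            # ghost phi_{i-k} pairs with phi_{i+k}, which is 2k*dx away
            mirror, distance = g + k, 2 * k * dx
        else:
            # centroids on either side of the boundary face, (2k-1)*dx apart
            mirror, distance = g + k - 1, (2 * k - 1) * dx
        if kind == "dirichlet":
            # linear extrapolation: mean of ghost and mirror value equals phi_D
            phi[g - k] = 2.0 * value - phi[mirror]
        else:
            # central difference across the boundary equals phi_N
            phi[g - k] = phi[mirror] - distance * value
    return phi
```

A useful sanity check is that, for a linear field with slope [katex]c[/katex] and [katex]\phi_{Neumann}=c[/katex], the filled ghost values continue that linear field exactly on both grid types.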

If you look at the Dirichlet-type and Neumann-type approximations, you might have realised that we are using a second-order extrapolation and a second-order central difference scheme to approximate the derivative. Thus, if you are using a numerical scheme whose order is higher than two, you might not be able to achieve that order at the boundaries, as the values within the ghost cells are only second-order accurate. Bummer!

Could we use higher-order approximations? Yes, but once we do, we may start to introduce numerical oscillations. If you have ever fitted a higher-order polynomial through some points, you will know that you will get a seemingly good fit for interior points (probably an overfit), but near the points at the start and end, your polynomial will oscillate quite violently.

Take the following example of fitting a sixth-order polynomial through six points:

While the polynomial goes through all data points, it does start to oscillate. A similar behaviour may be observed if we want to achieve higher-order approximations for the values in our ghost cells, so it is probably for the best to keep it to second order.

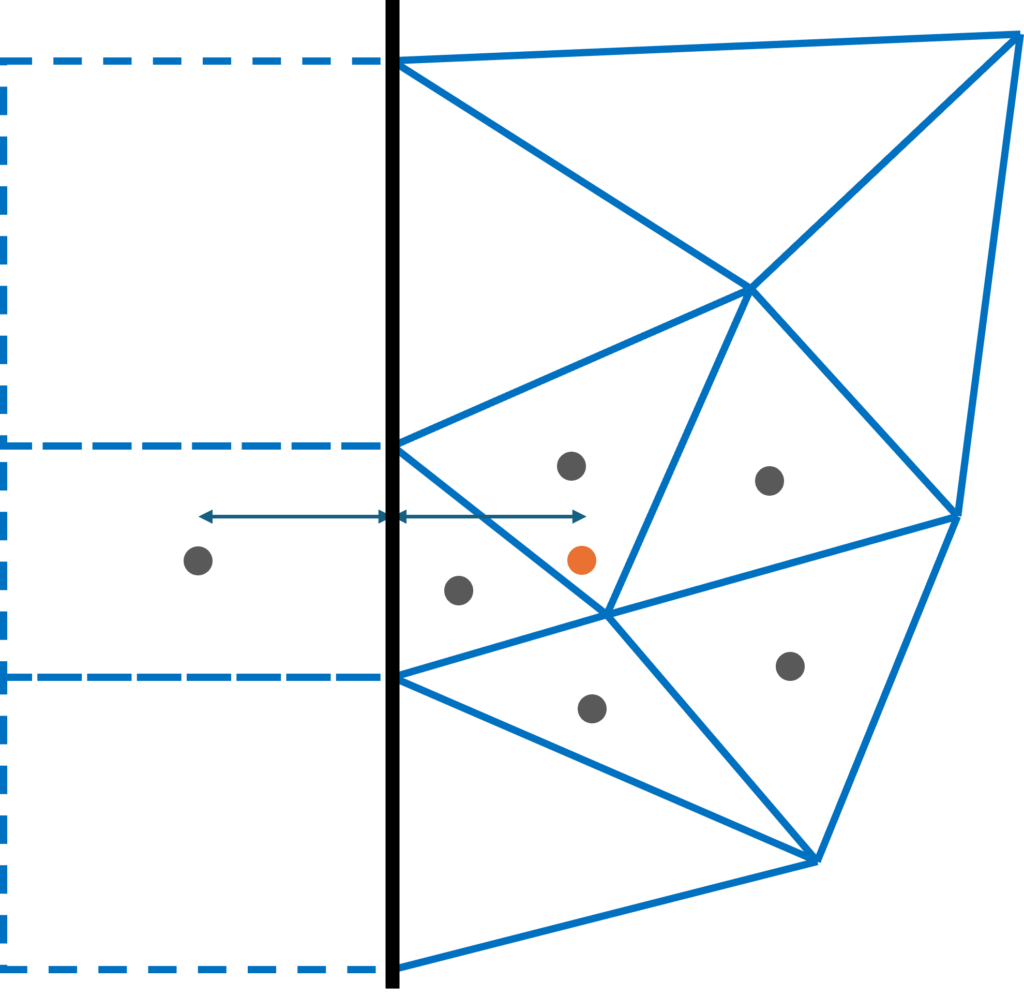
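If you want to reproduce this kind of oscillation yourself, the classic demonstration is Runge's function, [katex]1/(1+25x^2)[/katex], interpolated at equispaced points. This is a standard textbook example that I have swapped in, not the data from the figure above. The sketch uses NumPy's `numpy.polynomial.polynomial` module to fit a polynomial of degree [katex]n-1[/katex] exactly through [katex]n[/katex] points and reports the largest deviation on a fine grid; the helper names are my own.

```python
import numpy as np
from numpy.polynomial import polynomial as P


def runge(x):
    """Runge's function: smooth, but notoriously hard to interpolate."""
    return 1.0 / (1.0 + 25.0 * x**2)


def fit_through_points(n_points):
    """Polynomial of degree n_points - 1 passing through n equispaced samples."""
    x_nodes = np.linspace(-1.0, 1.0, n_points)
    coeffs = P.polyfit(x_nodes, runge(x_nodes), deg=n_points - 1)
    return x_nodes, coeffs


def max_error(n_points, n_fine=2001):
    """Largest deviation between the polynomial and Runge's function on [-1, 1]."""
    _, coeffs = fit_through_points(n_points)
    x_fine = np.linspace(-1.0, 1.0, n_fine)
    return float(np.max(np.abs(P.polyval(x_fine, coeffs) - runge(x_fine))))


if __name__ == "__main__":
    for n in (5, 9, 13):
        print(f"{n:2d} points (degree {n - 1:2d}): max error = {max_error(n):.3f}")
```

Every polynomial passes through its data points, yet the maximum error grows with the degree, and it is concentrated near the ends of the interval, i.e. exactly where a boundary would sit.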

But even if we find a stable method of approximating values within our ghost cells, that would only really work for equidistant structured grids. What about unstructured grids? Let's have a look at the following example:

Here, if we want to get the centroid value for the ghost cell shown (I have left the other ghost cells at the top and bottom empty), then we see that the point on the other side of the boundary in the fluid domain would be where the orange dot is located. However, this point does not coincide with any centroid within the fluid domain, so we would have to get the value at this point by interpolating from surrounding points. This can be done, but higher-order schemes using ghost cells would now be limited by this second-order interpolation.
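To make the unstructured case more concrete, here is a small 2D NumPy sketch of the two steps just described: reflect the ghost centroid across the boundary face to find the mirror point (the orange dot), then estimate [katex]\phi[/katex] there from surrounding centroids. For the interpolation, I have assumed a linear least-squares fit, which is one common choice; it is exact for linear fields, hence second-order accurate. The function names are hypothetical, and the face is assumed to be straight. Because the face lies halfway between the ghost centroid and its mirror point, the Dirichlet ghost value follows from the same extrapolation as before, [katex]\phi_{ghost}=2\phi_{Dirichlet}-\phi_{mirror}[/katex].

```python
import numpy as np


def mirror_point(point, face_point, face_normal):
    """Reflect `point` across the straight boundary face that passes through
    `face_point` with normal `face_normal`."""
    n = np.asarray(face_normal, dtype=float)
    n = n / np.linalg.norm(n)
    p = np.asarray(point, dtype=float)
    signed_distance = np.dot(p - np.asarray(face_point, dtype=float), n)
    return p - 2.0 * signed_distance * n


def least_squares_value(target, centroids, values):
    """Fit phi(x) ~ c0 + g . (x - target) to nearby centroids and return c0,
    i.e. the fitted value at `target`."""
    d = np.asarray(centroids, dtype=float) - np.asarray(target, dtype=float)
    matrix = np.hstack([np.ones((d.shape[0], 1)), d])  # columns: 1, dx, dy
    coeffs, *_ = np.linalg.lstsq(matrix, np.asarray(values, dtype=float), rcond=None)
    return coeffs[0]


def dirichlet_ghost_value(phi_dirichlet, ghost_centroid, face_point, face_normal,
                          centroids, values):
    """Ghost value such that the mean of ghost and mirror value equals
    phi_dirichlet, the boundary value where the ghost-mirror line crosses the face."""
    mirror = mirror_point(ghost_centroid, face_point, face_normal)
    return 2.0 * phi_dirichlet - least_squares_value(mirror, centroids, values)
```

In a real solver, the choice of neighbouring centroids (and their number) is the part that grows with the stencil, which is precisely the escalation in complexity discussed next.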

I have just shown one layer of ghost cells here, but for higher-order numerical schemes on unstructured grids, your stencil will be much larger. That means that we have to construct even more ghost cells. You see, on unstructured grids, the complexity quickly escalates, but we are not really gaining anything. We have to resort to second-order interpolation, so our higher-order scheme would lose accuracy near the boundaries anyway. It is simpler to just use a lower-order scheme here, which likely has the same accuracy but less computational cost.

How to implement boundary conditions for implicit time integration

Thus far, we have looked at how to treat Dirichlet, Neumann, and Robin-type boundary conditions, and the discussion has been accurate to this point. However, the way that I have presented boundary conditions thus far will only really allow you to deal with explicit time integration schemes.

Why is that? When we deal with a purely explicit time integration scheme, we have an equation of the form:

\phi^{n+1}=\phi^n + \Delta t\left[\text{advection}(\phi^n) + \text{diffusion}(\phi^n) + \text{sources}(\phi^n)\right]

Here, advection(), diffusion(), and sources() are some operators that discretise the various terms in our Navier-Stokes equations. The defining characteristic of a fully explicit time integration is that we have a single unknown on the left-hand side of the equation, in this case, [katex]\phi[/katex] at the next time level, i.e. [katex]\phi^{n+1}[/katex], while all other variables are known and on the right-hand side of the equation, in this case, [katex]\phi[/katex] at the current time level, i.e. [katex]\phi^n[/katex].

In this case, [katex]\phi^n[/katex] is either taken from the initial condition, if we are just starting our simulation, or from the previously computed time level, if we have already computed some time steps as part of our simulation. For an implicit time integration, at least one (or all) of the terms on the right-hand side is/are evaluated at the next time level, i.e. we have at least one dependency on [katex]\phi^{n+1}[/katex]. For a fully implicit system of equations, for example, we get:

\phi^{n+1}=\phi^n + \Delta t\left[\text{advection}(\phi^{n+1}) + \text{diffusion}(\phi^{n+1}) + \text{sources}(\phi^{n+1})\right]

In this case, it is customary to group all known variables on the right-hand side, i.e. those depending on the previous time level (all terms containing [katex]\phi^n[/katex]), and all unknowns on the left-hand side, i.e. those depending on the current time level (all terms containing [katex]\phi^{n+1}[/katex]). This results in:

\phi^{n+1} - \Delta t\left[\text{advection}(\phi^{n+1}) + \text{diffusion}(\phi^{n+1}) + \text{sources}(\phi^{n+1})\right]=\phi^n

Once the non-linear terms (such as advection) are linearised, this type of system leads to a linear system of equations of the form [katex]\mathbf{Ax}=\mathbf{b}[/katex], as we have seen in the previous article, and we need to solve this now. Solving a linear system of equations poses its very own challenges, especially near boundaries. Since we are solving [katex]\mathbf{Ax}=\mathbf{b}[/katex], we need to impose boundary conditions directly into our coefficient matrix [katex]\mathbf{A}[/katex].

While that is no more complicated than what we have already discussed up to this point, if you have never done it, you might be wondering how to do that. So, in this section, I want to shine some light on it, as this is something that catches me out every now and then (I just spent a week debugging my coefficient matrix only to realise that I must have had a stroke while implementing the Neumann boundary conditions. We shall not speak of this blunder again, and you shall not examine my git history!)

In the following section, we will look at how we can implement our two fundamental boundary types (Dirichlet and Neumann) using no mesh modifications and the ghost cell approach.

Implicit time integration without mesh modifications

We'll start with the approach that doesn't require mesh modifications. As it turns out, Dirichlet boundary conditions are pretty straightforward to implement, at least if we assume a finite-difference type data structure, that is, we store our variables at nodes/vertices. This is straightforward, as we will see shortly, because we can impose boundary conditions directly at the location where we store our variables.

Once we start to adopt a finite-volume type data structure, though, we store data at cell centroids, which are not on the boundaries, and so, we have to make changes to our discretisation.

While it does sound like vertex/node-based discretisation is easier to achieve, it's not; we are just shifting problems around. While it may indeed be easier to impose some boundary conditions with a node-based discretisation, we end up with an array of other issues. For example, think of corner points, where two different boundary conditions meet. Which one are we imposing here?

This is an issue for which there are solutions available, but it just goes to show that no matter what we do with our boundaries, they will make sure that we suffer from PTSD. There is no free lunch.

Anyways, after these encouraging words, if you are up for it, let's look at these boundary conditions. Boy, how I look forward to writing this ...

Dirichlet boundary conditions

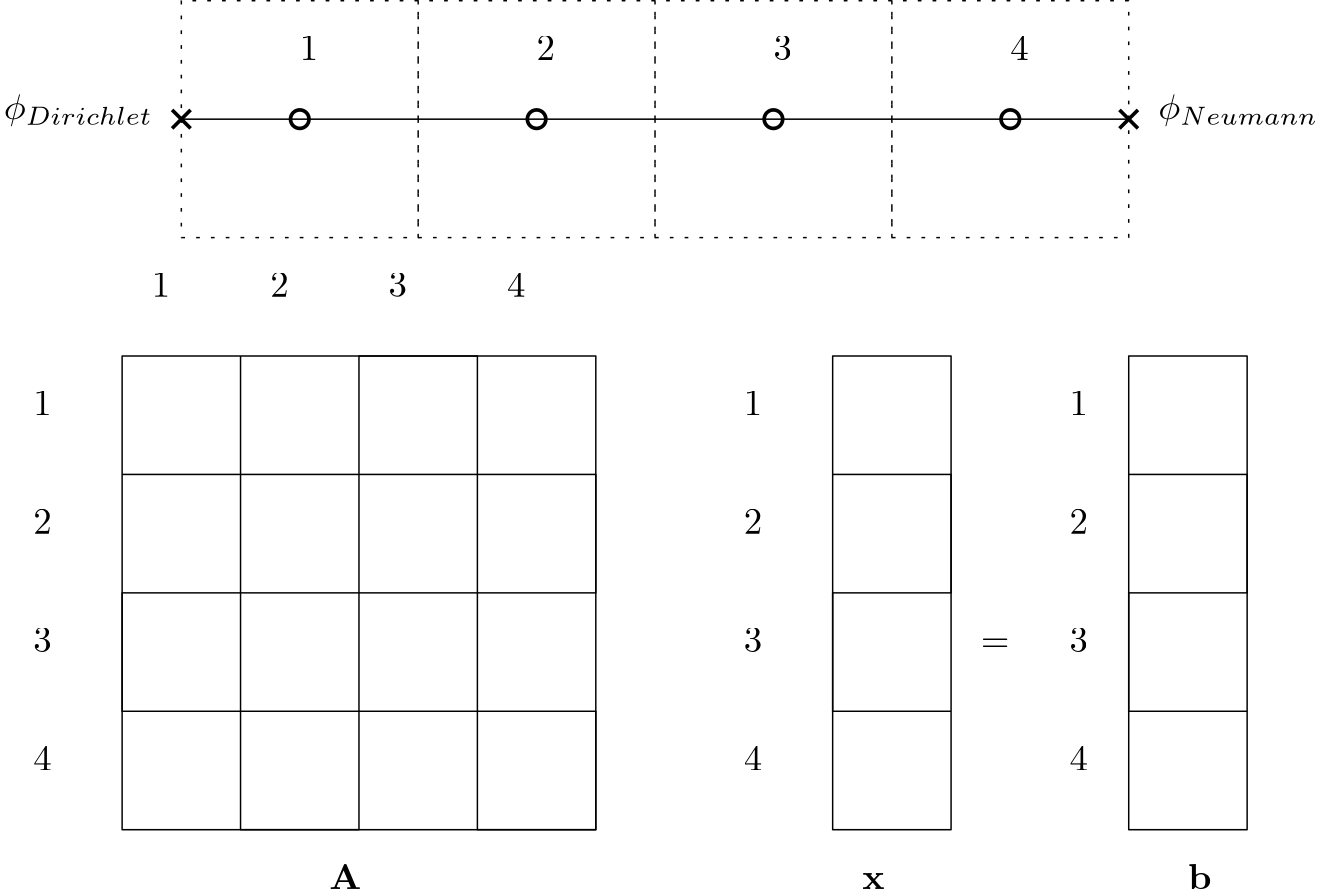

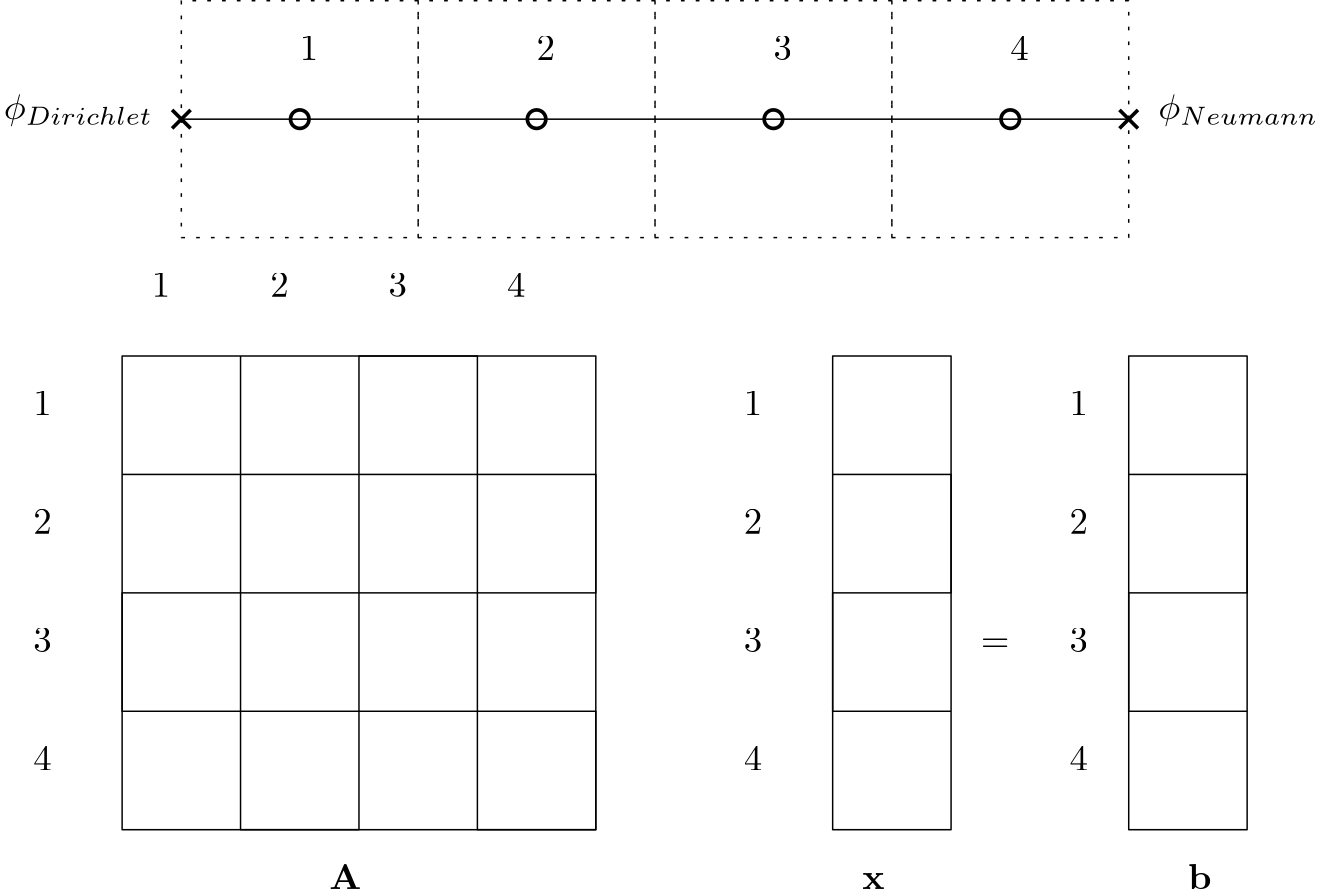

Let's recall what our goal is with Dirichlet-type boundary conditions. We have some value [katex]\phi_{Dirichlet}[/katex] that we want to impose on boundaries. Looking at the following, simple, 1D domain, we can see that our goal, for example, is to impose some Dirichlet value on the west boundary, that is, at node/vertex 1.

Below the 1D domain, we see the individual matrices and vectors of the linear system of equations that arises from our discretisation, i.e. [katex]\mathbf{Ax}=\mathbf{b}[/katex]. We see that we have the same number of rows (and columns for the coefficient matrix) as we have grid points, which would be true for 2D and 3D grids as well (i.e. if we have 1 million cells in our mesh, and we store information at cell centroids, then we have a 1 million by 1 million coefficient matrix, and two vectors of length 1 million for [katex]\mathbf{x}[/katex] and [katex]\mathbf{b}[/katex], respectively).

On the internal domain, that is, on nodes 2-4, we go about our discretisation as per usual, and find the coefficient that we want to impose in our coefficient matrix. But what happens at the boundaries? Well, we need to focus our attention now on node/vertex 1.

If we were dealing with an explicit time integration, we would simply set the value of [katex]\phi[/katex] to [katex]\phi_{Dirichlet}[/katex]. We can write this as [katex]\phi_1=\phi_{Dirichlet}[/katex]. So, the goal of the Dirichlet boundary condition is to impose a fixed value on the boundary.
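For comparison with the implicit treatment that follows, this is what "simply setting the value" looks like in an explicit scheme. It is a minimal sketch I have chosen for illustration, assuming the 1D diffusion equation [katex]\partial\phi/\partial t=\alpha\,\partial^2\phi/\partial x^2[/katex] on a vertex-based grid with Dirichlet values at both ends, advanced with the forward Euler method; the function name is hypothetical.

```python
import numpy as np


def explicit_diffusion_step(phi, alpha, dt, dx, phi_west, phi_east):
    """One forward Euler step of d(phi)/dt = alpha * d2(phi)/dx2 on a
    vertex-based 1D grid with Dirichlet values on both boundary nodes."""
    r = alpha * dt / dx**2
    if r > 0.5:
        raise ValueError("forward Euler is unstable for alpha*dt/dx^2 > 0.5")
    new = phi.copy()
    # Interior nodes: everything on the right-hand side uses the old level n
    new[1:-1] = phi[1:-1] + r * (phi[2:] - 2.0 * phi[1:-1] + phi[:-2])
    # Boundary nodes: the Dirichlet values are simply written in
    new[0] = phi_west
    new[-1] = phi_east
    return new


if __name__ == "__main__":
    phi = np.zeros(11)
    for _ in range(2000):
        phi = explicit_diffusion_step(phi, alpha=1.0, dt=0.004, dx=0.1,
                                      phi_west=1.0, phi_east=0.0)
    print(np.round(phi, 3))  # approaches the linear profile between 1 and 0
```

Because every unknown at [katex]n+1[/katex] is computed from known values, the boundary nodes never enter a system of equations, so overwriting them is all that is needed.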

Let's take that to our linear system of equations now. How do we translate that to [katex]\mathbf{Ax}=\mathbf{b}[/katex]? Well, here, [katex]\mathbf{x}[/katex] is the vector that will contain the solution of our unknowns after we have solved this system of equations. We want this vector to contain the correct values at the boundaries as well, so essentially, what we are saying is that the first entry at index/row 1 of [katex]\mathbf{x}[/katex] should contain [katex]\phi_{Dirichlet}[/katex] after we have finished solving our linear system of equations.

However, if we were to simply write this into our vector, that is, we set [katex]x_1=\phi_{Dirichlet}[/katex], we would not be imposing this boundary condition at all! Why? Well, let's say, for a moment, that we can easily invert [katex]\mathbf{A}[/katex]. Either the matrix is very small, or we have found a Nobel Prize-worthy inversion algorithm (only to realise that there is no Nobel Prize for mathematics) that allows us to rewrite our system of equations as:

\mathbf{x}=\mathbf{A}^{-1}\mathbf{b}

If we now set [katex]x_1=\phi_{Dirichlet}[/katex] before solving, it would be completely ignored by this system, as [katex]x_1[/katex] is computed from the first row of [katex]\mathbf{A}^{-1}[/katex] and the entire right-hand side vector [katex]\mathbf{b}[/katex]. And, even if we have not found a Nobel Prize-worthy inversion algorithm and we continue to use an iterative procedure, this procedure will still converge towards the solution of the above equation, regardless of the initial value we put into [katex]x_1[/katex].

So, you may then argue, why don't we just set something like [katex]x_1=\phi_{Dirichlet}[/katex], knowing full well that this will not impose boundary conditions correctly, but we still solve [katex]\mathbf{Ax}=\mathbf{b}[/katex] and then overwrite whatever (incorrect) solution has been obtained at [katex]x_1[/katex] with the correct value of [katex]\phi_{Dirichlet}[/katex]?

Well, this certainly sounds plausible, but that has a pretty big problem. You see, when we discretise our governing equations with our numerical schemes, and we start to fill our coefficient matrix [katex]\mathbf{A}[/katex] based on our discretisation, if we do not impose the boundary conditions correctly here, we are no longer solving the governing equation we are trying to solve.

So, we have to impose our boundary condition in such a way that the solution vector [katex]\mathbf{x}[/katex] in [katex]\mathbf{Ax}=\mathbf{b}[/katex] will contain the correct boundary value once the linear system has been solved. In the case of Dirichlet-type boundary conditions, this is thankfully easy to achieve.

Let's look at [katex]\mathbf{Ax}=\mathbf{b}[/katex] again carefully. If we want the first entry in [katex]\mathbf{x}[/katex] to be [katex]\phi_{Dirichlet}[/katex], then the easiest way to achieve that is to set the diagonal entry of the first row of the coefficient matrix [katex]\mathbf{A}[/katex] to 1, i.e. [katex]A_{11}=1[/katex], and all other entries in that row to 0, while setting the first entry in the right-hand side vector [katex]\mathbf{b}[/katex] to [katex]\phi_{Dirichlet}[/katex], i.e. [katex]b_1=\phi_{Dirichlet}[/katex].

If we impose the boundary conditions this way, then we are guaranteed to get the correct value in our solution vector [katex]\mathbf{x}[/katex]. To make this clear, [katex]A_{11}[/katex] is set to 1, while all other columns for the first row are zero. We can write this precisely for the first node as:

A_{1j}=
\begin{cases}
1 & j=1\\
0 & j\ne 1
\end{cases}

If this is the case, then our linear system of equations [katex]\mathbf{Ax}=\mathbf{b}[/katex] can be written for the first boundary node as:

A_{11}\cdot x_1 = b_1 \\
1\cdot x_1 = \phi_{Dirichlet}\\
x_1 = \phi_{Dirichlet}/1\\
x_1 = \phi_{Dirichlet}

It doesn't matter what values the other unknowns take or what is stored elsewhere in [katex]\mathbf{b}[/katex]: since all other entries in the first row of the coefficient matrix are zero, every other unknown [katex]x_j[/katex] is multiplied by zero in this row, and the first equation reduces to [katex]x_1=b_1=\phi_{Dirichlet}[/katex]. The remaining entries of [katex]\mathbf{b}[/katex] only enter the other rows of the system.
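The same idea in code: a minimal NumPy sketch (my own illustration; the function name and the choice of a 1D diffusion problem advanced with the backward Euler method are assumptions) that assembles [katex]\mathbf{A}[/katex] and [katex]\mathbf{b}[/katex] on a vertex-based grid, replaces the first and last rows by Dirichlet rows of the form described above, and solves the system with a dense solver. A production solver would use a sparse matrix, but the row treatment is the same.

```python
import numpy as np


def implicit_diffusion_step(phi_old, alpha, dt, dx, phi_west, phi_east):
    """One backward Euler step of d(phi)/dt = alpha * d2(phi)/dx2 on a
    vertex-based 1D grid; Dirichlet values are imposed through A and b."""
    n = len(phi_old)
    r = alpha * dt / dx**2
    A = np.zeros((n, n))
    b = np.array(phi_old, dtype=float)  # right-hand side: known values at level n

    # Interior rows: phi_i - r*(phi_{i+1} - 2*phi_i + phi_{i-1}) = phi_i^n
    for i in range(1, n - 1):
        A[i, i - 1] = -r
        A[i, i] = 1.0 + 2.0 * r
        A[i, i + 1] = -r

    # Dirichlet rows: diagonal entry 1, every other entry in the row 0,
    # and the boundary value in b, so the row reads x_1 = phi_Dirichlet
    A[0, :] = 0.0
    A[0, 0] = 1.0
    b[0] = phi_west
    A[-1, :] = 0.0
    A[-1, -1] = 1.0
    b[-1] = phi_east

    return np.linalg.solve(A, b)


if __name__ == "__main__":
    phi = implicit_diffusion_step(np.zeros(11), alpha=1.0, dt=0.1, dx=0.1,
                                  phi_west=1.0, phi_east=0.0)
    print(np.round(phi, 3))
```

Note that the boundary entries of the result equal the Dirichlet values because of the modified rows, not because we overwrite them after the solve. Backward Euler is also unconditionally stable for this problem, so the time step is not limited by [katex]\alpha\Delta t/\Delta x^2[/katex].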

What I have assumed thus far is that the nodes/vertices in our mesh coincide with the location where we store our solution variables as well. This is typical for finite difference approximations, but not for finite-volume discretisations. When we are dealing with finite volumes, we typically store our variables at cell-centroids. If we adapt our 1D domain example from above, where we now store variables at the cell centroids, we obtain the following domain:

In this case, the boundary conditions are no longer imposed directly at the same location where our variables are stored, and so, we cannot simply set [katex]A_{11}=1[/katex] and [katex]b_1=\phi_{Dirichlet}[/katex]. If only life were that simple ...

Instead, we have to modify our discretisation and work the boundary conditions directly into our discretisation. Let's consider first-order and second-order derivatives; these are the ones you will usually find in CFD (e.g. advection, divergence, and pressure gradient representing first-order derivatives, and diffusion representing second-order derivatives). For first-order derivatives, we have the following finite-volume discretisation:

\frac{\partial \phi}{\partial x}\rightarrow \int_V\frac{\partial \phi}{\partial x}\mathrm{d}V = \int_S\vec{n}\cdot \phi \mathrm{d}S\approx \sum_k^{nFaces}\vec{n}_k\cdot\phi_k\cdot S_k

If you need a refresher on this discretisation, and why on earth I get away with murder for changing a volume to a surface integral, you may want to catch up on my finite-volume write-up.

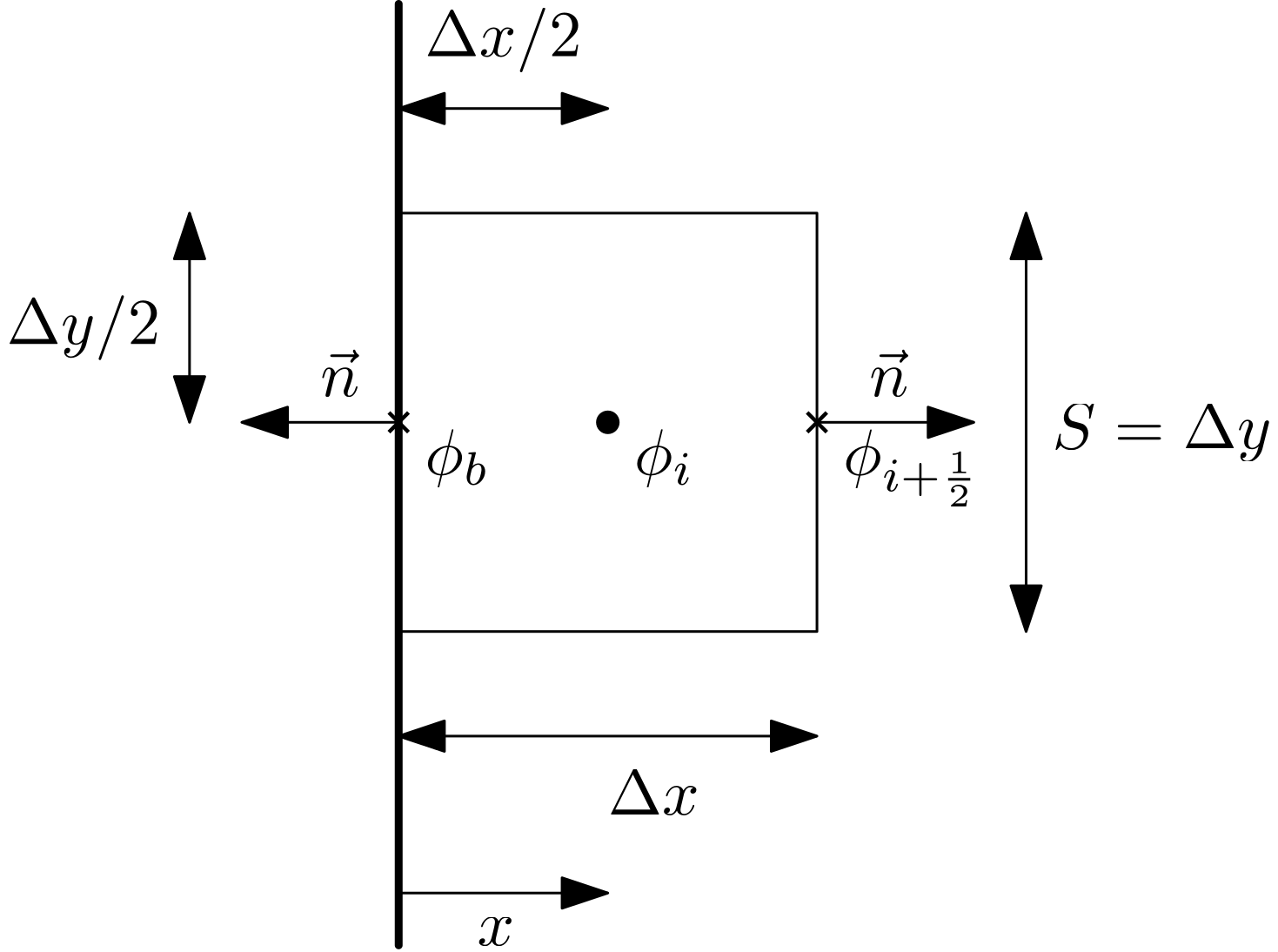

Let's consider the following sketch of a single cell, showing all of its dimensions, as well as a boundary on the left-hand side of the cell:

We can see that the surface area in 2D is just the height of the cell (i.e. [katex]S=\Delta y[/katex]), and, if this is a 1D example, then we simply set it to [katex]S=\Delta y=1[/katex], and we can ignore it in our discretisation. We see that the normal vectors point outwards from the cell, and that we have values for [katex]\phi[/katex] on the cell's faces: on the east face, we have an interpolated value [katex]\phi_{i+\frac{1}{2}}[/katex], while on the west face, we have the boundary value [katex]\phi_b[/katex].

If you need a refresher on how to get those interpolated values, I've got you covered as well. See my write-up on interpolation schemes for the finite-volume method.

In any case, let's write out our discretisation. For that, we need to know what [katex]\phi_{i+1/2}[/katex] is. For first-order derivatives, for example, the advection term, we use an upwind-based discretisation. In the simplest form, we say that we set [katex]\phi_{i+1/2}[/katex] to [katex]\phi_i[/katex] if the local velocity in the x-direction is positive (and along the x-axis), or we set it to [katex]\phi_{i+1}[/katex] if the local velocity is negative (against the x-axis). We can write this as:

\phi_{i+\frac{1}{2}}=
\begin{cases}
\phi_i & u_x \gt 0 \\
\phi_{i+1} & u_x \lt 0
\end{cases}

In code, this logic would naturally be written with if/else statements, which isn't great for our discretisation. So, instead, we can write this in a more compact form as:

\phi_{i+\frac{1}{2}} = \text{max}(sign(u_x), 0)\phi_i - \text{min}(sign(u_x), 0)\phi_{i+1}

Here, I am using the [katex]sign(x)[/katex] function, which does what it says: it returns the sign of its argument as [katex]-1[/katex], [katex]0[/katex], or [katex]+1[/katex]. So, a value of [katex]u_x=6.3[/katex] gives [katex]sign(u_x=6.3)=1[/katex], whereas a value of [katex]u_x=-0.7[/katex] gives [katex]sign(u_x=-0.7)=-1[/katex]. Thus, if [katex]u_x \gt 0[/katex], then the first term in the above equation will evaluate to [katex]\mathrm{max}(1, 0)=1[/katex], while the second term will evaluate to [katex]-\mathrm{min}(1, 0)=0[/katex]. If [katex]u_x \lt 0[/katex], this is reversed: the first weight becomes [katex]\mathrm{max}(-1, 0)=0[/katex] and the second [katex]-\mathrm{min}(-1, 0)=1[/katex]. In this way, we automatically get the correct value for [katex]\phi_{i+\frac{1}{2}}[/katex].
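A branch-free upwind selection like this maps directly onto array code. The NumPy sketch below is my own illustration (the function name is hypothetical). Note the minus sign in front of the min() term: [katex]\mathrm{min}(sign(u_x),0)[/katex] is [katex]-1[/katex] for [katex]u_x<0[/katex], so it has to be negated to give a weight of [katex]+1[/katex] for [katex]\phi_{i+1}[/katex].

```python
import numpy as np


def upwind_face_value(u_x, phi_i, phi_ip1):
    """First-order upwind value at face i+1/2, written without if/else.

    Works element-wise on NumPy arrays as well as on scalars."""
    s = np.sign(u_x)  # -1, 0 or +1
    # max(s, 0) is 1 for u_x > 0 and selects phi_i;
    # -min(s, 0) is 1 for u_x < 0 and selects phi_{i+1}
    return np.maximum(s, 0.0) * phi_i - np.minimum(s, 0.0) * phi_ip1


if __name__ == "__main__":
    u = np.array([6.3, -0.7])
    phi_left = np.array([1.0, 1.0])
    phi_right = np.array([2.0, 2.0])
    print(upwind_face_value(u, phi_left, phi_right))  # [1. 2.]
```

For [katex]u_x=0[/katex], both weights vanish and the face value is zero; since the advective flux [katex]u_x\phi_{i+1/2}[/katex] is zero in that case anyway, this does not affect the discretisation.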

If we are dealing with, for example, the pressure gradient, we are typically OK to use a central differencing scheme as an approximation, and so could write here:

\phi_{i+\frac{1}{2}}=\frac{\phi_i + \phi_{i+1}}{2}

While this works for the pressure, it will often diverge if we try to use it for the velocity, especially in the advection (non-linear) term. If you want to know why, we'll need to talk about von Neumann's stability analysis. Would you believe it, I have a write-up on that, too. Wow, today must be your lucky day. Have you played the lottery already?

If we take the central differencing approach, and we set [katex]\vec{n}=1[/katex] at the east face (pointing along the x-direction) and [katex]\vec{n}=-1[/katex] at the west face (pointing against the x-direction), then we can start to write our discretised equation as:

\sum_k^{nFaces}\vec{n}_k\cdot\phi_k\cdot S_k=1\cdot\phi_{i+\frac{1}{2}}S + (-1)\cdot \phi_b\cdot S = \frac{\phi_i + \phi_{i+1}}{2}S-\phi_b\cdot S

Looking at this term in isolation (i.e. setting it to zero), we now have to find all unknowns (that is, all [katex]\phi[/katex] values that are unknown), collect them on the left-hand side of the equation, and place all known values on the right-hand side. This results in:

\frac{\phi_i + \phi_{i+1}}{2}S=\phi_b\cdot S

We know what [katex]\phi_b[/katex] is; this is the boundary value we set and want to impose in our simulation, so it moves to the right-hand side. Since this is an implicit discretisation, we don't know the values of [katex]\phi[/katex] on the internal domain, and we obtain these from our linear system of equations. Let's bring this equation into coefficient form:

\phi_i\left[\frac{S}{2}\right] + \phi_{i+1}\left[\frac{S}{2}\right] = \phi_b S

In our linear system [katex]\mathbf{Ax}=\mathbf{b}[/katex], where we have [katex]\mathbf{x}=\mathbf{\phi}[/katex] now, we would set [katex]A_{11}=S/2[/katex] and [katex]A_{12}=S/2[/katex]. Furthermore, we have [katex]b_1=\phi_b S[/katex], and the values of [katex]\phi_1,\ \phi_2,\,...,\,\phi_n[/katex] will be stored in the solution vector [katex]\mathbf{x}[/katex].
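In code, applying this Dirichlet condition simply means adding the derived coefficients to the first row of the matrix and to the right-hand side vector. The Python sketch below assumes a dense list-of-lists matrix and 0-based indexing (so [katex]A_{11}[/katex] becomes A[0][0]); the function name is hypothetical. I use += rather than plain assignment so that other terms can later add their own contributions to the same row.

```python
def add_dirichlet_central_face_term(A, b, S, phi_b):
    """Add the boundary-cell contribution of sum_k n_k * phi_k * S_k to row 0.

    Illustrative assumptions: cell 0 touches the Dirichlet boundary with its
    west face (n = -1), and its east face (n = +1) uses central interpolation
    phi_{i+1/2} = (phi_i + phi_{i+1}) / 2.
    """
    A[0][0] += S / 2.0   # weight of phi_i from the east-face interpolation
    A[0][1] += S / 2.0   # weight of phi_{i+1} from the east-face interpolation
    b[0] += phi_b * S    # known boundary value, moved to the right-hand side


n = 4
A = [[0.0] * n for _ in range(n)]
b = [0.0] * n
add_dirichlet_central_face_term(A, b, S=1.0, phi_b=3.0)
print(A[0][:2], b[0])  # [0.5, 0.5] 3.0, i.e. A_11 = A_12 = S/2 and b_1 = phi_b * S
```

A quick sanity check: a uniform field equal to the boundary value, [katex]\phi_1=\phi_2=\phi_b[/katex], satisfies this row exactly.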

If we discretised a second-order derivative with the finite-volume discretisation, then we would have:

\frac{\partial^2 \phi}{\partial x^2}\rightarrow\int_V\frac{\partial^2 \phi}{\partial x^2}\mathrm{d}V=\int_S\vec{n}\cdot\frac{\partial \phi}{\partial x}\mathrm{d}S\approx\sum_k^{nFaces}\vec{n}\cdot\frac{\partial \phi}{\partial x}S

The derivative [katex]\partial \phi/\partial x[/katex] will be evaluated at the faces as well, for example at [katex]i+1/2[/katex]. Since diffusion itself is a process that has no preferred direction of travel, we do not have to worry about upwinding here. In fact, these types of second-order derivatives have an elliptic behaviour, and you may ask yourself, what is elliptic behaviour, and WHERE IS MY WRITE-UP?

Steady on, no need to scream; I think someone had too much CFD (fun?) for one day, perhaps take a break? As your CFD doctor, I would usually only recommend a few milligrams of dopamine release when visiting my website, but if you can't stop, you can enjoy my write-up at your own risk: Elliptic flows.

So, the derivative at the east face can be evaluated as:

\frac{\partial \phi}{\partial x}\bigg|_{i+\frac{1}{2}}=\frac{\phi_{i+1}-\phi_{i}}{\Delta x}

We also have to find the derivative at the boundary face, that is, what is [katex]\partial \phi/\partial x[/katex] at [katex]i-1/2[/katex]? Well, we don't have a value for [katex]\phi_{i-1}[/katex]; however, we do have the boundary value [katex]\phi_b[/katex]. This value is not a distance of [katex]\Delta x[/katex] away from [katex]\phi_i[/katex], but rather half that, i.e. [katex]\Delta x/2[/katex]. Therefore, we can find this derivative at the west face as:

\frac{\partial \phi}{\partial x}\bigg|_{i-\frac{1}{2}}=\frac{\phi_{i}-\phi_{b}}{\Delta x/2}=2\frac{\phi_{i}-\phi_{b}}{\Delta x}

With both of these two derivatives now available, we can discretise our second-order derivative near boundaries again as:

\sum_k^{nFaces}\vec{n}\cdot\frac{\partial \phi}{\partial x}S=(1)\frac{\phi_{i+1}-\phi_{i}}{\Delta x}S + (-1)2\frac{\phi_{i}-\phi_{b}}{\Delta x} S

Now we proceed as before: we collect the unknown terms on the left-hand side and the known terms on the right-hand side, and we end up with:

\frac{\phi_{i+1}-\phi_{i}}{\Delta x}S-2\frac{S\phi_i}{\Delta x}=-\frac{2S\phi_b}{\Delta x}

We can bring this into coefficient form again, which provides us with:

\phi_i\left[-3\frac{S}{\Delta x}\right] + \phi_{i+1}\left[\frac{S}{\Delta x}\right] = -\frac{2S\phi_b}{\Delta x}

And, again, we can now populate our coefficient matrix with [katex]A_{11}=-3S/\Delta x[/katex] and [katex]A_{12}=S/\Delta x[/katex], while the right-hand side vector is [katex]b_1=-2S\phi_b/\Delta x[/katex].
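We can check this boundary treatment end-to-end by solving [katex]\partial^2\phi/\partial x^2=0[/katex] on a uniform 1D mesh with Dirichlet values on both ends. A few assumptions for this Python sketch: [katex]S=1[/katex], the domain length is 1, the east-boundary row is my own mirror image of the west-boundary derivation above, and the tridiagonal (Thomas) solver is just a convenient, self-contained choice rather than something the derivation prescribes. Since the exact solution is a straight line and the half-cell gradient is exact for linear profiles, the cell values should match the exact solution up to round-off.

```python
def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm for a tridiagonal system (no pivoting).

    lower[i] multiplies x[i-1] and upper[i] multiplies x[i+1];
    lower[0] and upper[-1] are ignored.
    """
    n = len(diag)
    c = [0.0] * n  # modified upper diagonal
    d = [0.0] * n  # modified right-hand side
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom if i < n - 1 else 0.0
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):
        x[i] = d[i] - c[i] * x[i + 1]
    return x


def diffusion_dirichlet_1d(n_cells, phi_left, phi_right, length=1.0):
    """Solve d2(phi)/dx2 = 0 on a uniform cell-centred 1D mesh.

    Interior rows: phi_{i-1}[S/dx] + phi_i[-2S/dx] + phi_{i+1}[S/dx] = 0
    West boundary: phi_i[-3S/dx] + phi_{i+1}[S/dx] = -2 S phi_left / dx
    East boundary: mirrored version of the west-boundary row.
    """
    dx = length / n_cells
    S = 1.0  # face area is 1 in 1D
    lower = [S / dx] * n_cells
    diag = [-2.0 * S / dx] * n_cells
    upper = [S / dx] * n_cells
    rhs = [0.0] * n_cells
    diag[0] = -3.0 * S / dx           # west Dirichlet face at half-cell distance
    rhs[0] = -2.0 * S * phi_left / dx
    diag[-1] = -3.0 * S / dx          # east Dirichlet face at half-cell distance
    rhs[-1] = -2.0 * S * phi_right / dx
    phi = solve_tridiagonal(lower, diag, upper, rhs)
    centres = [(i + 0.5) * dx for i in range(n_cells)]
    return centres, phi


centres, phi = diffusion_dirichlet_1d(n_cells=5, phi_left=1.0, phi_right=3.0)
for x, value in zip(centres, phi):
    # The exact solution here is the straight line phi = 1 + 2x
    print(f"x = {x:.2f}  phi = {value:.6f}  exact = {1.0 + 2.0 * x:.6f}")
```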

We can repeat this now for any of the other faces, though, in a general sense, we would simply keep the normal vector [katex]\vec{n}[/katex] in the discretisation (here I have inserted the values of +1 and -1 already), which means that we can apply the same procedure to unstructured grids just as well.

Additionally, the Navier-Stokes equations consist of several terms, and each of them is discretised on its own. This means that if we use a centroid-based discretisation, we may have to add coefficients to [katex]\mathbf{A}[/katex] and values to our right-hand side vector [katex]\mathbf{b}[/katex] several times for the same boundary cell, once for each term.

So, we could either write out the coefficients for the entire discretised equation (which textbooks typically do, and which is a sure way to lose track very quickly), or we can apply the boundary conditions on a per-term basis (advection, diffusion, sources, etc.). The latter is typically much easier to comprehend (at least in my opinion), and it also aligns better with how you would actually want to implement this.

I say "want to", as this would promote a modular design (we can add as many or as few terms as we want, without having to re-derive our equations if something changes), but let's be honest, it is mostly academics who write CFD solvers, and they rarely think about good software design. (I am not even 40, where is all of that cynicism coming from? ... I should see a (real) doctor ...).
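To make the per-term idea a bit more concrete, here is a minimal Python sketch of such a modular assembly for the 1D unsteady diffusion equation [katex]\partial\phi/\partial t=\Gamma\,\partial^2\phi/\partial x^2[/katex] on a centroid-based mesh with Dirichlet values on both ends. Each term is a function that adds its own coefficients, including its boundary contributions, to [katex]\mathbf{A}[/katex] and [katex]\mathbf{b}[/katex]. The class and function names, the implicit Euler time term and the diffusivity [katex]\Gamma[/katex] are assumptions for illustration. Note that the diffusion coefficients appear with the opposite sign compared to the hand-derived coefficients earlier, because the diffusion term is moved from the right-hand side of the PDE to the left-hand side of the linear system.

```python
from dataclasses import dataclass


@dataclass
class Mesh1D:
    """Uniform cell-centred 1D mesh (hypothetical helper for this sketch)."""
    n_cells: int
    dx: float
    S: float = 1.0  # face area, 1 in 1D

    @property
    def volume(self) -> float:
        return self.dx * self.S


def time_term_euler(A, b, mesh, phi_old, dt):
    """Implicit Euler: V/dt * phi^{n+1} on the left, V/dt * phi^n on the right."""
    for i in range(mesh.n_cells):
        A[i][i] += mesh.volume / dt
        b[i] += mesh.volume / dt * phi_old[i]


def diffusion_term_dirichlet(A, b, mesh, gamma, phi_left, phi_right):
    """Add -gamma * sum_k n_k (dphi/dx)_k S_k to the left-hand side.

    Internal faces use (phi_nb - phi_P) / dx; the two Dirichlet faces use the
    half-cell distance dx / 2, which produces the factor 2 below.
    """
    k = gamma * mesh.S / mesh.dx
    n = mesh.n_cells
    for i in range(n):
        if i > 0:  # internal west face
            A[i][i] += k
            A[i][i - 1] -= k
        if i < n - 1:  # internal east face
            A[i][i] += k
            A[i][i + 1] -= k
    A[0][0] += 2.0 * k  # west boundary face
    b[0] += 2.0 * k * phi_left
    A[n - 1][n - 1] += 2.0 * k  # east boundary face
    b[n - 1] += 2.0 * k * phi_right


def assemble(mesh, terms):
    """Start from zeros and let every term add its own coefficients."""
    A = [[0.0] * mesh.n_cells for _ in range(mesh.n_cells)]
    b = [0.0] * mesh.n_cells
    for term in terms:
        term(A, b)
    return A, b


mesh = Mesh1D(n_cells=4, dx=0.25)
phi_old = [0.0] * mesh.n_cells
A, b = assemble(mesh, [
    lambda A, b: time_term_euler(A, b, mesh, phi_old, dt=0.1),
    lambda A, b: diffusion_term_dirichlet(A, b, mesh, gamma=1.0, phi_left=1.0, phi_right=0.0),
])
print(A[0], b[0])
```

Adding another term (a source, say) would then mean writing one more function and appending it to the list, without touching the existing terms.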

Anyhow, I think we have now covered Dirichlet-type boundary conditions extensively, and we can move on. I tried to be more explanatory here, as I have found classical CFD textbooks to be surprisingly vague on the details. All it takes is a bit of derivation, which isn't difficult; we just need to know what we are doing. With that said, let's now turn to Neumann-type boundary conditions, which require even more derivations. Oh, the joy that is to come!

Neumann boundary conditions

Let's return to our sketch using a node-based discretisation. Here, we said that we wanted to apply the Neumann boundary conditions on the right-hand side of the domain, as shown in the figure below, which I have repeated here for convenience:

When we dealt with the Dirichlet-type boundary condition in this particular case (i.e. with a node-based discretisation), we saw that we could easily impose it through the coefficient matrix and the right-hand side vector. For Neumann-type boundary conditions, unfortunately, that is not the case.

Neumann boundary conditions require us to derive the required matrix coefficients and right-hand side vector component for each operator separately. So, if we are dealing with the Navier-Stokes equations of the form:

\underbrace{\frac{\partial \mathbf{u}}{\partial t}}_{\text{Term 1}} + \underbrace{(\mathbf{u}\cdot\nabla )\mathbf{u}}_{\text{Term 2}} = \underbrace{-\frac{1}{\rho}\nabla p}_{\text{Term 3}} + \underbrace{\nu\nabla^2\mathbf{u}}_{\text{Term 4}}

Then, we have to derive the Neumann condition for each of these 4 terms and apply it to our coefficient matrix. To complicate things further, the above equation cannot be solved as it is; rather, we first have to discretise it. In the process, we have to make choices about our numerical schemes, and changing a numerical scheme means we are solving a different discretised equation.

Thus, for each scheme that we implement, we have to derive a corresponding Neumann-type condition. Having said that, we can typically discretise the pressure gradient (term 3) and the diffusion operator (term 4) with a central scheme and (for incompressible flows, anyway) don't have to worry about ever having to change that. The time derivative is also simple (it is very similar to a Dirichlet-type boundary condition), and so the burden, really, is only with the non-linear advective operator (term 2).

So, in the sketch above, we want to apply the Neumann-type boundary condition on the right of the domain. Let's start easy, with the time derivative. Suppose we discretise the time derivative using a first-order forward finite-difference approximation (finite differences, since we are node-based here; I should point out that this is a common pairing, but not the only permissible one, as we can also use finite volumes with a node-based discretisation and finite differences with a centroid-based discretisation). Just for completeness, we then get:

\frac{\partial \mathbf{u}}{\partial t}\approx \frac{\mathbf{u}_i^{n+1} - \mathbf{u}_i^{n}}{\Delta t}

Now we collect terms again and write unknowns on the left-hand side and known terms on the right-hand side, and we arrive at:

\mathbf{u}_i^{n+1}\left[\frac{1}{\Delta t}\right]=\mathbf{u}_i^{n}\left[\frac{1}{\Delta t}\right]

Referring to the sketch above, we want to impose the Neumann boundary condition at the last node, with ID 5, so the coefficient matrix entry has to be set at row [katex]i=5[/katex] and column [katex]j=5[/katex]. This results in [katex]A_{55}=1/\Delta t[/katex], and the right-hand side vector at location [katex]i=5[/katex] becomes [katex]b_5=\mathbf{u}_i^{n}/\Delta t[/katex].

For completeness, to show what happens with higher-order time discretisations, a popular choice here is the second-order backward scheme, which can be written as:

\frac{\partial \mathbf{u}}{\partial t}\approx \frac{3\mathbf{u}_i^{n+1} - 4\mathbf{u}_i^{n} + \mathbf{u}_i^{n-1}}{2\Delta t}

If we again collect the unknown terms on the left-hand side and the known terms on the right-hand side, we end up with:

\mathbf{u}_i^{n+1}\left[\frac{3}{2\Delta t}\right] = \mathbf{u}_i^{n}\left[\frac{4}{2\Delta t}\right] - \mathbf{u}_i^{n-1}\left[\frac{1}{2\Delta t}\right]

And thus, we write [katex]A_{55}=3/(2\Delta t)[/katex] into our coefficient matrix and [katex]b_5=\mathbf{u}_i^{n}[4/(2\Delta t)] - \mathbf{u}_i^{n-1}[1/(2\Delta t)][/katex] into our right-hand side vector.
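Both time discretisations can be sketched in a few lines of Python. The function below (a hypothetical helper, not from any library) returns the diagonal coefficient and the right-hand side contribution of the time derivative at a single node, which we then add to row 5 of the system (index 4 with 0-based indexing). Only the time-derivative contribution is shown; the other terms would add their own coefficients to the same row.

```python
def time_derivative_coefficients(scheme, u_n, u_nm1=None, dt=1.0):
    """Return (diagonal coefficient, right-hand side) added by du/dt at one node.

    "euler": (u^{n+1} - u^n) / dt
    "bdf2":  (3 u^{n+1} - 4 u^n + u^{n-1}) / (2 dt)
    """
    if scheme == "euler":
        return 1.0 / dt, u_n / dt
    if scheme == "bdf2":
        if u_nm1 is None:
            raise ValueError("BDF2 needs the value at time level n-1")
        return 3.0 / (2.0 * dt), 4.0 * u_n / (2.0 * dt) - u_nm1 / (2.0 * dt)
    raise ValueError(f"unknown scheme: {scheme!r}")


# Node 5 in the sketch is the last node, i.e. index 4 with 0-based indexing.
n_nodes = 5
A = [[0.0] * n_nodes for _ in range(n_nodes)]
b = [0.0] * n_nodes
a_55, b_5 = time_derivative_coefficients("bdf2", u_n=2.0, u_nm1=1.0, dt=0.5)
A[4][4] += a_55
b[4] += b_5
print(A[4][4], b[4])  # 3.0 7.0
```

A useful sanity check for either scheme: if [katex]\mathbf{u}[/katex] does not change in time, then [katex]b_5/A_{55}[/katex] returns that same constant value.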

Perhaps now you can see why I say that the time derivative is very similar to a Dirichlet-type boundary condition, with the difference that we are not setting a value of 1 in the coefficient matrix, but rather the coefficient that comes out of our discretisation. It will become, perhaps, even clearer when we deal with other operators. So, let's look at the diffusion operator. We can discretise the diffusion term (term 4 in our Navier-Stokes equation, ignoring the viscosity, focusing only on the derivative, and considering the 1D case here) as:

\nabla^2\mathbf{u}=\frac{\partial^2 u}{\partial x^2} \approx \frac{u_{i+1}^{n+1}-2u_{i}^{n+1}+u_{i-1}^{n+1}}{(\Delta x)^2}

Now we have to make an uncomfortable realisation! Thus far in your life, you may have been living under the impression that boundary conditions are imposed on the boundaries and that's that. You left them alone, computed solutions on the interior domain, and then updated the boundary conditions afterwards. For explicit schemes, this is indeed the case, but not for implicit schemes.

No, when we deal with implicit schemes, you see, we do have to compute the solution on the boundaries as well! I don't know about you, but when I first had this realisation, it made me feel very uncomfortable, as if someone had told me Santa Claus didn't exist. I don't know why, it just did. So, Neumann boundary conditions are imposed as a constraint on our linear system of equations: we do not update the boundary values after we are done with the computation; rather, they arise naturally as part of the solution.

So, let's remind ourselves what Neumann boundary conditions are. We said that these represent gradients that are normal to the boundary edges, which we can express as:

\frac{\partial \phi}{\partial n}=\phi_{Neumann}

We can discretise this with a classical central scheme, now using [katex]\phi=u[/katex], as:

\frac{\partial u}{\partial n}\rightarrow \frac{u^{n+1}_{i+1} - u^{n+1}_{i-1}}{2\Delta x}=u_{Neumann}

If we are at location [katex]i=5[/katex], where we want to impose the Neumann-type boundary condition in the domain sketched above, then inserting this into our discretised Neumann condition gives us the locations [katex]i+1=5+1=6[/katex] and [katex]i-1=5-1=4[/katex]. Clearly, there is no node at [katex]i=6[/katex], so what do we do? Well, let us rearrange the discretised Neumann condition so that the value at the non-existent node [katex]i+1[/katex] sits on the left-hand side, expressed through the value at node [katex]i-1[/katex] (which is part of our mesh) and the prescribed gradient [katex]u_{Neumann}[/katex]:

u^{n+1}_{i+1}=u^{n+1}_{i-1} + 2u_{Neumann}\Delta x

So far, so good. Now, let's turn our attention back to our discretised stencil; we had:

\frac{\partial^2 u}{\partial x^2} \approx \frac{u_{i+1}^{n+1}-2u_{i}^{n+1}+u_{i-1}^{n+1}}{(\Delta x)^2}

Remember what our goal here is: we want to compute the solution on the boundary, that is, at location [katex]i=5[/katex], in a way that imposes our Neumann boundary condition. Well, if we want to solve the above discretised form of the diffusion operator, we have the same issue as with the derivative of the Neumann boundary condition; we have a term that is evaluated at [katex]i+1[/katex] in our discretised equation.
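The rearranged Neumann condition gives us the value at the non-existent node [katex]i+1[/katex] (often called a ghost or fictitious node) in terms of quantities we do have. Here is a minimal Python sketch, assuming a uniform mesh with 5 nodes between [katex]x=0[/katex] and [katex]x=1[/katex] and a made-up test field [katex]u=x^2[/katex], whose normal gradient at the right boundary is 2; the function name is hypothetical. Because the central difference is exact for quadratic profiles, the ghost value should coincide with [katex]u(1+\Delta x)[/katex].

```python
def ghost_value(u_im1: float, grad_n: float, dx: float) -> float:
    """Value at the fictitious node i+1 outside the domain.

    From the central-difference Neumann condition
        (u_{i+1} - u_{i-1}) / (2 dx) = grad_n
    it follows that u_{i+1} = u_{i-1} + 2 dx grad_n.
    """
    return u_im1 + 2.0 * dx * grad_n


# Nodes at x = 0, 0.25, ..., 1.0 (IDs 1..5 in the sketch); the boundary node is at x = 1.
dx = 0.25
x_nodes = [i * dx for i in range(5)]

# Quadratic test field u = x^2, with du/dn = 2x = 2 at the right boundary.
u = [x * x for x in x_nodes]
u_ghost = ghost_value(u_im1=u[3], grad_n=2.0, dx=dx)
print(u_ghost, (1.0 + dx) ** 2)  # central differences are exact for quadratics
```

Substituting this ghost value into the stencil at [katex]i=5[/katex] is what removes the reference to the non-existent node; the sketch stops at computing the ghost value itself.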