Mesh generation in CFD: All you need to know in one article

Do you know the difference between the average CFD user and a world-class CFD practitioner? In most cases, it is the ability to generate a grid for complex geometries, which remains a challenge to this day with the tools we have available. Our desire to simulate ever more complex cases has grown faster than the tools to support this desire. As a result, mesh generation is complicated and a topic we really ought to give attention to, but rarely do.

For every paper that is published on the topic of mesh generation in CFD, there are 42 papers published in the area of turbulence modelling in CFD. This shows how little we care about a topic that is so fundamentally important and typically drives most of the issues we face in CFD. NASA even named it one of their key challenges in their 2030 CFD vision study, yet we treat mesh generation like our drunk uncle at Christmas: you are family, we have to put up with you, but we don't really want to.

In this article, I go through everything there is to know about mesh generation. I spend a lot of time talking about grid quality in this article, not just how it is defined, but also how each quality metric we look at will influence the simulation. In this way, you know exactly what will happen when you have poorly skewed cells or low orthogonality, and then you can decide if this is a problem or not.

We will also look at how structured and unstructured grids are generated, and how the algorithms we use today aim to maximise the grid quality. If you want to take a step towards CFD mastery, then this article will tell you everything you need to know in a single article about a topic you have likely not given a lot of consideration. It is on the lengthier side, but I promise, you will view mesh generation with different eyes after reading this article.

In this series

[custom_category_posts_list category_slug="10-key-concepts-everyone-must-understand-in-cfd"]In this article

- In this series

- In this article

- Introduction

- Mesh elements and their quality - why does it matter?

- Mesh generation

- Summary

Introduction

Mesh generation is one of those topics that splits the masses. Either you love it or hate it, and in all statistical likelihood, you hate it. And, if you don't hate meshing right now, it is very likely you will in the future. Personally, I really like meshing, and it is an almost zen-like activity for me, which I could do for hours. So, I have an uphill battle to fight to make you see the beauty of mesh generation.

I may not be able to convince you today that mesh generation is really not that bad, but if I can take at least some of the frustrations, I'd consider that already a win. In my experience, most of the frustrations come from mesh sensitivities. If you change the mesh slightly, you get disproportionally large changes in your solution, which can be the difference between convergence and divergence.

Contrast that with changes in the numerical scheme, or turbulence model, or linear system of equation solver; these may affect the overall accuracy or global convergence rate, but probably not much more. Thus, you have to spend a lot of time and energy on getting a grid that will allow you to get a result in the first place, and when you are just starting out, your simulations may be diverging rather than converging.

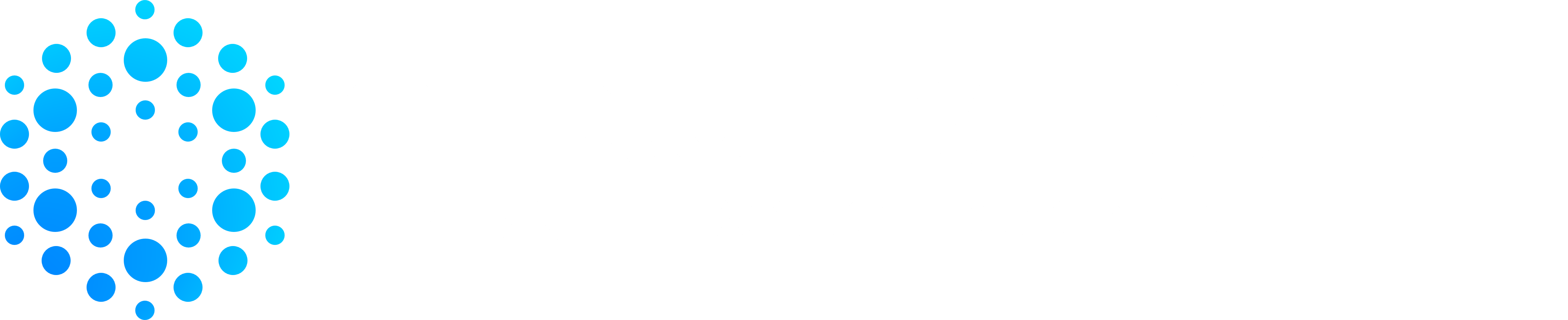

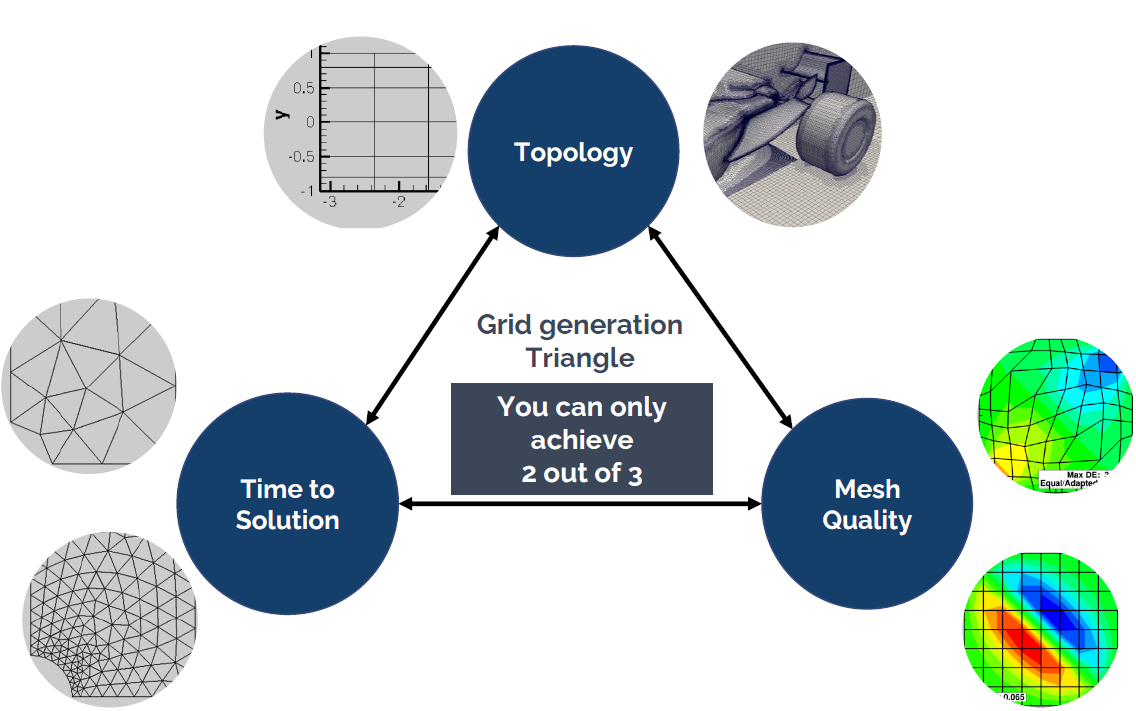

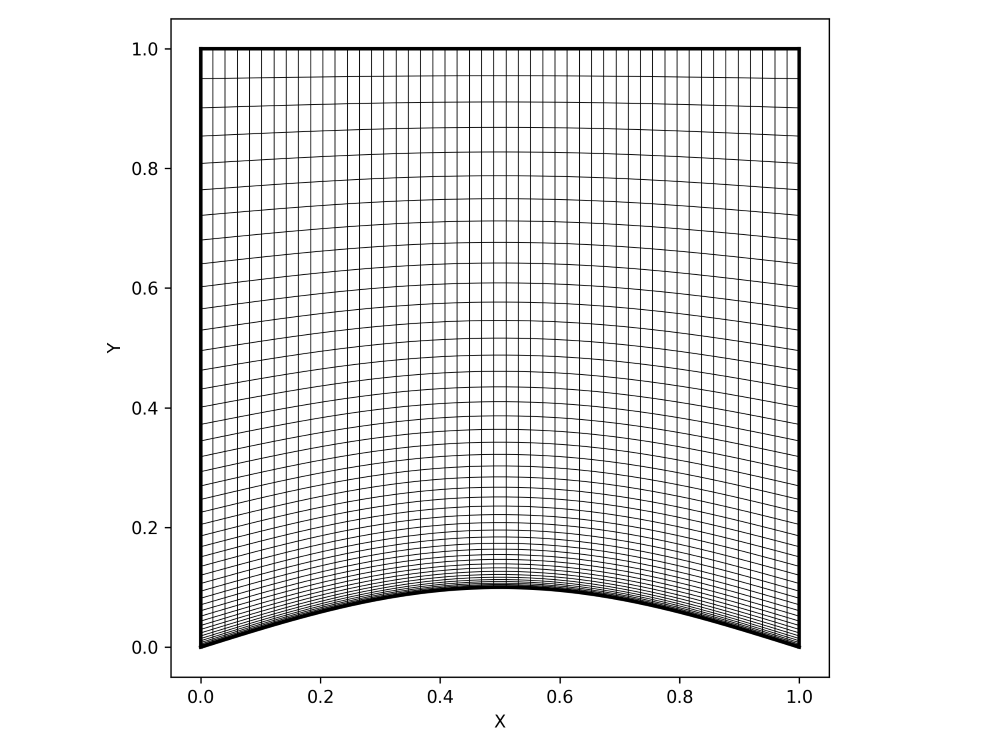

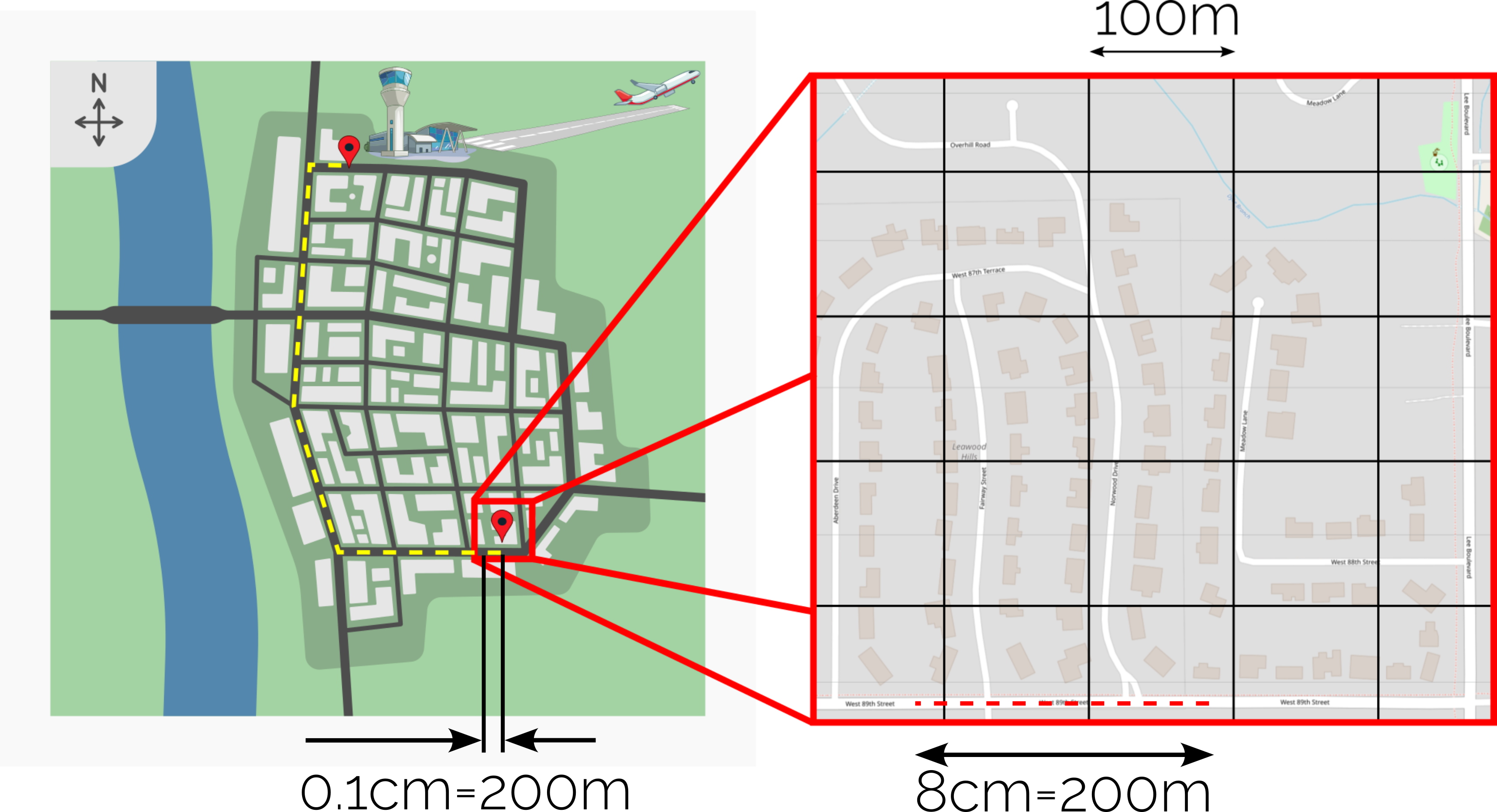

The problem with grid generation can be summarised in the following schematic, which I have dubbed the three pillars of mesh generation:

Here you have the three core elements that underpin any mesh that you create. These are:

- Topology: The topology defines the complexity of your model that you are trying to mesh. Think square box or circular cylinder for a simple topology, and an entire Formula 1 car / IndyCar / Aircraft / etc. for a complex topology.

- Mesh quality: The mesh quality is responsible for a number of things and typically influences the convergence rate, global solution accuracy, and, in the worst case, it can be the difference between convergence and divergence.

- Time to solution: This metric describes how long you will have to wait until you get a solution.

The problem: We can only independently influence two out of these three properties. The third property will be set for us, and it will always negatively affect our simulation. To make matters worse, we really only have a single choice, because the topology is determined by the geometry we want to investigate.

Let's look at a few examples. Let's say we are investigating a complex geometry (topology). We can now choose: Do we either want to have a quick simulation time, which means we have to accept that our mesh will be of poor quality and thus we may get less accurate results, or do we want to strive for a really high-quality grid, requiring some serious compute power?

We could also look at this from another perspective: Say you are a student and you can only run simulations locally on your laptop. This means your computational resources are limited, and unless you want to leave your laptop on for several days and let it compute some CFD cases, you really want to have a fast time to solution. Now you can pick, do you want to also have good results (high grid quality metric)? If so, you will need to limit yourself to simple cases like the flow past a cylinder or an airfoil.

You see how you can use this schematic to determine the bottleneck in your simulation. You may say, "Well, I work for a tech startup, we received a few million in funding, and I can blow it on cloud computing, so time to solution isn't a concern for me". Well, thank you for putting your ignorance on display for us all to enjoy.

Yes, if you have access to a high-performance computing (HPC) cluster, you may think that you can run simulations as large as you want. Therefore, you will not be limited in the other two metrics, and you can achieve a really high grid quality for a complex geometry, right? Well, perhaps, but keep the following in mind:

- There is a limit in how well simulations scale. Throwing more resources (CPU cores, GPU cards) at a simulation doesn't mean infinite speedup. At some point, more resources will hurt you, and simulation times will increase again.

- Scaling only really works for stationary problems. Once you have an unsteady problem, you can't speed up the time stepping; this will always be sequential, unless you try your hand at parallelisation in time techniques, but these haven't matured yet to a point where they become useful (and they are also not available in general-purpose CFD solvers).

- If you have access to an HPC cluster or cloud computing facility with 10 cores, you will find reasons why you need access to 100 cores. If you get 100 cores, you will find reasons why you need access to 1000 cores. If you get 1000 cores, you will find reasons why you need access to 10000 cores. If you get 10000 cores, you will find reasons why you need access to 100000 cores. Can you spot a pattern?

I will say one thing, though: If you have access to some serious computing power, then mesh generation is really simple. If you can make your elements really small, so that they are at least an order of magnitude smaller than the local feature size on your geometry (e.g. curvature/radius on your geometry), then you stand a good chance of generating a really high-quality mesh for even a complex geometry. But as we have seen above, this means you will need to have access to a good HPC cluster or cloud computing service.

There is just one caveat: The environment. You may have heard about global warming, and you may even live in a country where energy prices have soared in the past years. In short, you can run as many simulations as you want with your private cluster, but we should be aware of the environmental cost of doing so.

Here is a simple calculation I did for one of my simulations: I was simulating the flow past small cylindrical tubes using large-eddy simulations (LES). The simulation took 20 days to run on 128 cores. The energy that was required to power and cool the HPC cluster emitted as much CO2 as me driving in a small compact-sized car for about 2000km (I think that is about 1.618 gallon-miles/square-$, rounded, of course, to the nearest milli-Fahrenheit in imperial units, I think ...).

So, even if we have all the computational resources in the world, we ought to think about using them responsibly. Just because we can doesn't mean we should.

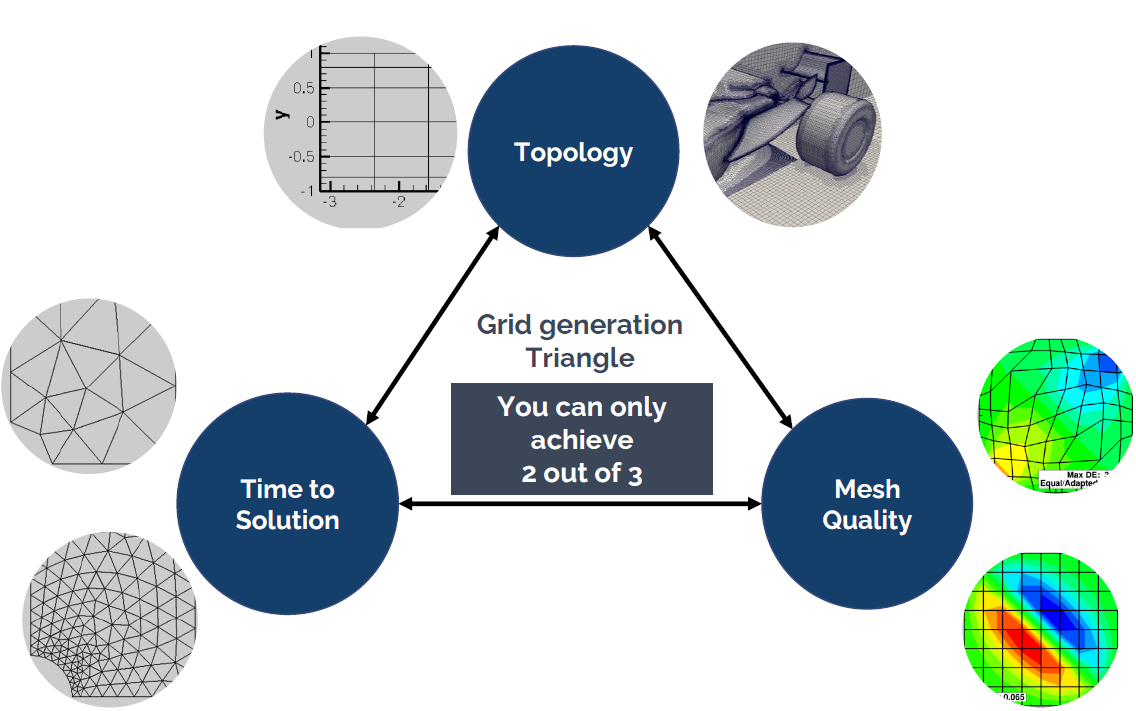

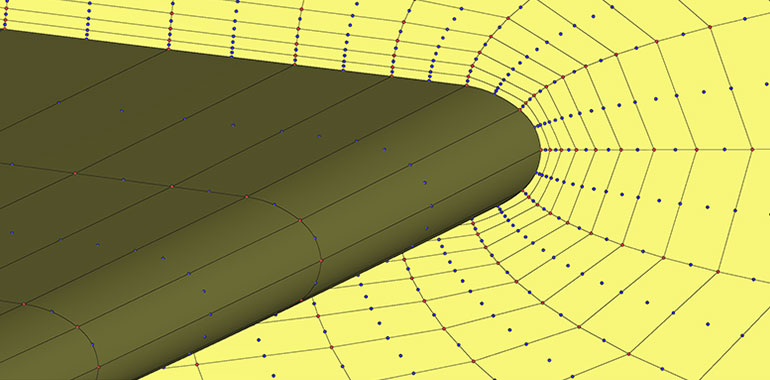

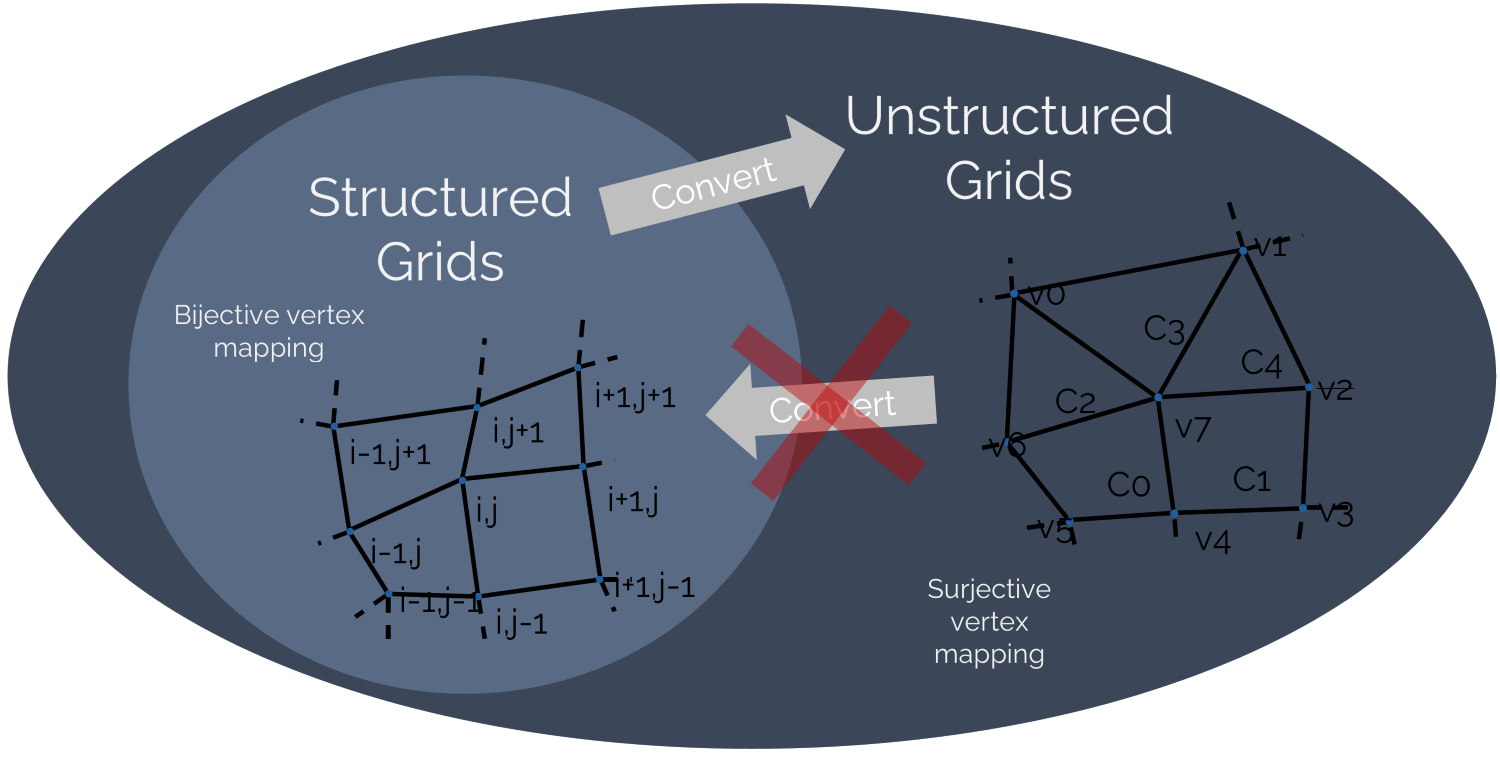

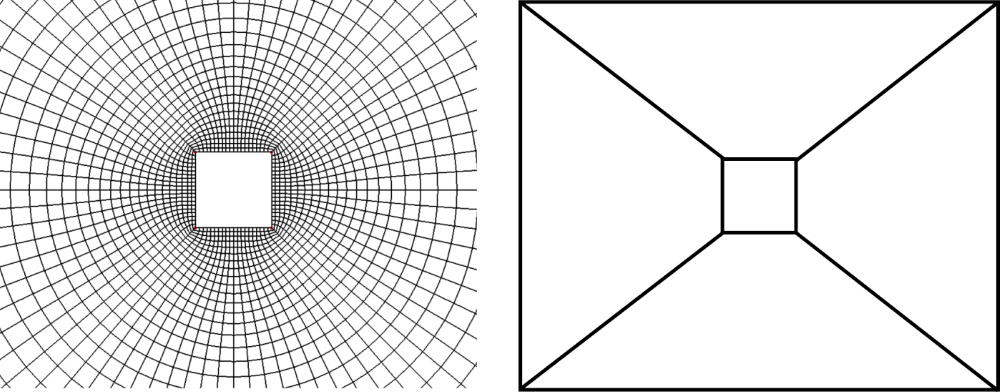

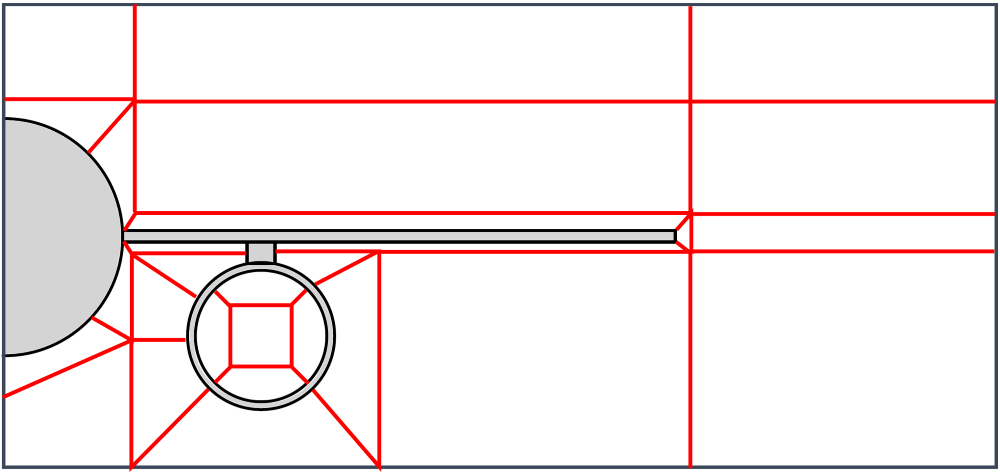

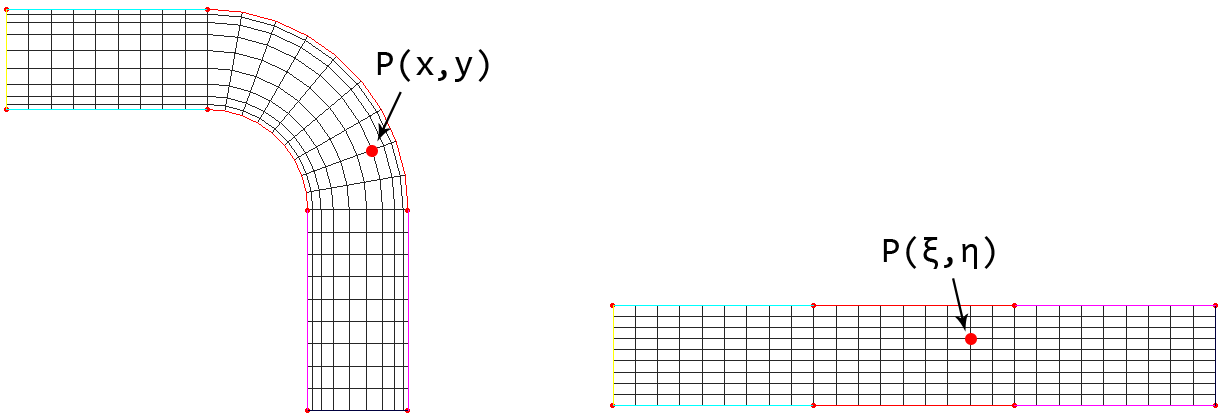

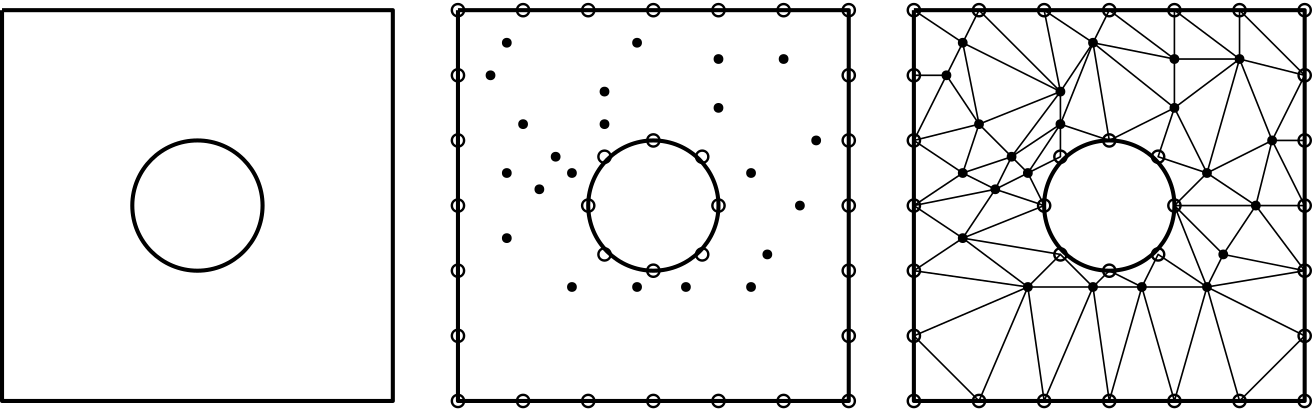

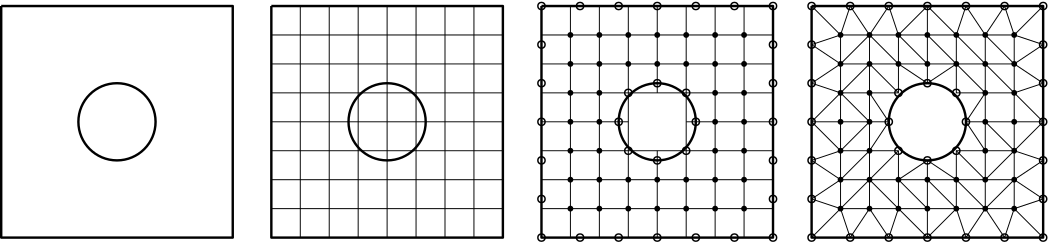

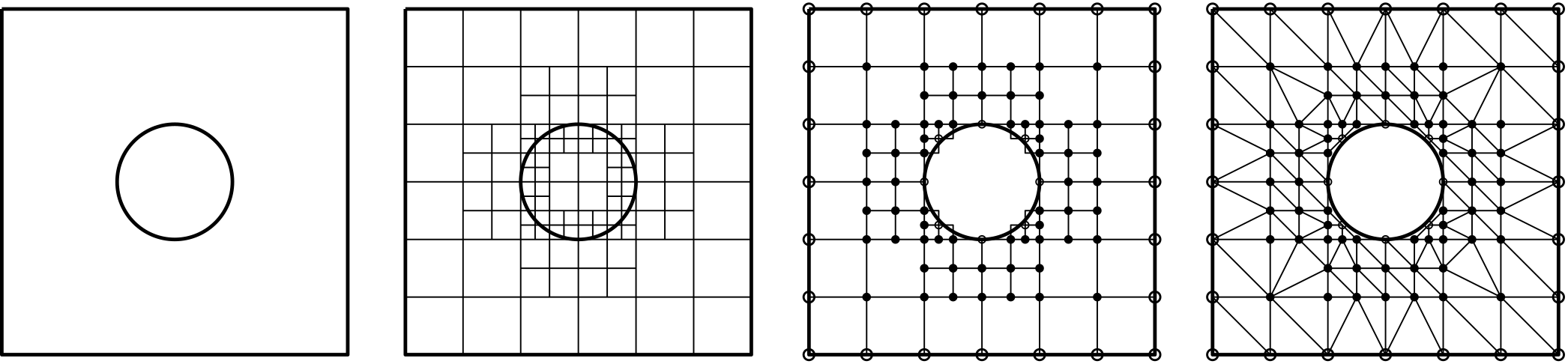

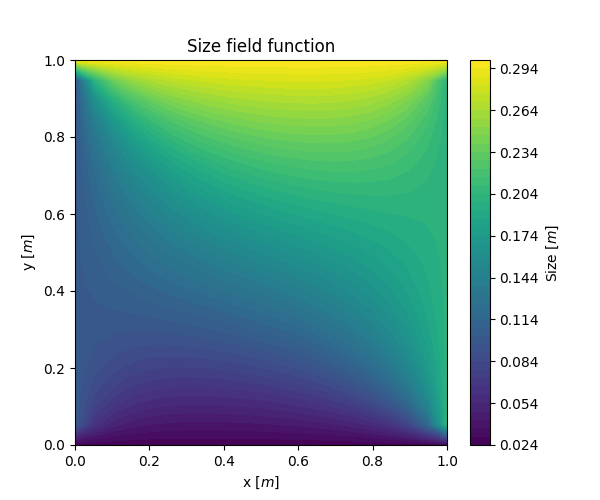

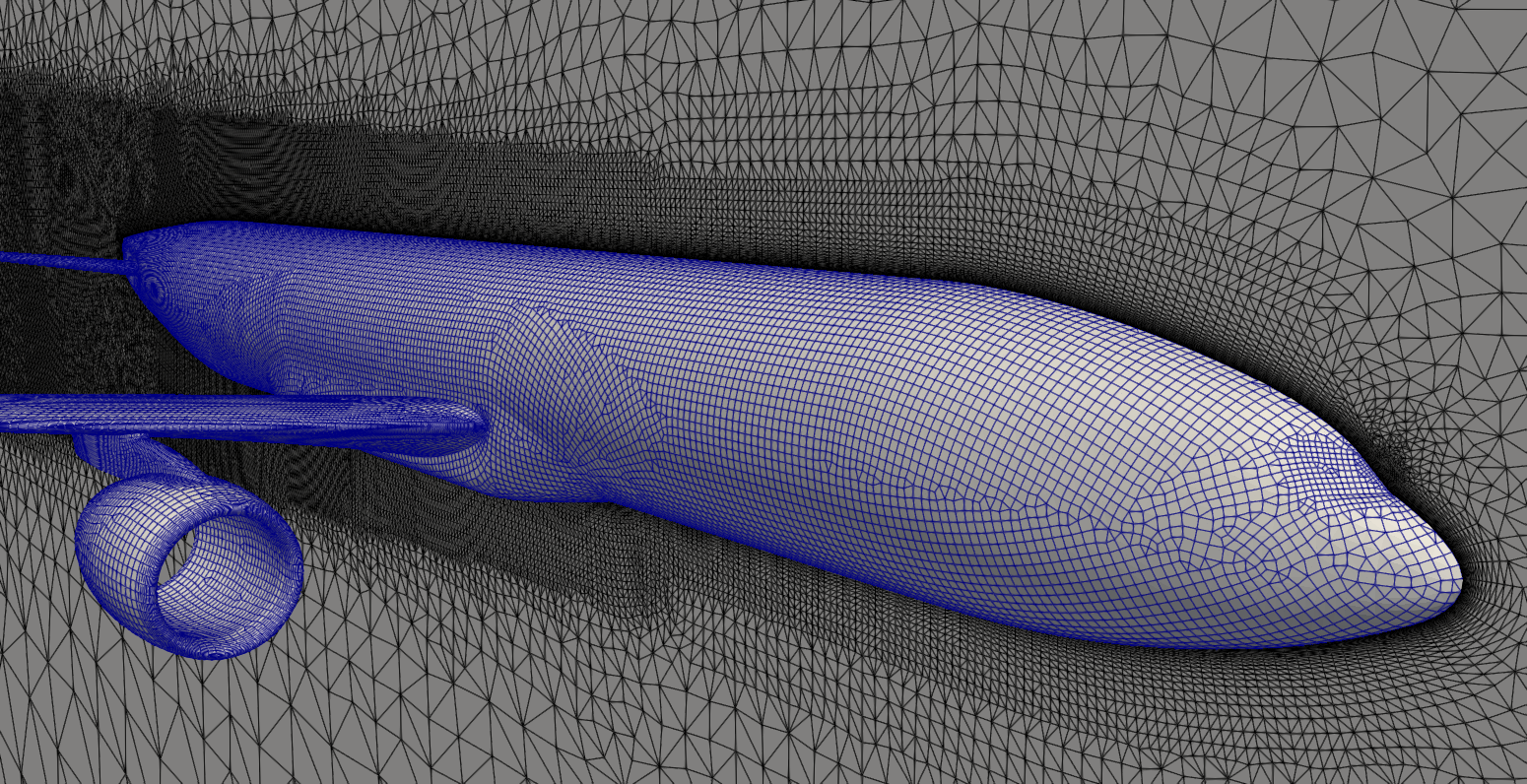

So let's review some common types of mesh generation approaches. The following figure shows the nose section of an aircraft, and a few of the most important mesh generation techniques are used all in a single mesh:

Here, we have the following grid generation techniques at play:

- Body-fitted mesh generation: The red mesh around the aircraft's fuselage is said to be a body-fitted grid, as it follows the geometry of the fuselage and adapts to its curvature.

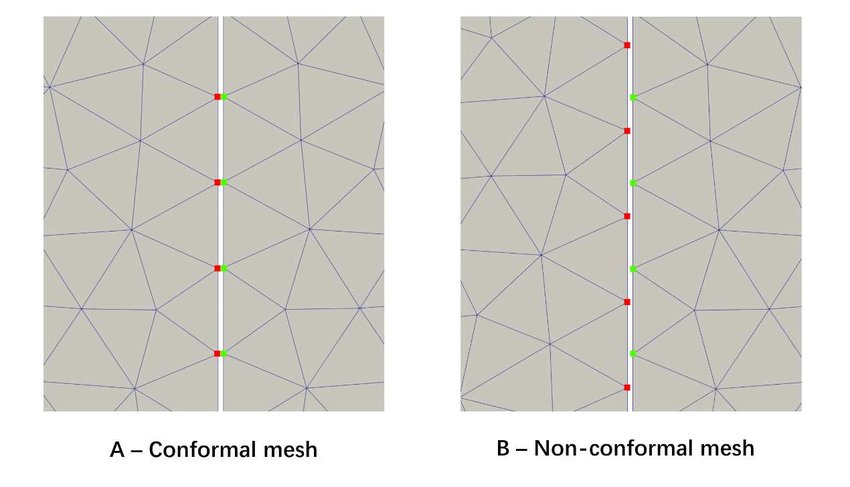

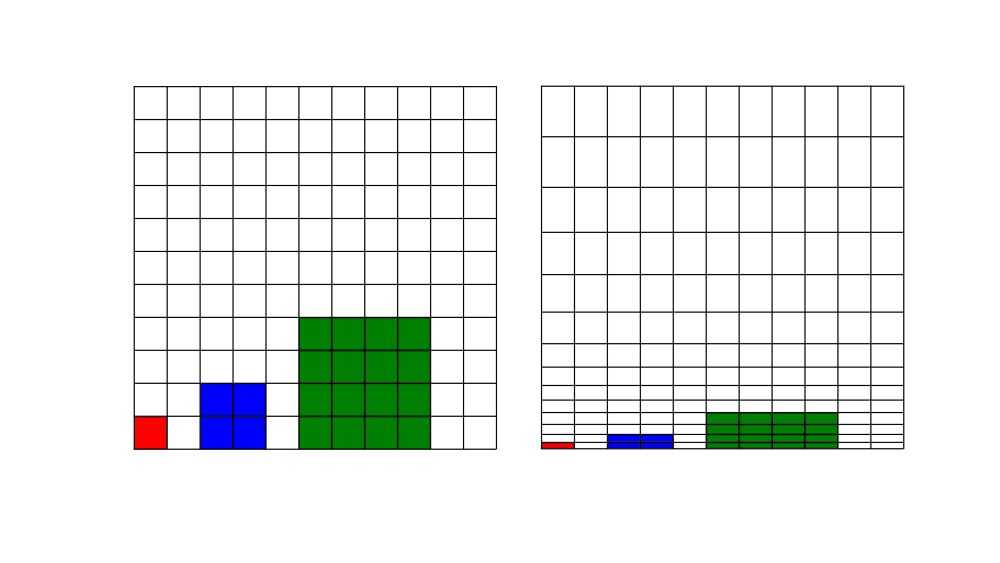

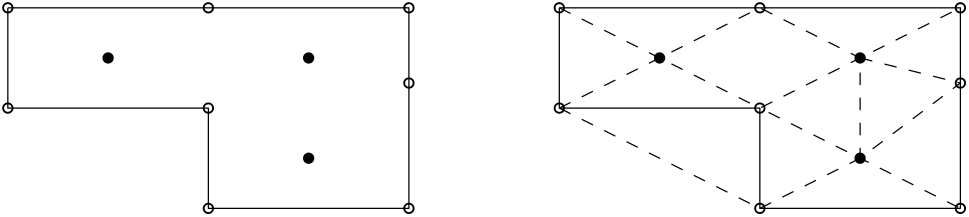

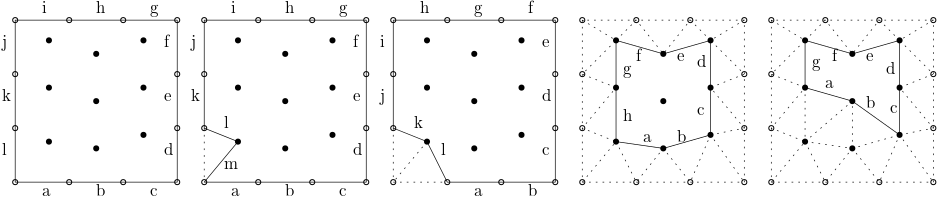

- Conformal / non-conformal mesh generation: A conformal grid is one where the vertices of two adjacent cells overlap. We can see that the blue background mesh has both conformal and non-conformal elements, where some vertices are attached to only one cell. These are also called hanging nodes. The following figure shows the difference between conformal and non-conformal mesh interfaces.

- Overset / Chimera mesh generation: Overset grids, also called Chimera grids, work by having two independent grids intersect each other. They share a common interface across which simulation data is transferred. We see that the body-fitted grid (shown in red) and the background grid (shown in blue) have an overlap, and we use the overset mesh generation approach here to let these two different grids exchange information. Overset grids are powerful for mesh movement, especially if we have complex movement or we don't know the trajectory of the mesh movement.

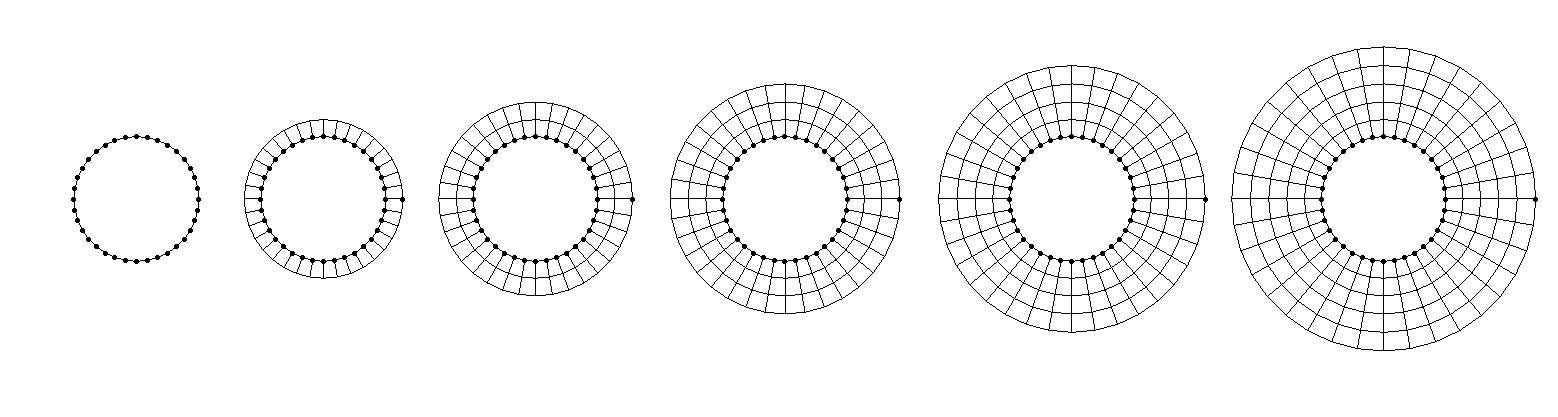

- Higher-order mesh generation: Typically, when you create a mesh element such as a triangle or quad element, you use linear elements. That is, all edges are composed of two vertices; one at the start, and one at the end. Higher-order mesh generation allows you to have non-linear elements, where edges of a cell may have more than two vertices, allowing for curved edges. This is helpful in resolving large curvatures of complex geometries with very few elements, as seen in the following figure:

Depending on our grid generation needs, as well as the capabilities of our mesh generator, we may adopt any of these techniques when generating our mesh. I should note here, though, that higher-order grids are typically used in conjunction with finite-element methods and thus structural mechanics. They are not yet widespread in CFD. Even if we were to be able to generate a mesh with higher-order elements, our solver also needs to be able to support that, and again, most CFD solvers don't support higher-order elements.

Post-processing is another issue. If you want to visualise higher-order elements, you need to have software that can visualise them for you. As of the time of writing, support for higher-order elements is sparse but some software do support higher-order elements. Suffice it to say, while higher-order elements are the norm for structural simulations, they have yet to become fully adopted in the CFD world.

So, with a basic understanding of meshing techniques and approaches to generate different grids, let us now get into the nitty-gritty details of mesh generation. First up: Mesh quality, and why you really should, nay, must, pay attention.

Mesh elements and their quality - why does it matter?

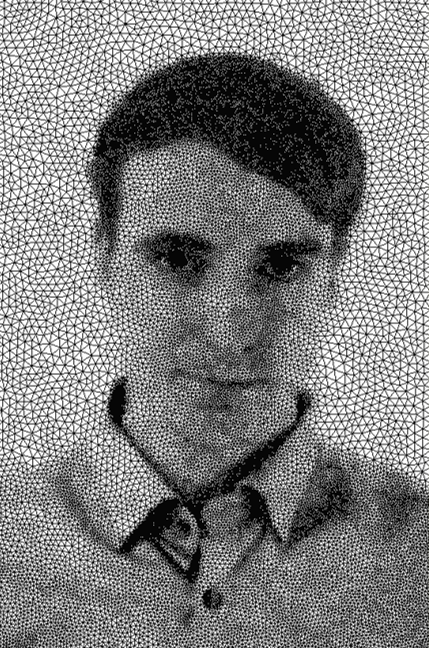

Mesh quality is underrated, underestimated, misunderstood, and frankly, not given the attention it deserves in the literature. Sure, if we want to study homogeneous decaying turbulence in a periodic box, then who cares about grid quality, right? The CFD literature is overly reliant on assuming all we ever want to do is to simulate the simplest of cases, but the real fun only starts once you leave the CFD toy examples behind and dare to mesh a car, an aircraft, or even yourself. This is my attempt:

Look how happy I am! Smiling from cheek to cheek. Well, this is likely the biggest smile you will get out of a German. Don't believe me? Look at the following famous Germans:

On the left, we have Angela Merkel, the former CEO of Germany after winning an election. In the middle, we have Michael Schumacher after winning another world championship in Formula 1. When he was not busy winning world championships, he always put his well-tempered personality on full display. On the right, you have Thomas Müller after winning a fourth world championship for Germany in 2014 in football. You see, in context, I am smiling quite a lot!

OK, concentrate, Tom, no one cares about your stupid side quests ...

As we have seen in the previous section, unless we are happy to burn the planet to fuel our CFD addiction, a complex geometry typically goes hand-in-hand with some form of poor mesh quality. We can't avoid it. Then, you may check what mesh quality limits your CFD solver recommends. These are not rules written in stone but rather experiential values. You generate your mesh, you may or may not respect these recommendations, your simulation diverges regardless, and you cry a little. This is normal.

To save you from a full-blown, hospitalisation-worthy mental breakdown, let us talk about mesh quality for a bit. After you understand the most important mesh quality metrics (of which there are 4, I would argue), life will become easy again. Well, or at least you will know if you should pre-emptively dial 999 as you start your simulation.

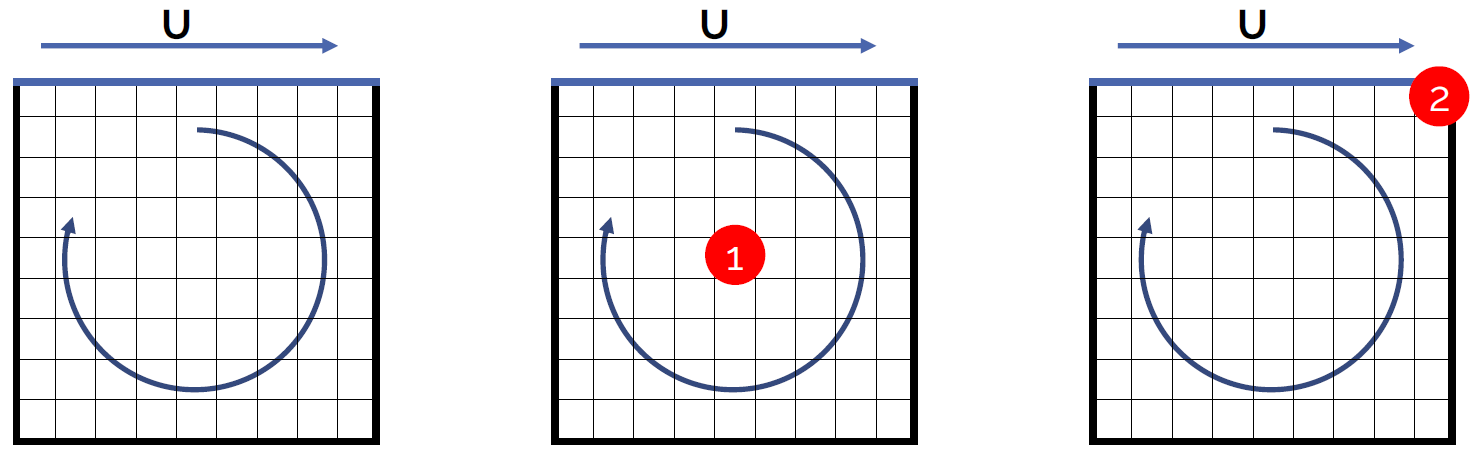

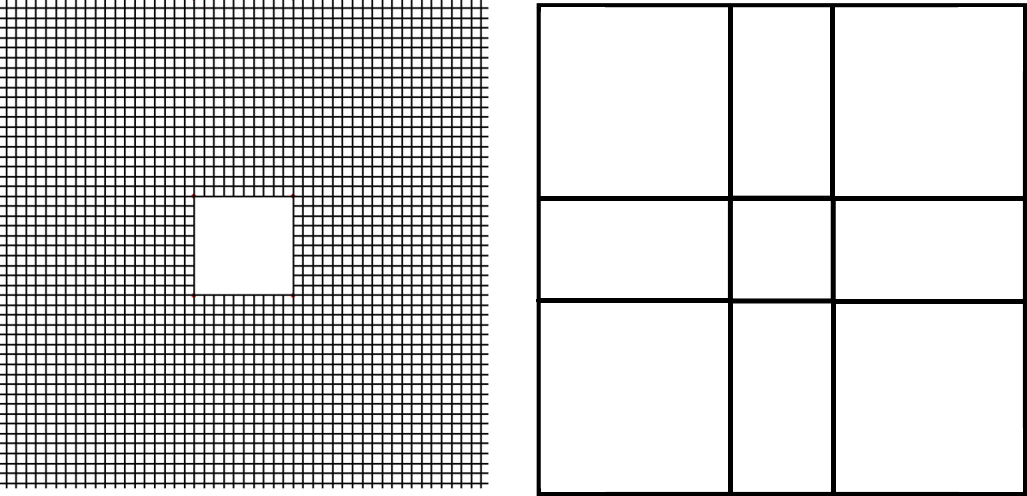

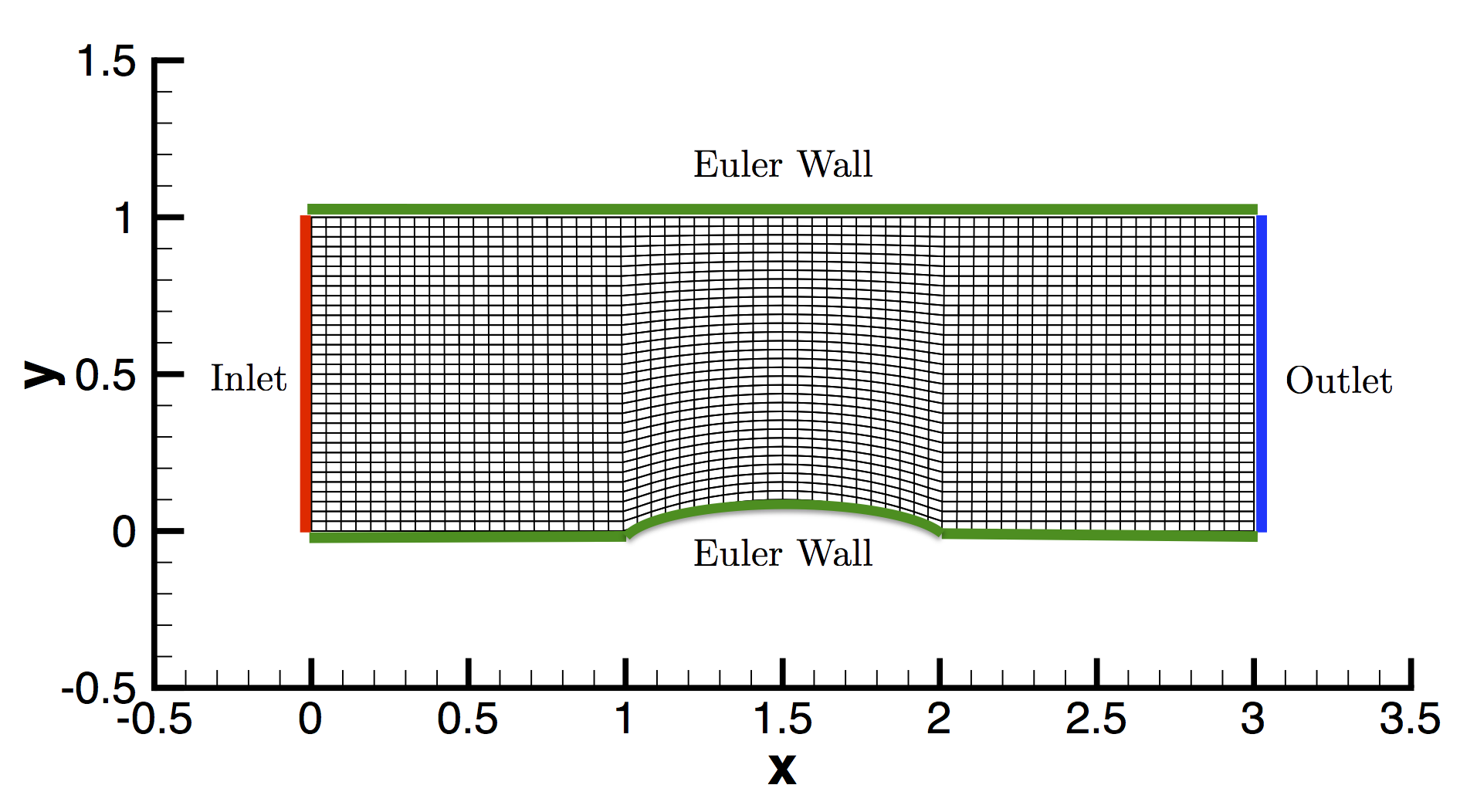

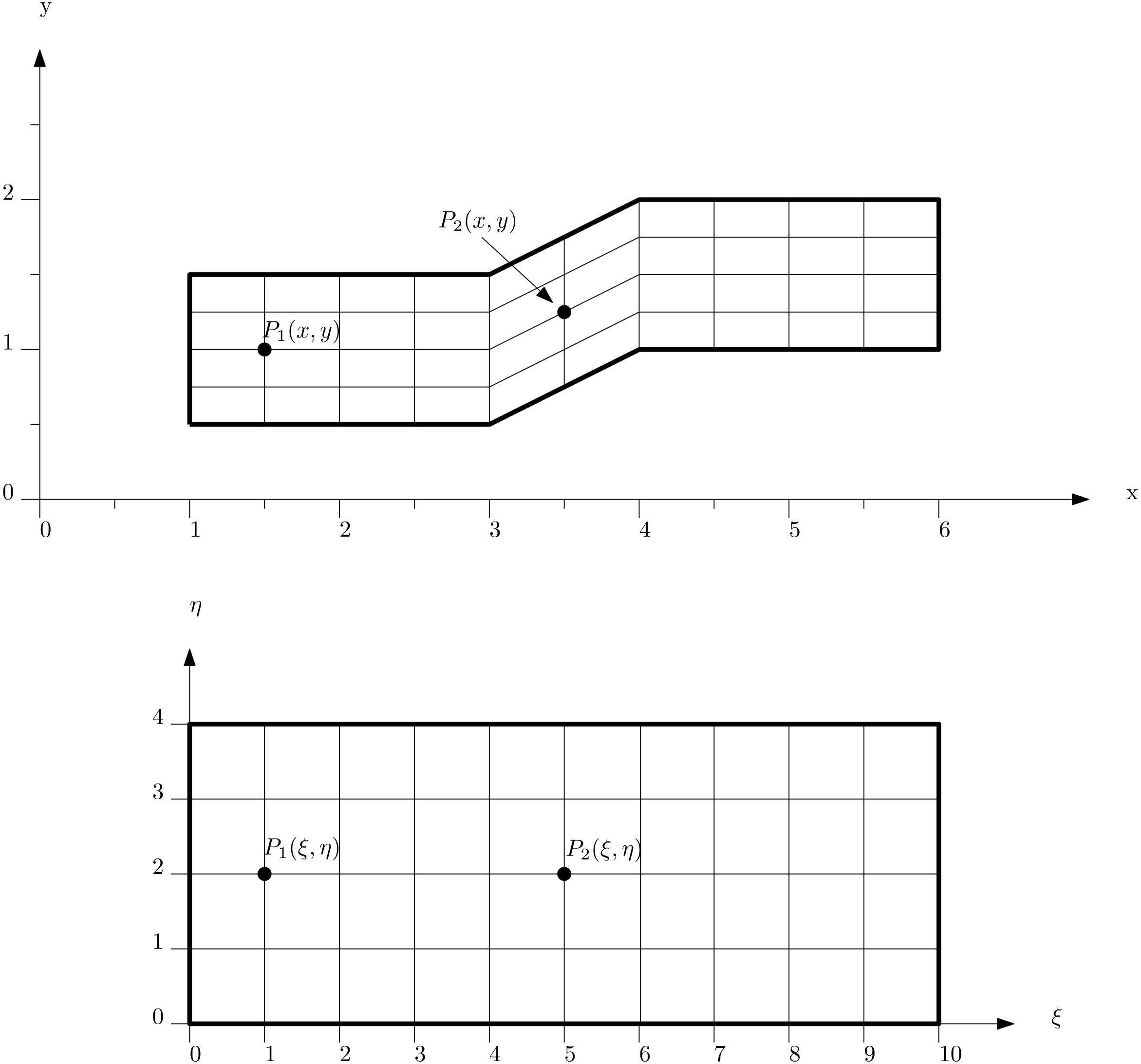

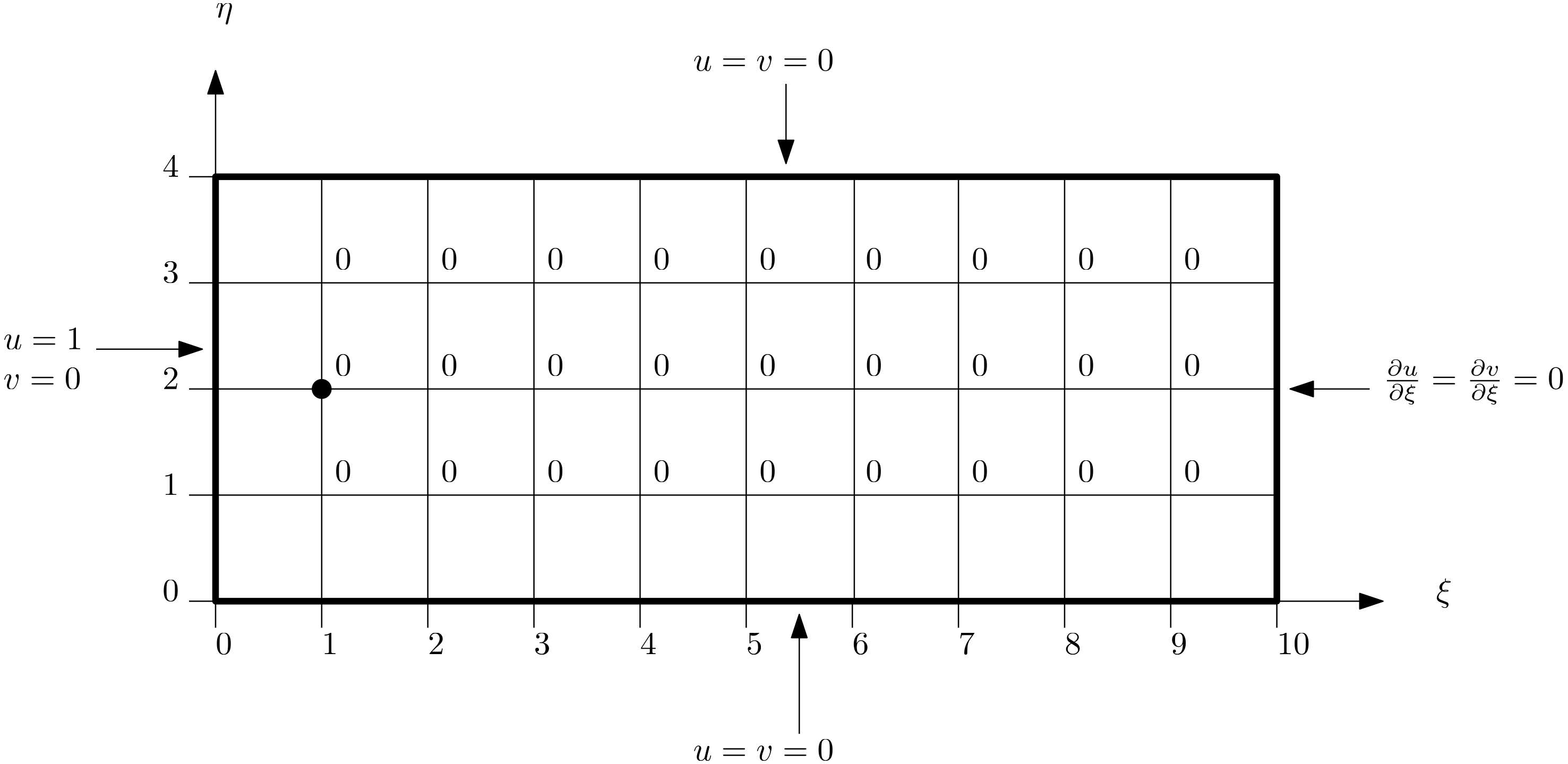

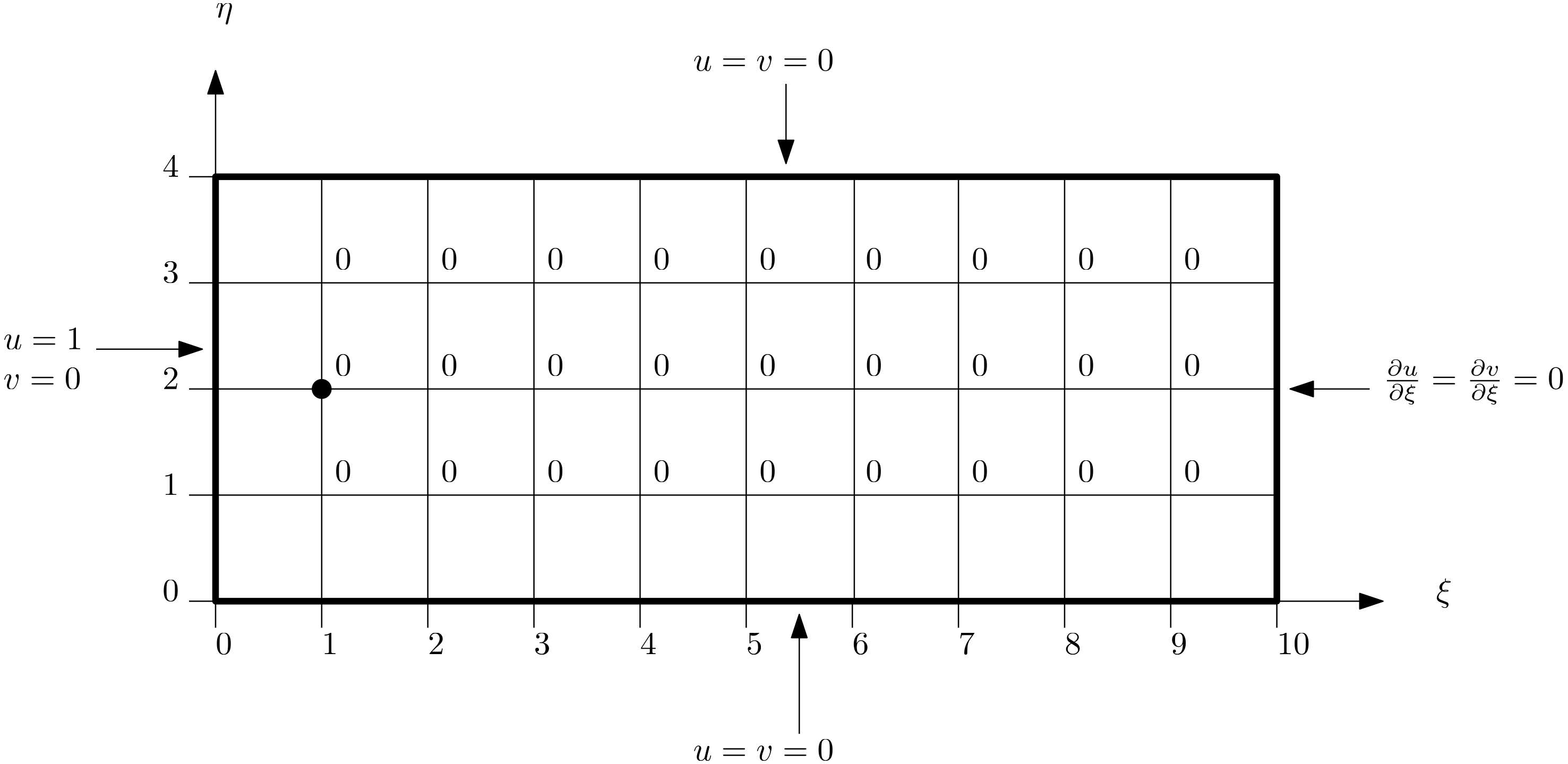

The best way to demonstrate my point is through a case study. We are going to take the most boring case in the world, at least if you have done your PhD in incompressible flows and spent more time with it than your partner (Anyone? Just me?). The case is schematically depicted below:

It's a box (yay!), where the left, right, and bottom boundaries are set as solid, stationary walls. The top boundary is also a wall, but it is moving from left to right. In an experiment, we would achieve this by having a box filled with a fluid, where we attach a conveyor belt at the top, simulating the moving wall boundary condition.

In the schematic shown above, I have given you three geometries. The left one is the baseline. This mesh was generated with the best possible mesh quality (we will get onto what that means later). For the case in the middle, I have moved nodes around in the centre of the mesh, so as to reduce the mesh quality in this region. I have done the same for the mesh shown on the right, with the only difference that I have manipulated the mesh in the top right corner.

So, we have the control (baseline), and two grids with reduced mesh quality in two different locations. The question now becomes, what happens to our simulation? Well, the following 5 scenarios are possible:

- Nothing happens; the simulation will be unaffected

- The simulation will diverge

- The convergence rate will be reduced

- The accuracy will be reduced

- The convergence rate and accuracy will be reduced

Make your choice. Ready? Let's move on then. After my overly dramatic introduction to mesh quality metrics, and how they are underrated and getting you hospitalised, we can probably guess that the first option is out. But what about the other 4? Well, let's have a look.

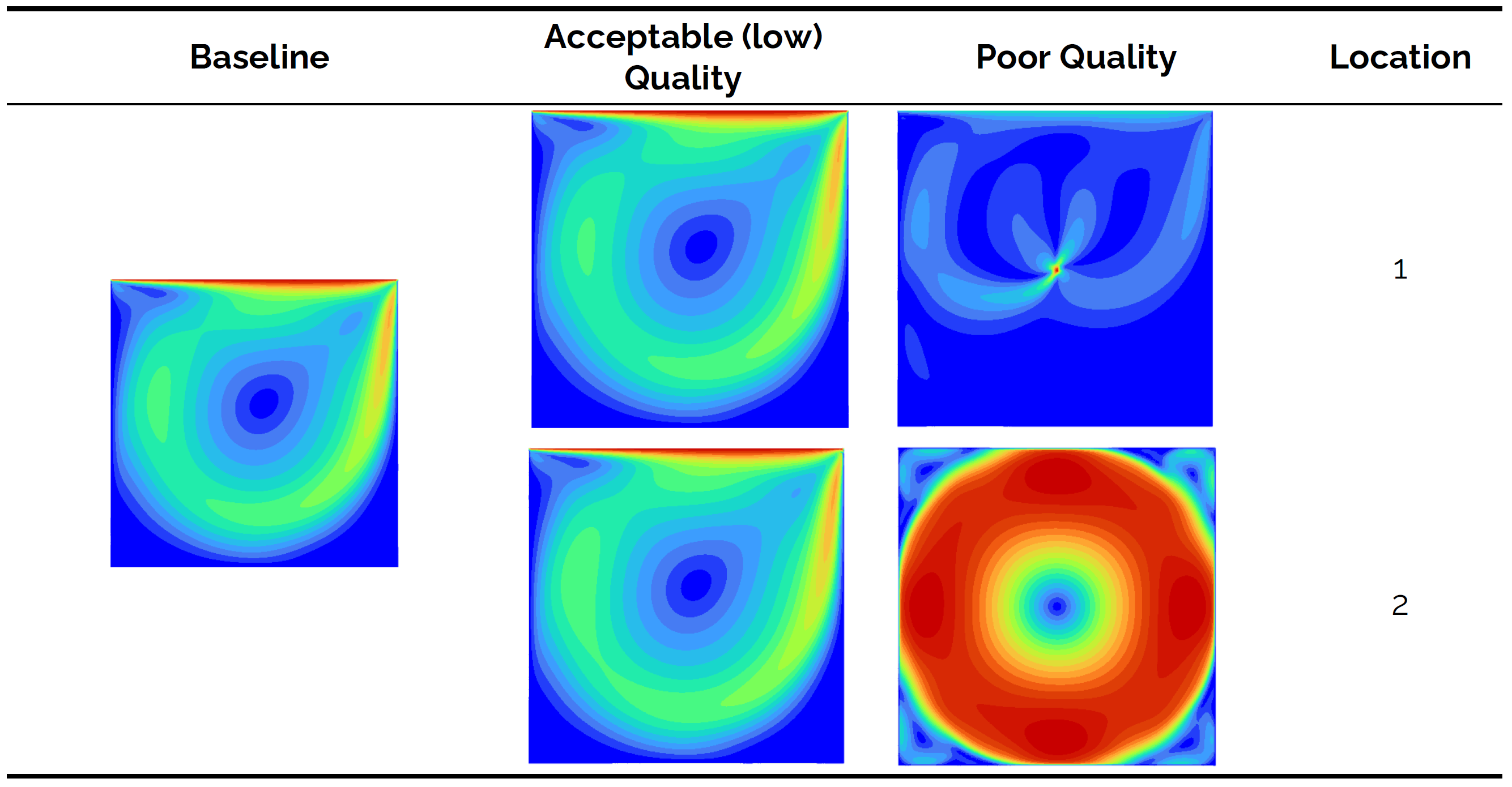

The following overview shows contour plots of the velocity magnitude for the control (baseline), as well as the two other grids. I have generated two grids at each location (location 1 in the centre of the domain and location 2 at the top right corner). I have generated these grids so that we have a case where we have low quality, which is still acceptable, and poor quality, which may affect your simulations negatively.

We see from the baseline that a vortex is formed in the centre of the domain. The Reynolds number isn't particularly high (it is 100 based on the length of the top boundary), so for all intents and purposes, this is a laminar flow. Let's look at the result for the low, but still acceptable, quality cases. Both locations 1 and 2 show that the results are slightly different to the control (if we inspect contour lines), but overall we can say that we still have obtained good results.

Looking at the poor quality case, however, we see that we get nonsensical results. For both locations 1 and 2, we get results that do not match the baseline, and for location 1, we can see that we get a singularity of some form that is located at the exact point where the poor mesh quality comes from. OK, so there is definitely an influence on the accuracy of the simulation, but what about the convergence rate? This is shown in the next overview:

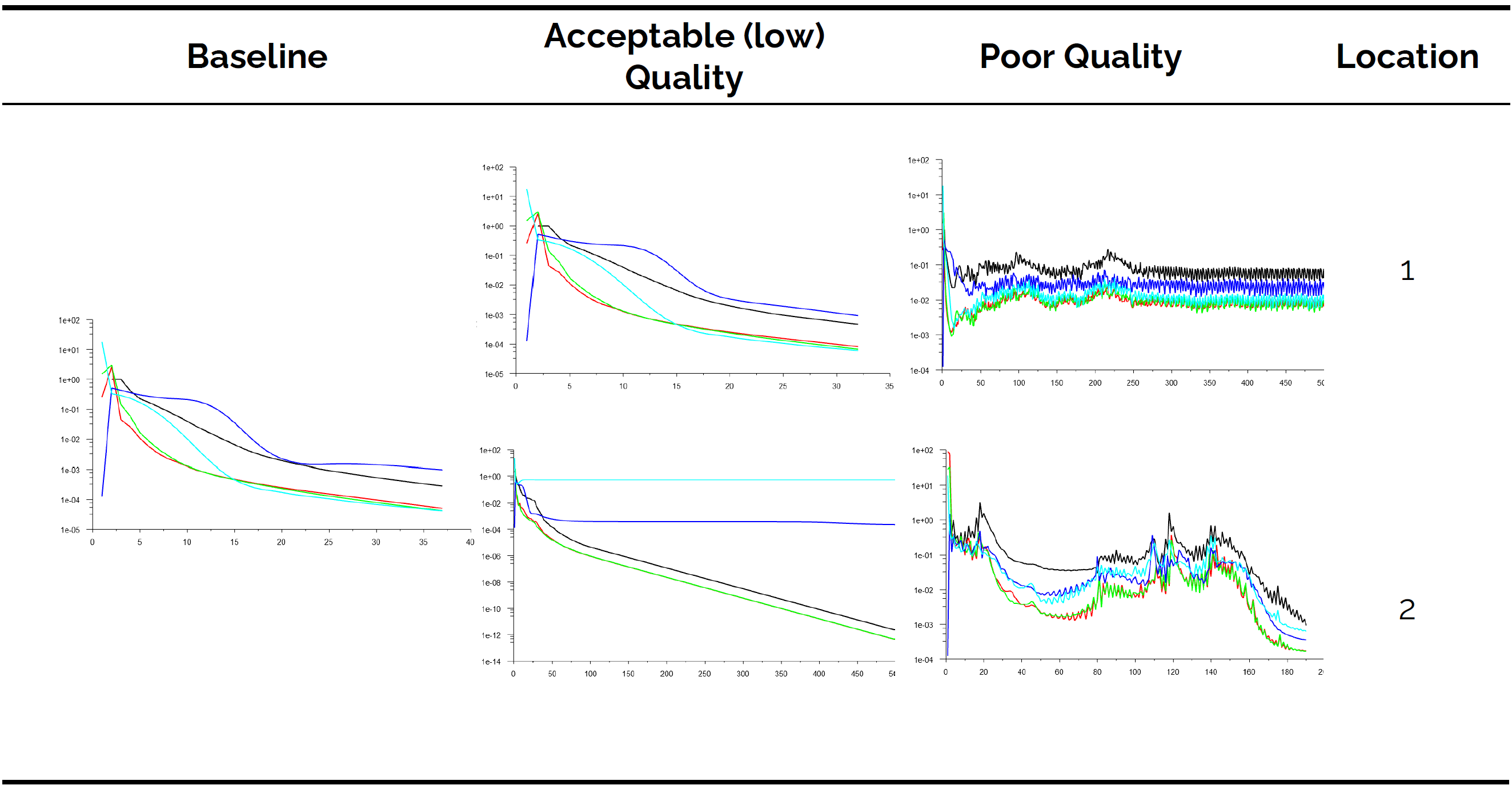

Here, we can see the residuals for the simulation. In general, looking at the residuals to judge convergence is rarely a good idea, but for simple cases without turbulence, you can typically get away with it. The baseline shows convergence after about 37 iterations. The exact value isn't important; we are interested in the trend.

Let's look at the low, but acceptable, quality case. For location 1, the flow is converging; no problem at all here. But at location 2, we see that the residuals for two variables have flatlined. Residual-based convergence checking would not be possible here, and we would typically resort to integral quantity-based convergence checking.

Now, let's turn our attention to the poor quality case. At location 1, there is no convergence. This isn't the biggest problem here. Looking at the residuals, they suggest that we are dealing with a turbulent flow, where residuals typically oscillate in exactly the same manner as we can see here on display. Even worse, at location 2, we get convergence. We know, from the previous contour plots, that we got non-physical results here; even so, we do get convergence in this case.

So what is going on here? Grid quality has the following properties:

- They reduce your convergence rate and may even result in no convergence at all.

- The accuracy may be affected, and you may even converge to non-physical results.

- Even though not shown here, a poor-quality mesh may be responsible for divergence.

To make matters worse, all it takes is just a single cell in which the quality is low. For the case study shown above, I just tampered with the quality of 1 cell. All it took was 1 cell to ruin the entire simulation. So, mesh quality affects the solution globally and not just locally where it occurs. Just like cancer, it spreads from one cell to another until the entire system is affected.

Thus, we have to be vigilant and ensure that we generate a mesh with the best possible mesh quality metrics. So, how do we know if a mesh is good or not? Well, we have mesh quality metrics that tell us exactly how good or bad our mesh is, and we will review them shortly. However, mesh quality is not just a function of the complexity of the geometry we try to simulate but also of the mesh element types we want to use. Let's take a look at them first, and then we will look at the 4 most influential mesh quality metrics.

The group of 10: The fundamental building blocks of any mesh

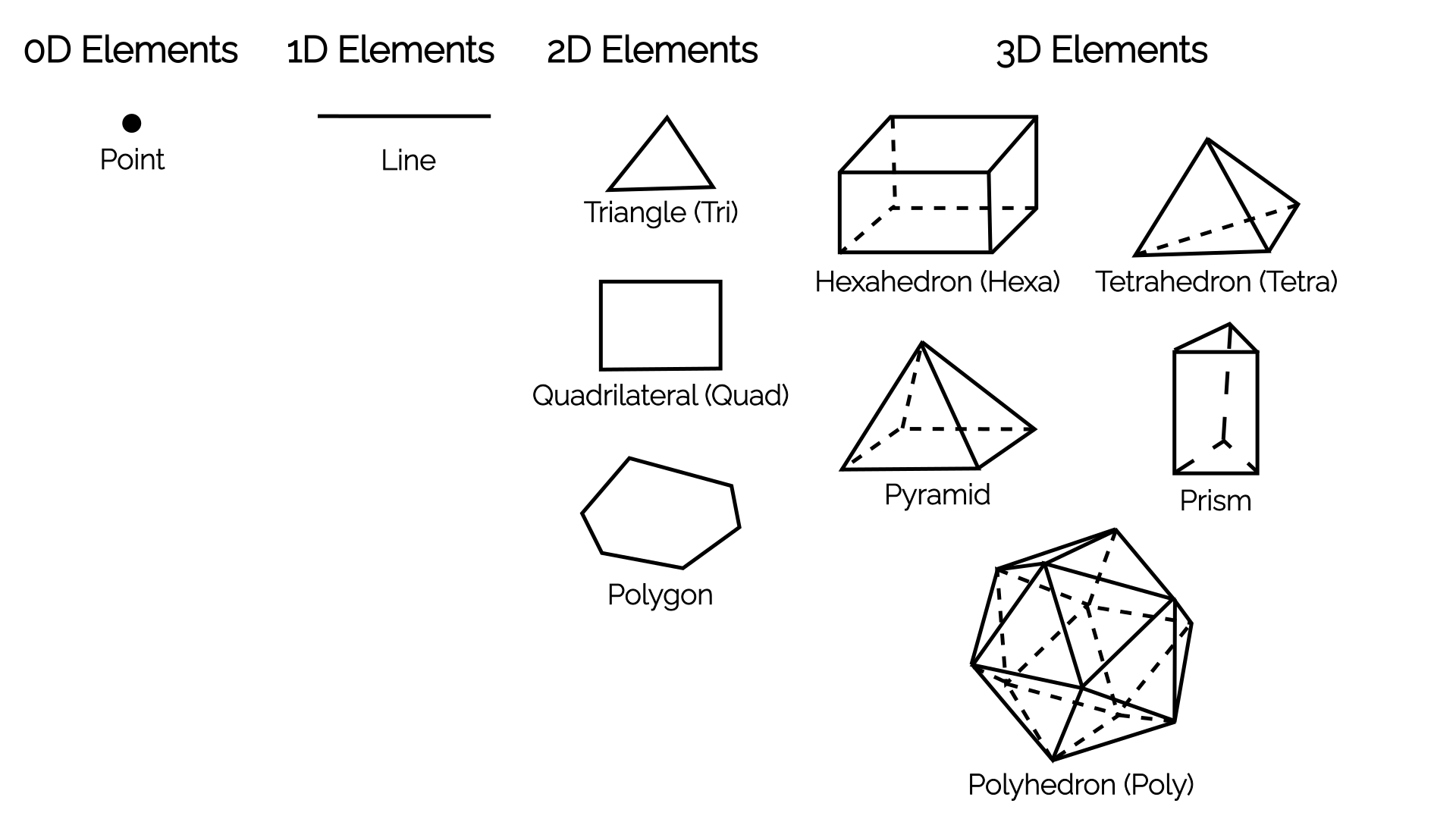

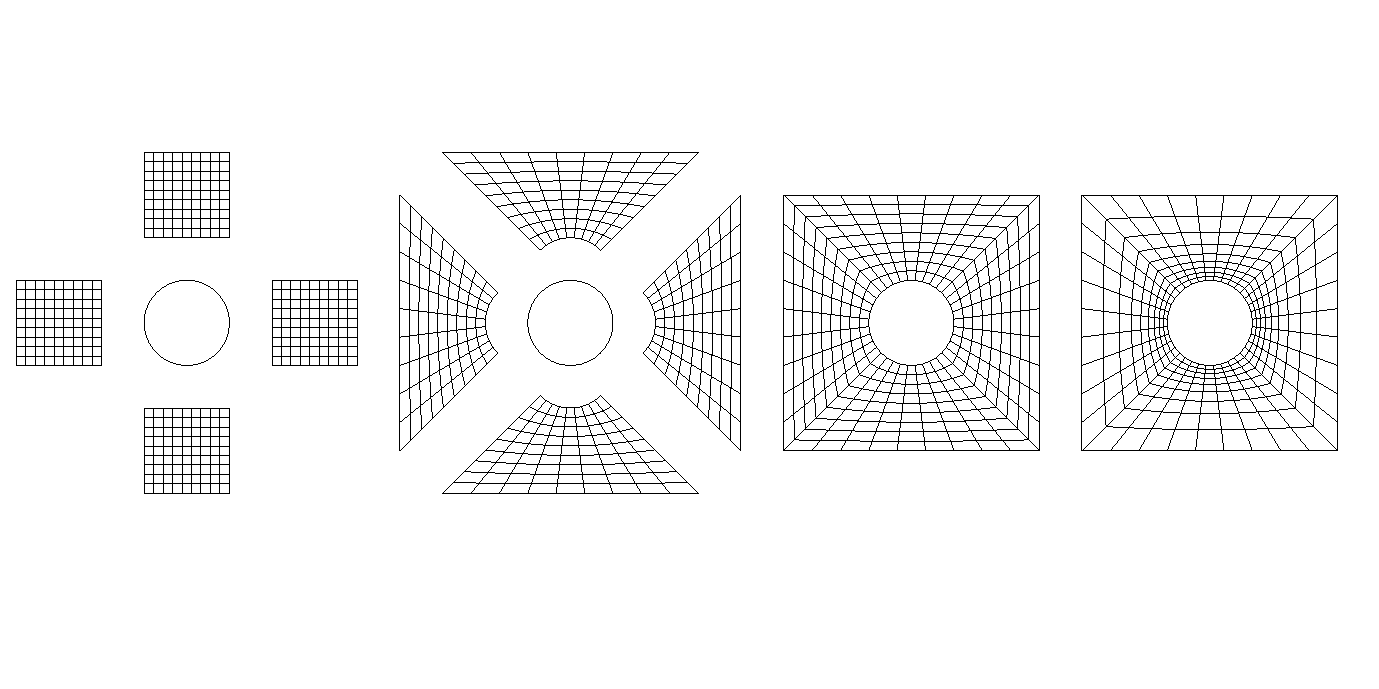

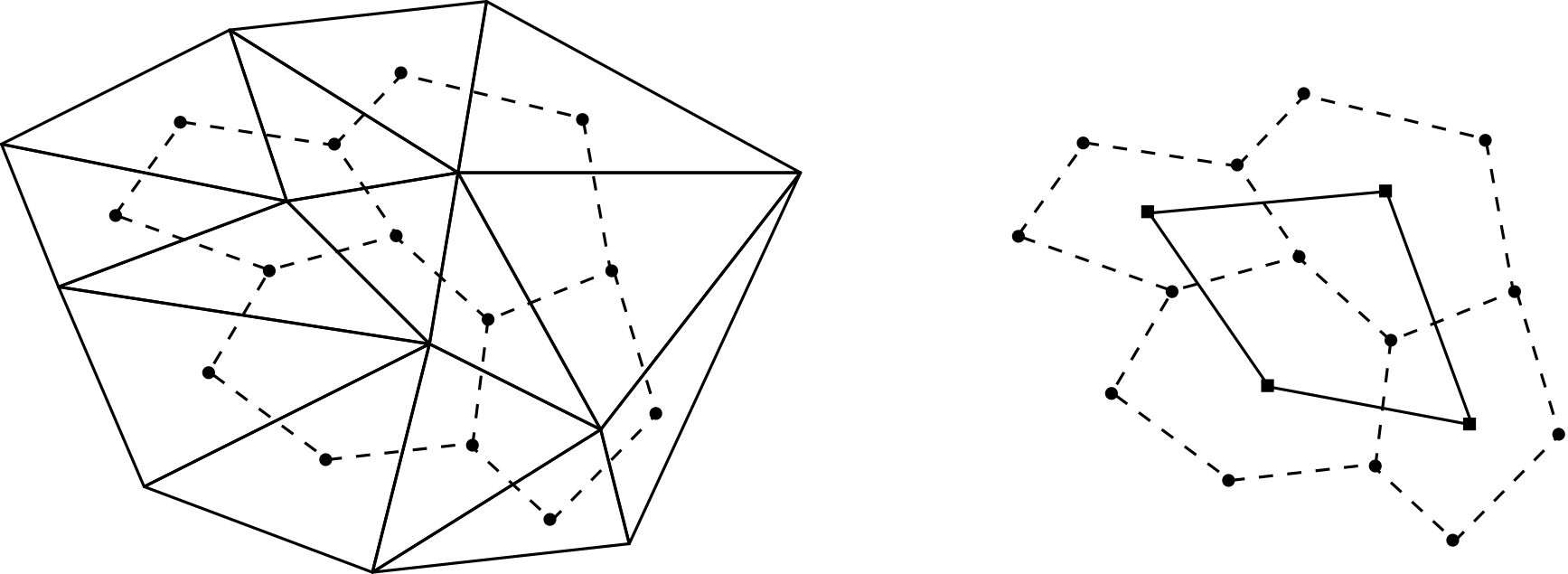

All you ever need is 10 elements. These are depicted in the following figure:

The overview shows elements grouped by their dimensionality. In 0D, we just have a single point, and in 1D, we have a single line. You may ask yourself, what is the point of having a 0D point? Well, if we write an [katex]N[/katex]-dimensional CFD solver, then our boundary conditions will be [katex]N-1[/katex]. So, for a 1D CFD solver, we would be using lines in the domain and [katex]N-1=1-1=0[/katex], i.e. 0D points on the boundaries.

Of more interest are the elements in 2D and 3D. The standard elements we have come to accept for 2D are the triangle and quad elements, while in 3D, we have tetra, hexa, prism, and pyramid elements. In 2D and 3D combined, we have 6 standard elements. However, we also have polygons in 2D and polyhedra in 3D. Polygons can represent any shape in 2D (including triangles and quads), and polyhedra can represent any shape in 3D (including tetra, hexa, prism, and pyramid elements).

So, you could say, all you ever need are just 4 elements: a point, a line, a polygon, and a polyhedron. I would agree with that (and, in fact, I would write my CFD solver in exactly this way, as I just have to implement a single element type and be able to work with any mesh element type), but, for legacy reasons, we like to keep the other 6 standard elements around. Some mesh generators can't generate polyhedra, some mesh formats can't represent them, and so we keep our inferior standard elements around.

They do serve a good educational purpose, though. If I told you that pyramids are a nightmare to deal with, then you may ask yourself, why do we use them in the first place? Well, there is a very specific use case for which we use them, even though we may prefer to avoid them. Can you spot it?

If I told you that a quad-dominant surface mesh is better than a triangle-dominant surface mesh, and that tetrahedra have the ability to fill out any 3D space without any issue, while hexahedra struggle, can you see why Pyramids are good? Both the pyramid and prism elements possess a property that the other elements don't have: they are composed of both quad and triangle surfaces.

If I have a quad-dominant surface mesh and I want to connect that to my tetra-dominant volume mesh, then I need to connect my quads from the surface mesh with triangles from my tetrahedra. Thus, I need a connecting element that has both triangle and quad surfaces. This is why I need pyramids. Prism elements serve exactly the same purpose, only that they connect a triangle surface mesh with tetrahedra in the far field, while allowing for nicely stacked layers between the surface and far field mesh.

These layers, often referred to as inflation layers, are important, as we will see later, to resolve the turbulent boundary layer.

OK, at this point, we need to talk about mesh quality metrics to get the full picture. We will then see how different mesh elements will directly influence the mesh quality, and this will help us appreciate which mesh generation technique may be best suited for the cases we want to simulate.

Mesh quality - your biggest enemy

I have already stressed that it is your mesh quality that will make or break your simulation. If you then decide to be a good CFD practitioner and you want to generate a mesh with high quality, you might look at what mesh quality metrics are available and realise that there are way too many. Yes, if you open any serious mesh generator, you will likely find 20 - 30 different mesh quality metrics. Does it mean we have to check each?

Thankfully, no! Mesh quality metrics are interrelated. You change one, and others will also change. Depending on the mesh quality metrics we look at, it is usually impossible to get a good quality with one metric and then a bad quality with another one. They typically change together, and if you have generated a bad mesh, you won't be able to cherry-pick one or two quality metrics that look good and then claim that your mesh is perfect, despite your simulations diverging.

There are exceptions, and some mesh quality metrics change independently. Regardless, in my opinion, there are only 4 quality metrics you should pay attention to when inspecting your grid. These are:

- Orthogonality or non-orthogonality

- Skewness

- Aspect ratio

- Area or volume ratio

All of these mesh quality metrics will influence your simulation differently, and in the next sections, we will define and establish the influence each mesh quality metric has on your simulation.

Orthogonality/Non-orthogonality

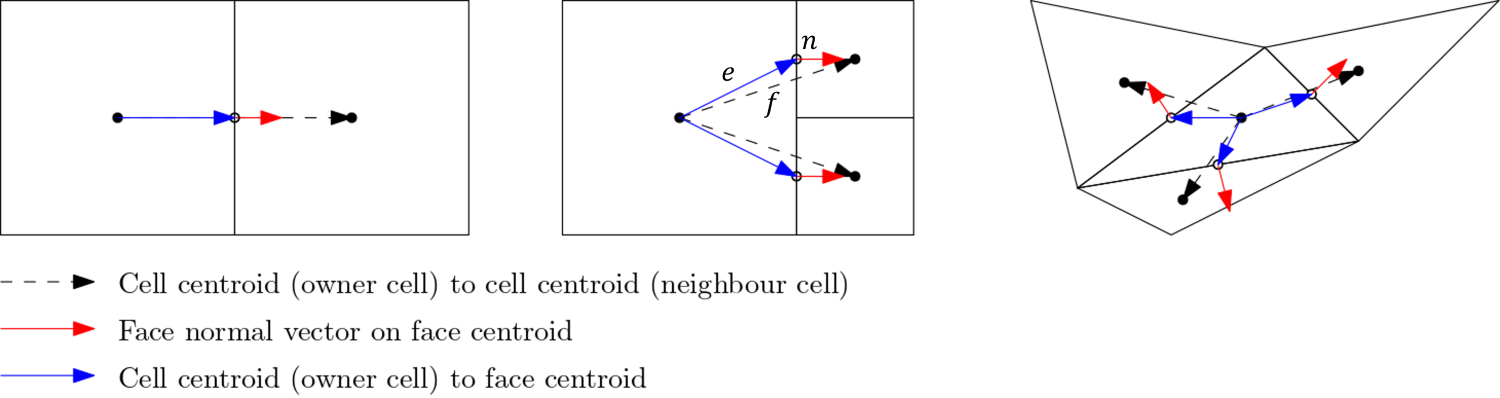

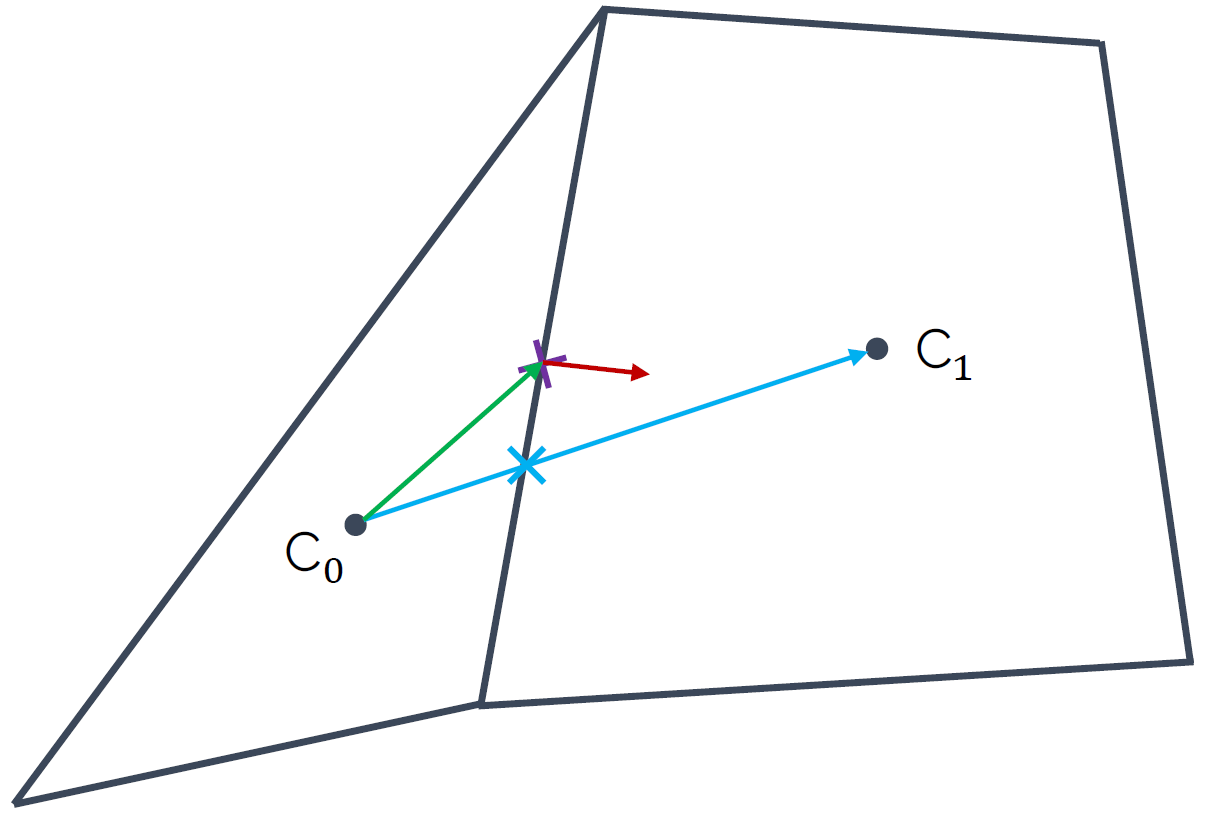

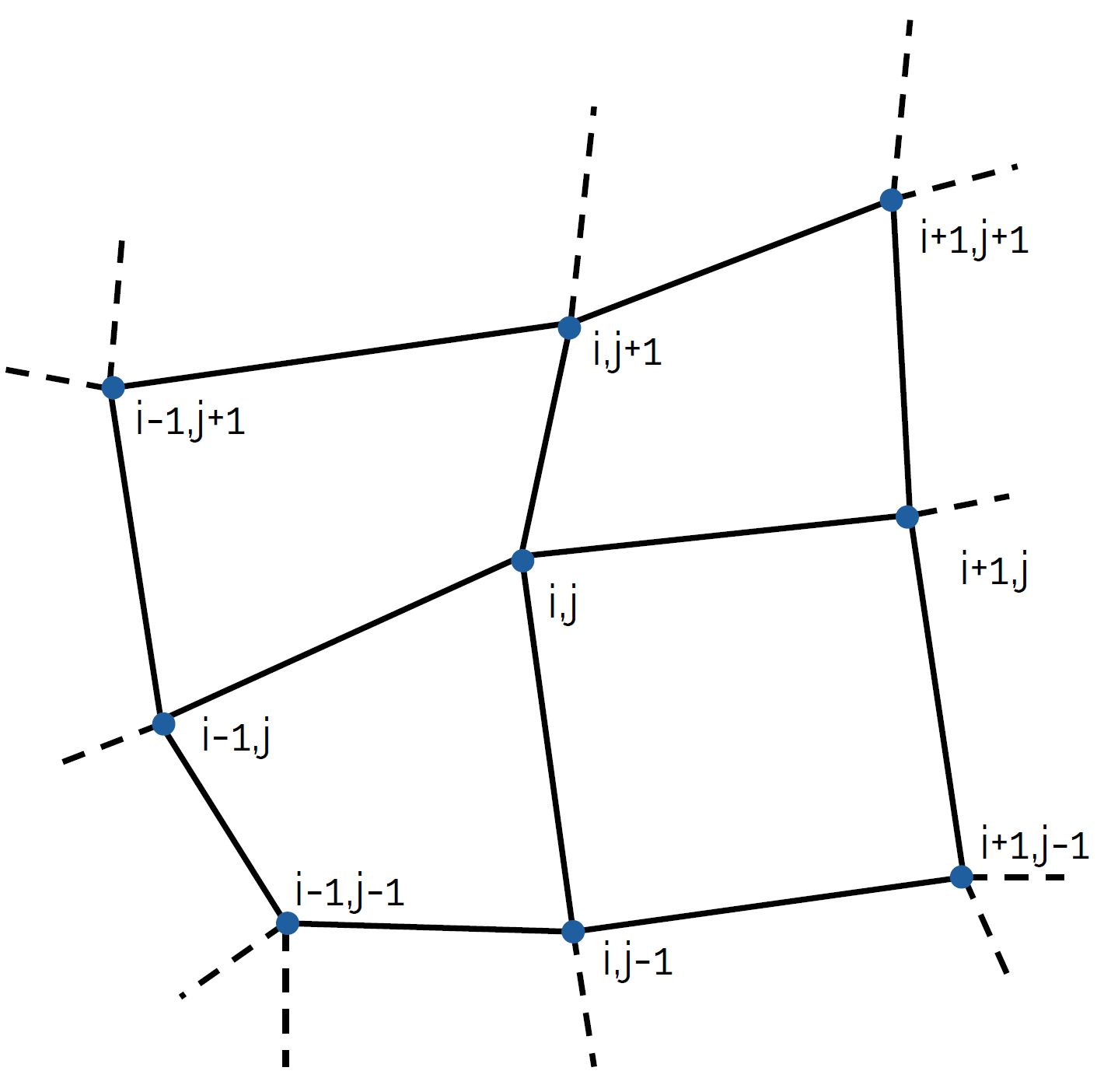

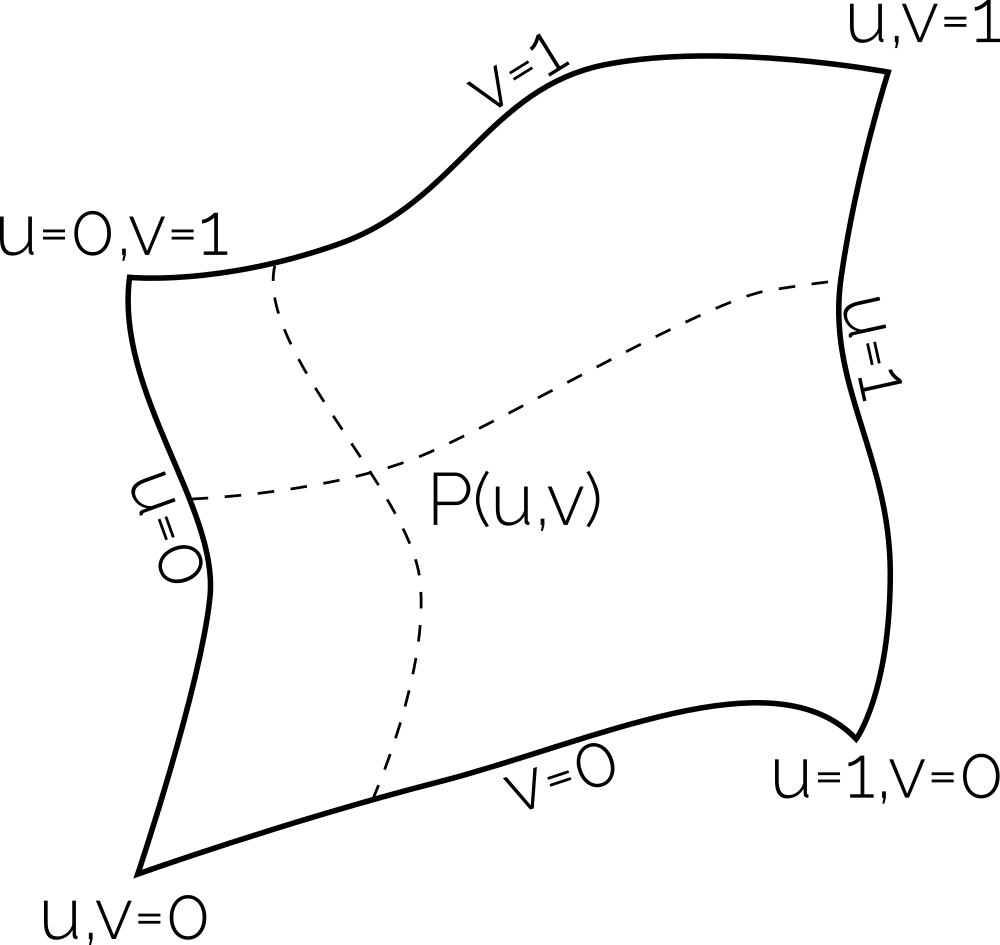

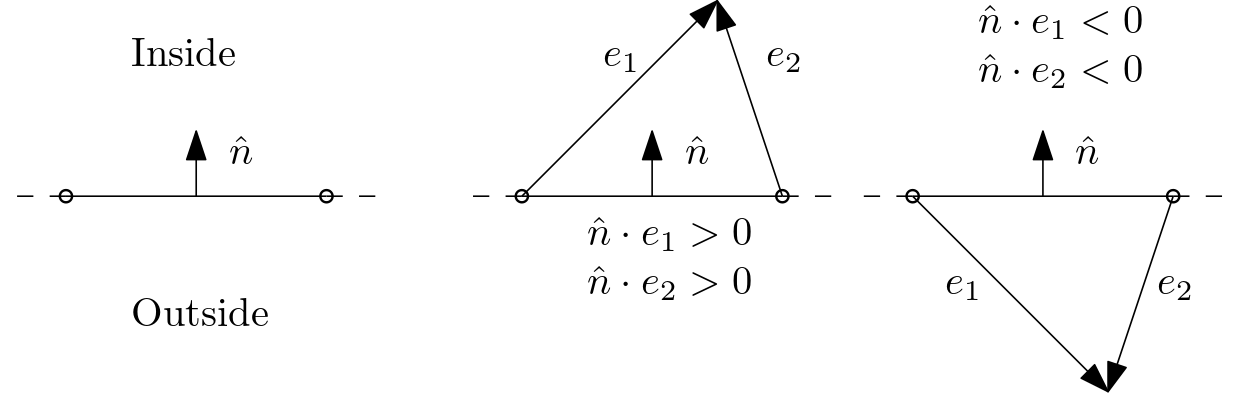

First up, let's talk about the orthogonality property. For this, let us look at the following schematic:

Here, we define three vectors:

- The vector [katex]\mathbf{f}[/katex], shown as a dashed black line, which connects the centroids of two adjacent cells.

- The vector [katex]\mathbf{e}[/katex], shown in blue, which connects a cell centroid with the midpoint of a face.

- The vector [katex]\mathbf{n}[/katex], shown in red, which represents the surface normal vector of the face which connects two adjacent cells.

We use these three vectors to compute the so-called orthogonality property, which is given as:

Q_{Orthogonality}=\min\left(\frac{\mathbf{n}A\cdot \mathbf{f}}{|\mathbf{n}A|\cdot |\mathbf{f}|},\frac{\mathbf{n}A\cdot \mathbf{e}}{|\mathbf{n}A|\cdot |\mathbf{e}|}\right)

\tag{1}

Here, [katex]A[/katex] is the area of the face that connects two adjacent cells. Let's look at what this equation evaluates to. Ignoring the area [katex]A[/katex] for a moment, we see that both numerators in Eq.(1) perform two dot products, or scalar products. We can geometrically interpret the dot product as:

\mathbf{n}\cdot\mathbf{f} = |\mathbf{n}|\,|\mathbf{f}| \cos\theta\tag{2}Here, [katex]\theta[/katex] is the angle between the two vectors. We can solve for the angle [katex]\theta[/katex] and get:

\frac{\mathbf{n}\cdot\mathbf{f}}{|\mathbf{n}|\,|\mathbf{f}|} = \cos\theta\tag{3}Having the magnitude of both vectors in the denominator means that we are now getting a normalised result, i.e. the left-hand side will always evaluate to something between -1 and +1. This is what we would expect for a cosine function. We know that the [katex]\cos(0^\circ)=1[/katex], so if the dot product evaluates to 1, that means that there is no angle between our vectors and they are overlapping.

Looking back at the figure given above, this is the case for the cell arrangement shown on the left. Here, we have all vectors, i.e. [katex]\mathbf{f}[/katex], [katex]\mathbf{e}[/katex], and [katex]\mathbf{n}[/katex] on top of one another. Since there is no angle between these vectors, and we have, as a result, a (normalised) dot product of 1, this means that Eq.(1) must also evaluate to 1, and we have [katex]Q_{orthogonality} = 1[/katex].

However, once we have angles between our vectors and they are no longer aligned with one another, then we get scalar products that are less than one, and as a result, our orthogonality measure will go down. This is shown in the figure above for the cell arrangement in the centre and on the right.

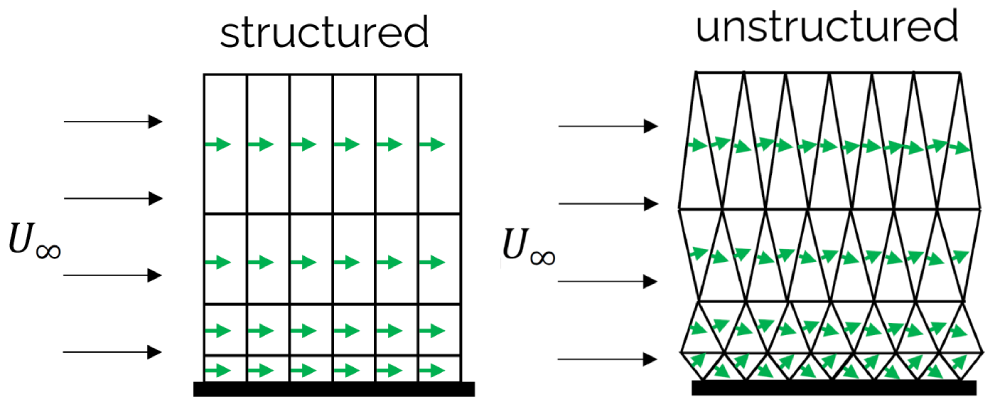

What we can see from this figure as well is that structured grids, consisting of quad (2D) and hexa (3D) elements, will promote high orthogonality out of the box. Sure, curvature in our geometry means that we will get some non-orthogonality, but by itself, quad and hexa elements preserve a high orthogonality. Triangles (2D) and tetra (3D) elements, on the other hand, naturally produce lower orthogonality values, and great effort has to be taken to increase their orthogonality values.

Therefore, we can say that a mesh with perfect orthogonal quality must have a value of 1. A mesh with bad orthogonal quality will approach a value of 0.

Sometimes, you will find people using non-orthogonality instead of orthogonality as a quality metric (yes, I am looking at you, Pointwise, and yes, I know that you changed your name after being bought by Cadence, and I refuse to call you by your full name). This is simply defined as:

Q_{non-orthogonality} = 1 - Q_{orthogonality}\tag{4}In our example above, if we have perfectly aligned vectors, then we have [katex]Q_{orthogonality}=1[/katex] and therefore [katex]Q_{non-orthogonality}=0[/katex].

OK, we know how the orthogonality is defined, so what? Why bother, and why should I care? Excellent question, and to my surprise, no one really describes in the literature what the orthogonality does, and what influence it has on our solution. So let's look at that, but first, we need to quickly discuss numerical integration to make sure we are all on the same page.

Imagine you want to integrate a function but you have no idea what the function is. All you have is several points, which you may have obtained from experimental measurements. What do you do? Well, numerical integration. If you go back to high-school or undergraduate math, you may remember some of the numerical integration techniques, such as the midpoint, trapezoid, or Simpson rule.

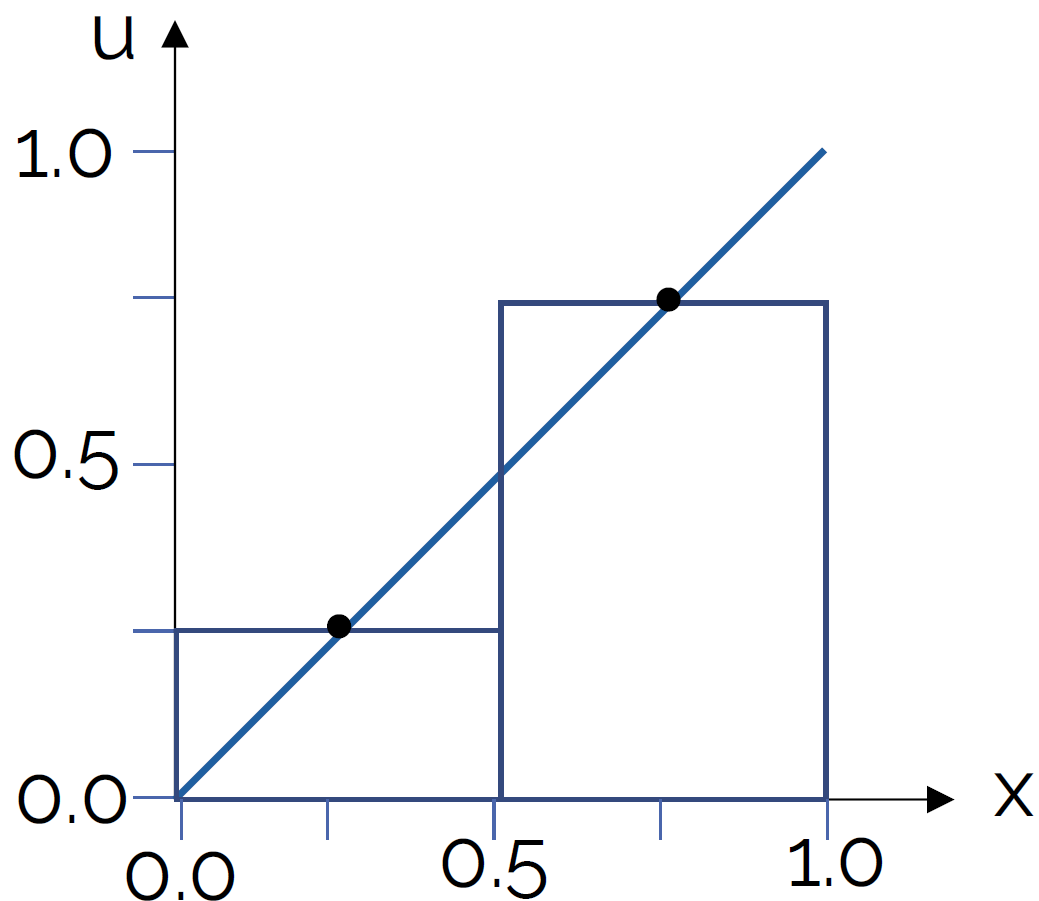

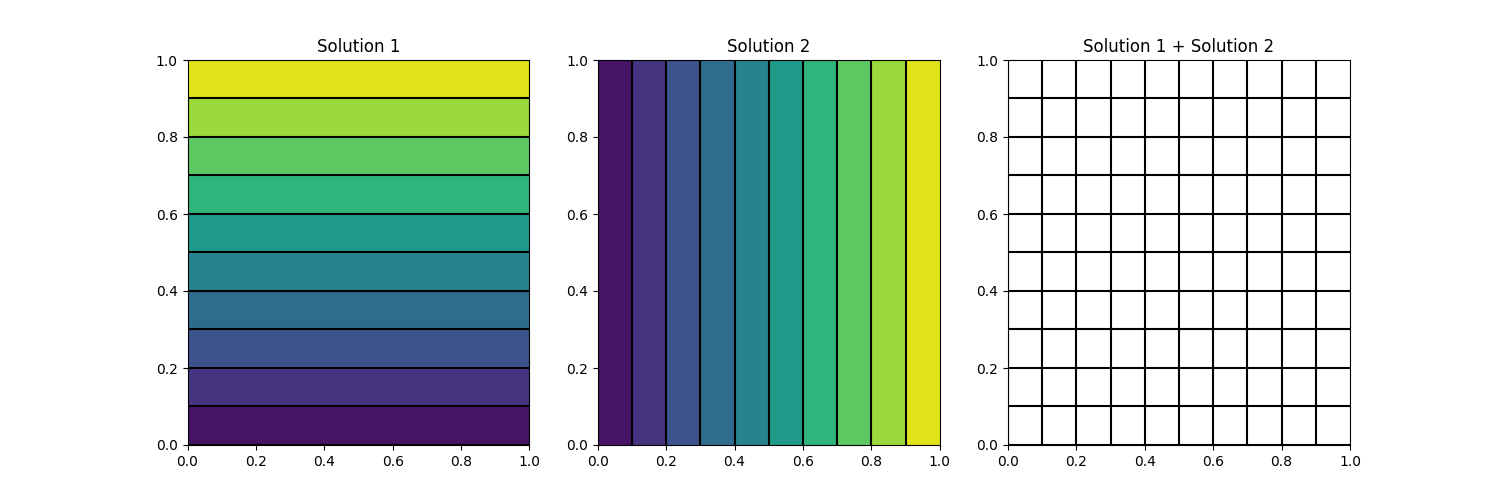

Let's take the midpoint rule, and see how we can use that to approximate the area under the function [katex]x=y[/katex] in the interval 0 to 1. Let's say we approximate that with two rectangles/bar elements as shown in the following figure:

The height of each element is such that the function (or point measurement from an experiment) coincides with the centre of the rectangle/bar element. Then, the idea is to calculate the area of each rectangle/bar element and to sum up these individual areas, which should give us a close prediction of the area under the curve.

The important part here is that we are placing the rectangles/bar elements in such a way that their vertical centerline coincides with the function or point measurements. This means that we will over- and under-predict some area left and right to the function value or point measurement, but these should balance and overall cancel out, as long as the width of our rectangles/bar elements is small enough.

Let's evaluate this integral first analytically, and then numerically. The analytic solution for the integral of [katex]y=x[/katex], or [katex]f(x)=x[/katex] in the interval from 0 to 1 can be obtained as:

\int_0^1 x\mathrm{d}x=\frac{1}{2}x^2\bigg|_0^1=\left(\frac{1}{2}1^2 - \frac{1}{2}0^2\right)=0.5\tag{5}So we would expect the exact area of [katex]y=x[/katex] between 0 and 1 to be 0.5. Just looking at the figure above, we could have probably reasoned that with some intuition without having to evaluate the integral, i.e. we essentially integrate the area under a triangle. If we take another triangle of the same size, and we place it in the upper left part of the plot, then we have two triangles that make a square with an edge length of 1. Therefore, the square must have an area of 1, and so two equally sized triangles must have an area of 0.5.

Ok, let's evaluate the integral numerically. This is done with the following formula:

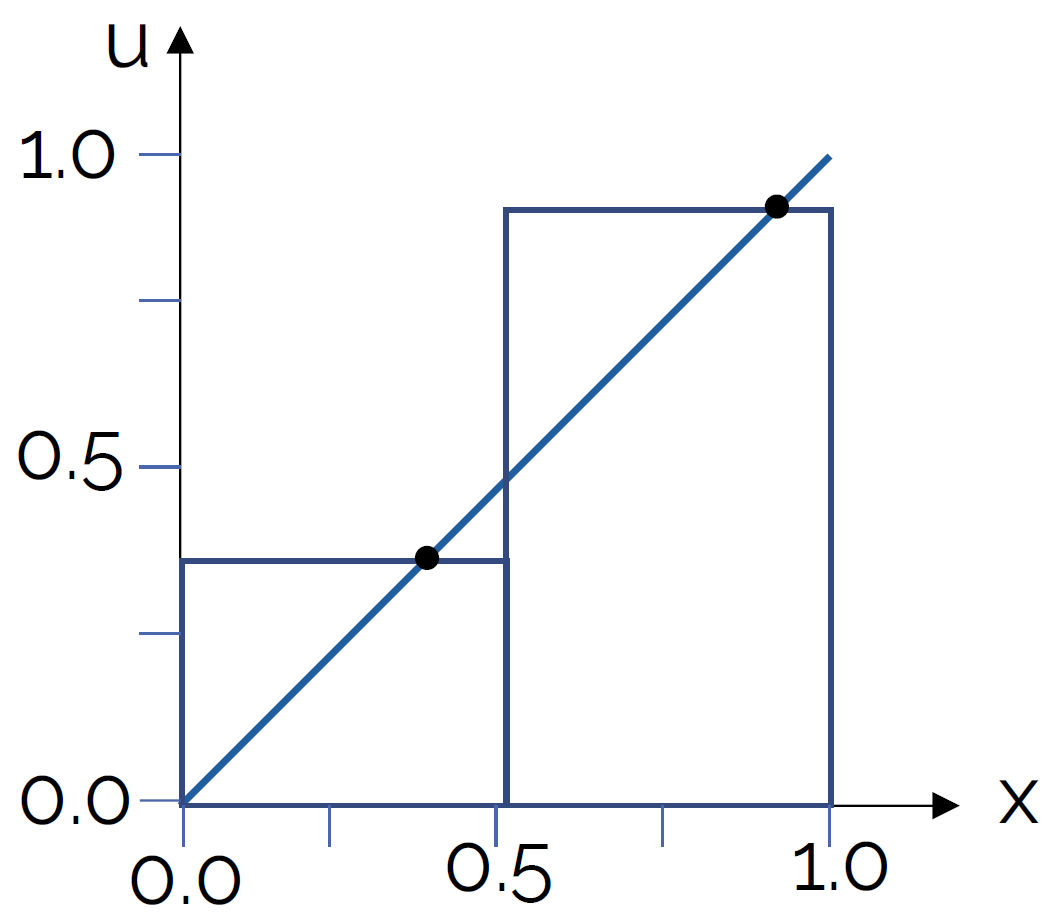

\int_0^1 x\mathrm{d}x\approx \sum_{i=1}^2 f_i\Delta x = 0.25\cdot 0.5 + 0.75\cdot 0.5 = 0.125 + 0.375 = 0.5\tag{6}Lo and behold, the numerical and analytic evaluation produce exactly the same result. Fantastic! We can throw everything we know about analytic maths out the window and rely entirely on numerical approximations. What's the point of integrals, right? Well, let's look at another example. In the following figure, I have violated the midpoint rule by evaluating the function not at the midpoint of the rectangles/bar elements, but rather some distance to the right. This is shown in the following:

Let's say that the function values, or height of the rectangles/bar elements, now evaluate to 0.4 and 0.9, respectively. Now my numerical integration becomes:

\int_0^1 x\mathrm{d}x\approx \sum_{i=1}^2 f_i\Delta x = 0.4\cdot 0.5 + 0.9\cdot 0.5 = 0.2 + 0.45 = 0.65\tag{7}Hmmm, you might want to open that window and get back all of your analytical math stuff you just threw out of it. Make sure to scoop up all the integrals, you'll need them later ...

So what happened here? We have slightly moved away from the centre of the rectangles/bar elements, and now we have a pretty bad approximation of the area under the curve. This makes sense, as the difference in the area to the left and right of our rectangles/bar elements no longer balances.

But wait, we have only used two elements to numerically evaluate the area, surely we can just throw more than 2 elements at the problem and eventually get the right answer, no? Ok, let's try:

- With a total of 10 elements, we get a value of [katex]\int_0^1 x\mathrm{d}x\approx \sum_{i=1}^{10} f_i\Delta x = 0.53[/katex]

- With a total of 100 elements, we get a value of [katex]\int_0^1 x\mathrm{d}x\approx \sum_{i=1}^{100} f_i\Delta x = 0.503[/katex]

So, well, yes, if we just throw enough resources at our problem, we can get pretty close to the real value, though we will only asymptotically approach the exact value (I'm an engineer by training, I'm ok with asymptotes, if this gives you anxieties, perhaps CFD isn't for you ...).

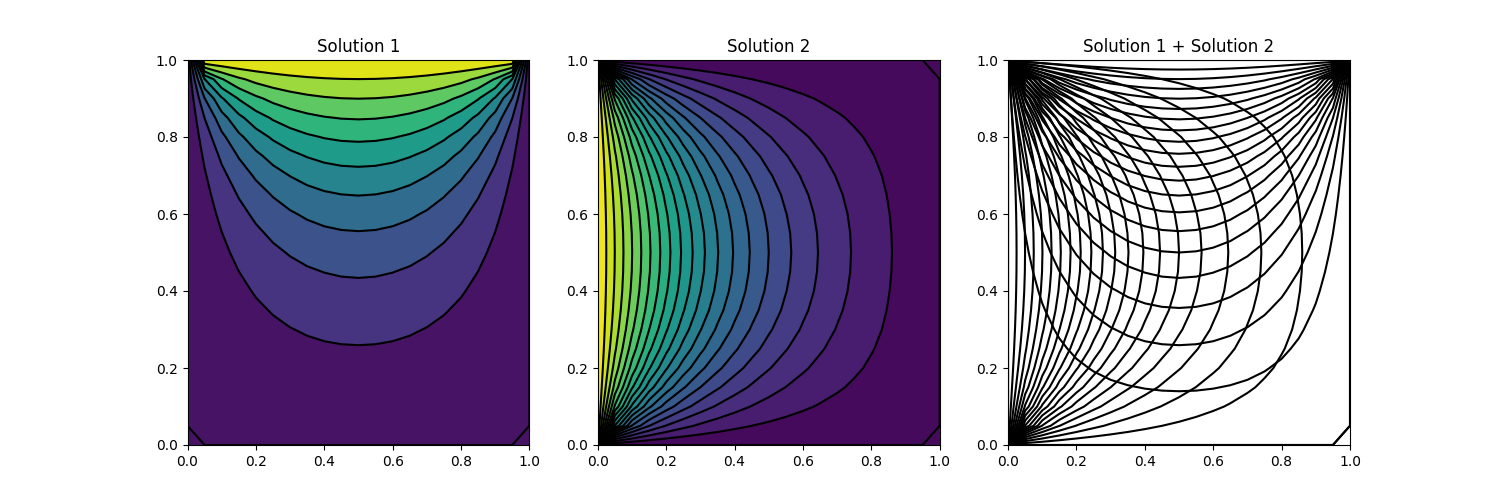

OK, so how does this all relate to orthogonality or non-orthogonality in our mesh? Well, let's look at the following mesh arrangement:

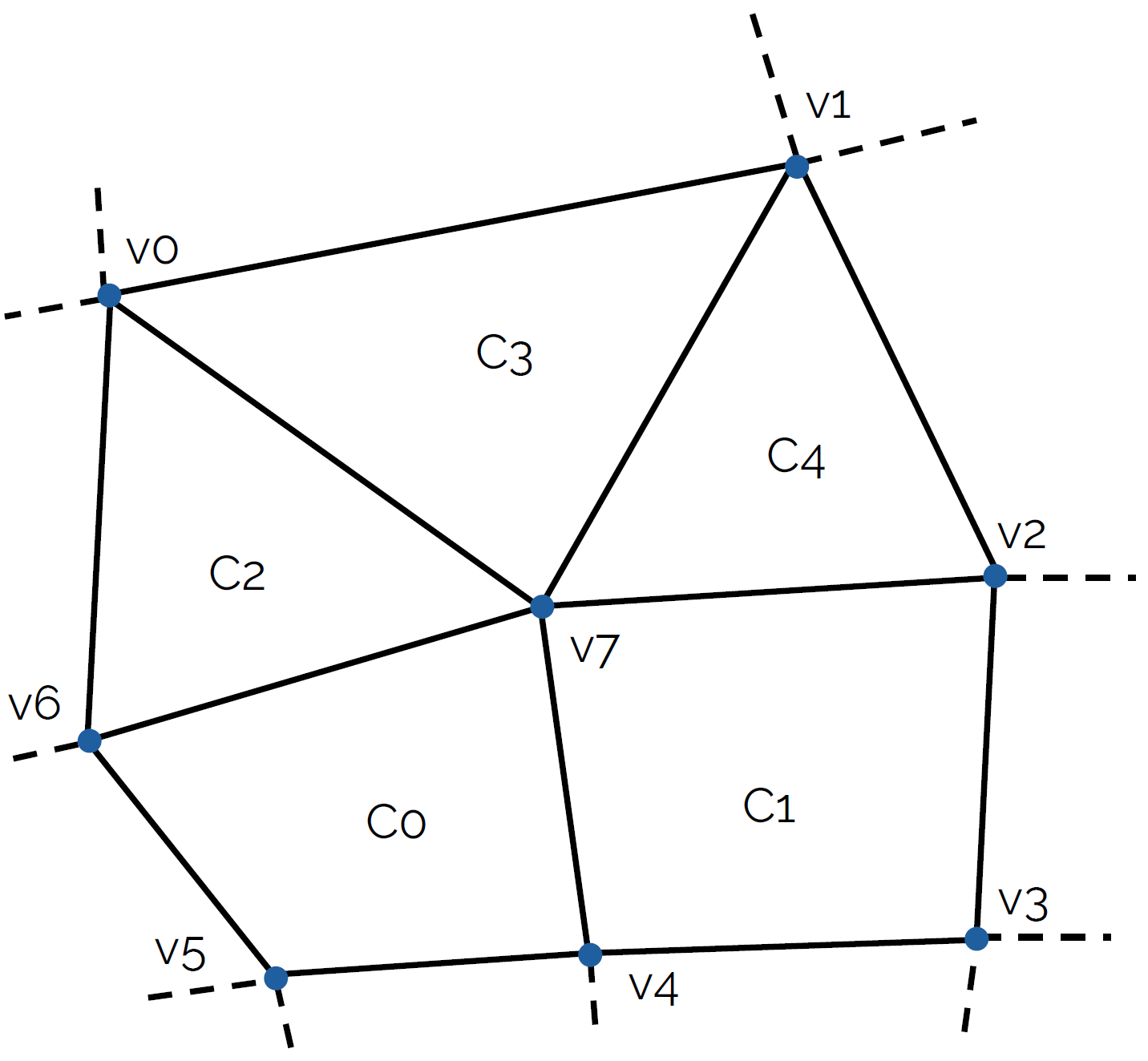

Imagine we discretise now our governing equations, most likely the Navier-Stokes equations, and we solve them on a mesh that contains the above shown cell arrangements. Given that we have a triangle in there, we have lost the ability to express our mesh as a structured mesh, and so we can only use the finite volume method here (or, finite element method if you feel so inclined) to discretise the equations.

Let's look at the convective term [katex](\mathbf{u}\cdot\nabla )\mathbf{u}[/katex] and discretise it in 1D. We could do it for two or three dimensions as well, but the results will be exactly the same with just more terms, so I am keeping it simple here. Using the finite volume discretisation, we get:

\int_V u\frac{\partial u}{\partial x}\mathrm{d}V = \int_S \mathbf{n}\cdot u \,\mathrm{d}S \approx \sum_{i=0}^{nFaces}\mathbf{n}_i u_i A_i

\tag{8}

First, we take the convective term, i.e. [katex]u(\partial u/\partial x)[/katex] and integrate that over a control volume, which coincides with the volume (area in 2D) of our computational cell (i.e. the two that we see above in the figure). Then, we use the Gauss theorem to transform the volume integral into a surface integral, which we do as surface integrals are conservative while volume integrals aren't. If you want to know why, have a look at Why is the Gauss theorem king?.

Also, if you need a refresher on the finite volume method in general and how to use it to discretise the various terms in the Navier-Stokes equations, I have written an entire section on that, which you can find in my article How to discretise the Navier-Stokes equations.

We approximate the surface integral by summations, where we are now looping over each face (3 for the triangle, 4 for the quad) and then compute the product given after the summation symbol, i.e. [katex]\mathbf{n}_i u_i A_i[/katex]. Here, [katex]\mathbf{n}_i[/katex] is the surface normal vector, pointing in the normal direction of the surface (this is the red vector shown in the figure above). [katex]A_i[/katex] is simply the surface area (or length in 2D). What about [katex]u_i[/katex]?

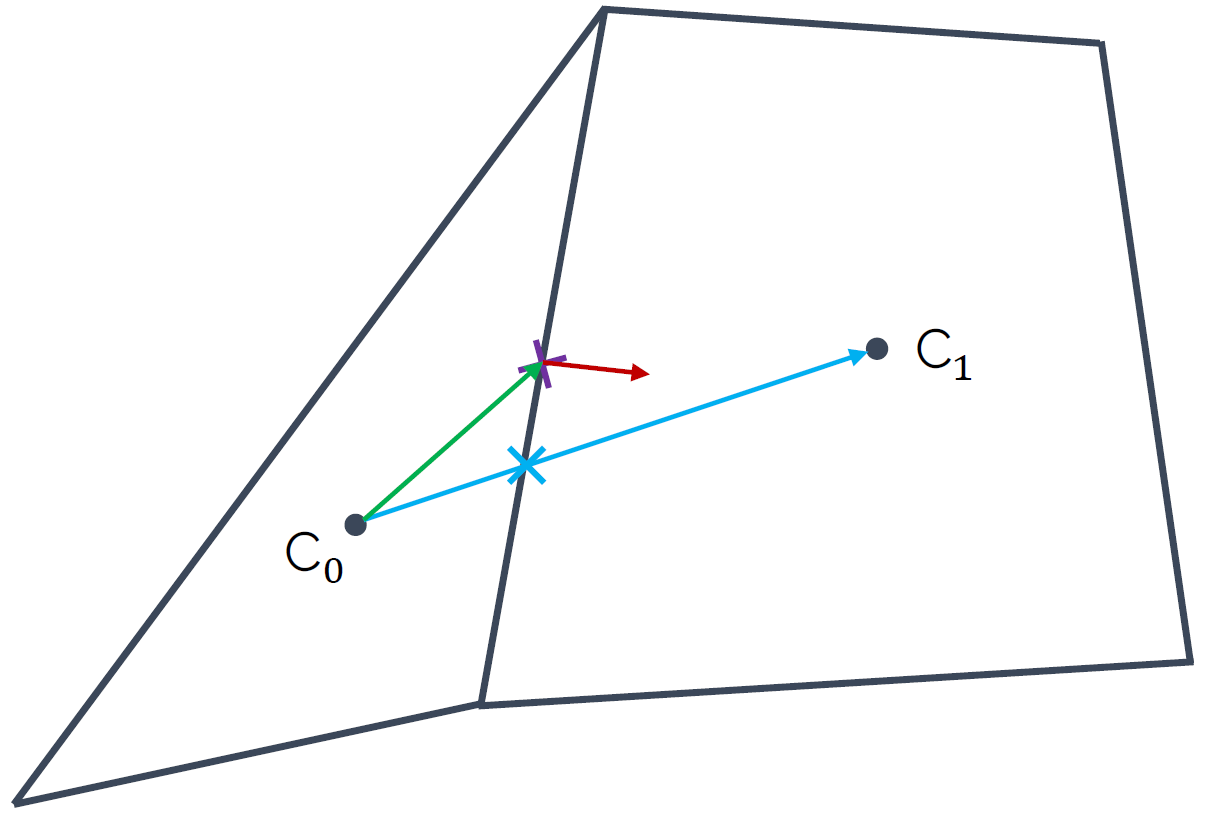

Look at the figure above again. [katex]u_i[/katex] is the velocity component at the face centre, i.e. where the normal vector (shown in red) is located. But we only have the velocity available in each centroid; this is where we store our flow variables like velocity, pressure, temperature, and so on. Thus, we need to interpolate it to have it at the face, which we do by taking a weighted average of the velocity component [katex]u[/katex] from both centroids, i.e.:

u_f = w_1 u_{C_0} + w_2 u_{C_1}\tag{9}The weights are chosen so that the value [katex]u_f[/katex] is obtained on the face. But where is this value of [katex]u_f[/katex], i.e. the velocity component on the face [katex]f[/katex], now available? Look again at the figure above, we get [katex]u_f[/katex] where the blue vector intersects the face. This is marked with the blue cross, and we can see that this is not in the centre.

Bringing this back to our discussion on numerical integration and the inaccuracies we get by evaluating integrals away from the midpoint of the rectangles/bar elements, we see that non-orthogonality in our mesh results in flow variables being evaluated off-centre on cell faces, resulting in inaccurate surface integral approximations.

In other words, the more non-orthogonality we have in our mesh, the more inaccuracy we will get in our results. For this reason alone, despite having developed sophisticates solvers that can work with pretty much any mesh you throw at them, if we had a choice and we lived in an ideal world, people would still use structured grids, even for complex geometries, just because it gives you an edge in terms of accuracy.

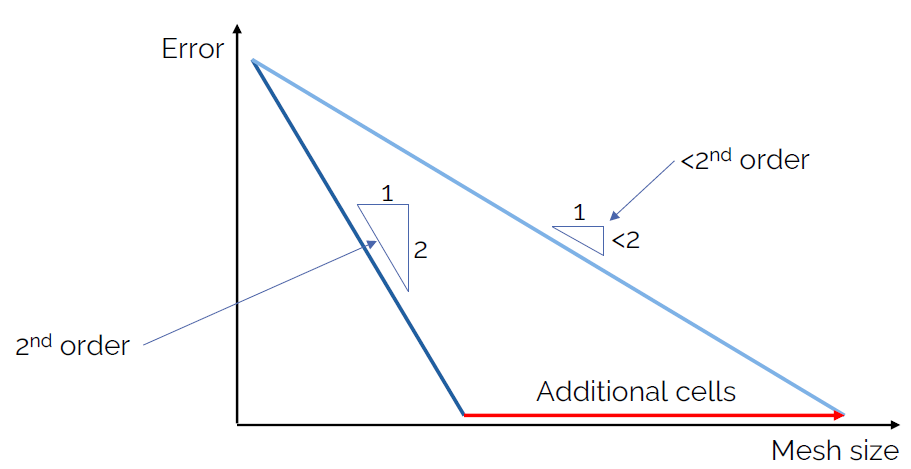

We can summarise the effect of non-orthogonality in our mesh with the following schematic plot:

If we assume that we could measure the error (i.e. we have an analytic solution available, which is the case for the simplest of flows), then we can compare our solution to it and compute what the error is. The is what is plotted on the y-axis. On the x-axis, we have the number of cells we use in our mesh.

Looking at the dark blue line first, which is on the left of the plot, we see that for every step we take to the right, we go 2 steps down in our plot. We can say that the slope is 2, and this means that whatever numerical scheme we used to obtain this result is second-order accurate. This is something you can see very well for yourself if you implement various first- and second-order numerical schemes for the advection equation, for which we have an analytic solution available.

Ok, so now, let's introduce some non-orthogonality. We said that this will make our result less accurate, but we also saw that if we just use more integration points (or, more cells in our mesh), then we will be able to eventually reach the same result, at least asymptotically (at least in theory, in practice, we make so many approximations that we won't see this asymptotic behaviour). This is the light blue line in the figure above.

As a consequence, we can see that if we go one unit to the right, we will not be able to go down 2 units, and thus, the order of our scheme has dropped from second-order to whatever value we are obtaining now from this plot. This, non-orthogonality manifests itself by reducing the order of our numerical schemes.

If you carry out a grid dependency study, you will get, among other results, also a measure for the numerical order. Thus, you can directly compute the order that you actually achieved in your simulation. You can get unrealistic values for the order if you don't carry out the grid dependency study correctly, so keep this in mind.

Finally, if we talk about non-orthogonality, you might have read a bit more, and you know about non-orthogonal correction. What is this all about?

We have looked at what happens when we interpolate a velocity field from centroids to the faces, or, let's use a generic variable [katex]\phi[/katex], which could be velocity, pressure, temperature, etc. But, when we deal with diffusive terms in the Navier-Stokes equation, i.e. [katex]\nu\nabla^2\mathbf{u}[/katex], our discretisation looks slightly different. Let's look at its finite volume approximation for a 1D case as well:

\int_V \nu\frac{\partial^2 u}{\partial x^2}\,\mathrm{d}V = \int_S \nu\cdot \mathbf{n}\frac{\partial u}{\partial x}\,\mathrm{d}S \approx \nu \sum_{i=0}^{nFaces} \mathbf{n}_i \frac{\partial u}{\partial x}\bigg|_i A_i\tag{10}Here, we use the Gauss theorem to express a volume integral as a surface integral, losing one derivative. However, since we have a second-order derivative, instead of losing the entire derivative, we only reduce it to a first-order derivative. If this is unclear, we could have also written the expression as:

\int_V \nu\frac{\partial^2 u}{\partial x^2}\,\mathrm{d}V = \int_V \nu\frac{\partial}{\partial x}\frac{\partial u}{\partial x}\,\mathrm{d}V\tag{11}Now, using the Gauss theorem, we loose the first part of the derivative, i.e. [katex]\partial/\partial x[/katex]. Thus, when we approximate the diffusive term, we have to interpolate gradients now to the face, instead of just the scalar or vector quantities as we did for the convective term before. Let's return to the two cells we saw before, here reproduced for simplicity (we all hate scrolling, don't we?):

At the cell centroids [katex]C_0[/katex] and [katex]C_1[/katex], we now need to compute the gradients, i.e. [katex]\partial u/\partial x[/katex], or, more generally, [katex]\nabla \mathbf{u}[/katex], and we need to now interpolate these gradients to the face. Thus, we can use our weighted interpolation we saw before as:

\nabla\mathbf{u}_f = w_1 \nabla\mathbf{u}_{C_0} + w_2 \nabla\mathbf{u}_{C_1}\tag{12}Fluxes at faces are evaluated along the normal direction of that face, i.e. along the red arrow in the figure above. But our gradient is now available along the blue arrow, and both the blue and red arrows, or vectors, are not parallel. Thus, if we were to use this gradient now, we would be making an error in our viscous flux calculation. Therefore, we split our gradient into an orthogonal-like and a non-orthogonal-like contribution:

\nabla\mathbf{u}_f = \underbrace{\nabla\mathbf{u}_f \cos\theta_{\mathbf{n},r_{C0C1}}}_\text{orthogonal-like} + \underbrace{\nabla\mathbf{u}_f \cos (1-\theta_{\mathbf{n},r_{C0C1}})}_\text{non-orthogonal-like}\tag{13}Here, the angle [katex]\theta_{\mathbf{n},r_{C0C1}}[/katex] is the angle between the normal vector at the face (red arrow) and the vector connecting the two centroids [katex]C_0[/katex] and [katex]C_1[/katex] (blue arrow). For orthogonal grids, without any non-orthogonality, we have [katex]\theta_{\mathbf{n},r_{C0C1}}=0[/katex].

Let us now evaluate the gradient directly, i.e. we write it in a discretised form. Then we get:

\nabla\mathbf{u}_f = \underbrace{\frac{u_{C_1} - u_{C_0}}{r_{C0C1}} \cos\theta_{\mathbf{n},r_{C0C1}}}_\text{orthogonal-like} + \underbrace{\frac{u_{C_1} - u_{C_0}}{r_{C0C1}} \cos (1-\theta_{\mathbf{n},r_{C0C1}})}_\text{non-orthogonal-like}\tag{14}Here, [katex]r_{C0C1}[/katex] is again the vector connecting the two centroids. So, with this equation, we have found a way to correct our gradient so that it points now in the direction of the normal vector, so that our viscous force calculation is corrected (by the non-orthogonal correction step). Our inviscid fluxes (i.e. our convective term) are unaffected by this, and we still have inaccuracies (reduction in the order of the numerical scheme) due to non-orthogonality.

So, just because we apply non-orthogonal correction, it doesn't mean we get rid of the underlying problem. A high-quality mesh, with as little non-orthogonality as possible is still what we would desire. There is no free lunch, I suppose ...

Skewness

The second quality metric I want to look at is the skewness. Before we do that, I have to warn you that there are as many different skewness definitions as there are Kim Jong-Un body doubles (yes, why not, I am using the sun as a source here, legit journalism).

The skewness definition I will introduce here is the equiangle skewness. This is the easiest to understand in my view, and you will find it commonly being used in the wild. The good news is that all the different kinds of skewness definitions measure the same thing, but they are just differently defined. So, even if your software uses a different skewness definition, the discussion in this section will still be relevant (but have a look at how it is defined).

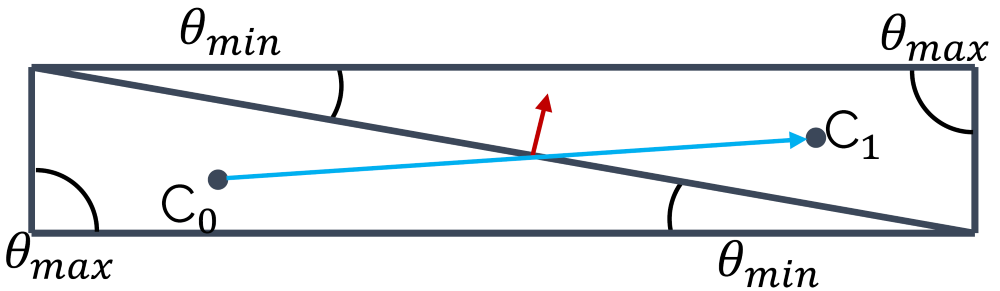

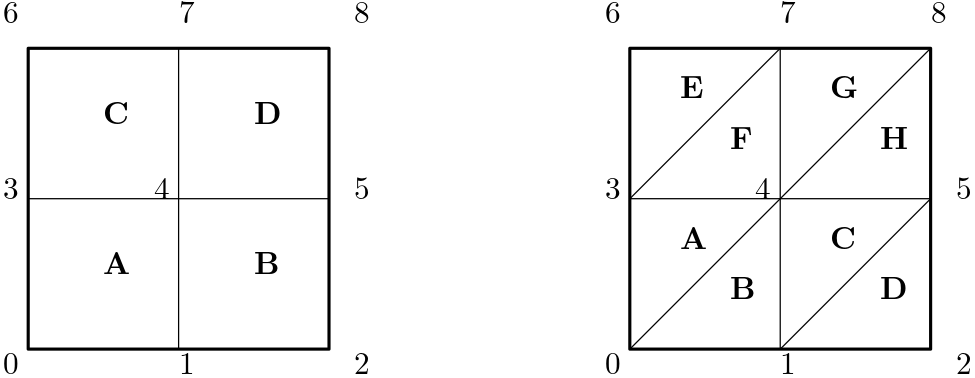

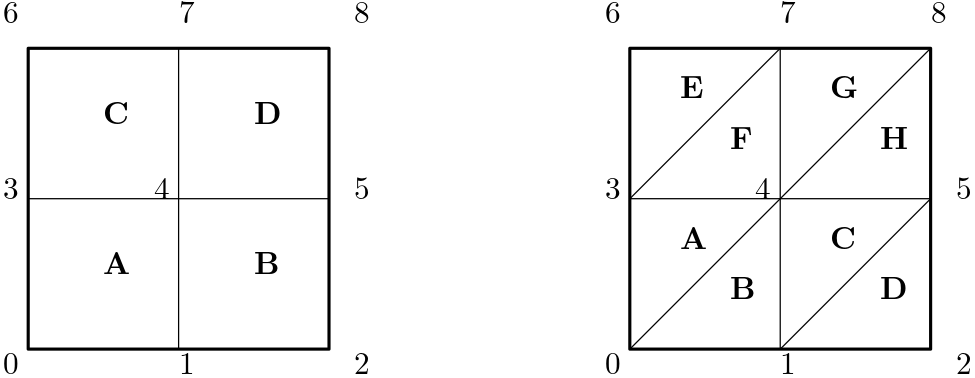

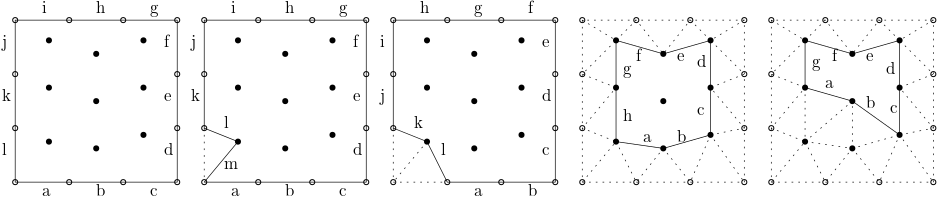

The equiangle skewness states that elements that have the same angle in all corners have no skewness. A triangle in which all corners have an angle of [katex]60^\circ[/katex], for example, will have no skewness. A quad element where all angles have a value of [katex]90^\circ[/katex], will also not have any skewness. Thus, equiangle skewness is a measure by how much the largest and smallest angles will deviate from this ideal angle. This is shown in the following figure:

Here we have two triangles where the maximum angle [katex]\theta_{max}[/katex] is [katex]90^\circ[/katex], which is [katex]30^\circ[/katex] more than its ideal angle (the equiangle). The smallest angle [katex]\theta_{min}[/katex] is also well below [katex]60^\circ[/katex] for both triangles. In order to compute the equiangle skewness, we need to determine [katex]\theta_{min}[/katex] and [katex]\theta_{max}[/katex], as well as the equiangle [katex]\theta_e[/katex], i.e. we have [katex]\theta_e=60^\circ[/katex] for a triangle and [katex]\theta_e=90^\circ[/katex] for a quad element. Then, we can compute the equiangle skewness using the following formula:

Q_{skewness}=\max\left(\frac{\theta_{max}-\theta_e}{180^\circ - \theta_e}\, , \,\frac{\theta_e - \theta_{min}}{\theta_e}\right)\tag{15}We can see from this formula that if we have [katex]\theta_e=\theta_{max}=\theta_{min}[/katex], then [katex]Q_{skewness}=0[/katex]. Thus, unlike the orthogonality quality metric, our mesh will have the lowest skewness for a value of 0. As we approach a value of 1, we will start to see skewness-related problems. This is the point where you should check how your skewness is defined in your solver. It might be the same way, it might be the opposit way around.

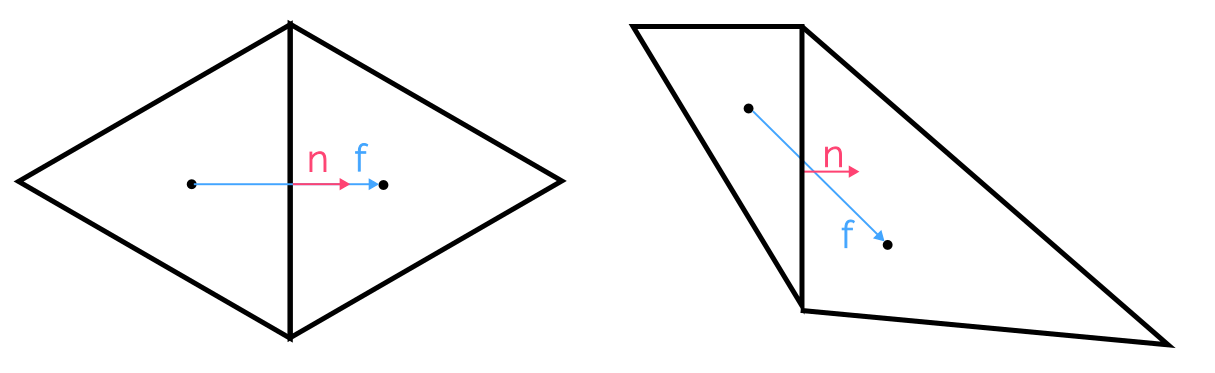

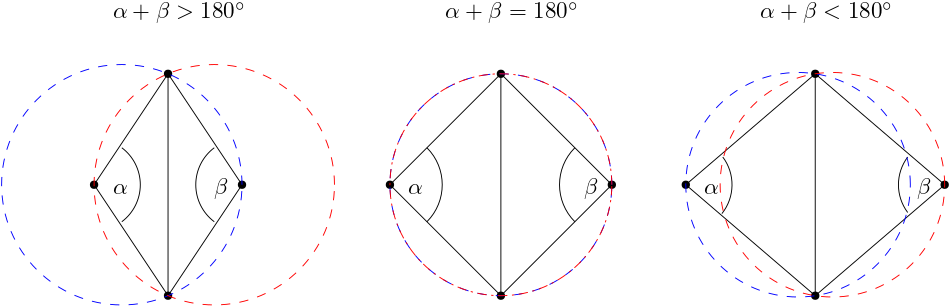

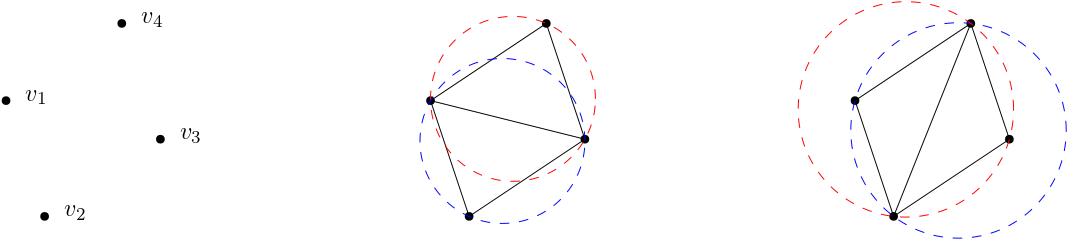

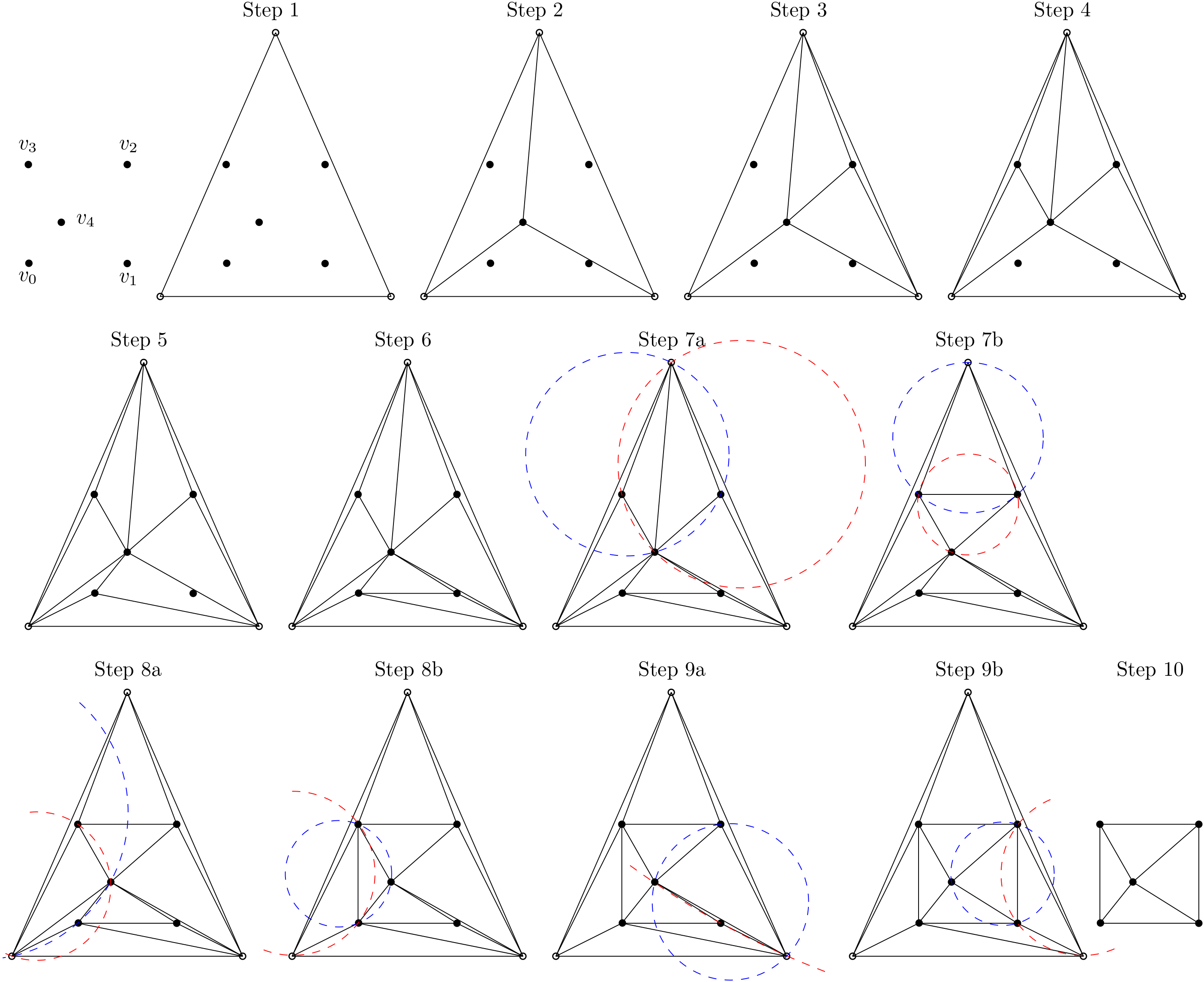

Skewness and mesh non-orthogonality are very closely linked. In general terms, if you have a low orthogonal quality, your skewness will not be much better. Take a look at the following figure:

Here, the cell arrangement on the left shows two triangles where each corner has an angle of [katex]\theta=60^\circ[/katex], i.e. both are equilateral triangles and the skewness will be zero as a result, i.e. we have [katex]Q_{skewness}=0[/katex]. The cell arrangement on the right, however, shows two triangles which have different angles in each corner, and, as a result, we get [katex]Q_{skewness} \gt 0[/katex].

At the same time, we can see what the skewness does to our vector [katex]\mathbf{f}[/katex], which connects the two cell centroids, and the normal vector of the face [katex]\mathbf{n}[/katex]. As the skewness increases, we see that these two vectors become more and more misaligned with each other. This will then result in non-orthogonality. Why is this important?

Generally speaking, we can look at either the skewness or the orthogonality and try to improve one of these metrics. If we do, we can expect to proportionally improve the other quality metric as well (I can't think of an example in which this would not be the case; if you can think of an example, send me an email at ElonMuskOffice@TeslaMotors.com and I'll happily engage with you in an in-depth discussion on mesh quality metrics!).

The equiangle skewness is defined for cell properties, i.e. we need to know what the angles are within each element which is easy to conceive for 2D elements, but it may already get more difficult for 3D elements, such as a prism element, where we have both triangles and quad elements (in which case, we may want to use a different skewness definition). If you try to define an equiangle for a polyhedron, you'll soon realise that the equiangle skewness definition only works for simple shapes.

A common workaround is to look at just the angle and use that as a quality measurement. If angles get close to [katex]0^\circ[/katex] or [katex]180^\circ[/katex], then we are in trouble. It is common to say that we do not want to go below [katex]30^\circ[/katex] or above [katex]150^\circ[/katex] if we are looking at just the angle.

Alternatively, we can ignore the skewness and just look at the orthogonality. As we have established, both quality metrics are closely linked, but the orthogonality metric is entirly defined by the properties of the face and centroid properties; the shape or type of the cell is irrelevant. Thus, the orthogonality quality metric can always be defined, even for arbitrary polygons (2D) or polyhedra (3D), and as a result, you will find it used in most solvers as the default quality metric.

As an example, Fluent meshing uses skewness to measure the surface mesh quality (which is always generated with triangles, thus it can be easily computed). But once you generate a volume mesh, the quality metric changes to orthogonality, as we are now dealing with tetrahedra, hexahedra, and polyhedra, i.e. arbitrary mesh elements.

Since skewness and orthogonality are closely linked, all of the issues we get with non-orthogonality are also true for skewness, i.e. our numerical schemes will see a reduction in their order, and we need more cells to get to a similar level of accuracy compared to a grid without any skewness or non-orthogonality.

However, there is a different issue that arises specifically for skewed cells. For this, we need to investigate a somewhat academic example, but it will help us to understand what skewness is doing. For this, we first need to understand what a passive scalar is. A passive scalar is just that, a scalar field which is passive, i.e. it moves together with the bulk flow but it does not influence it (otherwise it would be an active scalar).

A passive scalar field could represent anything, but typically, we think of passive scalars as some form of concentration field. For example, if you run a water channel and you introduce some dye, the dye would follow the main flow, but it would not change it. If you run a wind tunnel test and you introduce particles for PIV measurements, these would be passive scalars as well. Or you use smoke to visualise pathlines, the smoke would also be a passive scalar, as seen in the following video:

Clouds could also be seen as passive scalars, well, they certainly have their own dynamics, but if there is a strong current of air, the clouds will move with the air and deform according to local shear stresses. I think (or hope?!) you get the idea ...

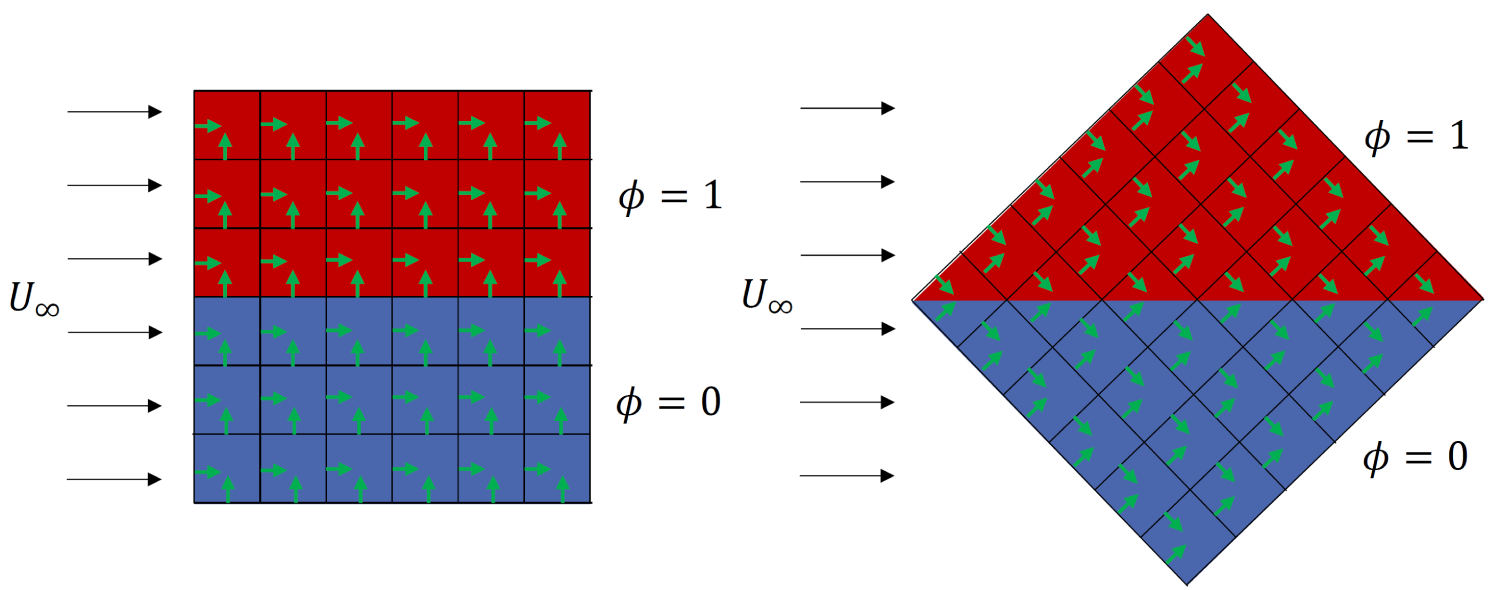

With our new knowledge on passive scalars, let's put that into action. Imagine we want to simulate the flow through a square domain, where we have a constant inflow velocity on the left of the domain. The flow is going through the domain and leaving it on the right side of the square domain. We solve for the passive scalar everywhere in the domain, and we say that we have a concentration of 1 in the upper half of the domain, and a concentration of 0 in the lower half of the domain.

This is shown in the following figure on the left:

Here, the red region indicates the concentration of the passive scalar of 1 (i.e. 100% concentration, think of this area being filled with smoke) while the blue region indicates no passive scalar concentration (i.e. no smoke can be found here). And now, I rotate the domain by [katex]45^\circ[/katex], though my boundary and initial conditions remain the same, i.e. the flow is still coming from the left, going to the right, and the passive scalar is still initialised the same way, with a horizontal separation between the two phases.

At this point, we need to talk about the mathematics of the passive scalar. The transport equation of a passive scalar is just a generic transport equation, which we have already (ab)used a lot when we looked at creating transport equations for turbulence RANS and LES models. It is given by:

\frac{\partial \phi}{\partial t} + (\mathbf{u}\cdot\nabla)\phi = \Gamma \nabla^2 \phi + S

\tag{16}

Here, the first term is responsible for evolving the passive scalar [katex]\phi[/katex] in time (think of looking at a fixed point in space and see how the passive scalar is changing here), the second term is responsible for its transport (think of it as a particle moving with the mean flow [katex]\mathbf{u}[/katex]), the third term is how it diffuses in space, and the forth term is a source term.

In our case, let's simplify things and set [katex]S=0[/katex], i.e we don't consider any sources. Let's also set [katex]\Gamma=0[/katex], i.e. we don't want to have any diffusion. If we suppress any diffusion, we would expect the passive scalar to simply move from the left to the right, and we would not expect to see any changes at the interface, i.e. where the passive scalar jumps from 0 to 1.

In the following, I have simulated this on three separated domains. The first simulation, shown on the left in the video below, is the case where the domain is not rotated. The diffusion coefficient [katex]\Gamma[/katex] is set to zero. The second simulation, shown in the centre, is the same as the first, except that the diffusion coefficient is set to something greater than zero, i.e. we have [katex]\Gamma \gt 0[/katex].

Finally, the third simulation, shown on the right, shows the simulation with [katex]\Gamma=0[/katex], i.e. no diffusion, but with a [katex]45^\circ[/katex] rotated domain. Also, I have placed a vertical line through the centre of each simulation, and this is shown at the bottom, where the y-axis shows the concentration field and the x-axis the distance along the vertical line.

When the domain is rotated (shown on the right), even though the diffusion is turned off by setting [katex]\Gamma=0[/katex], it shows the same behaviour as the domain that is not rotated but where the diffusion coefficient is set to a non-zero value (shown in the middle), i.e where we have [katex]\Gamma \gt 0[/katex]. What's going on here?

To understand what is happening, I need to give you one additional piece of information, but first, let's look back at the figure above, where I introduced the cases. I have, conveniently, left the face normal vectors in the domain. In the case where the domain was not rotated, all normal vectors were either perfectly aligned with the flow or perfectly normal to it. In the case of the rotated domain, all normal vectors had a [katex]\pm 45^\circ[/katex] angle to the flow direction.

The final piece of information I need to give yoiu is how the variables were interpolated to the faces. I used an upwind-based scheme here. But you may ask yourself, why does this matter?

In any upwind-based scheme, we go to a face and check the direction of the flow. Based on this direction, we determine which is the upwind direction, i.e. the direction against the flow, and then we select properties from the cell that is in the upwind direction, for stability. We looked at upwind and stability of interpolation in my article on numerical schemes, which you may want to go through if you need a refresher.

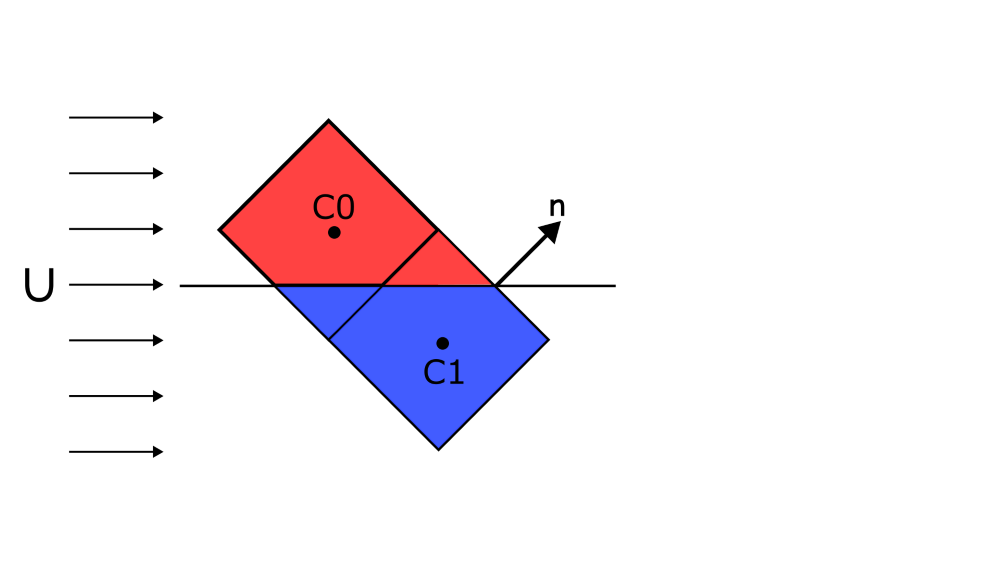

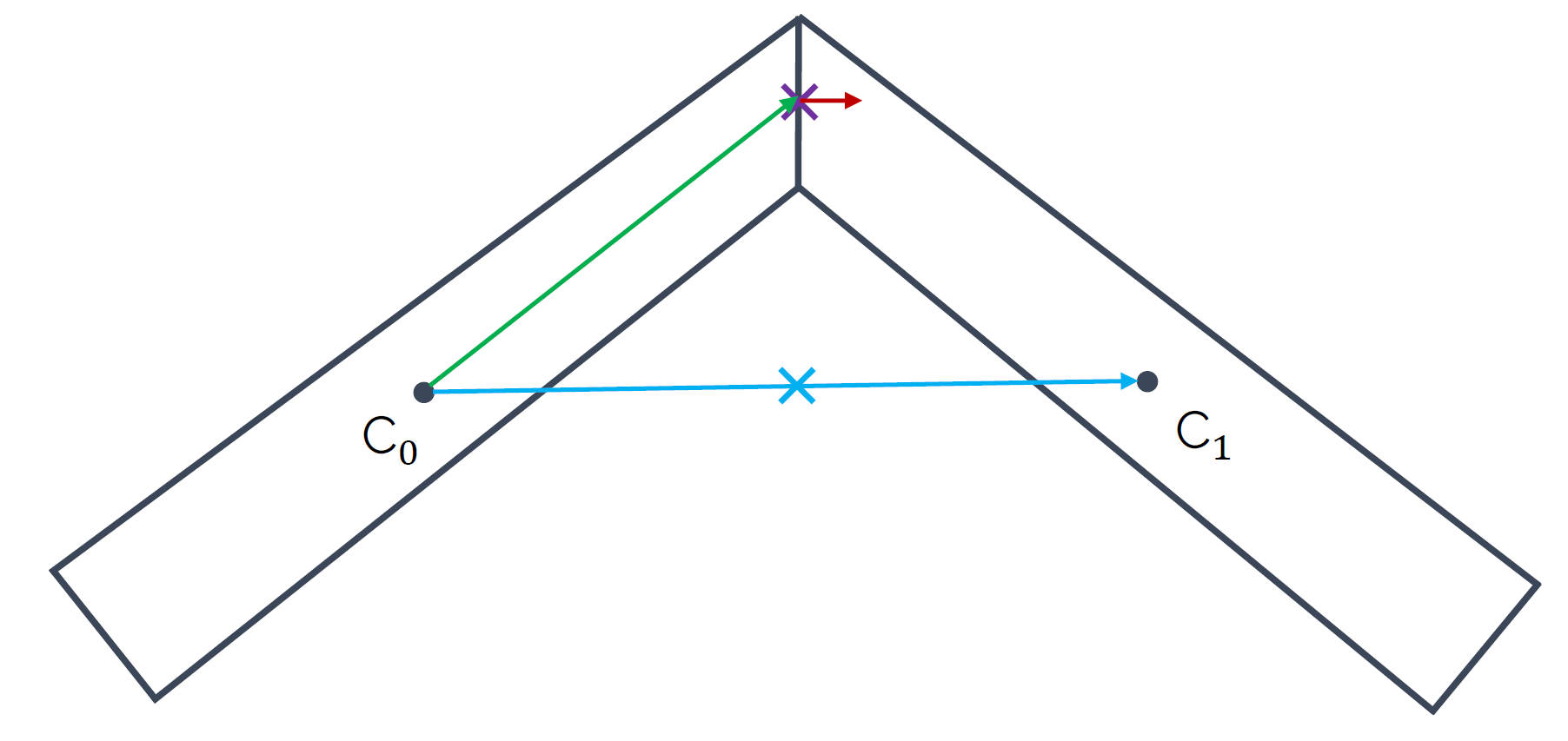

If we are now on a face which has a [katex]45^\circ[/katex] orientation to the main flow, the upwind direction is somewhat arbitrary. Take a look at the following image:

For the normal vector [katex]\mathbf{n}[/katex] as shown in the figure, what is the upwind cell? [katex]C0[/katex] or [katex]C1[/katex]? If we changed the flow direction so that it would have a slight flow component in the positive vertical direction, then the upwind cell would be clearly [katex]C1[/katex], and if we gave the main flow a slight negative vertical component, then the upwind cell would be clearly cell [katex]C0[/katex]. But in this case, and in the simulation we have looked at above, there is no clear upwind cell, and both are equally likely.

Which one does the simulation take? Well, this is a random event over which we have no influence. Sometimes it will be [katex]C0[/katex], and sometimes it will be [katex]C1[/katex]. The problem with this is, as shown in the figure above, at least schematically shown, if [katex]C0[/katex] has a concentration field of [katex]\phi=1[/katex] and [katex]C1[/katex] has a concentration field of [katex]\phi=0[/katex], then this random selection of the upwind direction means that we sometimes get [katex]\phi=1[/katex] and sometimes [katex]\phi=0[/katex] when we use an upwind scheme to get the value ofg [katex]\phi[/katex] at cell faces that are near the interface.

This results in a phenomenon that is called false diffusion, for obvious reasons: If we look at the simulation above again, we can see that despite having turned off the physical diffusion, i.e. by setting [katex]\Gamma=0[/katex], we get numerical (false) diffusion in the case where we have rotated our domain. How does this relate to skewness?

Well, to make one thing clear, the simulation I have performed above has no skewness and no non-orthogonality. But, skewness, in general, has the effect of having misaligned normal face vectors with the mean flow direction, especially if you are considering a triangular, unstructured mesh. So the simulation mimics for us what would happen if we had misalignment due to skewness, even though we do not have any skewness in this case.

Thus, the effect of skewness is non-physical numerical (false) diffusion. The more skewness we have, the more false diffusion we get, resulting in gradients that are smeared/smoothed, which reduces the overall accuracy of our simulation.

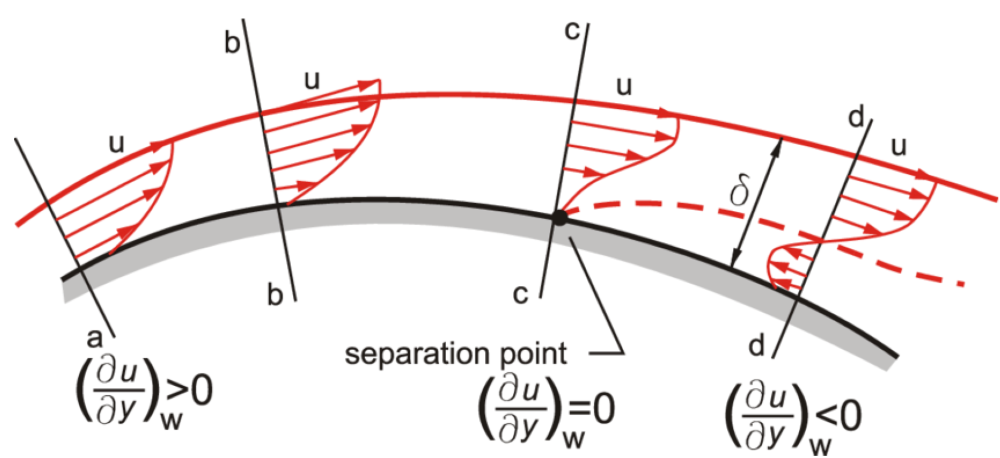

Apart from the obvious fact that we probably want to have a highly accurate simulation, let's look at the real danger of skewness. For that, let us look at the flow past a curved surface, where an initially attached boundary layer detaches at a specific point. This is schematically shown in the following image:

Going from the left to the right, we see that we have a positive velocity gradient, with all the velocity components in the velocity profile pointing from the left to the right, i.e. in flow direction. As the boundary layer develops along the curved surface, it entrains more and more fluid from the undisturbed freestream and grows in size. Due to viscous effects, the growing boundary layer is slowing down, which results in the near-wall pressure rising.

Once the near-wall pressure reaches the same magnitude as the pressure in the freestream, the boundary layer is no longer able to follow the curved surface and detaches from it. This is shown at location c. As we move further downstream, for example, to location d, we can see that the detached boundary layer has developed a recirculation area underneath it, resulting in a negative velocity gradient in the wall-normal direction (now the flow points from the right to the left, against the main flow direction).

We often use the velocity gradient in the wall-normal direction to judge when a flow has separated. We can see from the figure above that this is the case when the velocity gradient is exactly zero in the wall-normal direction and changes its sign, in this case, from positive to negative. But flow separation is not the only time we care about the velocity gradient. Let's take a look at the wall shear stresses, which are defined as:

\tau_w = \mu\frac{\partial u}{\partial n}\bigg|_{wall}\tag{17}Here, I have used the notation [katex]\partial u/\partial n[/katex] to indicate that we are looking at the velocity gradient in the wall-normal direction, which I find clearer than the generic [katex]y[/katex] variable, which would only hold for flat plates. In any case, the wall shear stresses include the velocity gradient as well. We use it in many applications, typically when we want to get an idea for viscous drag.

The skin friction coefficient, for example, is given as:

c_f = \frac{\tau_w}{\frac{1}{2}\rho u_{\infty}^2}\tag{18}The drag coefficient is composed of both the skin friction and pressure drag. For an airfoil, 80% or so of the total drag is due to the viscous profile (skin friction) drag. So, if you are interested in capturing flow separation accurately, or you want to have a semi-decent drag prediction, you want to make sure that you determine the velocity gradient at the wall (where the wall shear stresses are defined) with the highest accuracy.

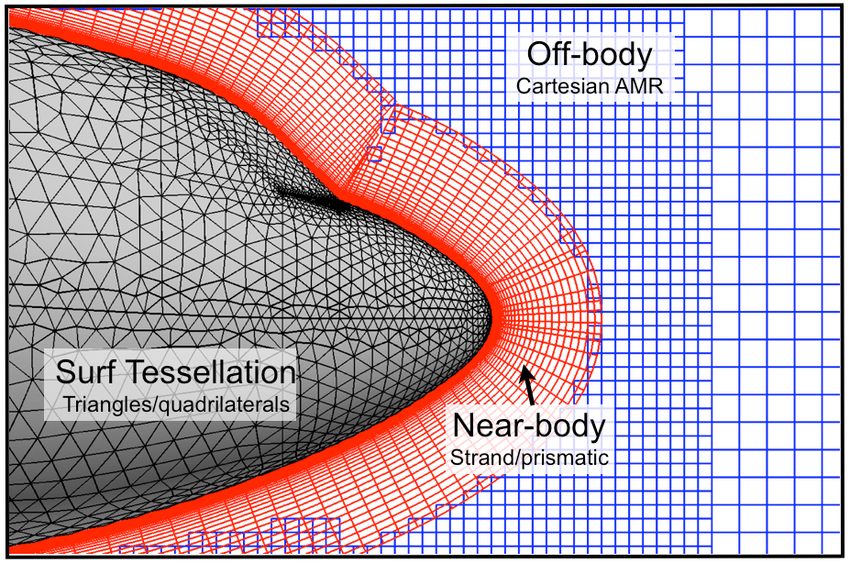

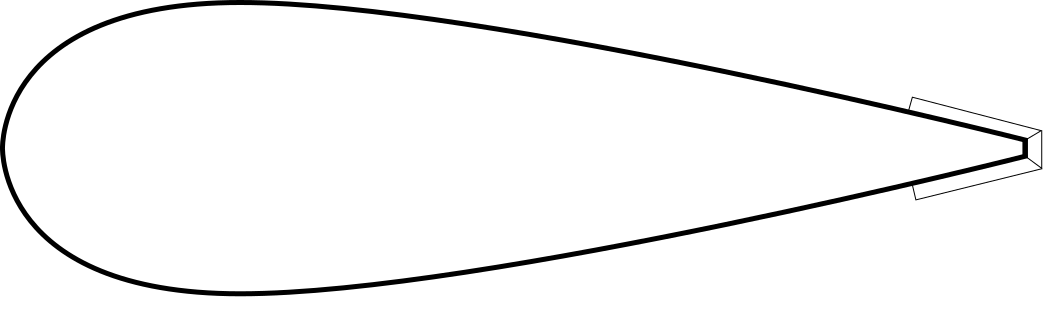

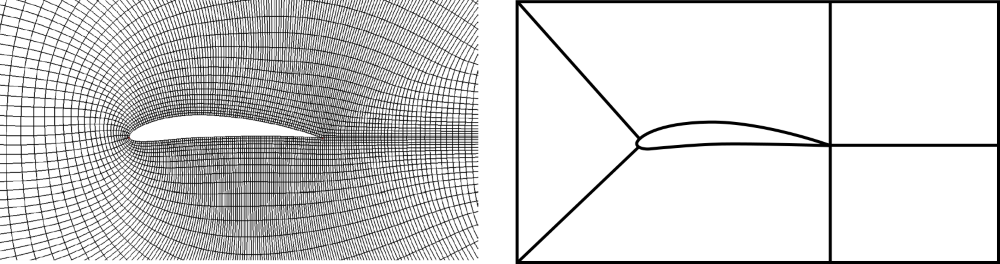

This means we want to have a good grid and high mesh quality metrics in this region. So let's look at two different grid arrangements we can have near the wall:

On the left side, we have some nicely stacked quad elements, growing in size away from the wall. Since the boundary layer will usually follow a solid wall (unless it is separating), the mean flow direction and the orientation of the surface normal vector are mostly aligned. If we contrast that with the triangular elements on the right, which have a similar spacing and growth away from the wall, we see misalignment between the mean flow and the surface normal vector, which becomes larger as we approach the wall.

This growing misalignment introduces false diffusion as we have seen above, which means that gradients in this region would be smoothed or dampened. However, we just said that we really want to have a really good prediction of our velocity gradient in this region to get an accurate drag prediction. Thus, we try to avoid triangles (2D) or tetrahedra (3D) near solid walls.

The solution is to use inflation layers. These offer a controlled way of wrapping a solid object in layers that ensure that most of the boundary layer flow is aligned with the surface normal vectors, resulting in low false diffusion and the best possible accuracy to predict the velocity gradient near walls. So if you ever wondered why you need inflation layers, this is the reason.

The question then becomes, how many layers do you need? Well, ideally, you want to capture the entire boundary layer. This is so that you capture the entire velocity profile. If you only had one inflation layer, you wouldn't capture the velocity profile well, and this would influence the computation of your velocity gradient near the wall. Conversely, if your entire velocity profile within the boundary layer is captured with inflation layers, you get a good velocity gradient and the best possible drag prediction.

As a rule of thumb, use 15-30 inflation layers if your target [katex]y^+[/katex] value is 1. If your target [katex]y^+[/katex] value is 30 or larger, start with 3-5 layers. These are experiential values, and they may differ for your specific case, but they give you a starting point to experiment with.

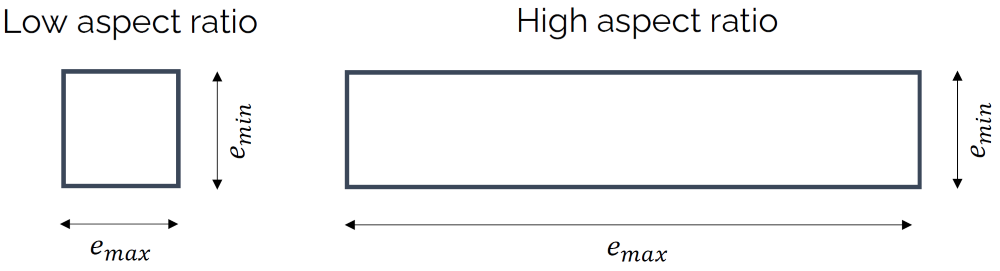

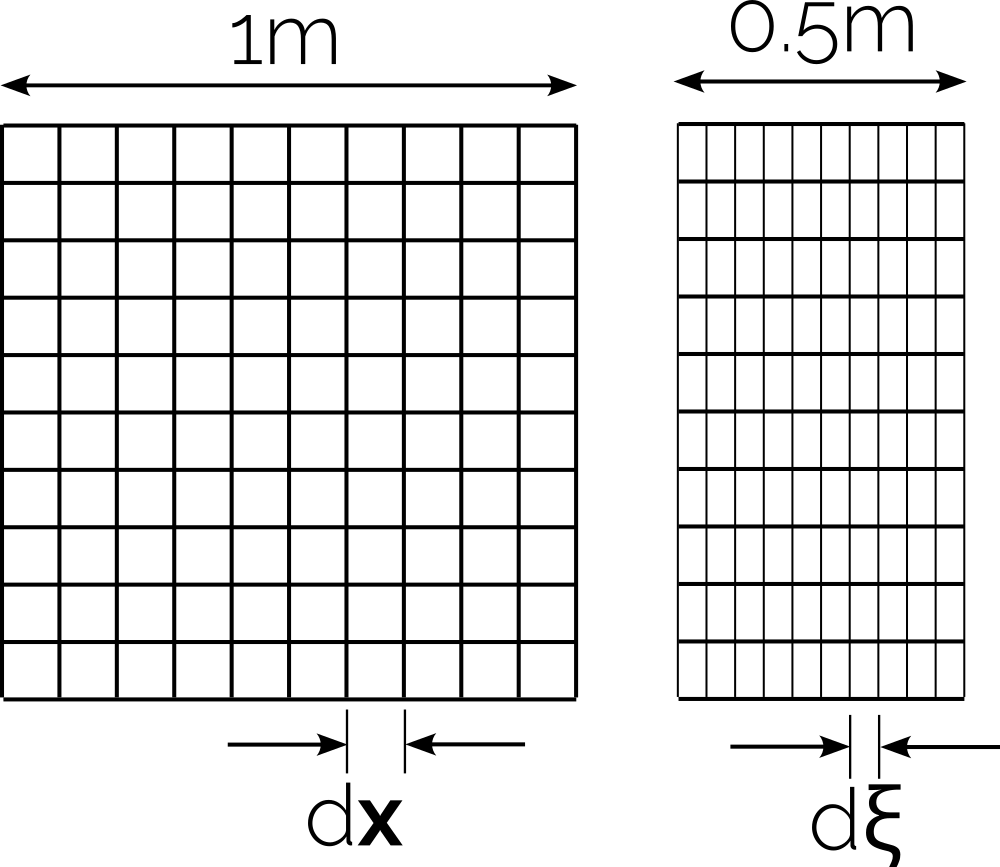

Aspect ratio

OK, we have now covered both the orthogonality and skewness quality metrics, which are arguably the most influential ones. In this section, I want to look at another important quality metric: the aspect ratio of a cell. It is somewhat detached from the skewness and orthogonality metric; that is, you can have a grid with no skewness and non-orthogonality, but still have a mesh containing high aspect ratio cells.

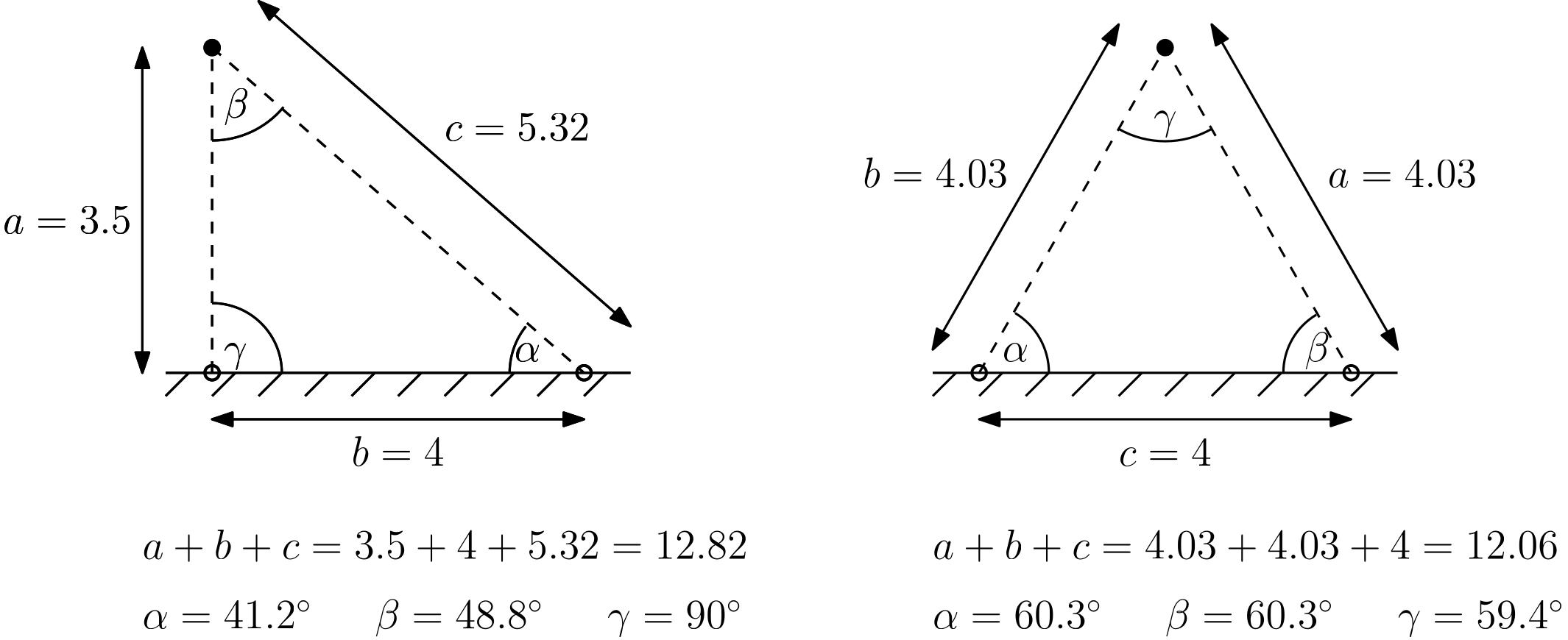

Let's look at the definition first. The general definition of an aspect ratio is the ratio of the largest to the smallest edge of a cell, as shown in the following figure:

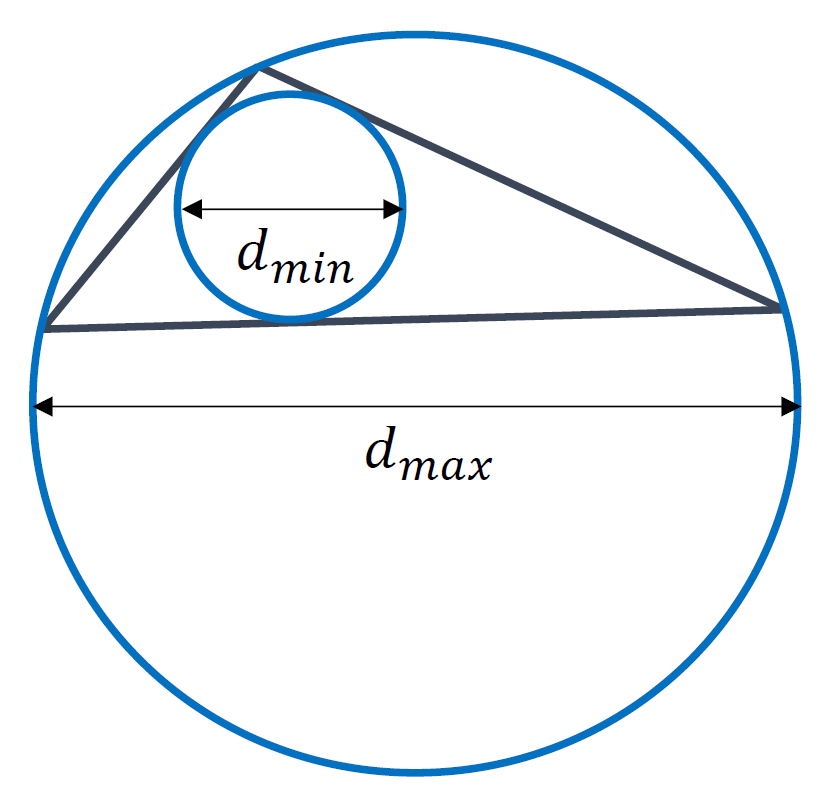

This works great for quad or hexa elements, but for triangles or tetrahedra we use a different definition. Here, we place two circles inside and outside the triangle. The inner circle will touch all edges of the triangle tangentially, while the outer circle will touch all vertices of the triangle. This is shown in the following figure:

Thus, we can define the aspect ratio as:

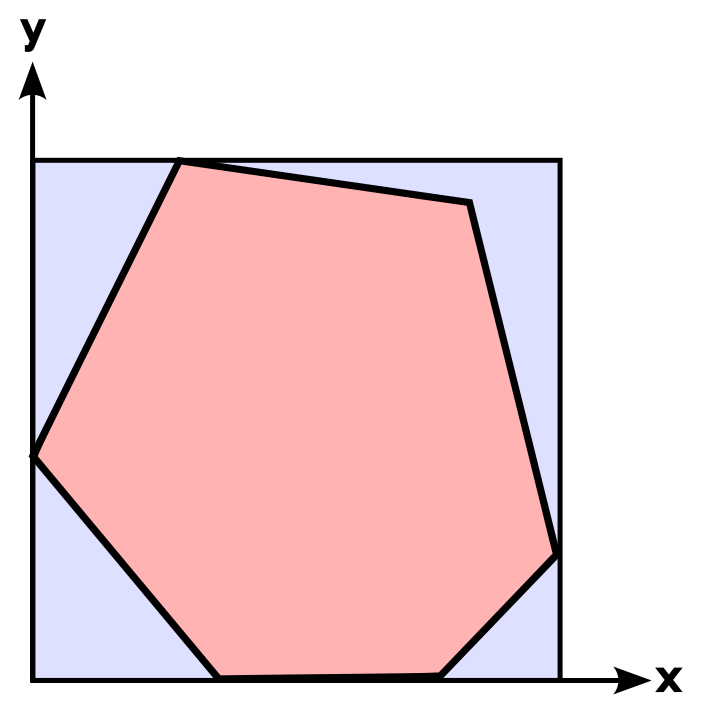

Q_{aspect-ratio} = \frac{e_{max}}{e_{min}} = \frac{d_{max}}{d_{min}}\tag{19}This still only holds for quads and triangles, but we can generalise it. Instead of placing a circle around a given cell, as we did for the triangle above, we place a square (in 2D) or a cube (in 3D) around a cell, which fully encloses it. This square/cube is aligned with the coordinate axes. If you want, you can think of the square/cube as the axis-aligned bounding box (AABB).

Next, we compute the area/volume of the square/cube and the area/volume of the cell, which will be, by definition, smaller than or equal to the area/volume of its bounding square/cube. This is shown in the following figure, where we compute the aspect ratio of a general polygon in 2D, which is fully enclosed by a square. All vertices of the polygon touch the edges of the square:

Then, instead of using the edge length or diameter to compute the aspect ratio, we use the ratio of the areas or volumes of these two objects, i.e. we divide the square's/cube's volume by the cell's area/volume. We can write this as:

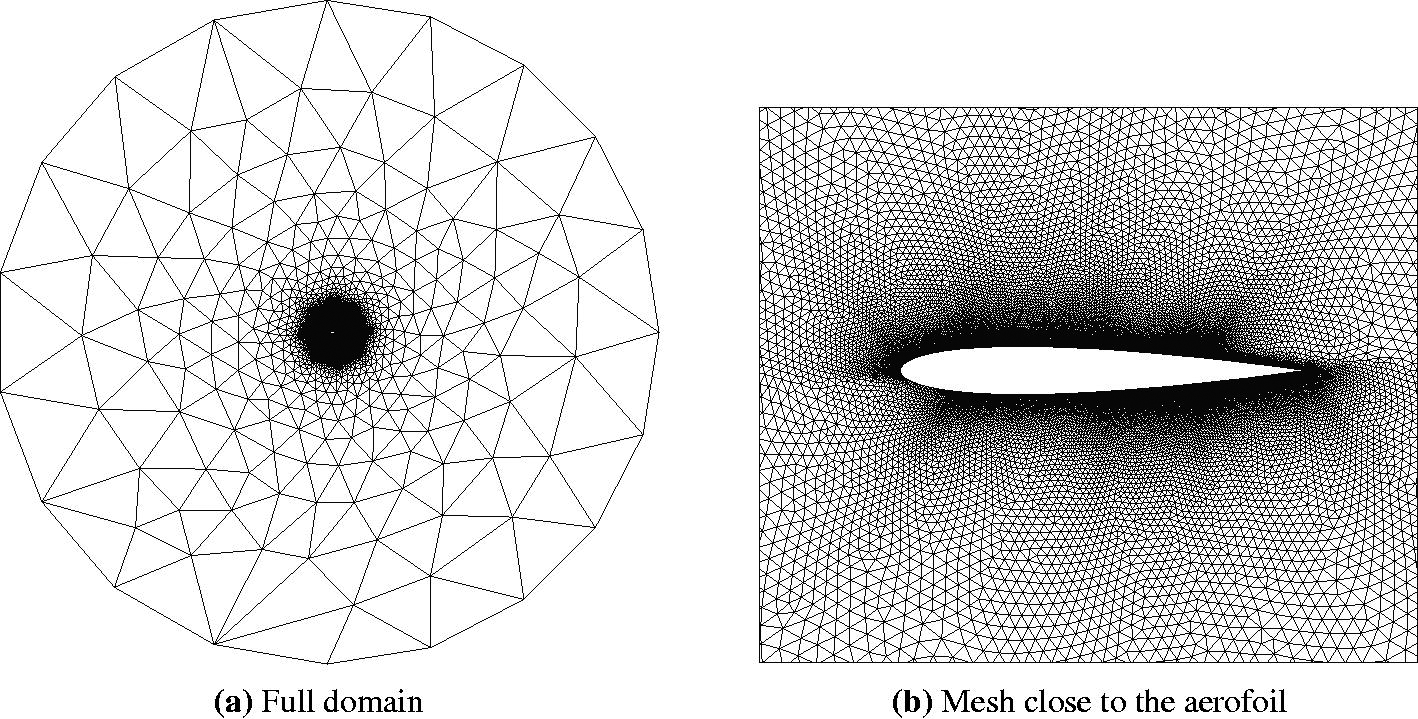

Q_{aspect-ratio}=\frac{A_{AABB}}{A_{cell}}=\frac{V_{AABB}}{V_{cell}}\tag{20}This works in 2D and 3D for any type of object. With this definition in mind, let's explore what some of the problems are with this definition. Imagine we are meshing an airfoil. We want to have [katex]y^+=1[/katex] everywhere, and we have a relatively large Reynolds number, say, above one million. This means that our first cell height will be orders of magnitude smaller than the chord length of the airfoil.

A common issue that we face, unless we take good care of our mesh, is that we get large aspect ratio cells at the trailing edge. If we don't set the spacing near the trailing edge equal to the first cell height, then we are going to get large aspect ratio cells near the trailing edge. This is schematically shown for the cells near the trailing edge of an airfoil:

Is this a problem? Well, let's increase the aspect ratio a bit and look at two cells in isolation, which meet at the trailing edge of the airfoil, as shown in the following figure:

A large aspect ratio may result in completely non-sensical values for the interpolated quantities at the cells' faces. We see that the blue arrow connecting both [katex]C_0[/katex] and [katex]C_1[/katex] intersects the face at a location which is not even on the face anymore. Clearly, whatever value we interpoalte to this location will not have much physical meaning.

The airfoil example is a good one, and, if you can generate a mesh and run that case on your machine, I would encourage you to do so. Most CFD solvers will struggle with this exact meshing issue (I have tried it in Fluent and OpenFOAM, and neither likes it). To avoid this, we could either:

- Reduce the spacing near the trailing edge to ensure we have an aspect ratio close to one (recommended)

- Connect both cells to another quad element that sits at the trailing edge where both cells connect. This avoids large deformation of cells at the trailing edge (and thus skewness) but also introduces a large area/volume ratio, which is the quality metric we will look at in the next section.

So, based on the airfoil example, we could say that aspect ratio alone is not a problem, only if we have skewness and non-orthogonality as well at play, do we get an issue, where interpolated values may not even be on the cells' faces anymore, right? Well, even for cases where there is no non-orthogonality and skewness do we get into trouble, potentially? Let's look at another example.

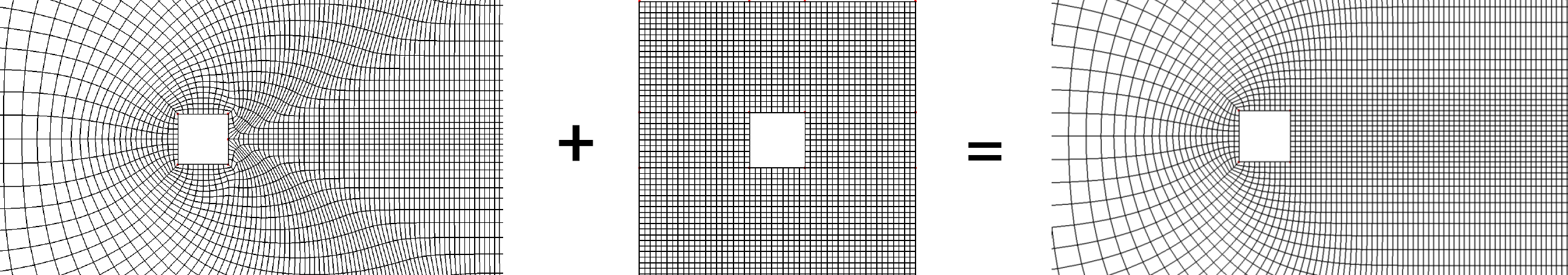

The following image depicts two grid arrangements. On the left, we have a Cartesian grid with no skewness, no non-orthogonality, and all cells have an aspect ratio of one. On the right, we see the same Cartesian grid, only now we have introduced some clustering, for example, to resolve the boundary layer near the bottom wall, which has led to an increase in the aspect ratio, while no additional skewness or non-orthogonality was introduced.

What I have also shown here is the cell sizes on a multigrid with three levels. If you have never heard of a multigrid before, think of it as a series of grids, which get coarser and coarser, on which we compute the solution. The advantage, in a nutshell, is that on the coarsest level, we typically only have a few hundred cells. Our simulation will converge pretty quickly on this grid, even if the full mesh has millions of cells.

After we have achieved convergence on the coarsest grid level (with a few hundred cells), we interpolate the solution onto the next finest grid level, perhaps with a few thousand cells. Since we already have an initial solution from the coarse grid, we don't have to iterate long to get a converged solution on this finer grid. This continues until we get down to the full grid.

If done correctly, a multigrid can be blisteringly fast and, for elliptic equations, nothing comes even close to how fast a multigrid operates. The pressure Poisson solver is an example of an elliptic equation, and for that reason, we like to use a multigrid for the pressure when dealing with incompressible flows, but with modifications, we can make the multigrid work for hyperbolic flows as well (e.g. compressible flows), but it is not as straight forward and requires some tricks to get working properly.

Back to the figure above, the cells shown in red, blue, and green indicate the size of our cells we would have on levels 0, 1, and 2 of our multigrid. That is, as we go from one level to the next, we combine cells together and their size increases.

One of the advantages of a multigrid is that by increasing the cell size, information can travel faster from one side of the domain to the other, and that is where it mostly derives its speed from. However, when we introduce high-aspect ratio cells, like we do in boundary layer flows, then even if we combine cells to make them larger for our multigrid, the cells on higher levels of the multigrid will still be rather small, at least if we compare the cells to those on a grid without hight aspect ratio cells.

If we look at the figure above again, I have given the same red, blue, and green cells on the right grid as well, where we have higher aspect ratio cells. We can see that the cells themselves are not as tall as the ones on the left grid, i.e. where we don't have any aspect ratio. Thus, high aspect ratio cells will decrease the efficiency of a multigrid solver, and thus increase the time it takes to get to a solution (computational cost is increased).

This isn't as much of an issue if we were to use something like a conjugate gradient method for the pressure, though in my experience, high aspect ratio or not, given the elliptic/parabolic and hyperbolic nature of the incompressible and compressible Navier-Stokes equations, respectively, there is very little in terms of performance gains between the conjugate gradient and multigrid approach.

I should also say that there will be opinionated CFD practitioners who will be deeply offended by that statement. For some, multigrid is a must, no matter what, and depending on your application, I can agree with that view. From a general view, though, whether the conjugate gradient or multigrid method is better suited for you will depend on your application.

Be that as it may, if we have the chance, we should try to avoid high aspect ratio cells. From experience, my observation is that once the aspect ratios get too large, your simulation will not converge anymore. Increase that aspect ratio a bit more, and your simulation will diverge. Some solvers are more resilient than others. For example, in Fluent, you may get away with aspect ratios that are of the order of one million, while OpenFOAM is known to struggle for anything that goes beyond an aspect ratio of 10,000.

As the saying goes (which I have just invented): Trashy mesh, no success. I rest my case.

Area/Volume ratio

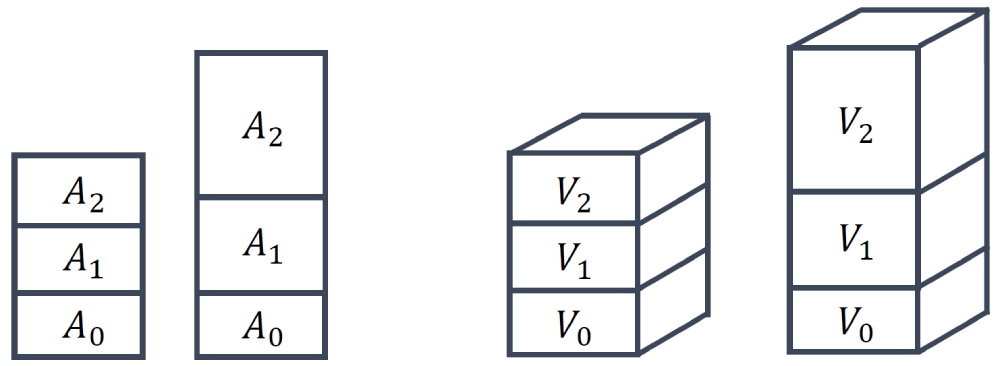

The final mesh quality metric we want to look at is the area ratio (2D) or volume ratio (3D). The quality metric is rather straightforward and it quantifies how the area or volume changes between two subsequent cells, as shown in the following figure:

The area or volume ratio can then be computed as:

Q_{area-ratio} = \frac{A_1}{A_0}\\[1em]

Q_{volume-ratio} = \frac{V_1}{V_0}\tag{21}By definition, we choose [katex]A_1[/katex] or [katex]V_1[/katex] to always be the largest of the two areas/volumes, and [katex]A_0[/katex] or [katex]V_0[/katex] to be the smallest of the areas/volumes. Thus, the area or volume ratio can never be smaller than 1 by definition, and it can only be larger than 1 if the areas or volumes differ.

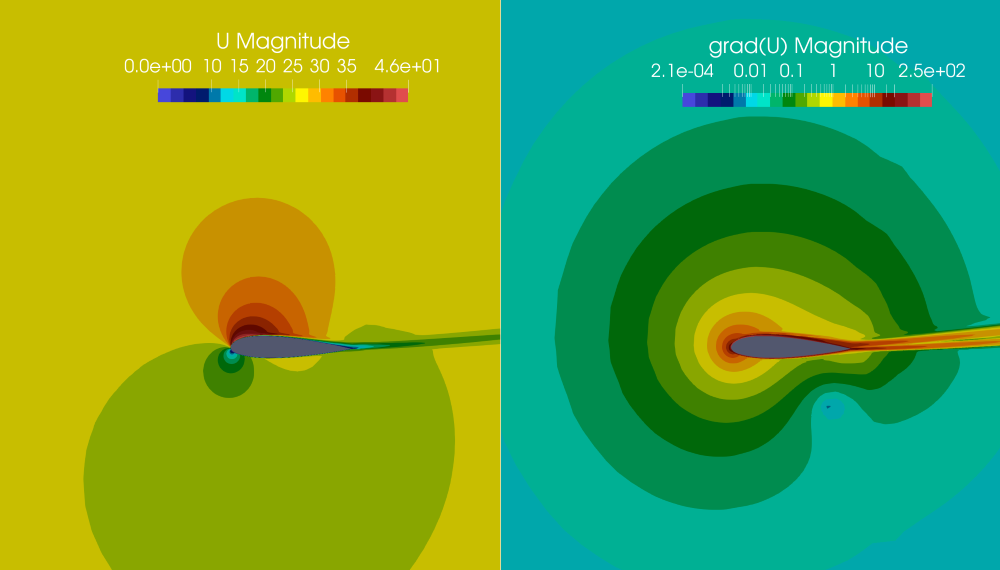

This quality metric is much more straightforward to interpret compared to the other metrics. Take a look at the following airfoil simulation, for example, where we see the velocity magnitude on the left, and the magnitude of the velocity gradient tensor:

Here, I have used a log-scale for the contours on the right to show their variation a bit better. If I asked you to describe the variation of the contours, would you say these vary smoothly or abruptly? We can probably all agree that we have a very smooth variation. And this is something very typical for CFD applications. Even if we have shock waves or interfaces with a sharp discontinuity, these discontinuous changes are limited to small regions. Most of the domain will have smoothly changing variables in space.

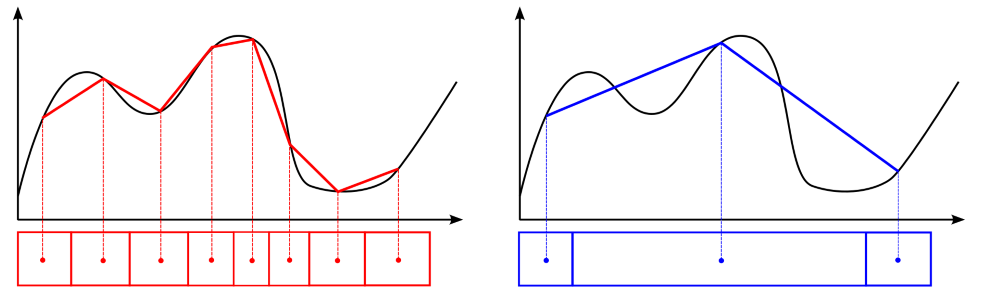

Let's make this even simpler, let's just look at an xy plot, where we try to numerically approximate a given function (here shown by the solid black line):

I have drawn two different grids here, one with a low area ratio (shown on the left, in red, below the plot) and one with a large area ratio (shown on the right, in blue, below the plot). If we assume we store values at cell centroids, as indicated by the dots, then we can find which values we would be storing in them, assuming we would be able to perfectly approximate the underlying function (shown in black). This is a strong assumption, but it won't change our discussion.

Because our variables in space change relatively smoothly, in general, we want to have cells without large jumps. As we can see on the right, once we introduce large area ratios, we jump over quite a bit of detail in our original function. If we look at the function we actually obtain by our numerical approximation, shown by the solid blue line on the right, it shows drastic deviations from the original function, shown in black.

However, by keeping the area ratio relatively small, as we can see on the left, the numerical approximation of the original function, shown by the solid red line, is much better compared to the blue line on the right. Thus, the main goal of keeping the area and volume ratio to a minimum is to enhance our predictive capabilities of gradients within our simulation.

And, just in case, to some it may appear obvious why we want to have a good gradient approximation (think, for example, back to our discussion on the skin friction and drag coefficient, which depend on the velocity gradient near the wall). However, there is an even stronger case for why we care about gradients so much. Have you seen this equation before?

\frac{\partial u}{\partial t} + u\frac{\partial u}{\partial x} = -\frac{1}{\rho}\frac{\partial p}{\partial x} + \nu\frac{\partial^2 u}{\partial x^2}\tag{22}Of course, you have, it is the Navier-Stokes momentum equation, here given in 1D. Each term is expressed as a derivative, and so if we want to find an accurate solution to this equation (which generally is what we try to do), we'd better make sure our gradients are approximated as accurately as possible.

See, I told you, this quality metric didin't take that much space to discuss, we have reached the end of the quality metric discussion. Next up, some best practices so you know if your mesh quality is "mediocre", "bad", "poor", "go home", or "perhaps CFD isn't right for you" (yes, this is the official quality scale, and yes, there is no such thing as a "good" mesh (unless you spend life on easy mode and only work with square Cartesian grids (but that's cheating! (please don't check my PhD thesis)))).

Mesh quality best practices

After we have gone through all of the definitions, we may know what all of these different quality metrics are, how they are defined, and what part of the simulation they influence. However, if we don't know what a sensible threshold is for each quality metric that we don't want to cross, then we may as well not bother at all with them.

So, in this section, I want to give you some of my best practices. These are the quality metric limits I typically try to achieve during meshing. These are not commandments set in stone but rather experiential values, as well as averaged limits gathered from various solver developers. Use these as a starting point, and adjust if your simulation is showing trouble converging.

The following tables show what the highest (ideal) value for our quality metric is. We typically only achieve these values on Cartesian grids without mesh refinement or other mesh deformation. For most practical applications, we want to ensure that we are within the acceptable quality metric column and avoid the poor quality metrics.

| Quality metric | Ideal quality metric | Acceptable quality metric | Poor quality metric (try again) |

|---|---|---|---|

| Orthogonality | 1 | 1 - 0.15 | [katex]\lt[/katex] 0.15 |

| Skewness | 0 | 0 - 0.85 | [katex]\gt[/katex] 0.85 |

| Aspect ratio | 1 | 1 - 10,000 | [katex]\gt[/katex] 10,000 |

| Area / volume ratio (boundary layers) | 1 | 1 - 2 | [katex]\gt[/katex] 2 |

| Area/volume ratio (boundary layers) | 1 | 1 - 4 | [katex]\gt[/katex] 4 |

If you are dealing with structured grids and you have a high Reynolds number, you might struggle getting below an aspect ratio of 10,000, especially if you have solid walls and want to have a low [katex]y^+[/katex] value. The trick is to have small aspect ratios in areas of strong gradients and put the high aspect ratio cells into areas of low gradients. This typically works quite well.

For the area and volume ratio, I have given two different definitions. Typically, within the boundary layer, we would like to achieve a growth rate of inflation layers (another way of expressing the area/volume ratio) that is not above 2. For academic applications, you will often find a value of 1.2, though for more complex cases, a value somewhere between 1.5 and 2 is a good compromise between accuracy and computational time.

For any other part of the mesh, e.g. the surface mesh and the far field, our area/volume ratio may be larger, and typically a value of 4 is acceptable. You may say this is a rather large value, and it is, but you will also find that if you create a mesh manually and you have to adjust spacings on complex geometries to get an acceptable area/volume ratio, a value of 4 can be difficult to achieve, or at least, it will require a lot of manual fine-tuning.

If you use an automatic mesh generation software, e.g. Fluent, StarCCM, OpenFOAM, ConvergeCFD, Numeca, etc., these will all try to create a mesh with acceptable area/volume mesh properties, so you don't really have to worry about this metric here. But if you create your mesh manual using Pointwise, ANSA, or Grid Pro (to name but a few), you will need to take care of that.

As mentioned before as well, check which way around your skewness is defined; it may be the opposite of how I defined it above. Because all other element metrics have an ideal value of 1, sometimes people like to say that a skewness of 1 is best and a value of 0 is worst, in which case the definition is flipped.

And, finally, if you ever face issues with mesh generation, there are only two things you can do to fix it:

- Spend more time on your mesh and optimise your mesh spacing and distribution. Typically requires some manual input on the mesh. If you don't have that, you can still resort to the second option

- Use more cells

In pretty much all cases where you have poor quality metrics, if you know where these are (in space), you can almost always get rid of these poor quality cells by just throwing more cells at the problem. You trade computational cost for grid quality. Remember the figure we looked at in the very beginning?